Power is the first constraint of every space mission. Not mass, not communication bandwidth. Without a reliable supply of power, a satellite’s instruments go dark, and a rover’s batteries freeze solid in a lunar night that lasts fourteen Earth days. Without megawatts of reliable supply, the in-situ resource utilization (ISRU) plants that turn lunar regolith into rocket propellant cannot run. Space is an energy problem before it is anything else.

For sixty years, that problem was solved in isolation: every spacecraft carried its own power system, operated it independently, and asked nothing of the outside world. The satellite power system (solar arrays, batteries, and power management electronics) became a masterpiece of design over decades of engineering refinement, within its assumptions.

These assumptions are still relevant, but they are incomplete for the future. We are in a period of unprecedented growth into a new space era, and the pace of change means the consequences of ignoring it are compounding in real time. More than 14,000 active satellites orbit Earth today. Orbital data center companies are securing funding and filing for constellations of hundreds of thousands of compute satellites. NASA and many commercial companies plan to establish a lunar base and extend industrial development there. Wireless power beaming contracts have been signed to deliver power between satellites or to the lunar surface. None of this was true just five years ago.

This article provides a survey of what has been built, an honest accounting of where the existing architecture will break, and an analysis of what must be designed before the window to design it closes.

Section I examines the engineering foundation: what we have achieved and the current landscape. Section II maps the emerging orbital power economy: beaming, refueling, servicing, and the arrival of data centers. Section III confronts the frontier cases where the isolation architecture breaks on the lunar surface. Section IV draws the lessons from Earth grid development, not as an analogy but as a failure mode to avoid.

The existing electrical power system (EPS) on a satellite contains three components: generation, storage, and distribution. Together they are designed to meet the mission’s power demand.

Solar power is the dominant method of power generation in space today.

Early satellites used silicon photovoltaic cells. Vanguard I (1958) was the first satellite to use solar cells to produce power, with less than 1 watt of output at roughly 10% efficiency. A silicon solar cell’s efficiency is capped by its bandgap of ~1.1 eV, which does not match the AM0 spectrum optimally. The unfiltered solar flux of 1,366 W/m² (per ASTM E490) reaching a satellite’s solar panel is rich in short-wavelength photons that silicon cannot capture efficiently.

The transition to gallium arsenide (GaAs, with a bandgap of ~1.42 eV) in the 1980s raised efficiencies to ~22% AM0. Today’s standard triple-junction cells, which stack InGaP (~1.85 eV), GaAs (~1.42 eV), and Ge (~0.67 eV), harvest three distinct spectral slices simultaneously and can reach ~30% AM0 efficiency.

Solar cell performance degrades over time in the harsh space environment, primarily due to high-energy particle radiation, extreme thermal cycling, and atomic oxygen (AO) erosion. For satellites operating at GEO, the dominant factor is radiation from high-energy electrons; thermal cycling and AO erosion are negligible, and average annual degradation is ~1-1.5% (the degradation rate is not linear). For satellites operating at LEO, thermal cycling fatigue becomes significant because of the high number of eclipse cycles and the large temperature swing per cycle, and AO erosion is no longer negligible. Combined with radiation, these effects produce an average annual degradation of ~3-5%. For any space mission, it is typical to plan for ~15-20% total power loss by end of life (EOL).
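To see how the annual rates above relate to the 15-20% EOL planning figure, here is a minimal sketch that compounds a constant yearly loss. The constant rate is a simplifying assumption (the text notes that real degradation is not linear), and the mission durations are hypothetical.

```python
def remaining_fraction(annual_rate: float, years: float) -> float:
    """Fraction of beginning-of-life power left after compounding a constant annual loss."""
    return (1.0 - annual_rate) ** years

# Hypothetical missions; the rates sit inside the ranges quoted in the text.
geo_loss = 1.0 - remaining_fraction(0.0125, 15)  # 15-year GEO mission at 1.25%/yr
leo_loss = 1.0 - remaining_fraction(0.04, 5)     # 5-year LEO mission at 4%/yr

print(f"GEO, 15 yr: {geo_loss:.1%} power loss at EOL")
print(f"LEO,  5 yr: {leo_loss:.1%} power loss at EOL")
```

Under these assumptions both cases land inside the 15-20% band, which is why a long GEO mission and a short LEO mission can end up with similar EOL margins.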

Except for very low-power CubeSats that may use body-mounted solar panels, most space missions require deployed solar arrays. Deployed solar arrays are often the largest structure on a satellite. Because launch cost scales with mass, the economic imperative is unmistakable: efficiency above all else, as every percentage point of efficiency gained saves kilograms of array mass.

A NASA survey of 389 space missions shows that empirical array specific power clusters tightly around 30 W/kg at the system level, with a floor of 1 W/kg and a maximum of 200 W/kg. Critically, the high-power, low-mass quadrant of the design space remains technologically inaccessible. This constraint matters enormously for the orbital data center discussion later.

The supply chain for space solar cells is moderately concentrated, with three dominant players. In the United States, Spectrolab (a Boeing subsidiary) and SolAero Technologies (a Rocket Lab subsidiary, acquired in 2022) are the two primary domestic producers of high-efficiency space-grade solar cells. AZUR Space (Germany) is the principal European producer. Commercial market research estimates that the three collectively hold about 60% of the global market. The remaining market is divided among Sharp (Japan), CESI (Italy), CETC (China), and others.

On top of that, production of gallium, the primary material for multi-junction solar cells, is dominated by China, which controls over 95% of global supply. Geopolitical escalation could halt GaAs solar cell manufacturing outright, cascading into the power systems of every satellite mission.

Beyond solar, two other power generation technologies matter.

Radioisotope Thermoelectric Generators (RTGs) convert the decay heat of Pu-238 (~0.57 Wt/g, half-life of 87.7 years) into electricity via thermocouples using the Seebeck effect. The thermoelectric conversion efficiency is bounded by the Carnot limit and by the thermocouple materials. It is typically in the single-digit percent range, with the majority of thermal power dissipated as waste heat.

Despite the low efficiency, an RTG has no moving parts and therefore no failure modes from mechanical wear, and it does not depend on the availability of strong sunlight. The waste heat radiated through the RTG’s fins also helps keep instruments warm. These attributes have extended interplanetary missions into regions that would not have been reachable with non-nuclear power sources. The Voyager MHW-RTGs produced ~470 We at launch in 1977 (3 RTGs, each ~158 We at beginning of mission). Today, nearly 50 years later, they are still operating in interstellar space and continue to return new data to Earth.
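The half-life quoted above sets an upper bound on how much power an RTG can still deliver decades later. A minimal sketch, assuming electrical output simply tracks the Pu-238 decay heat and using the Voyager launch figure from the text (the 48-year elapsed time is an illustrative choice):

```python
PU238_HALF_LIFE_YEARS = 87.7  # half-life quoted in the text

def decay_limited_power(p0_we: float, years: float) -> float:
    """Electrical power if output scaled only with isotope decay (an upper bound)."""
    return p0_we * 0.5 ** (years / PU238_HALF_LIFE_YEARS)

# ~470 We at launch in 1977, evaluated 48 years later.
voyager_bound_we = decay_limited_power(470.0, 48.0)
print(f"Decay-limited output after 48 years: {voyager_bound_we:.0f} We")
```

Actual output is lower than this bound, because the thermocouples themselves degrade over time in addition to the fuel decaying.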

The Pu-238 supply constraint is acute. The US stopped domestic production in the 1980s. Since then, DoE and NASA have drawn on existing inventories and supplemental purchases from Russia into the 2020s. In 2012, DoE and NASA began an effort to reestablish production at Oak Ridge National Laboratory. In 2023, DoE shipped its first production-quantity batch of 0.5 kg to Los Alamos National Laboratory, and it is targeting 1.5 kg/year by 2026.

Nuclear fission remains the unfinished third option. The history of space fission power has a long tail of ambition and a short tail of demonstrated hardware.

The KRUSTY experiment in March 2018 verified reactor neutronics in a space-simulated vacuum environment and confirmed self-regulating thermal stability. According to the project’s report, the nuclear and mechanical systems achieved TRL 5.

However, the distance from this demonstration to a flight-ready system is substantial, with challenges including low power conversion efficiency, barriers to scaling up, launch qualification, and radiation shielding.

A direct generation source may not always be available. For the majority of missions that rely on solar energy, the mission’s orbit, duration, distance from the Sun, or peak loads require onboard energy storage. Two categories of batteries are used in space: primary and secondary. Primary batteries are not rechargeable and are used for specific mission types or purposes. Secondary batteries are rechargeable and are used in the majority of missions.

Before diving into the specific types of batteries, it’s important to understand their specifications:

Ah (Ampere-hour): Battery capacity, measured as the total charge it can deliver.

Cycle Life: Number of charge-discharge cycles a battery can undergo before its performance degrades past a usable limit.

Operating Temperature: Range of temperatures a battery is qualified to operate in.

Wh/L (Volumetric Energy Density): Energy per unit of battery volume.

Wh/kg (Specific Energy): Energy per unit of battery mass.

The choice of battery for a mission is made carefully by evaluating the mission requirements against these specifications.

Primary batteries typically have very high energy density and long shelf life but, being non-rechargeable, offer only a single discharge. This makes them a good choice for short-duration missions, energy fail-safes, and deep space missions. Common primary chemistries include lithium sulfur dioxide (LiSO2), lithium carbon monofluoride (LiCFx), and lithium thionyl chloride (LiSOCl2). Thermal batteries, another commonly used primary type, deliver very high power for short periods and have been used on almost every Mars rover.

Secondary batteries serve a much wider range of space applications because they are rechargeable. Numerous types exist today, each with different characteristics that suit different space missions.

The underlying chemistry has evolved through several generations. Nickel-cadmium (NiCd) served early missions but suffered from memory effect and low specific energy. Nickel-hydrogen (NiH2) was used on the ISS from launch through 2020 and was designed for a ~38,000-cycle life in LEO, roughly 6.5 years at 16 cycles/day.

Lithium-ion batteries are among the most commonly used today because of their high energy density, long cycle life, and low self-discharge. However, thermal management is critical for these batteries.

Lithium-sulfur batteries offer significantly higher energy density, and many organizations are investing in them for upcoming missions. They are not yet widely used because they still face challenges such as faster capacity fade and the need for custom battery management systems.

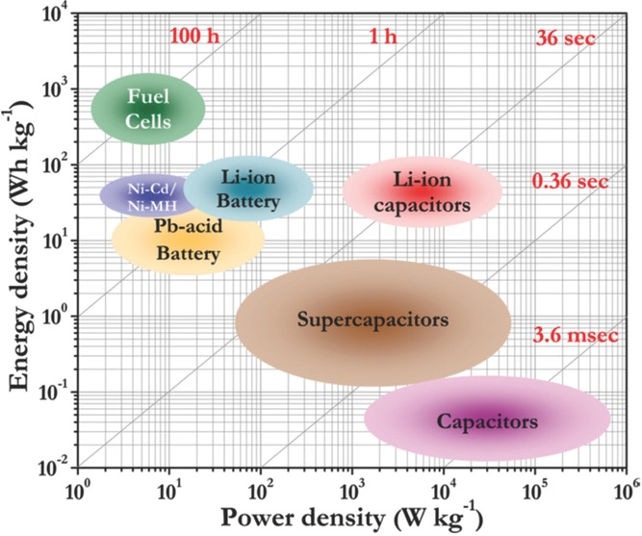

In battery design and selection, there is always a trade-off between high-power batteries and high-energy batteries. NASA’s power vs. energy density chart illustrates this.

Supercapacitors are designed to provide very high power density, with fast charge and discharge times and the ability to withstand millions of charge cycles.

Because traditional lithium-ion batteries are vulnerable to physical and thermal damage, they require extra management and protection, which increases mission mass and risk. Solid-state batteries are being researched as a way to achieve significantly higher energy density and improved safety. Kanadevia, in collaboration with JAXA and NASA, successfully conducted a solid-state battery demonstration mission. The demonstration exposed the battery on the exposed section of the ISS for 434 days and 562 charge-discharge cycles with no significant degradation.

The Power Management and Distribution (PMAD) system may be the least glamorous and most operationally consequential element of the spacecraft EPS. It includes the Power Conditioning Unit (PCU), which regulates and conditions power; the Power Distribution Unit (PDU), which routes power from sources to loads; the Battery Management Unit, which monitors and controls battery functions; and many other components.

Spacecraft PMAD designs commonly use one of several power bus architectures to optimize efficiency. An unregulated bus lets voltage swing with battery state of charge, minimizing conversion losses but requiring load-side regulation. A regulated bus uses a shunt or series regulator to hold constant voltage, simplifying loads at the cost of regulation dissipation. A hybrid (sun-regulated) bus, regulated in sunlight and unregulated in eclipse, is common in GEO where eclipses are infrequent.

The ISS EPS is probably the most complex power distribution system ever flown on a crewed spacecraft. (It is probably also the clearest demonstration of what happens when a system is designed without agreed standards.) The US segment generates power from solar arrays at ~160 V DC on the main bus, then steps it down through DC-DC converters to 124 V DC for loads. The Russian segment, by contrast, operates at 28 V DC. The two systems are not natively compatible. Power crosses the interface through converter units that step between 28 V and 124 V, with associated losses. Miles of wiring distribute power throughout the station. PMAD software manages hundreds of individually addressable loads, maintains load-shedding tables for operational contingencies, and orchestrates the startup sequencing that prevents inrush current from tripping breakers when large loads power up after eclipse.

The ISS dual-voltage architecture is not a design choice anyone would make today. It is the artifact of two agencies that began assembling the station from hardware developed independently, without ever agreeing on a common voltage standard. Every watt that crosses the 28 V/124 V interface incurs a conversion loss. That overhead persists for the station’s entire operational life. It’s a permanent tax on a decision made by omission before the station was built.

A satellite’s power budget is organized around load categories. For a typical communication satellite, for example, the breakdown is approximately:

Payload, including transponders, reflectors, communication systems: 60-70% of the total power. This is also the revenue-generating subsystem.

Attitude Determination and Control (ADCS), including reaction wheels, star trackers, GPS: 8-10%.

Thermal control, including heaters, heat pipes, radiators: 10-15%.

On-board computer and data handling: 3-5%.

Telemetry and monitoring: 3-5%.

The ratios may change from mission to mission, but they share one critical design property: determinism. The satellite operator must define the load profile before launch, simulate every operational mode, and design the EPS for the worst-case scenario with margin.

The load schedule is a known function. Generation is a calculated function of orbital geometry and cell performance. Battery capacity is sized by calculation. The array is then sized for EOL power at the worst seasonal sun angle, ensuring the battery can charge fully in every sunlit period and discharge only to its depth-of-discharge (DoD) limit in every eclipse. A margin of 5-25% is typically added, depending on design maturity.

This determinism is what current satellite architecture was optimized for. But future applications, such as space data centers, will struggle to achieve it.
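The sizing chain described above can be sketched in a few lines. This is a simplified illustration rather than a flight sizing tool: the function name, the constant load, the 90% charge and discharge efficiencies, and every number in the example call are assumptions chosen for readability.

```python
def size_eps(load_w, sunlit_min, eclipse_min, dod_limit, eol_fraction, margin,
             charge_eff=0.9, discharge_eff=0.9):
    """Return (battery_wh, array_eol_w, array_bol_w) for a constant load."""
    # Energy the loads draw from the battery during one eclipse.
    eclipse_energy_wh = load_w * eclipse_min / 60.0
    # Battery capacity so that one eclipse stays within the DoD limit.
    battery_wh = eclipse_energy_wh / (dod_limit * discharge_eff)
    # Extra array power needed to put that energy back during the sunlit arc.
    recharge_w = eclipse_energy_wh / (charge_eff * discharge_eff) / (sunlit_min / 60.0)
    # Array power required at end of life, including design margin.
    array_eol_w = (load_w + recharge_w) * (1.0 + margin)
    # Beginning-of-life power, oversized to absorb degradation.
    array_bol_w = array_eol_w / eol_fraction
    return battery_wh, array_eol_w, array_bol_w

# Hypothetical LEO satellite: 1 kW load, 60 min sun / 35 min eclipse,
# 30% DoD limit, 82% of BOL power left at EOL, 15% margin.
battery_wh, array_eol_w, array_bol_w = size_eps(1000.0, 60.0, 35.0, 0.30, 0.82, 0.15)
print(f"Battery: {battery_wh:.0f} Wh, array EOL: {array_eol_w:.0f} W, BOL: {array_bol_w:.0f} W")
```

Every input here is known before launch, which is exactly the determinism the text describes; a load that varies unpredictably breaks the first line of the calculation.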

The satellite EPS that we have today has achieved genuine engineering maturity: triple-junction solar cells for generation, Li-ion batteries with high energy density that survive tens of thousands of charge cycles, PMAD architectures with fault detection, isolation, and recovery logic, and deterministic power budgeting tools that predict mission performance through EOL. The power subsystem typically accounts for 8-12% of satellite dry mass, a fraction that has been stable for two decades because the optimization is mature.

But the optimization solved for a very specific problem statement: generate, store, and manage power within a single isolated node, with no external power interfaces and no network neighbors.

That problem statement is no longer sufficient. The next era demands a network, and a network requires fundamentally different design choices at every layer.

Advanced satellite missions require increasingly more power. Examples include direct-to-cell connectivity and AI-enabled Earth observation that requires high-throughput onboard inference. These missions are hitting the power ceiling imposed by array mass budgets. A SmallSat on a rideshare cannot carry the wing area and mass such missions demand. The commercial response is to generate power elsewhere and deliver it wirelessly.

Companies such as Star Catcher and Volta are developing capabilities on this front. Star Catcher is proposing a power network in which LEO satellites concentrate sunlight and beam it to a client satellite’s existing solar panels at 1-10 solar constants, with no hardware retrofit required on the client. The company set a record in November 2025 by wirelessly transmitting 1.1 kW of optical power to commercial solar panels at NASA’s Kennedy Space Center, and it aims for an on-orbit demonstration in the near future. Volta is planning a demonstration of wireless power transmission to the lunar surface to support future infrastructure on the Moon.

One practical challenge of wireless power beaming, among several, is beam pointing over inter-satellite distances of hundreds of kilometers. A laser system with a 1 m aperture transmitting at a wavelength of 1 µm has a diffraction-limited beam divergence of ~1.2 µrad (θ ≈ 1.22 λ/D), producing a spot radius of ~0.12 m at 100 km range. This is achievable with state-of-the-art laser pointing control, but it demands continuous fine pointing at sub-µrad accuracy.
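The divergence and spot size quoted above follow from the circular-aperture diffraction limit, θ ≈ 1.22 λ/D. A short sketch, assuming an ideal unobscured aperture and the small-angle approximation (function names are illustrative):

```python
def diffraction_half_angle_rad(wavelength_m: float, aperture_m: float) -> float:
    """Half-angle to the first Airy null for a circular aperture."""
    return 1.22 * wavelength_m / aperture_m

def spot_radius_m(wavelength_m: float, aperture_m: float, range_m: float) -> float:
    """Far-field spot radius using the small-angle approximation."""
    return diffraction_half_angle_rad(wavelength_m, aperture_m) * range_m

theta_rad = diffraction_half_angle_rad(1e-6, 1.0)   # 1 um laser, 1 m aperture
radius_100km = spot_radius_m(1e-6, 1.0, 100e3)       # 100 km link

print(f"Divergence: {theta_rad * 1e6:.2f} urad, spot radius at 100 km: {radius_100km:.3f} m")
```

Because the spot radius grows linearly with range, a pointing error of even 1 µrad displaces the beam by 0.1 m at 100 km, comparable to the spot itself.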

Orbit Fab has built RAFTI (Rapidly Attachable Fluid Transfer Interface), a docking port installable on satellites before launch and used for on-orbit propellant delivery. RAFTI has now been designed into hundreds of spacecraft across government and commercial programs, and the US Space Force designated RAFTI as an accepted refueling interface for military satellites in August 2024. Astroscale’s Life Extension In-Orbit (LEXI) servicer fleet has a take-or-pay fuel sale agreement with Orbit Fab for up to 1,000 kg of xenon, the first commercial fuel sale contract of its kind.

The standardization story here is probably more important than the technology. RAFTI became a de facto interface standard not through a regulatory process, standards body, or inter-agency agreement, but through procurement adoption. The Space Force designation, combined with subsequent commercial adoption, created a network effect that imposes a switching cost on any later competitor. This is a model in which a standard became universal because one influential buyer committed to it and everyone else followed.

Propellant service is not electrical power service, but it’s probably the best available model for how electrical power interface standards might eventually be established. The lesson, for good or bad, is that early government procurement decisions establish de facto standards. The absence of government procurement decisions for electrical interfaces may leave that field open to fragmentation.

The most consequential new entrant to the orbital power demand landscape is compute infrastructure. In November 2025, Starcloud launched a satellite carrying an Nvidia H100 GPU, which successfully trained a small language model (nanoGPT) in orbit and ran inference on Google’s Gemma, marking the first demonstration of a state-of-the-art commercial AI chip operating in space and the first LLM trained in orbit. Besides Starcloud, many other players are developing plans to operate space data centers, including Google (Project Suncatcher), SpaceX, Blue Origin, and Aethero.

The commercial logic is driven by a genuine terrestrial crisis. Global data center electricity consumption reached 415 TWh in 2024, and IEA projects it could exceed 1,000 TWh by 2030. Local governments are rejecting new data center proposals that strain grid capacity and water use, and industry will face a huge power gap between projected data center load and available generation and interconnection. Against this backdrop, space offers some advantages: uninterrupted solar in sun-synchronous orbit, relaxed regulations, and a thermal sink that enables purely radiative heat rejection with no water consumption. But it comes with challenges.

Generation Scale

A 1 GW orbital data center operating at 30% cell efficiency under AM0 irradiance would require approximately 2.5 km² of solar array area. At the current average specific power of 30 W/kg, that is over 30,000 metric tons of arrays alone, before structure, thermal, compute, and communication hardware. Even at Starship’s projected $100/kg to LEO, that is more than $3B in launch cost per gigawatt for the arrays alone.
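The arithmetic behind these figures is simple enough to reproduce. A minimal sketch using only the numbers stated in the text (AM0 flux, 30% efficiency, 30 W/kg, $100/kg); it ignores packing factor, pointing losses, and degradation, so real arrays would be larger:

```python
AM0_FLUX_W_PER_M2 = 1366.0  # per ASTM E490, as quoted earlier in the article

def array_requirements(power_w, cell_efficiency, specific_power_w_per_kg, launch_usd_per_kg):
    """Return (area_km2, mass_tonnes, launch_cost_usd) for an ideal sun-pointed array."""
    area_m2 = power_w / (cell_efficiency * AM0_FLUX_W_PER_M2)
    mass_kg = power_w / specific_power_w_per_kg
    return area_m2 / 1e6, mass_kg / 1e3, mass_kg * launch_usd_per_kg

area_km2, mass_t, cost_usd = array_requirements(1e9, 0.30, 30.0, 100.0)
print(f"Area: {area_km2:.2f} km^2, mass: {mass_t:,.0f} t, launch: ${cost_usd / 1e9:.1f}B")
```

Note that mass, and therefore launch cost, depends only on specific power, which is why the 30 W/kg cluster matters more to this estimate than cell efficiency does.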

Thermal Rejection

An Nvidia H100 GPU dissipates approximately 700 W at TDP. A server with eight H100s dissipates ~5.6 kW, and a cluster of one thousand H100s generates ~700 kW of waste heat. In space, all of this heat must be rejected by thermal radiation; there is no conduction or forced convection to the environment. The Stefan-Boltzmann law governs the required radiator area.

\(P = \varepsilon \cdot \sigma \cdot T^4 \cdot A\)

In an example scenario with ε = 0.85 (typical for a spacecraft radiator), σ = 5.67×10⁻⁸ W/m²K⁴, and T = 320 K (47°C operating temperature after heat transport), the radiator rejects ~505 W/m². Even under this ideal calculation (real net radiation will be lower because the radiator also absorbs heat from the Sun and Earth), rejecting 700 kW requires about 1,385 m² of radiator area.

A data center of a few hundred megawatts would require radiators thousands of times larger than anything ever deployed. This is not a materials problem or a cost problem. It is a fundamental scaling problem with the physics of radiative heat rejection.
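The radiator estimate can be checked directly from the Stefan-Boltzmann relation. A minimal sketch, assuming a single-sided ideal radiator facing deep space with no absorbed solar or Earth flux (function names are illustrative):

```python
SIGMA = 5.67e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)

def radiated_flux_w_per_m2(emissivity: float, temp_k: float) -> float:
    """Ideal radiated power per unit area, ignoring absorbed environmental heat."""
    return emissivity * SIGMA * temp_k ** 4

def radiator_area_m2(heat_w: float, emissivity: float, temp_k: float) -> float:
    """Radiator area needed to reject heat_w at a uniform surface temperature."""
    return heat_w / radiated_flux_w_per_m2(emissivity, temp_k)

flux = radiated_flux_w_per_m2(0.85, 320.0)
area_700kw = radiator_area_m2(700e3, 0.85, 320.0)   # one thousand H100s
print(f"Flux: {flux:.0f} W/m^2, area for 700 kW: {area_700kw:.0f} m^2")
```

Area scales linearly with heat load at a fixed temperature, so a load one thousand times larger needs a radiator one thousand times larger.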

The calculation above assumes a conventional liquid cooling loop as the thermal transport mechanism, which constrains the radiator temperature to a narrow operating window above the coolant loop temperature but below the chip junction temperature. Emerging technologies such as sorption-based thermal management can decouple the two and allow the radiator to reject heat at a much higher temperature than the chip junction temperature. The effect is dramatic because radiative heat rejection scales as T⁴, which reduces the required area substantially. But the fundamental scaling challenge remains regardless.
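The T⁴ dependence means the benefit of a hotter radiator can be expressed as a simple ratio, independent of emissivity and heat load. In the sketch below, the 400 K and 500 K radiator temperatures are hypothetical values chosen for illustration, not figures from any specific sorption system, and the energy cost of lifting heat to the higher temperature is not modeled.

```python
def area_ratio(baseline_temp_k: float, new_temp_k: float) -> float:
    """Radiator area at new_temp_k relative to baseline_temp_k for the same heat load."""
    return (baseline_temp_k / new_temp_k) ** 4

# Baseline is the 320 K radiator from the text; higher temperatures are illustrative.
ratios = {t: area_ratio(320.0, t) for t in (320.0, 400.0, 500.0)}
for temp_k, ratio in ratios.items():
    print(f"{temp_k:.0f} K radiator needs {ratio:.0%} of the 320 K area")
```

Even a large reduction like this changes the constant, not the scaling: area still grows linearly with the heat load.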

The Honest Timeline

Google’s Project Suncatcher feasibility study projects that gigawatt-scale orbital data centers become commercially viable if launch costs reach $200/kg, which may occur around 2035 if Starship achieves high-rate operations. The near-term commercially tractable cases are edge compute for Earth observation, latency-sensitive inference for defense, and hosted payload compute for constellations that already have power-rich buses.

The multi-hundred-megawatt hyperscale case is a 2035+ scenario contingent on launch cost reductions and radiator technology that does not yet exist at required scale.

NASA’s analysis finds that a lunar base camp supporting six crew members requires approximately 28 kW continuous, with an ISRU oxygen production plant adding another 26 kW, for a combined baseline of 54 kW for basic operations. Industrial ISRU operations producing rocket propellant at commercially meaningful rates require megawatt-scale power generation.

A 40 kW solar array at 30% efficiency needs roughly 100 m² of cell area at normal incidence under the ~1,366 W/m² solar flux at the Moon. Allowing for packing factor, off-normal sun angles, dust, and degradation, approximately 300 m² of deployed array area is a more realistic figure, plus tracking mechanisms, dust mitigation, structural support, and cable runs across regolith terrain with no construction industry and no tolerance for failure. The 2018 Martian dust storm led to the end of the Opportunity mission, declared in February 2019 after more than 1,000 unanswered recovery commands, while nuclear-powered Curiosity kept driving. That contrast suggests that solar power as the primary energy source for planetary surface operations may be operationally unacceptable where survival is at stake.

Fission scales differently. The Kilopower 10 kWe system concept is projected at ~1,500 kg total mass, a specific mass of ~150 kg/kWe that improves as power level increases. Four Kilopower units produce 40 kWe, sufficient for an outpost per NASA’s analysis. The KRUSTY experiment demonstrated the viability, but the qualification gap to get a flight-ready system is still huge, as previously discussed.

Lunar night lasts 354 hours at the equator. A habitat requiring 40 kW continuous must store 14,160 kWh. At current Li-Ion specific energy of ~200 Wh/kg, this requires ~71,000 kg of batteries. This mismatch between battery technology and the application requirement is probably fundamental.
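The storage figures above can be reproduced directly. A minimal sketch using only the numbers in the text; it counts cell-level specific energy alone and ignores depth-of-discharge limits, packaging, and thermal control, all of which would push the mass higher:

```python
def night_storage(load_kw: float, night_hours: float, specific_energy_wh_per_kg: float):
    """Return (energy_kwh, battery_mass_kg) needed to carry a constant load through the night."""
    energy_kwh = load_kw * night_hours
    mass_kg = energy_kwh * 1000.0 / specific_energy_wh_per_kg
    return energy_kwh, mass_kg

# 40 kW habitat, 354-hour equatorial night, ~200 Wh/kg Li-ion.
night_energy_kwh, liion_mass_kg = night_storage(40.0, 354.0, 200.0)
print(f"Energy: {night_energy_kwh:,.0f} kWh, Li-ion mass: {liion_mass_kg:,.0f} kg")
```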

Regenerative fuel cells (RFCs) offer a more viable storage mass budget. An RFC electrolyzes water during the lunar day when solar power is available, stores the gases, then recombines them in a fuel cell during the lunar night, producing electricity and water that is fed back to the electrolyzer. NASA studies find RFC specific energy of approximately 830 Wh/kg, much higher than Li-ion. The trade-off is round-trip efficiency, which for an RFC is only 50-60%. This means the solar array must generate roughly twice the energy the habitat consumes at night to account for RFC round-trip losses. An RFC also requires hydrogen and oxygen storage and water management systems with tight closure requirements.
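The trade between storage mass and round-trip efficiency can be made concrete with the figures quoted above. A minimal sketch, assuming the 830 Wh/kg figure applies to the whole RFC system and treating the 14,160 kWh night demand from the preceding paragraph as the energy to be delivered:

```python
NIGHT_ENERGY_KWH = 40.0 * 354.0  # 40 kW habitat over a 354-hour night

def rfc_mass_kg(energy_kwh: float, specific_energy_wh_per_kg: float = 830.0) -> float:
    """Storage system mass at the quoted RFC specific energy."""
    return energy_kwh * 1000.0 / specific_energy_wh_per_kg

def daytime_charge_energy_kwh(energy_kwh: float, round_trip_eff: float) -> float:
    """Energy the array must supply during the day to deliver energy_kwh at night."""
    return energy_kwh / round_trip_eff

rfc_mass = rfc_mass_kg(NIGHT_ENERGY_KWH)
charge_low_eff = daytime_charge_energy_kwh(NIGHT_ENERGY_KWH, 0.50)
charge_high_eff = daytime_charge_energy_kwh(NIGHT_ENERGY_KWH, 0.60)

print(f"RFC mass: {rfc_mass:,.0f} kg")
print(f"Daytime charging energy: {charge_high_eff:,.0f} to {charge_low_eff:,.0f} kWh")
```

The mass falls to roughly a quarter of the Li-ion figure, while the charging energy rises to between 1.7 and 2 times the night demand, which is the array oversizing the text refers to.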

Thermal storage using regolith-derived phase-change materials, heating a molten medium during the lunar day and drawing electricity through a Stirling engine at night, is an active ESA/NASA research direction which can potentially eliminate earth-supplied storage media, but it remains at low TRL currently.

Every satellite is a single node, with generation, storage, and PMAD serving a known, fixed set of loads and topology. Surface development introduces multiplicity for the first time: habitats, pressurized rovers that require variable charging, unpressurized logistics rovers, ISRU plants with surge power demands during electrolysis, communication relays, and additional modules from subsequent missions must all be supported.

This creates several distinct problems that satellite PMAD was never designed to solve:

Multiple generation sources with different terminal characteristics must share a variable load automatically. On Earth, droop control handles this: each source slightly reduces its terminal voltage as its output increases, creating an automatic load-sharing equilibrium without explicit communication between sources. Tuning droop control for space systems with very different source impedances (solar array vs. reactor vs. battery) is non-trivial and has no heritage at the required scale.
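The load-sharing equilibrium that droop control produces can be illustrated with a steady-state DC model. This is a sketch under stated assumptions: each source is modeled as V = V_no_load − R_droop · I, the load is a fixed current, and the 120 V setpoint and droop resistances are hypothetical values, not a proposed standard.

```python
def droop_share(sources, load_current_a):
    """Solve the DC bus voltage and per-source currents for droop-controlled sources.

    sources: list of (no_load_voltage_v, droop_resistance_ohm) tuples.
    Source limits and reverse current are not modeled in this sketch.
    """
    conductance_sum = sum(1.0 / r for _, r in sources)
    # Kirchhoff's current law: sum((v_nl - v_bus) / r) == load_current
    v_bus = (sum(v / r for v, r in sources) - load_current_a) / conductance_sum
    currents = [(v - v_bus) / r for v, r in sources]
    return v_bus, currents

# Two hypothetical sources on a 120 V bus; the stiffer one (lower droop) takes more load.
v_bus, currents = droop_share([(120.0, 0.02), (120.0, 0.04)], load_current_a=300.0)
print(f"Bus: {v_bus:.1f} V, currents: {[round(i, 1) for i in currents]} A")
```

With equal no-load voltages, current divides in inverse proportion to droop resistance, so the sharing ratio is set entirely by tuning and needs no communication link between sources.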

When a new module arrives and connects to the power bus, the PMAD must detect the new node, verify its interface compatibility, accept its load, and update its fault model. And it must do all of this autonomously, without human intervention from Earth. Current space PMAD assumes a static topology validated at launch and does not include node discovery protocols.
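No such protocol exists today, so the following is purely a hypothetical sketch of the admission steps listed above (detect, verify compatibility, accept load, update the bus model). The class names, fields, 5% voltage tolerance, and rejection messages are all invented for illustration; the loads reuse the 28 kW and 26 kW figures from earlier in the article.

```python
from dataclasses import dataclass, field

@dataclass
class NodeDescriptor:
    node_id: str
    nominal_voltage_v: float
    max_load_w: float

@dataclass
class PowerBus:
    nominal_voltage_v: float
    capacity_w: float
    voltage_tolerance: float = 0.05          # hypothetical +/-5% compatibility window
    nodes: dict = field(default_factory=dict)

    def committed_load_w(self) -> float:
        return sum(n.max_load_w for n in self.nodes.values())

    def admit(self, node: NodeDescriptor):
        # Step 1: verify interface compatibility before closing any contactor.
        if abs(node.nominal_voltage_v - self.nominal_voltage_v) > self.voltage_tolerance * self.nominal_voltage_v:
            return False, "voltage mismatch"
        # Step 2: accept the load only if generation capacity remains.
        if self.committed_load_w() + node.max_load_w > self.capacity_w:
            return False, "insufficient capacity"
        # Step 3: register the node so later fault handling knows it exists.
        self.nodes[node.node_id] = node
        return True, "admitted"

bus = PowerBus(nominal_voltage_v=120.0, capacity_w=54_000.0)
arrivals = [
    NodeDescriptor("habitat", 120.0, 28_000.0),
    NodeDescriptor("isru-plant", 120.0, 26_000.0),
    NodeDescriptor("rover-28v", 28.0, 2_000.0),
    NodeDescriptor("late-module", 120.0, 5_000.0),
]
results = [bus.admit(node) for node in arrivals]
for node, (ok, reason) in zip(arrivals, results):
    print(f"{node.node_id}: {reason}")
```

The rejected 28 V rover is the ISS interface problem in miniature: without an agreed bus voltage, a compatible connection is impossible without a dedicated converter.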

Earth-Moon round-trip communication delay is ~2.6 seconds. Earth-Mars one-way delay ranges from 3 to 22 minutes. A cascading power fault in a crewed Mars habitat can progress from detectable to life-threatening in less time than that. Ground-controlled fault response, such as the ISS model, is physically impossible beyond the Earth-Moon system. The PMAD must detect, diagnose, isolate, and recover from faults autonomously, in safety-critical time windows, across a multi-node network, with a system capable of handling failure modes that were not enumerated at launch.
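These delays are set by the speed of light and cannot be engineered away. A quick check, assuming approximate distances (mean Earth-Moon distance of about 384,400 km, and Earth-Mars distances of roughly 54.6 million km at closest approach and 401 million km at the farthest):

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def one_way_delay_s(distance_km: float) -> float:
    """Light travel time over a straight-line distance, ignoring relay and processing time."""
    return distance_km * 1e3 / SPEED_OF_LIGHT_M_PER_S

moon_round_trip_s = 2.0 * one_way_delay_s(384_400.0)
mars_min_one_way_min = one_way_delay_s(54.6e6) / 60.0
mars_max_one_way_min = one_way_delay_s(401e6) / 60.0

print(f"Earth-Moon round trip: {moon_round_trip_s:.2f} s")
print(f"Earth-Mars one way: {mars_min_one_way_min:.1f} to {mars_max_one_way_min:.1f} min")
```

A command-and-response cycle to Mars takes twice the one-way figure, so a ground-in-the-loop fault response is measured in tens of minutes at best.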

No governing body has specified what voltage, connector, communication protocol, or fault current limit a cislunar power network must use. This absence is an active harm accumulating with every piece of hardware that launches without agreed interfaces.

The International Space Power System Interoperability Standards (ISPSIS) are probably the closest thing to such a specification, covering comprehensive power quality requirements. But they focus on the load side rather than the generation or distribution side, do not address physical interfaces, and are designed mostly around ISS heritage with 120 V and 28 V systems.

The Artemis Accords, signed by 63 countries to date, address scientific data sharing, emergency assistance, transparency in operations, and registration of activities, among other topics. They do not address electrical power interoperability.

The contrast with propellant transfer is instructive. Orbit Fab’s RAFTI achieved de facto standardization through a sequence of procurement. No standards body was involved. The procurement community made the decision by spending money on one specific interface specification, and the market followed.

The challenge here is not technical. Bus voltage levels, power negotiation protocols, and node discovery protocols all have equivalents in terrestrial industrial power systems. The challenge is convening the stakeholders (government agencies and commercial operators) to agree on a specification before the hardware that must conform to it has already landed.

The commercial pressure makes this more urgent, not less. SpaceX, Blue Origin, and commercial habitat providers are building hardware on their own schedules and have strong competitive incentives to optimize for their own systems rather than the network they will eventually share with neighbors. A commercial operator who builds to a proprietary power bus voltage has slightly better system performance and full control over their supply chain. A commercial operator who builds to an agreed standard accepts a small performance penalty but can exchange power with other compliant operators, including during emergencies.

Questions about broader collaboration across nations remain unanswered. Major space players such as China and Russia have not signed the Artemis Accords and most likely will not do so in the foreseeable future.

One question readers should think about: Are we heading toward a competitive space race over territory and control, or building a future where space is explored collectively for humanity?

Earth’s electrical grid is probably the most cited analogy for what the cislunar infrastructure must become. It is also, in its specifics, a failure mode to avoid at all costs.

Earth’s grid accumulated over 140 years through locally rational decisions that no one would make from scratch today. It works extraordinarily well by some measures, providing reliable power at scale, and extraordinarily poorly by others.

Edison’s direct-current Pearl Street Station in Manhattan (1882) was optimal for his technology and his customers. Tesla and Westinghouse’s alternating current was technically superior at transmission scale. The war of currents resolved in AC’s favor, but by the time it did, DC systems were already embedded in infrastructure that couldn’t be replaced simply.

The 60 Hz vs. 50 Hz split between the US and much of the rest of the world emerged from early, region-specific standardization during grid buildout. Neither choice was inherently wrong, but both became lasting interoperability constraints. As a result, grids operating at different frequencies cannot be directly connected via AC without frequency conversion, and they are typically connected through HVDC links.

The Northeast Blackout of 2003 affected 55 million people across eight US states and Ontario, Canada, because a transmission line failure and a software bug in Ohio allowed a fault to cascade through a grid that had been expanded without adequate relay coordination.

The disjointed-grid problem is institutional and not technical. Each increment of Earth’s grid was built by an actor who optimized their own system and did not bear the cost of future neighbors. The cost of incompatibility only became visible when the network grew large enough that the issues compounded. By then, the incumbent infrastructure was too embedded to replace.

Many threads are converging in space power ecosystems. The industrial supply chain is producing the technology. The servicing market is proving that on-orbit resources can be bought, sold, and delivered. The power beaming ecosystem is demonstrating that electrical energy can be transmitted wirelessly across meaningful distances. And orbital data center and surface demands are placing the largest energy bets in space history, bets that only make sense if power infrastructure at unprecedented scale gets built.

What’s missing is the coordination layer: the interface standard, the policy framework, the institutional convening. Without it, each of these threads optimizes locally and the intersections between them become friction, to the point where adjacent systems cannot help each other in an emergency because they were never designed to interact.

The satellite proved that space power engineering can achieve genuine mastery within an island. But the demands of the next computing era and the next exploration era cannot be met by islands alone.

Space power infrastructure is being designed NOW.

We have a once-in-history opportunity to design a cislunar power grid from scratch. The window is open now but will close soon. The decisions being made today by government agencies and commercial players will determine whether the space economy’s power infrastructure is well designed or accumulated over time.

History suggests that accumulated-over-time infrastructure is very hard to fix.

NASA (2025), State-of-the-Art of Small Spacecraft Technology: Chapter 3 Power. https://www.nasa.gov/smallsat-institute/sst-soa/power-subsystems/

Gary L. Bennett (2006), Space Nuclear Power: Opening the Final Frontier, AIAA, 4th International Energy Conversion Engineering Conference and Exhibit

Office of Nuclear Energy (2023), US Department of Energy Completes Major Shipment of Plutonium-238 for NASA Missions. https://www.energy.gov/ne/articles/us-department-energy-completes-major-shipment-plutonium-238-nasa-missions

MRA (2026), Space Solar Cells Future Forecasts: Insights and Trends to 2033, https://www.marketreportanalytics.com/reports/space-solar-cells-83318

SatNow (2026), What are the Different types of Batteries used in Space? https://www.satnow.com/community/what-are-the-different-types-of-batteries-used-in-space

John Gould (2021), NASA Seeks to Create a Better Battery with SABERS, https://www.nasa.gov/aeronautics/nasa-seeks-to-create-a-better-battery-with-sabers/

Yu Miyazawa, et al (2025), Space Demonstration of All-Solid-State Lithium-Ion Batteries Aboard the International Space Station, Aerospace, 12(6), 514. https://doi.org/10.3390/aerospace12060514

Eric B. Gietl, et al (2000). The Electric Power System of The International Space Station - A Platform for Power Technology Development, Aerospace Conference

Anthony J. Colozza (2020), Small Lunar Base Camp and ISRU Oxygen Production Facility Power System Comparison, NASA

NASA, Fission Surface Power program, https://nasa.gov/exploration-systems-development-mission-directorate/fission-surface-power

David I. Poston, Marc A. Gibson, Thomas Godfroy & Patrick R. McClure (2020), KRUSTY Reactor Design, Nuclear Technology 206: sup1, S13–S30, DOI: 10.1080/00295450.2020.1725382.

Travis Beals (2025), Exploring a space-based, scalable AI infrastructure system design, Google, https://research.google/blog/exploring-a-space-based-scalable-ai-infrastructure-system-design/

IEA, Energy Demand From AI, https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai

David Kornuta et al (2019), Commercial Lunar Propellant Architecture: A collaborative study of lunar propellant production, Reach Volume 13

Mario F. Palos et al (2020), Lunar ISRU Energy Storage and Electricity Generation, Acta Astronautica 170:412–420.

Jeff Foust (2024), Volta Space Technologies unveils plans for lunar power satellite network, SpaceNews, https://spacenews.com/volta-space-technologies-unveils-plans-for-lunar-power-satellite-network/

Kevin Barry (2023), State of the Industry Report on Investment and Development of Space Solar Power, Acta Astronautica, 223

Wikipedia, Space-Based Solar Power. https://en.wikipedia.org/wiki/Space-based_solar_power