Story highlights

Anthropic's Claude and other LLMs were used by the US military in the Iran strikes for intelligence, targeting and simulations. But the Pentagon had already expanded its AI use before the strikes, and most big AI companies are involved.

While the focus appears to be on the Pentagon having used Anthropic's Claude AI models in the attack on Iran on Saturday (Feb 28), Claude is not the only artificial intelligence tool in the hands of the US military. In the past few years, the US Department of Defense has been accumulating several AI models and tools to help in warfare, from simulations to intelligence assessment and more. Here is what we know.

Not just Anthropic: Pentagon has contracts with several major AI companies

The Pentagon awarded contracts worth up to $200 million in July 2025 through the Chief Digital and Artificial Intelligence Office (CDAO) for frontier AI prototyping and scaling adoption in warfare and war planning. The focus includes large language models (LLMs), agentic workflows, and classified and unclassified deployments for national security, warfighting, intelligence, and enterprise use.

Besides Anthropic, the deals were reached with OpenAI, Google and xAI.

How Anthropic AI became embedded in US defence

Anthropic began significant engagement with US defence and intelligence agencies in late 2024 and into 2025. In November 2024, it partnered with Palantir and Amazon Web Services to supply Claude to US defence and intelligence systems, including classified environments. In June 2025, Anthropic introduced Claude Gov, a version tailored for government and national security workflows, which was in active use at US intelligence and defence agencies by late 2025.

Trending Stories

Footage of damaged Pakistani radar released in Operation Sindoor documentary | WATCH

India releases documentary film on Operation Sindoor | WATCH

Who is Jaylan Amhad Davis? 18-year-old turns himself in for Oklahoma 'Sunday Funday' mass shooting

Trump's 'Project Freedom' gets boost as Saudi Arabia, Kuwait lift curbs in Strait of Hormuz: Report

US-Iran war: Tehran accuses US of violating ceasefire with attack on ships in Strait of Hormuz

Will Vijay become Tamil Nadu Chief Minister? Permutations and combinations as resort politics starts

How can Vijay miss out on Tamil Nadu CM post despite TVK emerging as single largest party? 3 scenarios EXPLAINED

How can Vijay become Tamil Nadu CM despite TVK not getting majority? 4 options EXPLAINED

Shocking ticket scandal behind moving IPL 2026 final from Bengaluru: BCCI Secretary

Who will get Ted Turner’s fortune?

'Let them impose President's Rule': Mamata Banerjee refuses to resign again even as post-poll violence engulfs West Bengal

Bought an iPhone for AI Siri? Apple may repay you up to $95: Check how to claim refund

Russia-Ukraine War: Will Zelensky Cross Putin's Red Line?

Tamil Nadu Elections: What Are Vijay's Options For Power?

Did Manon confirm exit from KATSEYE during livestream amid hiatus? Here's what we know

Austria shooting: At least three killed outside Linz restaurant

200+ American targets in the Gulf hit by Iran, much more than acknowledged by the US, claims report

New Princess Diana Documentary: Story of 'the people's princess' in her own words

South Korean actor Lee Jae Wook, best known for Alchemy of Souls and Extraordinary You to begin military service; agency confirms date

Not the first time? Chandranath Rath is not the only Suvendu Adhikari aide to die under mysterious circumstances | All details here

Avatar director James Cameron hit with lawsuit over alleged unauthorized use of actress’s features

Russia-Ukraine War: Ukraine Unleashes Massive 347-Drone Blitz Near Moscow

Nostradamus prophecies for 2026: Bees, 7 months great war, 3 fires and more

Daniel Kinahan: Irish Drug Lord Caught by Dubai Police After Years on the Run

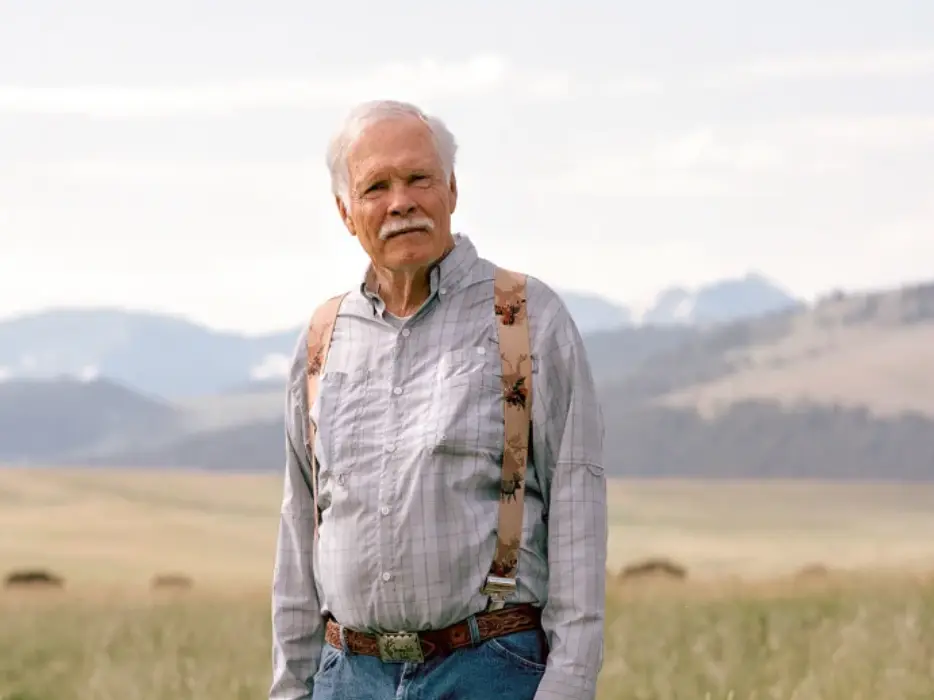

‘Bigger than two US states’: The secret reason Ted Turner bought 2 million acres

Mumbai family death mystery deepens: Rat poison found in watermelon and victims’ bodies

Mamata Banerjee ceases to be CM after Governor dissolves West Bengal Assembly

‘Business pressure and mental health’: Why Ted Turner father took his own life back in 1963?

'Draft Limits': Why the USS Abraham Lincoln cannot enter most ports

‘Grandest scientific experiment’: World’s largest fusion reactor nears reality, seeks to replicate Sun’s energy

BTS members net worth 2025: Who is the richest K-Pop sensation among them?

'Brass Screws': How the USS Abraham Lincoln pushes 100,000 tons

In July 2025, the Department of Defence awarded AI firms, including Anthropic, contracts valued at up to $200 million for AI services. Public contracting records indicated only a small portion had been paid by early 2026, suggesting gradual integration rather than a one-time purchase. These were usage contracts and partnerships allowing the DoD and intelligence networks to access Claude through secure arrangements.

Claude AI: The core asset of Pentagon from Anthropic

Anthropic’s Claude family of large language models was at the disposal of the Pentagon until the contract dispute in late February. Before the contract was put on hold, Anthropic provided custom safeguarded versions of its tools for government and classified use via partners such as Palantir and Amazon Web Services Top Secret Cloud. Anthropic’s AI tools were uniquely integrated into classified networks until the dispute, after which the company was designated a “supply chain risk” on February 27 by the Trump administration. It was expected to take at least six months for the Pentagon to transition away from Anthropic’s AI tools.

The primary AI models at the centre of reports on the Iran strikes were from Anthropic’s Claude family. Claude, designed for reasoning, summarising and assistance tasks, and Claude Gov were reportedly used by the military. Variants such as Claude Opus or custom government-deployed versions were said to be involved.

Key areas in which Claude AI was used: Intelligence assessment, target identification, battle simulation

In the strikes on Iran, the Claude AI models were used in intelligence assessment, target identification, and simulation of battle scenarios for the US military, according to multiple reports. US Central Command (Centcom) in the Middle East used the models to process and analyse vast amounts of data, including intercepts, satellite imagery and signals intelligence, to generate summaries, threat evaluations and situational insights.

In intelligence assessment, Claude analysed data to evaluate threats, enemy positions and situational awareness.

In target identification, the AI models helped locate, prioritise, cross-reference and confirm high-value targets such as Iranian leadership compounds, military assets or strategic sites.

In simulation of battle scenarios, Anthropic’s tools were used to model potential outcomes, rehearse strike sequences, predict risks and collateral damage, and support operational planning.

Claude was not used as a fighter, but as a helper

In all these cases, Claude’s role was as a decision-support tool, providing insights, summaries and simulations to human operators. As per reports, Claude was not used to independently control weapons systems during the Iran attack. It did not make lethal decisions without humans and did not act as a mastermind of the strikes. Its involvement was limited to data processing, analysis and strategic assistance rather than direct weaponisation, said the reports.

The Anthropic vs Trump administration dispute: What went wrong, and why was Claude still used in Iran?

Just ahead of the Iran strike, US President Donald Trump issued a directive banning federal agencies from using Anthropic’s tools following a contract dispute over ethical guardrails. Defence Secretary Pete Hegseth was involved in the designation of Anthropic as a supply chain risk and in directing agencies to cease using its technology, triggering a phase-out period.

Despite the ban, integration of Claude into intelligence assessment, war simulation and target identification systems was reportedly so deep that the Iran strikes proceeded with Claude’s support anyway, during the transition period.

The dispute centered on usage rights. The Pentagon demanded broader rights to use Claude “for all lawful purposes,” including potential battlefield targeting and weapon support. Anthropic refused to remove its ethical constraints, which are part of its Constitutional AI framework. This means Claude is programmed to reject use in fully autonomous lethal weapons and will not authorise strikes without human oversight. It also refuses use for mass domestic surveillance of US citizens.

The Pentagon sought unrestricted military use that could have included mass surveillance or fully autonomous lethal weapons, but Anthropic declined to remove safeguards, leading to contract termination threats and the ban.

Beyond Anthropic: Other AI companies with footprint in US military systems

OpenAI’s ChatGPT and underlying GPT series models, including frontier LLMs such as o1 or successors, have reportedly been used by the Pentagon. These were initially deployed for unclassified tasks. Following the Anthropic dispute, OpenAI reached a new agreement for classified network deployment of its models and tools, with safety guardrails in place, even as the Pentagon had sought use for any lawful purpose.

Alphabet, Google’s parent company, has provided Gemini for Government products to the Pentagon, available for unclassified use, including through the GenAI.mil platform that began rolling out in late 2025. The company has been in negotiations for expansion into classified systems.

Elon Musk’s xAI, the parent of Grok, has provided a Grok for Government suite for military use, initially for unclassified tasks. The company signed an agreement in February for use in classified systems as well, positioning it as a potential rapid replacement amid the Anthropic fallout.

GenAI.mil, Grok, Gemini and ChatGPT were mainly used for unclassified purposes, while classified use was initially more closely associated with Anthropic’s deployments. Once the dispute emerged, classified use expanded to include xAI and OpenAI systems.

Basically, OpenAI and xAI stepped in rapidly to meet the classified AI needs of the US military after Anthropic walked away from expanded terms.

One thing is now very clear: AI has become deeply integrated in US military planning and execution by early 2026, particularly in intelligence assessment, target identification and operational simulations. That has become evident in the Iran strikes. It will be used again. We are already in the AI era of war.

Disclaimer: WION takes utmost care to accurately and responsibly report ongoing conflicts in West Asia involving Israel, Iran, US, Gulf nations and non-state actors like Hezbollah, Hamas, Houthis, Islamic State, and others. Claims and counterclaims, disinformation and misinformation are being made online and offline. Given this context, WION cannot independently verify the authenticity of all statements, social media posts, photos and videos.
