Welcome, humans.

ICYMI: In our latest podcast episode, we talk to Carina Hong, who dropped out of Stanford's PhD program at 24 to build “mathematical superintelligence.” And her startup Axiom Math just raised $64M to make it happen.

This isn't about making ChatGPT better at algebra. Carina's building an AI that discovers entirely new mathematical theorems, proves them formally, and gets smarter with each iteration. They've already solved a 130-year-old problem about Lyapunov functions that stumped Poincaré, Newton, and Lagrange.

Why math? Because it's the algorithmic pillar of AI for science. Master math, and you unlock chip design, quantum computing, aircraft safety, and every scientific field that runs on proofs. Carina calls it the “bedrock of the digital world.”

This is one of our favorite episodes to date, y’all. It’s technical, but absolutely fascinating.

Here’s what happened in AI today:

OpenAI developed two new screenless AI devices.

ChatGPT’s web traffic share dropped below 70% as Gemini climbed to ~20%.

ByteDance planned $14B spend on NVIDIA AI chips in 2026.

ACCA will halt remote exams from March 2026 due to AI cheating.

OpenAI's Building Its First AI Devices—And They're Not Phones

Right now, when you use AI, you are glued to a screen: your phone or your laptop. That’s true even if you have one of those weird Friend pendants (because you have to text it). Perhaps the only non-screen device you can use AI with is the AR-free Meta Ray-Bans. Perhaps that’s why they are so popular…

Anyway, OpenAI's about to change all that.

The company is going all-in on screenless, audio-first AI with two new devices: an AI-powered pen codenamed “Gumdrop” and a separate portable audio device designed to be your voice-first AI companion (at least, if new leaks are to be believed…).

Here’s what we know:

The pen device, developed with former Apple design chief Jony Ive, will transcribe handwritten notes directly to ChatGPT and enable voice conversations with the AI.

Think of it like a smart pen that turns your scribbles into structured digital notes while you chat with an AI assistant about what you're writing.

It’s designed as a “third core device” alongside your laptop and phone, small enough to carry in a pocket or set on a desk.

In case you forgot, OpenAI acquired Ive's hardware startup io for ~$6.5B back in May 2025, bringing roughly 55 engineers and the designer behind the iPhone, iPad, and MacBook Air into the fold. The device will be manufactured by Foxconn in Vietnam, because OpenAI specifically didn't want Chinese manufacturers… perhaps for political reasons??

Here's the hardware lineup:

There are actually three concepts under evaluation, with the pen likely launching first, followed by a portable “to-go” audio device.

None will be earphones or traditional wearables… these are entirely new form factors.

All three are supposed to be “elegantly simple” and “playful,” according to Ive, and should provide a calmer experience than smartphones (without all the apps and constant notifications, I’m guessing).

The big audio overhaul: According to The Information, OpenAI has apparently unified multiple engineering teams to completely rebuild its audio AI from the ground up. They're building a new audio model architecture to launch in Q1 2026 that should fix everything frustrating about current voice AI:

More natural speech patterns (no more robotic pauses).

Faster response times (audio currently lags behind text-based models).

Real-time interruption handling so you can actually interrupt the AI mid-sentence, like a real conversation.

Better accuracy, matching text-based model performance.

The effort will be led by Kundan Kumar, a researcher OpenAI recruited from Character.AI specifically for this project. Apparently building good audio AI requires poaching talent from companies that already figured it out.

Why this matters: OpenAI is betting that the next phase of AI is about getting AI off the screen entirely, and TBH, we agree. You’ve probably heard us gush about voice-first AI interfaces for a long time now. Who actually likes to type?? Well, we do… but not for everything we need to do online all the time, anyway.

After watching devices like Humane's AI Pin and Rabbit R1 flop, OpenAI is taking a different approach: specific use cases (note-taking and more niche interactions) rather than trying to replace your phone. With Ive's design chops and $6.5B invested, they're positioning these as tools that complement your existing devices rather than compete with them… at least for now…

I guess the only open question is: what will they call the pen? My pitch: the “O-Pen.” LOL. GET IT?? O-Pen AI, the AI pen?! Okay, clearly I’m going stir-crazy on vacay over here…

FROM OUR PARTNERS

Spend $5. Build Software That Pays You Back

Most people use AI to experiment. A few use it to make money.

Emergent is for builders who want revenue, not demos. With a simple chat, build production-ready apps people actually pay for: SaaS, paid tools, internal utilities, or niche apps you can launch fast.

Build, test demand, charge users, iterate faster in 2026.

That’s why 2.5M+ founders use Emergent to turn ideas into revenue, faster than traditional MVPs.

Plans start at $5.

Prompt Tip of the Day

Make resolutions you can actually test (and quit guilt-free).

Most New Year’s goals are giant lifestyle overhauls… which is why they die around mid-January. This prompt flips it: you’re running 12 tiny experiments—low-cost, low-time, high-curiosity. If one flops, you learned something. If one clicks, you’ve got a new habit without forcing it.

The “12 tiny experiments” Prompt

Propose 12 small experiments I can try this year (one per month) focused on fun, learning, creativity, or relationships.

Each experiment must cost under $50 and take under 2 hours to start.

Include a clear starting step and what success looks like.

Then ask me which to keep, tweak or drop before we finalize the list.

Treats to Try

*Agent Bricks: High-quality AI agents on your data. Spot-checking doesn’t scale. Build reliable agents with built-in evaluation based on your data and your goals. See how Agent Bricks works.

LongCat-Video-Avatar generates expressive audio-driven character animation, including long video continuation.

Dedalus Labs gives you one endpoint to deploy an agent that can call tools (MCP servers), so you can wire up actions like “read from GitHub” or “send a Slack message” without building the plumbing yourself.

Design Arena lets you run head-to-head design battles and vote on winners so you can see what “wins” in real user taste (and climb the leaderboard).

Chatterbox is open-source TTS that supports tags like [laugh] and [cough] for more realistic voice agents; its Turbo version is optimized for low-latency speech.

VibeVoice-Realtime streams speech with low first-audio latency so agents can talk while the LLM thinks.

Around the Horn

Similarweb said ChatGPT’s share of genAI web traffic dipped below 70% over the past year while Gemini climbed toward ~20% (and Grok kept gaining).

ChatGPT ads were reportedly tested as sponsored units alongside answers. They would likely blur into recommendations and shopping flows, so transparency could be minimal and ads harder to recognize, especially for vulnerable users.

Mistral appeared to be testing a workflow builder + connectors for repeatable multi-step automations.

UK accounting org ACCA said it will largely halt remote exams from March 2026 after AI-assisted cheating got too hard to police.

IDC warned PC shipments could drop in 2026 as RAM pricing spikes and supply stays tight.

Related: NPR reported AI’s memory-chip demand could push up prices for consumer devices.

ByteDance reportedly planned to spend ~$14B on Nvidia AI chips in 2026.

VCs predicted enterprises would spend more on AI in 2026 while cutting vendor sprawl as pilots consolidate into a few default platforms.

FROM OUR PARTNERS

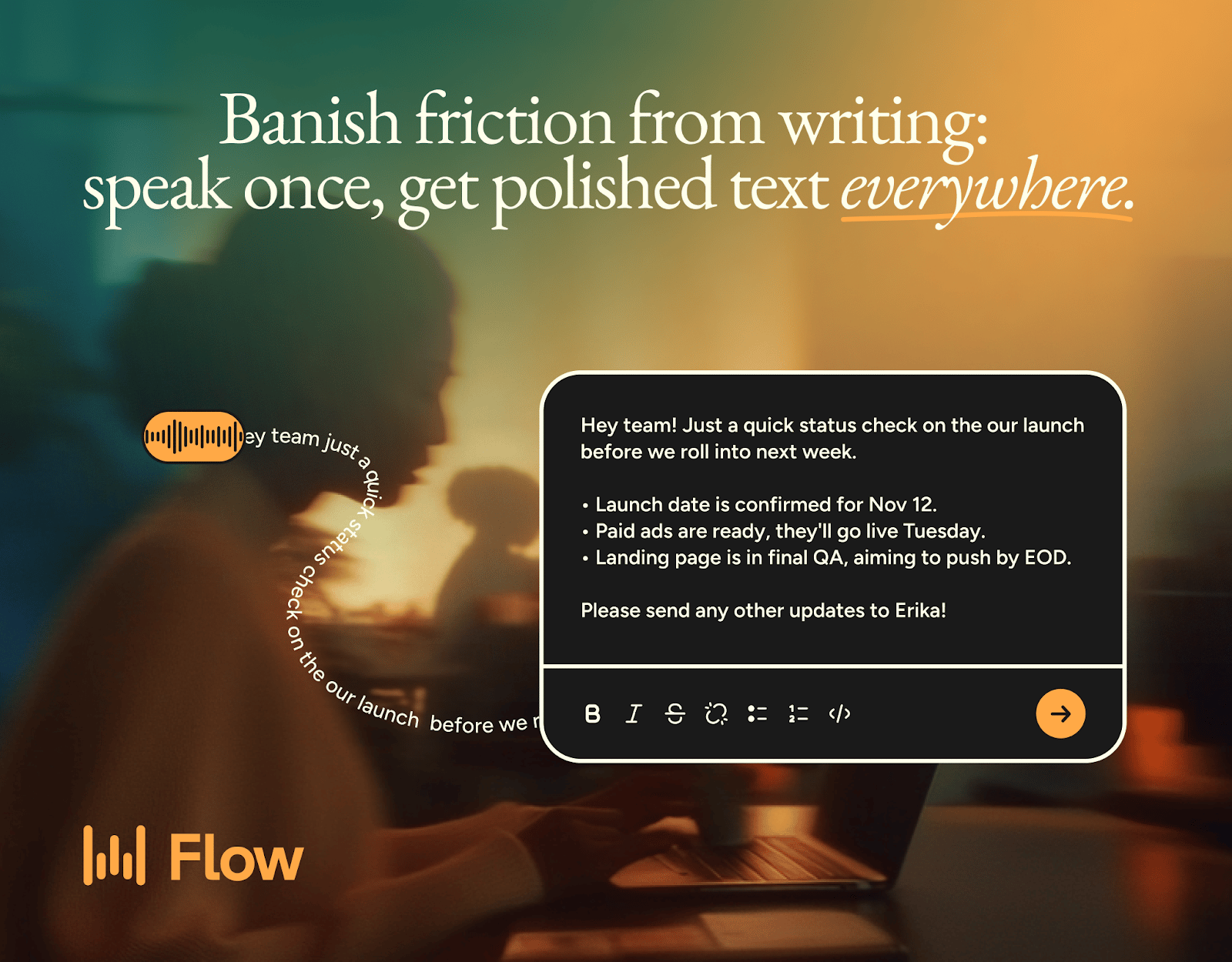

Ideas move fast; typing slows them down.

Wispr Flow turns your speech into clean, final-draft writing across email, Slack, and docs. It matches your tone, handles punctuation and lists, and adapts to how you work on Mac, Windows, and iPhone. No start-stop fixing, no reformatting, just thought-to-text that keeps pace with you. When writing stops being a bottleneck, work flows.

Intelligent Insights

OpusClip CEO Young Zhao shared how he built a $215M AI company from $0 without VC funding.

A single superconductor produced the quantum junction effect for the first time, breaking physics assumptions.

Steve Yegge explains “vibe coding” and argues that today's AI coding tools like Claude Code aren't truly there yet, because real vibe coding means zero latency and zero manual actions on your part while the AI handles everything from implementation to debugging to production.

Sankalp's guide to Claude Code 2.0 shares lessons from actually using coding agents in production, including how to structure projects, when agents fail, and what makes the difference between frustration and 10x productivity.

A linguist explains why everyone sounds like ChatGPT now in a TED talk examining how AI language patterns are seeping into human writing and speech, creating a feedback loop where people train on AI outputs that were trained on human outputs.

The UK AI Security Institute released its Frontier AI Trends Report, tracking compute scaling, model capabilities, safety developments, and deployment patterns across the industry with government-backed analysis of where AI progress is actually headed.

This piece on the 70% productivity myth argued AI gains often feel huge but don’t show up in real throughput because teams don’t measure the metrics that really matter: cycle time, rework, and output (plus defect rates and developer satisfaction).

Microsoft researcher Zeyuan Allen-Zhu launched a tutorial series on the “Physics of Language Models,” showing how large-scale training results are often artifacts of noise rather than real model capabilities, and demonstrating how to design synthetic pretraining playgrounds that expose architectural truths using 100M-parameter models instead of wasting resources on unreliable 8B-parameter runs.

That’s all for now.