Earlier this week I published the original analysis of Google's silent installation of a 4 GB Gemini Nano model on user devices, *Google Chrome silently installs a 4 GB AI model on your device without consent*. Yesterday I published a response to the public statements made by Parisa Tabriz, Google's "Boss" of Chrome, in which she asserted that users could simply opt out of that unsolicited installation: *Google's "Boss" of Chrome gaslights on unlawful Nano push*.

Today I want to show readers something quieter and, in some ways, more telling than either of the two preceding pieces. My thanks to "RaufLegend" on Reddit for bringing this to my attention.

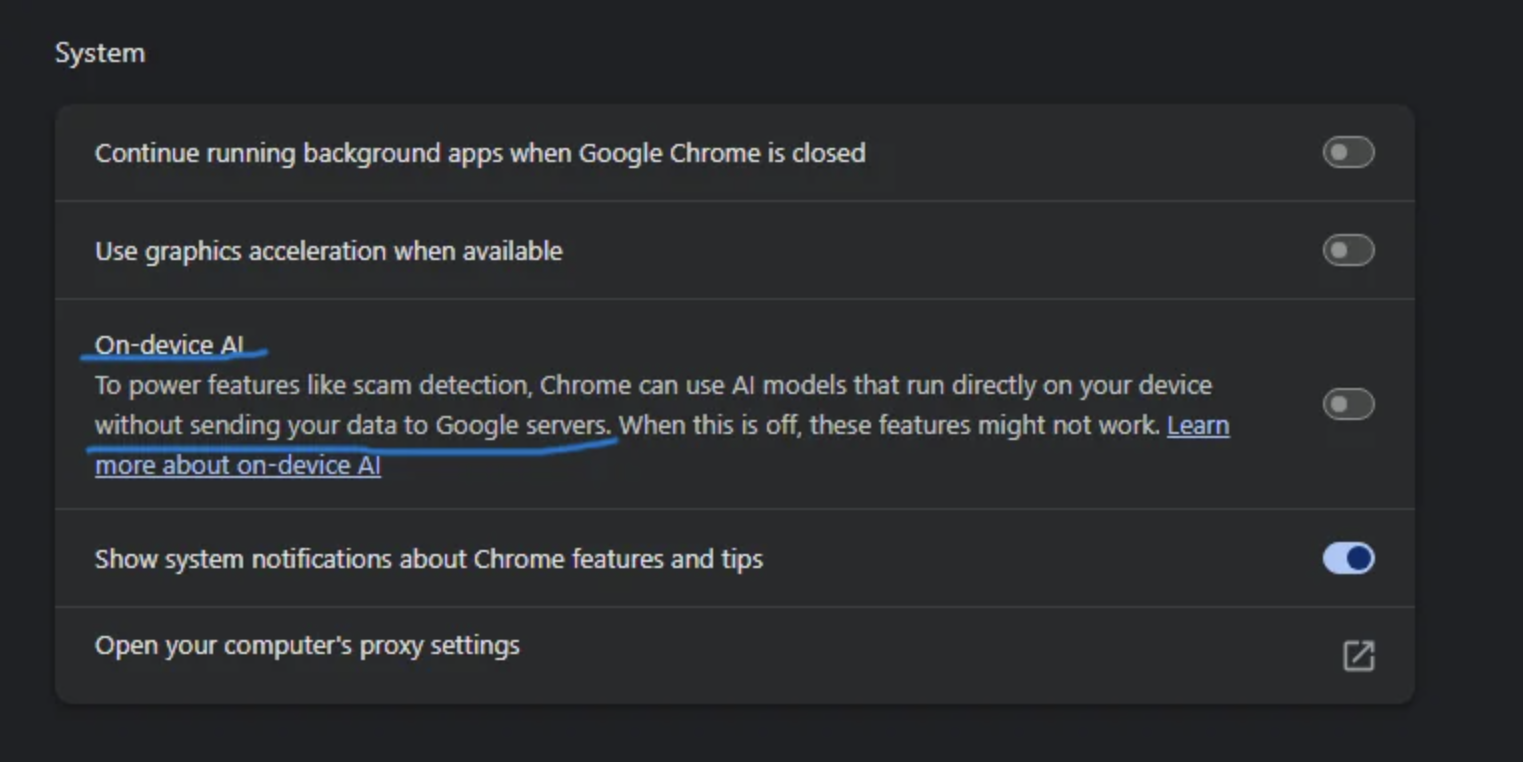

In an earlier version of Chrome's Settings UI, the on-device AI toggle lived inside the System block and carried the following description:

Read the underlined sentence again. "Without sending your data to Google servers." That is not marketing copy. That is a privacy representation. It is also a legal representation, because users in the EU, the UK and a number of other jurisdictions are entitled to rely on a vendor's stated processing claims when deciding whether to permit a particular processing activity. If a user leaves a default toggle in place because the vendor has assured them that no data leaves the device, the vendor is bound by that assurance.

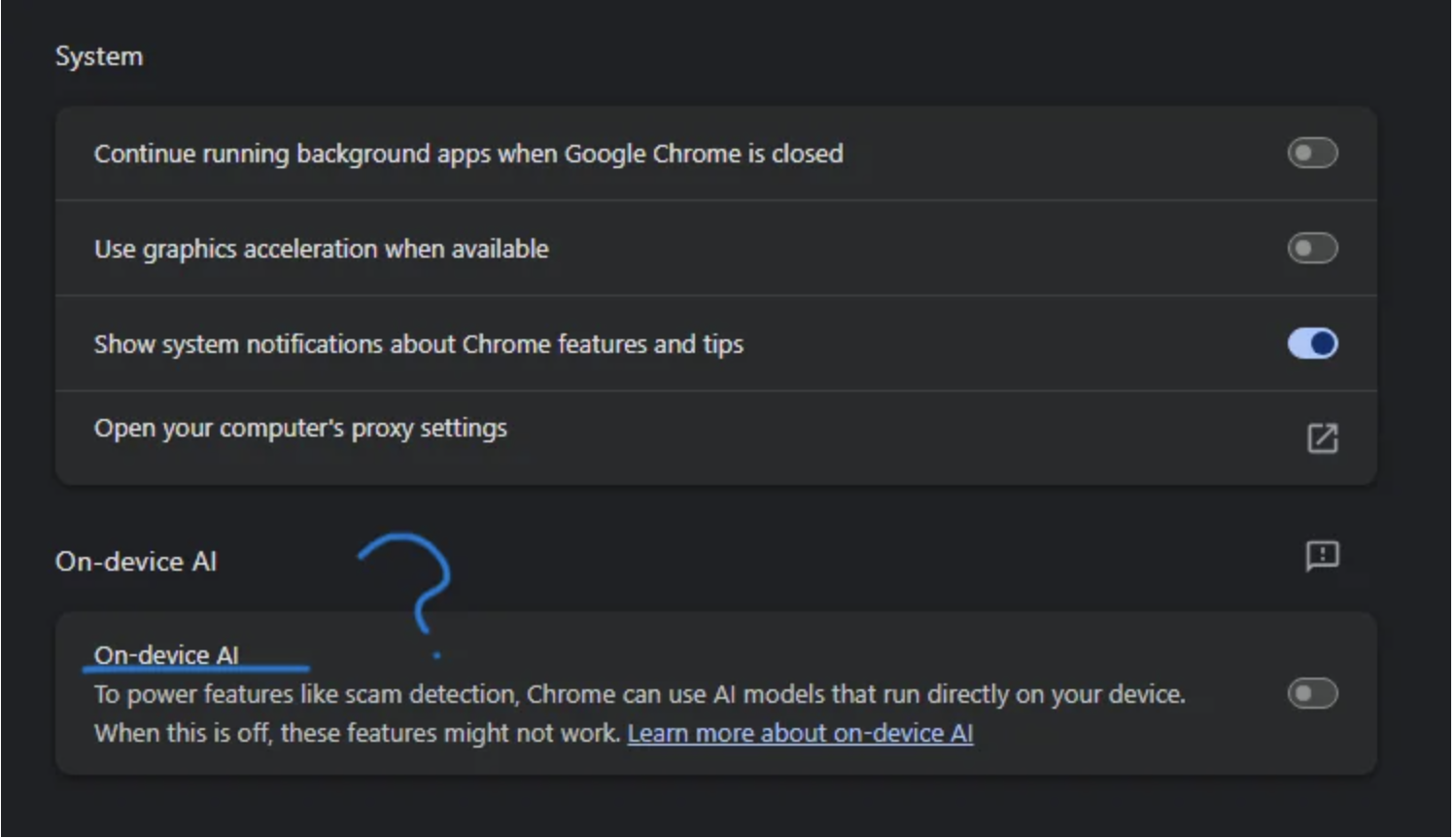

Here is the same screen in the current version of Chrome:

The assurance is gone. The sentence promising that the model runs locally without sending user data to Google's servers has been deleted from the Settings UI. At the same time the toggle has been moved out of the System block and given a dedicated section, which has the secondary effect of visually decoupling it from the surrounding device controls and reducing the chance that an ordinary user notices the change at all.

I want to be blunt. There are only three plausible reasons for a billion-user vendor to remove a specific privacy claim from a product surface, and each of them is a serious problem.

1. The claim was inaccurate when it was made. Data was being sent to Google's servers, or could be sent under conditions Google did not disclose, and the company is correcting the text before a regulator notices. If this is the explanation, every user who relied on the previous text had their data processed under a misrepresentation. That makes the processing unlawful under Article 6 of the GDPR for the entire window during which the assurance was published, and it makes the conduct actionable as a misleading commercial practice under the Unfair Commercial Practices Directive in the EU and as deceptive conduct under equivalent statutes in most other jurisdictions where Chrome is distributed.

2. The architecture is changing. The model now does, or shortly will, transmit user data to Google's servers, and the previous text would no longer be accurate going forward. If this is the explanation, the silent installation I documented on Monday becomes worse, not better. A 4 GB push that users did not authorise was justified to the press on the basis of a privacy promise that the vendor is now in the process of withdrawing.

3. Legal hedging. Google's counsel concluded that the original text was a binding representation the company was not prepared to defend in regulatory or civil proceedings, and recommended its removal. If this is the explanation, the deletion is itself an admission that the company is not confident the assurance was accurate, and the user is left with neither the assurance nor a way to verify what the model actually does on their device.

None of these explanations is good for users. Two of them are direct breaches of the GDPR, the ePrivacy Directive and consumer protection law. All three of them are acts of bad faith from a vendor that has, in the last seventy-two hours, also publicly asserted that users can simply opt out of an installation that they did not opt in to in the first place.

Whichever of the three explanations applies, the legal exposure is largely independent of intent. Under the EU Unfair Commercial Practices Directive (2005/29/EC), a commercial practice is a misleading action where it contains false information, or where, including in its overall presentation, it deceives or is likely to deceive the average consumer in relation to one or more material characteristics of the product, even where the information is factually correct (Article 6). A practice is a misleading omission where the trader omits or hides material information that the average consumer needs in order to take an informed transactional decision (Article 7). A privacy assurance about whether user data is transmitted to Google's servers is plainly material. The average user is unlikely to leave a default toggle in place, or to remain on Chrome at all, without that assurance. Publishing the assurance, allowing it to inform millions of installation and configuration decisions, and then silently removing it without notice or correction is, on its face, a misleading omission, and depending on the underlying facts a misleading action as well.

The same conduct is actionable in the United States under Section 5 of the Federal Trade Commission Act, which prohibits "unfair or deceptive acts or practices in or affecting commerce" (15 U.S.C. § 45). The FTC's deception standard, set out in the 1983 Policy Statement on Deception and applied consistently from Cliffdale Associates onward, requires three elements: a representation, omission or practice likely to mislead the consumer; a consumer acting reasonably in the circumstances; and materiality. All three are met here. The privacy assurance was a representation made on the vendor's own settings surface. Consumers acted reasonably in relying on it, because it was the vendor's own explicit description of the toggle's behaviour. And the assurance was material, because it was the very basis on which a privacy-conscious user would have decided whether to permit the feature on their device. Silently deleting the representation after consumers have already acted on it does not cure the deception. It compounds it.

There is a further European hook that deserves to be on the record. Under the Digital Markets Act (Regulation (EU) 2022/1925), Google is a designated gatekeeper, and Chrome was specifically designated as one of its core platform services in September 2023. Article 13(4) of the DMA is an anti-circumvention provision whose wording anticipates exactly this type of conduct: "The gatekeeper shall not engage in any behaviour that undermines effective compliance with the obligations of Articles 5, 6 and 7 regardless of whether that behaviour is of a contractual, commercial or technical nature, or of any other nature, or consists in the use of behavioural techniques or interface design." Quietly stripping a privacy assurance out of the gatekeeper's own settings UI is interface design used to frustrate informed consent, and Article 13(4) is, in my professional opinion, a clean fit. Article 5(2) of the DMA further requires the gatekeeper to obtain the user's consent, to the GDPR standard, before combining personal data from a core platform service with personal data from its other services. If the architectural change is the explanation for the text removal, that is to say data from the on-device model now is, or shortly will be, transmitted to Google's servers and combined with other Google service data, then Article 5(2) is engaged on its own terms, irrespective of how the conduct also reads under the GDPR or the ePrivacy Directive.

This is the pattern. Reach into the device. Flip the flag. Push the model. Tell the press the user can opt out. Quietly remove the privacy assurance from the Settings UI. Hope that nobody is paying attention.

So a direct question, addressed to Parisa Tabriz, "Boss" of Google Chrome.

Why was the sentence "without sending your data to Google servers" removed from the on-device AI description in Chrome's Settings UI? Was the previous text inaccurate? Has the architecture changed? Was the wording withdrawn on legal advice because Google was unwilling to defend it as a representation? These are not rhetorical questions. They are the questions a regulator will ask, and the answers will be on the record one way or another. It would be better for Google to put them on the record voluntarily, and now, than to wait for an enforcement notice to make the answers compulsory.

I would like Google to do three things, and I would like them to do these things in public.

1. Confirm whether the on-device model has, at any point in any Chrome build that exposed it, transmitted user data to Google servers. Confirm with telemetry, build-by-build, not with marketing copy.

2. Restore the privacy assurance text to the Settings UI in identical wording to the previous version, if and only if it remains accurate. If it is no longer accurate, say so plainly, and tell users on what date and in which Chrome build it stopped being accurate.

3. Switch the on-device model from a silent push to an explicit opt-in, with the lawful consent flow that the ePrivacy Directive and the GDPR have long required of anyone operating in the EU.

In my professional opinion the third demand is non-negotiable. Opt-out was never the legal standard here. The first two demands are the ones a regulator should be asking now, before any further public statements from Google about Gemini Nano are taken at face value.

The settings text was a representation. The removal is a signal. Read the signal.