Introduction

Computer vision and machine learning are increasingly transforming the analysis of planetary imagery, building on techniques first developed for biomedical and terrestrial remote sensing applications1,2,3. Convolutional neural networks (CNNs) have enabled the automated detection and classification of complex visual patterns in large data archives. Recent progress now allows such models to be deployed on lightweight, low-power hardware, supporting their use not only in ground-based data analysis but also in onboard “edge” computing for spacecraft autonomy.

The YOLO-ET (You-Only-Look-Once – ExtraTerrestrial) algorithm was originally developed for identifying and classifying micro- to millimetre-scale particles captured on Tanpopo silica aerogel collectors returned from Japan’s Kibo module on the International Space Station4,5,6. Its compact architecture, derived from TinyYOLOv27,8,9, permits real-time inference on resource-constrained systems. In the present work we adapt and extend this model to the detection of macroscale objects—specifically spacecraft hardware—on the lunar surface, under the designation YOLO-ETA (Extraterrestrial Artefact).

The Lunar Reconnaissance Orbiter Camera (LROC) Narrow Angle Camera has imaged the Moon continuously since 2009, providing a unique record of both natural and anthropogenic surface features at up to 0.25 m pixel⁻¹ resolution10,11,12,13,14,15,16. Identifying artificial objects within the resulting vast dataset remains a challenge owing to illumination variability, complex backgrounds, and the small pixel footprints of many targets17,18,19,20,21,22. While recent studies have begun to explore unsupervised anomaly detection using autoencoders or contrastive learning17, these approaches often demand substantial computational resources and do not yet deliver localisation or classification outputs interpretable by human analysts.

Our goal is to demonstrate that a lightweight, interpretable CNN can achieve practical accuracy in detecting known lunar artefacts and can assist in the search for undetected historical spacecraft. We train YOLO-ETA on Apollo landing-site imagery, evaluate its performance on unseen LROC tiles, including the Apollo 17 and Luna 16 sites, and then apply it to the still-unconfirmed landing area of Luna 9, the first successful soft lander on the Moon23,24,25,26,27,28,29,30. The ability to recover legacy artefacts has both scientific and operational importance: precise localisation enables contextual studies of regolith31 disturbance and surface reflectance modification by descent engines (see Clegg et al. 201432), and, more broadly, supports the emerging need to catalogue and monitor lunar surface assets as exploration activities intensify.

In addition to reporting candidate detections of the Luna 9 hardware, we examine the surrounding topography using Lunar Orbiter Laser Altimeter (LOLA) data to assess horizon geometry relative to the spacecraft’s surface panoramas (see Stooke 200733 as well as extensive subsequent public analyses and helpful later commentaries on his Mastodon.social blog). These analyses illustrate how human interpretation and automated learning can complement each other in refining hypotheses of historical landing-site locations, while demonstrating the broader utility of compact machine-learning models for future orbital surveys and autonomous operations on and around the Moon.

Results

Initial Detection of Possible Luna 9 Moon Landing Site

The YOLO-ETA model was used to survey a five-by-five-kilometre area around the historically uncertain coordinates of the Luna 9 landing site (7.08° N, –64.37° E; 19). LROC QuickMap tools12,34 were employed to identify and access high-resolution Narrow Angle Camera images covering this region under a range of illumination and phase conditions. Several bright features visible to the human eye were excluded by the model as natural craters, but several darker, geometrically distinct objects were identified as potential artefacts. See Methods for notes and illustrations on our use of the QuickMap tool for image selection.

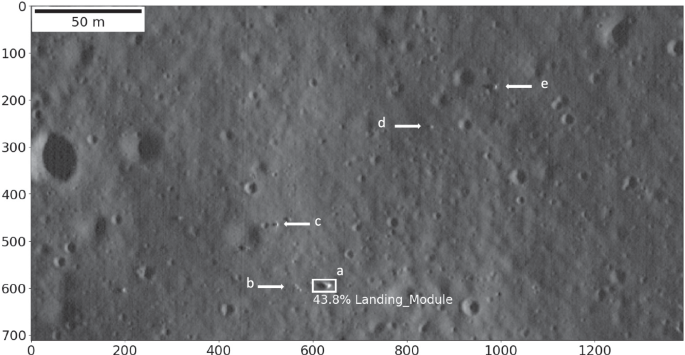

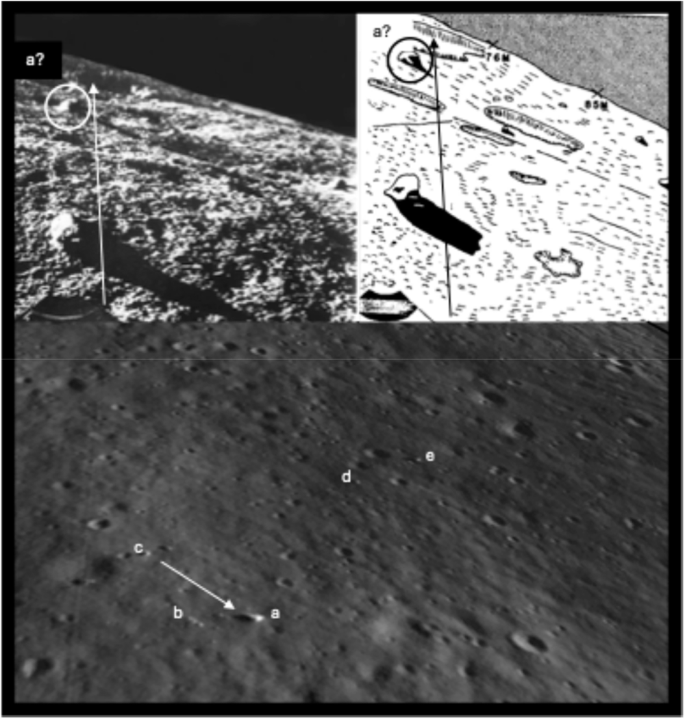

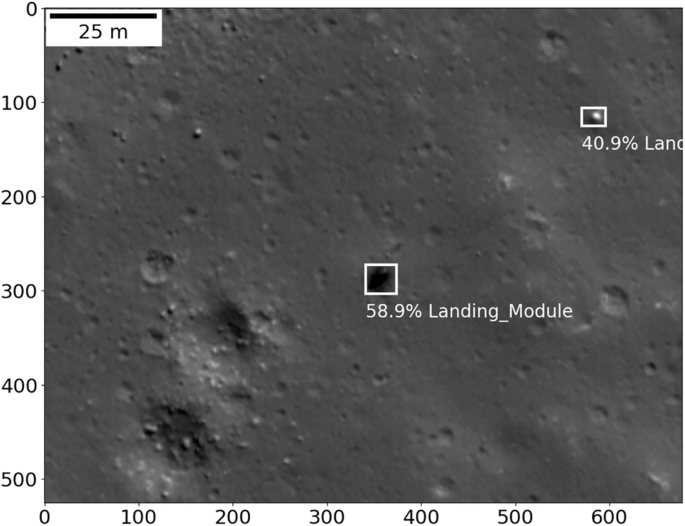

The model returned its first detection of an object classified as a landing module, centred near 7.029° N, –64.329° E, with a confidence score of 43.8% in LROC image M132071202LC (see Fig. 1).

Initial detection at (a) by the YOLO-ETA machine-learning model of spacecraft hardware at 7.02907° N latitude and -64.32867° longitude, approximately 2 km from the reported landing location of the heretofore undetected Luna 9 spacecraft at 7.08° latitude and -64.37° E longitude. Other potential spacecraft hardware objects, within approximately 100 m of each other, can be seen at (b–e); these also trigger detections by the model under differing illumination conditions and with image cropping and centring. The image is from LROC M132071202LC (NASA/GSFC/Arizona State University). Units on the coordinate axes are pixels, at a spatial resolution of 0.39 m/pixel.

Nearby are smaller objects, separated by less than 200 metres. Although the confidence levels vary, repeated identification of the same features under independent lighting geometries is a strong indicator that the algorithm has isolated real, persistent structures rather than illumination artefacts.
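As a quick check on the geometry, the separation between detection (a) and the historically reported Luna 9 coordinates can be computed with the haversine formula. This is a minimal sketch that assumes a spherical Moon of mean radius 1737.4 km and reads negative longitudes as degrees west; the function name `great_circle_km` is ours and is not part of any LROC tool.

```python
import math

MOON_MEAN_RADIUS_KM = 1737.4  # assumption: spherical Moon, mean radius


def great_circle_km(lat1, lon1, lat2, lon2, radius_km=MOON_MEAN_RADIUS_KM):
    """Haversine distance in km between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * radius_km * math.asin(math.sqrt(a))


# Detection (a) versus the historically reported Luna 9 coordinates
separation = great_circle_km(7.02907, -64.32867, 7.08, -64.37)
print(f"{separation:.2f} km")  # prints roughly 1.98 km
```

The same helper can be reused to check the quoted spacings between the candidate objects once their coordinates are read off the image.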

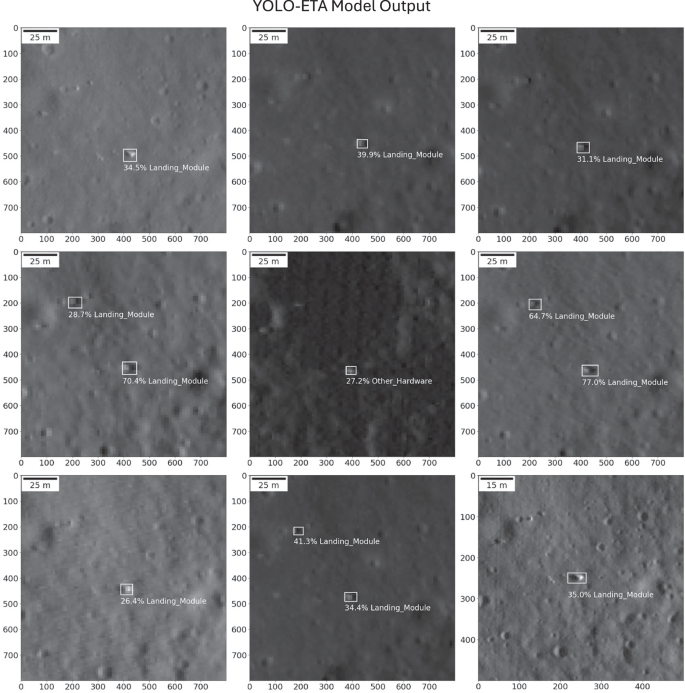

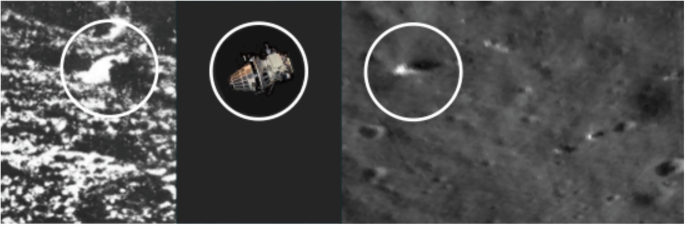

Feature Analyses Around the Candidate Luna 9 Coördinates

To further test the detection, a new QuickMap search was conducted around the candidate coordinates. Thirty-one LROC NAC image products were returned with overlapping coverage; of these, eight possessed both sufficient spatial resolution (≤ 0.4 m pixel⁻¹) and favourable illumination for model inference. In all eight, YOLO-ETA again detected the principal object, assigning confidence scores between 60% and 77%, and identified several nearby features that could represent associated hardware (Fig. 2). These results suggest that the model’s detections are robust to changes in illumination, incidence angle, and spacecraft altitude.

The final image (lower right) is a centred, closely cropped 500 × 500 pixel version of the original detection by the model. In this instance the detection confidence is about 8% lower, and the additional potential artefacts detected by YOLO-ETA in other images are not picked up; slightly wider context, giving the model more information to process, appears to boost performance, with optimal performance attained at the 800 × 800 pixel scale. From top left to bottom right: selections from LROC images M114376090R, M1145219961R, M1206434341L, M1173477859R, M1293465872L, M1413249630R, M1424979793R, M1160525686R and M132071202LC (NASA/GSFC/ASU).
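Because the amount of context affects confidence, surveying a five-by-five-kilometre area means cutting the imagery into tiles at a chosen scale. The sketch below shows one way to do this. It assumes 800 × 800 pixel tiles (the best-performing scale noted in the caption), a 100 pixel overlap so that objects on tile edges are seen whole at least once, and the 0.39 m/pixel resolution of M132071202LC. The overlap value and the helper names are illustrative assumptions, not the authors' survey pipeline.

```python
import math


def tile_origins(length, tile=800, overlap=100):
    """Start offsets along one axis so that tiles of `tile` pixels cover [0, length)."""
    if length <= tile:
        return [0]
    stride = tile - overlap
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # last tile sits flush with the image edge
    return starts


def tile_grid(width, height, tile=800, overlap=100):
    """Upper-left (x0, y0) corners for a full overlapping tiling of an image."""
    return [(x0, y0)
            for y0 in tile_origins(height, tile, overlap)
            for x0 in tile_origins(width, tile, overlap)]


# A 5 km x 5 km window at 0.39 m/pixel, treated as one square image
side_px = math.ceil(5000 / 0.39)
tiles = tile_grid(side_px, side_px)
print(side_px, len(tiles))
```

Each tile would then be passed to the detector separately, and detections near tile borders de-duplicated in full-image coordinates.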

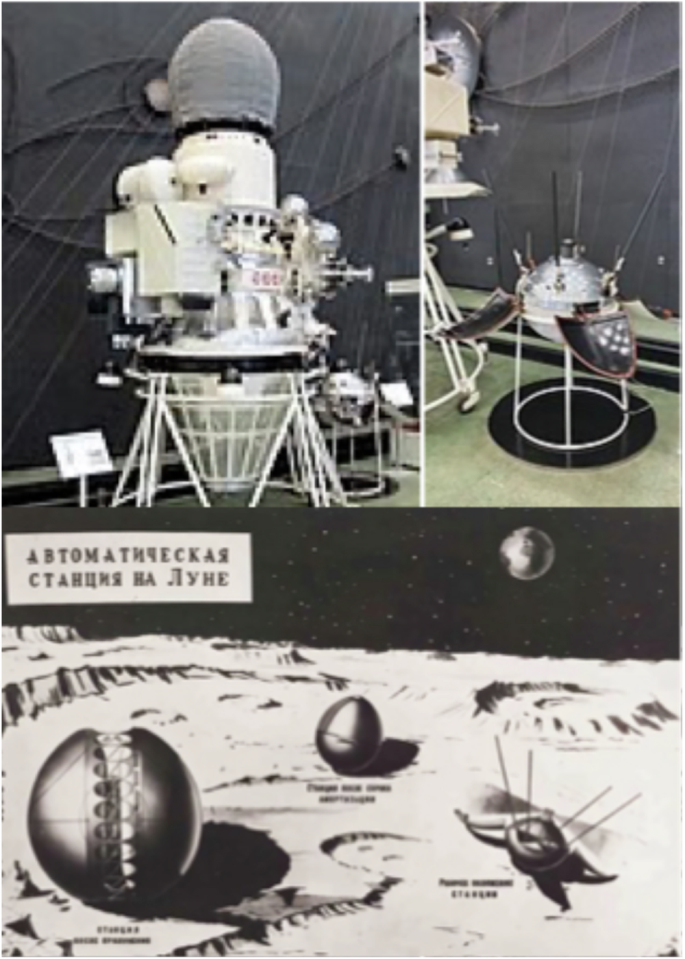

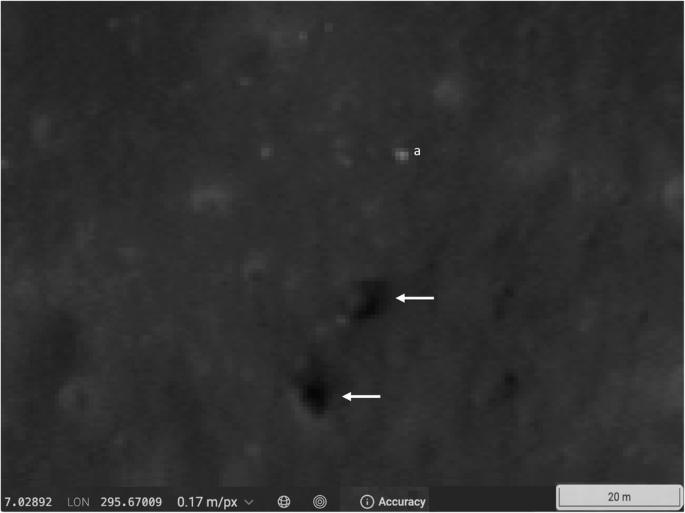

The spatial distribution of these features is broadly consistent with expectations from the Luna 9 landing sequence (see Fig. 3). Historical accounts indicate that the main spacecraft jettisoned its side modules and, roughly five metres above the surface, ejected the inflated, airbag-encased capsule23,24,25,26,27,28,29. The lander’s stabilising petals and antennas were deployed a few minutes after touchdown, and the separated flight stage likely impacted 100–200 metres downrange once its vernier engines ceased firing. The multiple detections by YOLO-ETA within this distance range could plausibly correspond to the lander and its ejected components. The surrounding area also contains two small dark patches, approximately 40 and 60 metres south-southwest of the principal detection, which correspond to five- and seven-metre craters visible only at high Sun angles (Fig. 4). While such features are not uncommon, their position relative to the detected artefacts suggests they might represent impact sites of the side modules, an interpretation that warrants further investigation.

Top left, pre-launch configuration of Luna 9 (Krassotkin, Wikimedia Commons, PD) in a mock-up displayed at the Kaluga exhibition; top right, deployed landing module mock-up at the Kaluga exhibition (Pbernardes, Wikimedia Commons, CC BY-SA 4.0); below, landing sequence schematic, illustrating from right to left the spacecraft’s final approach to the lunar surface after having jettisoned descent engines, the propelled release of the airbag-encased module (ejected upon surface-sensor detection at 5 m altitude), the ovoid spacecraft itself on the lunar surface, and the lander’s final deployment with petals and antennas extended four minutes after airbag ejection (from the archives of the Russian State Scientific and Technical Documentation Department). Possible associated artefacts in the vicinity of the Luna 9 soft lander include the main spacecraft’s ejected descent engines, the main spacecraft itself, the jettisoned airbags that surrounded the module, and elements of the module itself.

Evidence of Luna 9’s side modules might also be found in the wider area. As indicated by the arrows, two very unusual dark spots 40 m to 60 m south-southwest of the largest object (a) in Fig. 1 could indicate their presence. The dark material appears to be ejecta from two craters about 5 and 7 metres across, which would be consistent with the 150 kg side compartments descending in ballistic free fall, impacting at nearly 500 m s⁻¹, likely fragmenting, and producing a significant shift in regolith albedo (NASA/GSFC/ASU).
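The impact energy implied by these figures can be checked directly. This minimal sketch takes the 150 kg mass and the roughly 500 m s⁻¹ impact speed from the caption at face value and uses the conventional 4.184 MJ per kilogram of TNT only as a familiar yardstick; it says nothing about the resulting crater size, which would require a crater-scaling law not used here.

```python
TNT_J_PER_KG = 4.184e6  # conventional TNT energy equivalent, J/kg


def kinetic_energy_j(mass_kg, speed_m_s):
    """Kinetic energy 0.5 * m * v**2, in joules."""
    return 0.5 * mass_kg * speed_m_s ** 2


energy = kinetic_energy_j(150.0, 500.0)  # one side compartment, values from the caption
print(f"{energy / 1e6:.2f} MJ, about {energy / TNT_J_PER_KG:.1f} kg TNT")
```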

Candidate Luna 9 Site Preliminary Topographical Assessment

The Luna 9 surface panoramas show a strikingly flat horizon, with no visible hills or relief features above the skyline. On this basis, Stooke (ref. 33) and subsequent analyses have argued that the lander must have come to rest well away from the prominent hills near the canonical coordinates (7.08° N, -64.37° E), where such topography would necessarily appear on the horizon. At our candidate site near 7.03° N, –64.33° E, however, elevation profiles derived from LOLA data indicate that the terrain lies roughly 25 m above adjacent mare and forms part of a gently raised plain. For a camera positioned about one metre above a locally level surface, the geometric horizon distance, d ≈ √(2Rh) with lunar radius R ≈ 1737.4 km, is only about 1.9 km; measured against the adjacent mare 25 m below (an effective height of about 26 m), it extends to roughly 9–10 km. A ridge of height H drops below the line of sight at distances beyond about √(2Rh) + √(2RH), so for the one-metre case any ridge less than about 100 m high and more than about 20 km distant would be obscured. Contemporaneous Soviet and Lockheed analyses assume a close horizon, but the apparent absence of hills in the Luna 9 panoramas may be consistent with a site of modest elevation and distant surrounding relief, such as that indicated at our proposed coordinates.
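The horizon figures follow from simple spherical geometry: an object of height H stays hidden from an observer at height h once it lies farther away than the sum of their two horizon distances. The sketch below assumes a smooth sphere of radius 1737.4 km and ignores local relief; `horizon_km` and `hidden_beyond_km` are illustrative helper names.

```python
import math

MOON_MEAN_RADIUS_M = 1737.4e3  # assumption: smooth spherical Moon


def horizon_km(height_m, radius_m=MOON_MEAN_RADIUS_M):
    """Distance to the geometric horizon, sqrt(2*R*h + h**2), in km."""
    return math.sqrt(2 * radius_m * height_m + height_m ** 2) / 1000


def hidden_beyond_km(observer_m, object_m, radius_m=MOON_MEAN_RADIUS_M):
    """Range beyond which an object of height object_m sinks below the horizon."""
    return horizon_km(observer_m, radius_m) + horizon_km(object_m, radius_m)


print(f"camera 1 m up: horizon {horizon_km(1):.1f} km")
print(f"camera 26 m above mare: horizon {horizon_km(26):.1f} km")
print(f"100 m ridge hidden beyond {hidden_beyond_km(1, 100):.1f} km (1 m camera)")
```

With the 26 m effective height instead, the same 100 m ridge would only be hidden beyond about 28 km, which is worth keeping in mind when comparing elevated and level-plain scenarios.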

To look at the objects in perspective, a 3D rendering of LROC image M132071202LC was generated in the QuickMap Tile Server and rotated to approximate the viewpoint of the Luna 9 surface camera. Crater groupings and local terrain relief visible in the Luna 9 images show a tentative match with features at the candidate site, although resolution differences prevent precise correlation. Possible artefacts labelled (a) through (e) (see Fig. 5) correspond to the approximate scale and separation expected between the flight stage, landing capsule, and ejected components. The distance between (a) and (c) is roughly 50 metres, comparable to estimates from the lander’s own panoramas and Lockheed Electronics’ 1968 analysis of the Luna 9 photographs (Fig. 6).

Top left: one of the first images transmitted by Luna 9 (image courtesy: NASA and RIA Novosti). Top right: a contemporaneous Lockheed Electronics sketch interpreting the image; the Lockheed analysts posited that the ‘spherical’ object toward the top left of the image (circled) was part of the main spacecraft lander (image courtesy: NASA and Lockheed Electronics Company). Bottom: a QuickMap Tile Server rendering of the site from LROC image M132071202LC (NASA/GSFC/ASU), scaled and rotated to explore a tentative match of the terrain between the Luna 9 camera image and the LROC orthogonal view from orbit. Possible Luna 9 artefacts are labelled (a) through (e) as in Fig. 6. Assuming (c) represents the Luna 9 lander, the largest artefact identified by the model, at (a), could be the flight module, aligning (arrow) with the spherical object posited by the Lockheed analysts.

A conceptual rendering of how the Luna 9 flight module might appear from the lander’s camera (Wikimedia Commons PD and NASA/GSFC/ASU).

Taken together, these results identify a small cluster of features near 7.03° N, –64.33° E that display spatial and morphological characteristics consistent with spacecraft hardware. Confirmation will require targeted imaging by LRO or future orbiters at lower altitude, ideally under repeatable illumination conditions. Regardless of the final interpretation, this study demonstrates that lightweight convolutional models can detect subtle, possibly previously overlooked, surface artefacts and thus contribute to a systematic catalogue of both lunar heritage sites and the fast-expanding array of new artefacts destined for the Moon.

Discussion

This study demonstrates the feasibility of using a compact convolutional neural network to identify spacecraft hardware on the lunar surface in high-resolution orbital imagery. The YOLO-ETA model, trained on a modest dataset of Apollo landing-site images, achieves balanced precision–recall performance and shows consistent generalisation to unseen data, including successful detections of the Luna 16 lander. When applied to the long-standing problem of locating the Luna 9 spacecraft, the model detected a cluster of candidate artefacts near 7.03° N, –64.33° E that meet several independent plausibility tests: recurrence under different illumination geometries, spatial separations compatible with the expected dispersal of mission components, and local topography consistent with the flat horizon recorded in Luna 9’s surface panoramas. These results do not constitute definitive proof of the lander’s recovery, but they identify a credible site for targeted re-imaging.

The application of YOLO-ETA highlights the potential of lightweight, interpretable machine-learning systems for planetary exploration. Models of this type can be embedded in orbiters, landers, or small satellites to perform onboard detection and triage of surface features in near real time, reducing downlink requirements and enabling responsive mission operations. For future lunar activity, such systems could support three main objectives: (1) cataloguing historical artefacts and ensuring the preservation of lunar heritage sites, (2) providing situational awareness and navigation support in increasingly congested surface environments, and (3) assisting scientific investigations of regolith disturbance and albedo modification caused by landing and impact processes (Clegg et al. 201432).

The identification of possible Luna 9 hardware also underscores the value of combining automated methods with expert human analysis. Machine learning efficiently flags candidate anomalies, while domain expertise remains essential for physical interpretation and validation. This collaborative approach, already integral to terrestrial remote sensing, is well suited to the growing complexity of extraterrestrial data archives.

Further confirmation of the candidate Luna 9 site will require directed imaging by the Lunar Reconnaissance Orbiter or future orbiters equipped with comparable resolution, ideally under a range of illuminations including matched conditions. Ground-based radar observations or photometric studies could provide additional constraints. Regardless of the outcome, this work establishes that compact deep-learning architectures such as YOLO-ETA can extend beyond Earth orbit, enabling systematic surveys of the Moon’s technological artefacts and supporting the responsible exploration of our nearest celestial neighbour.

Methods

Lunar Reconnaissance Orbiter Data Set and Prior Work

The Lunar Reconnaissance Orbiter Camera (LROC) Narrow Angle Camera (NAC) has provided continuous imaging of the lunar surface since 2009, producing a dataset of more than two million image tiles at resolutions between 0.5 and 1.0 m per pixel and, through image stacking, as fine as 0.25 m per pixel10,11,12. These data record both natural and anthropogenic surface features, including Apollo descent stages, rovers and tracks, Surveyor and Luna spacecraft, and numerous rocket-stage impacts18,19. The scope of this archive—covering roughly 4 × 10¹⁰ m²—makes the systematic detection of technological artefacts a compelling, but computationally demanding, target for machine-learning methods.

We trained YOLO-ETA using LROC NAC imagery of the Apollo landing sites7,8,9,20,21,22,35. Compared with recent approaches employing unsupervised or semi-supervised methods such as Variational Autoencoders17, our model offers a scalable alternative that relies on compact convolutional architectures, standard GPU code, and commercially available software libraries. The emphasis is on algorithms suitable for future on-orbit or on-surface implementation within CubeSat-class spacecraft, landers, or rovers, where onboard computing power is limited but continuous visual analysis is desirable.

The LROC dataset poses challenges for computer-vision applications22: the imagery is not independently and identically distributed, illumination and phase angles vary widely, class imbalance is strong (for example, craters far outnumber boulders or artefacts), and the same object may appear at different pixel scales or in repeated passes under different conditions. Moreover, large tile sizes and the need for expert labelling complicate pre-processing and validation. These constraints underscore the importance of models capable of learning from limited data and generalising to new illumination geometries.

A relevant precedent is the work of Lesnikowski et al. (2020)17, who employed Variational Autoencoder techniques trained on Apollo site imagery to identify tiles likely to contain spacecraft hardware. Their unsupervised model could highlight tiles of interest but provided no localisation or classification outputs. By contrast, YOLO-ETA performs fully supervised detection, localisation, and classification in a single pass, demonstrating, for the first time, automated high-confidence identifications of spacecraft hardware within LROC NAC images. This approach constitutes a practical step toward edge-computing applications capable of real-time orbital video coverage and artefact detection across the lunar surface.

Preparing the Data for YOLO-ETA Implementation

Over its 16 years of orbital operations, the Lunar Reconnaissance Orbiter Camera (LROC) has acquired imagery under a wide range of illumination, phase, and altitude conditions. Such variability provides both a challenge and an opportunity for machine-learning applications. Elongated shadows of landers at low Sun angles, the sharp rectilinear outlines of spacecraft components, and variations in albedo caused by regolith disturbance or specular reflections all create characteristic signatures that can guide automated detection. These effects are particularly informative for convolutional models such as YOLO-ETA, which learn contrast- and geometry-based cues without the need for explicit parameterisation.

The YOLO-ETA model builds on the lightweight TinyYOLOv2 convolutional architecture, originally adapted in our previous YOLO-ET work for microscopic particle detection in Tanpopo silica-aerogel panels returned from the International Space Station4,6. The present implementation retrains this architecture on macroscale features—spacecraft hardware visible in LROC images—and introduces new annotations, bounding boxes, and hyperparameter tuning optimised for the lunar surface context. Parameter sweeps explored batch size, bounding-box intersection-over-union (IoU) thresholds, and anchor scaling to maximise detection accuracy while maintaining compact model size.

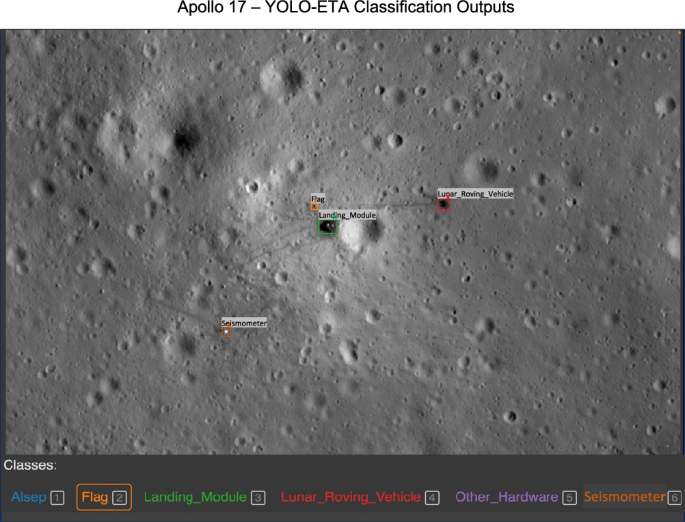

A combined dataset of 125 high-resolution images of Apollo landing sites (Apollo 11–17) was collected. Each image was manually annotated using the Widget BBox tool35. Initially we bounded and annotated Landing Modules and Lunar Roving Vehicles as well as smaller objects, including seismometers, ALSEP packages and flags, and included them in our training model (Fig. 7). However, the model seldom returned correct identifications for these individual small classes, despite experiments with hyperparameters, Intersection-over-Union (box overlap) thresholds and longer training. We concluded that these target objects occupied too few pixels in the images to be robustly distinguished. The model did, however, consistently pick them out as non-natural artefacts, so we aggregated them into a single class, Other Hardware.

The LROC website provides a useful guide to each landing site, with layers indicating key hardware elements, EVA traverses and stops, and the nomenclature of surrounding geological features. LROC Apollo 16 Image M175179080LR (NASA/GSFC/ASU).
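The class aggregation described above amounts to a many-to-one label mapping applied before training. A minimal sketch follows, assuming annotations are stored as dictionaries with a `label` key and a `coordinates` entry (a hypothetical layout; the Widget BBox export format is not described here) and with fine-grained label strings chosen for illustration. The `pixels_across` helper shows why small items are hard to classify: an object 1 m across at 0.5 m/pixel spans only two pixels.

```python
from collections import Counter

# Hypothetical fine-grained labels mapped onto the three training classes
FINE_TO_COARSE = {
    "Landing Module": "Landing Module",
    "Lunar Roving Vehicle": "Lunar Roving Vehicle",
    "Seismometer": "Other Hardware",
    "ALSEP package": "Other Hardware",
    "Flag": "Other Hardware",
}


def remap_labels(annotations):
    """Return copies of the annotations with fine labels merged into coarse classes."""
    return [{**ann, "label": FINE_TO_COARSE[ann["label"]]} for ann in annotations]


def pixels_across(size_m, resolution_m_per_px):
    """Approximate number of pixels spanned by an object of the given size."""
    return size_m / resolution_m_per_px


# Illustrative annotations only (made-up pixel boxes, not real data)
example = [
    {"label": "Landing Module", "coordinates": {"x": 410, "y": 388, "width": 40, "height": 36}},
    {"label": "Flag", "coordinates": {"x": 455, "y": 402, "width": 4, "height": 4}},
    {"label": "Seismometer", "coordinates": {"x": 470, "y": 415, "width": 3, "height": 3}},
]
counts = Counter(a["label"] for a in remap_labels(example))
print(counts)
print(pixels_across(1.0, 0.5))
```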

Given the limited number of available examples, standard augmentation techniques—horizontal and vertical flips, 90° rotations, and 1000 × 1000 pixel random crops—were applied to increase data diversity and reduce positional bias. The final dataset was divided into 80% training and 20% testing subsets. A concise summary is provided in Table 1, YOLO-ETA Training Dataset Composition.
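Flips, rotations and crops have to transform the bounding boxes together with the pixels. The NumPy sketch below assumes boxes are stored as (x_min, y_min, x_max, y_max) in pixel units and that a cropped box is kept only if at least half of its area survives, a threshold we chose for illustration. It is not the authors' preprocessing code.

```python
import numpy as np


def hflip(img, boxes):
    """Mirror left-right. boxes: float array (N, 4) of x_min, y_min, x_max, y_max."""
    w = img.shape[1]
    out = boxes.copy()
    out[:, 0], out[:, 2] = w - boxes[:, 2], w - boxes[:, 0]
    return img[:, ::-1], out


def vflip(img, boxes):
    """Mirror top-bottom."""
    h = img.shape[0]
    out = boxes.copy()
    out[:, 1], out[:, 3] = h - boxes[:, 3], h - boxes[:, 1]
    return img[::-1, :], out


def rot90_ccw(img, boxes):
    """Rotate 90 degrees counter-clockwise, consistent with np.rot90(img, k=1)."""
    w = img.shape[1]
    out = np.empty_like(boxes)
    out[:, 0], out[:, 2] = boxes[:, 1], boxes[:, 3]          # new x comes from old y
    out[:, 1], out[:, 3] = w - boxes[:, 2], w - boxes[:, 0]  # new y comes from old x
    return np.rot90(img, k=1), out


def _area(b):
    return (b[:, 2] - b[:, 0]) * (b[:, 3] - b[:, 1])


def random_crop(img, boxes, size=1000, min_keep=0.5, rng=None):
    """Random size x size crop; boxes are shifted, clipped, and dropped if mostly cut off."""
    if rng is None:
        rng = np.random.default_rng()
    h, w = img.shape[:2]
    ch, cw = min(size, h), min(size, w)
    y0 = int(rng.integers(0, h - ch + 1))
    x0 = int(rng.integers(0, w - cw + 1))
    shifted = boxes - np.array([x0, y0, x0, y0], dtype=boxes.dtype)
    clipped = shifted.copy()
    clipped[:, [0, 2]] = np.clip(shifted[:, [0, 2]], 0, cw)
    clipped[:, [1, 3]] = np.clip(shifted[:, [1, 3]], 0, ch)
    keep = _area(clipped) >= min_keep * _area(boxes)
    return img[y0:y0 + ch, x0:x0 + cw], clipped[keep]
```

Because each transform returns the new image and boxes together, they can be chained in any order before the random crop.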

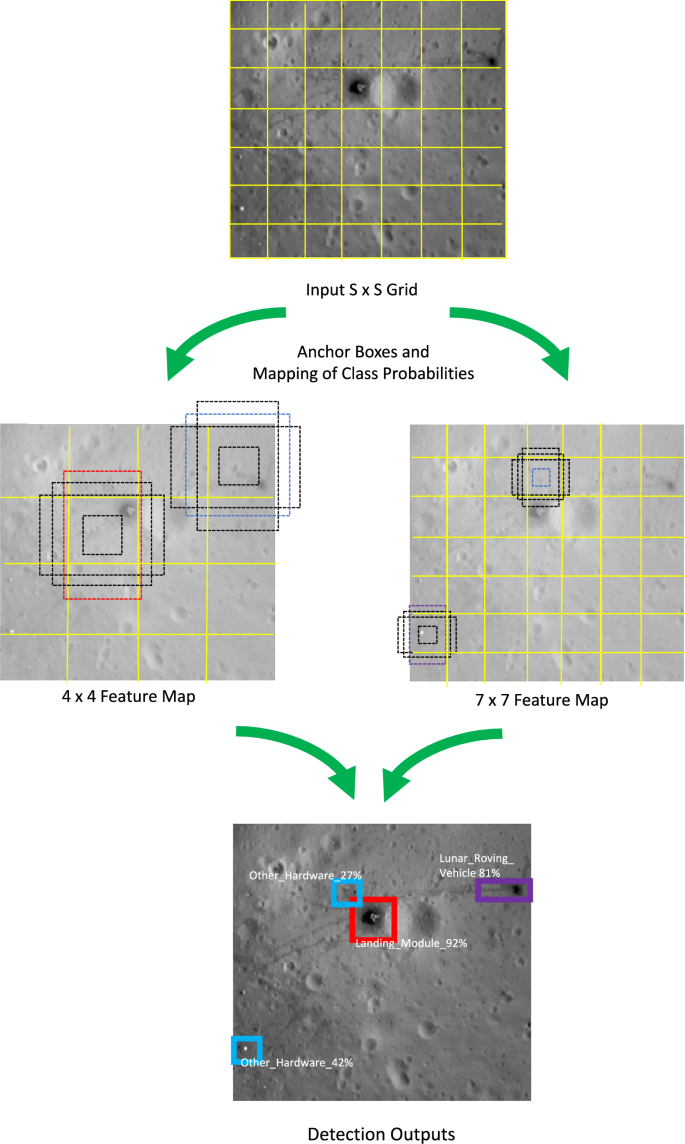

As with our earlier microscopic-particle model, YOLO-ETA processes each input image in a single network pass, simultaneously predicting object boundaries, classifications, and confidence scores. Input images are divided into an S × S grid, where S is the number of divisions along one side of the image; for example, S = 7 splits the image into a 7 × 7 grid of 49 equally sized cells. Each grid cell is responsible for objects whose centre falls within it and predicts a fixed number of bounding boxes, anchored to that cell, together with class probabilities for those boxes. The algorithm learns bounding-box coordinates relative to grid-cell positions at multiple feature-map scales (4 × 4 and 7 × 7). During annotation, some images contained multiple objects, while others contained none; the latter were intentionally retained to preserve realistic class imbalance and reduce bias toward over-detection (Fig. 8).

Feature maps at multiple scales, with coordinate predictions from different grid sizes, and class predictions (Landing Module, Lunar Roving Vehicle, and Other Hardware, also indicated by the box colours) are combined into a single detection output. Our algorithm learns to predict bounding-box coordinates directly relative to the location of 4 × 4 and 7 × 7 feature-map grid cells, along with the dimensions of the box anchored to each grid cell. YOLO-ETA is tuned to include in its detection output a single percentage confidence score that combines terms for bounding-box prediction accuracy, object presence probability, and strength of class prediction. LROC Apollo 16 Image M175179080LR (NASA/GSFC/ASU).
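The grid bookkeeping and the single confidence score can be illustrated with the YOLOv2 parameterisation on which TinyYOLOv2 is based. This is a sketch under that assumption: Turi Create's internal implementation may differ in detail, the anchor size is arbitrary, and the combined score is taken as objectness multiplied by class probability, which is how YOLO-style detectors usually form a class-specific confidence. `assign_cell` and `decode_box` are illustrative names.

```python
import math


def assign_cell(cx, cy, img_w, img_h, S):
    """Grid cell (row, col) responsible for a box centred at (cx, cy) pixels,
    plus the centre's fractional offset inside that cell."""
    gx, gy = cx / img_w * S, cy / img_h * S
    col, row = min(int(gx), S - 1), min(int(gy), S - 1)
    return row, col, gx - col, gy - row


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def decode_box(t, row, col, anchor_w, anchor_h, S):
    """YOLOv2-style decoding of raw outputs t = (tx, ty, tw, th, to, *class_logits).

    Returns (bx, by, bw, bh) as fractions of the image, the best class index,
    and a single confidence = objectness * class probability.
    """
    tx, ty, tw, th, to, *logits = t
    bx = (col + sigmoid(tx)) / S
    by = (row + sigmoid(ty)) / S
    bw = anchor_w * math.exp(tw) / S  # anchor sizes given in grid-cell units
    bh = anchor_h * math.exp(th) / S
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]  # numerically stable softmax
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(probs)), key=probs.__getitem__)
    return (bx, by, bw, bh), best, sigmoid(to) * probs[best]


CLASSES = ["Landing Module", "Lunar Roving Vehicle", "Other Hardware"]
print(assign_cell(400, 100, 800, 800, S=7))
box, cls, conf = decode_box([0, 0, 0, 0, 2.0, 3.0, 0.5, 0.1], row=0, col=3,
                            anchor_w=1.5, anchor_h=1.5, S=7)
print(CLASSES[cls], f"{100 * conf:.1f}%")
```

With zero offsets the decoded centre falls exactly in the middle of its cell, which is a useful sanity check when inspecting raw network outputs.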

The model was further optimised for high recall, ensuring that most true artefacts are captured even at the cost of a moderate false-positive rate, an acceptable trade-off when detections are subsequently verified by human analysts. This balance between recall and precision is quantified below.

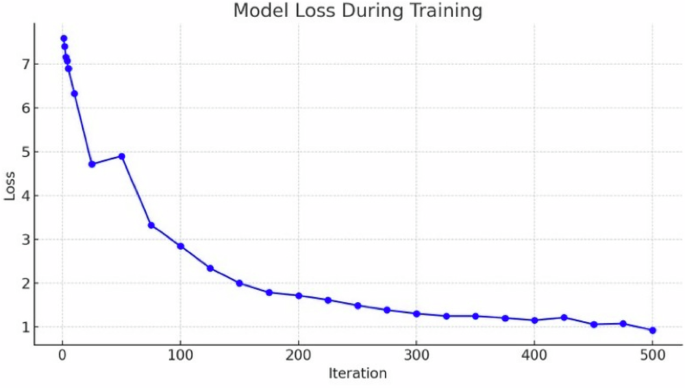

Measuring YOLO-ETA’s Performance

Training of the YOLO-ETA model followed standard supervised-learning procedures, using image–label pairs that specify bounding-box coordinates (x, y, w, h) and class membership for each annotated object. Each training iteration adjusted the network weights to minimise a composite loss function combining localisation, object-presence, and class-prediction terms4,7,8,32. A batch size of 32 and 500 iterations were found to provide stable convergence without over-fitting. The final training loss of 0.92, down from 7.59 at the start of training, indicates good agreement between predicted and ground-truth annotations on the training data. Details of the loss-function terms and convergence behaviour are given below.

YOLO-ETA Loss Function

The YOLO loss function combines terms for bounding-box prediction accuracy, object presence confidence, and class prediction, ensuring that the model is tuned across all aspects of object detection. In contrast to recent work on galaxy clusters36, the YOLO-ETA adaptation of our model retains the full YOLO loss function, exploiting YOLO’s ability to handle multiple objects of different classes in an image simultaneously. This has proven useful for aerogel-captured particles, where multiple particles may overlap or appear at different depths in the aerogel panel. For macroscale detection of spacecraft hardware on complex, monotone planetary surfaces under highly variable illumination, such as the Moon’s, these attributes are particularly important.

Our loss function and its terms follow refs. 4,5,7,8,13,36. The first two terms, weighted by λcoord, penalise errors in the position (x, y) and size (w, h) of predicted bounding boxes relative to the ground truth; these are crucial for precise localisation. The third term penalises errors in the object scores Ci, distinguishing between object presence and absence. The fourth term, scaled by λnoobj, specifically penalises false detections, and the final term assesses the classification error for each class c across the detected objects, ensuring accurate class predictions8.
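The equation itself is not reproduced in the text. Assuming YOLO-ETA keeps the sum-squared formulation of the original YOLO paper by Redmon et al., which the five-term description matches, it can be written as follows, with S² grid cells, B boxes per cell, hats marking predicted values, and the indicator 1_ij^obj equal to 1 when box j of cell i is responsible for an object (1_ij^noobj is its complement). The square roots on w and h make a given size error count more for small boxes than for large ones.

```latex
\begin{aligned}
\mathcal{L} ={}& \lambda_{\text{coord}} \sum_{i=1}^{S^2}\sum_{j=1}^{B} \mathbb{1}_{ij}^{\text{obj}}
  \left[(x_i-\hat{x}_i)^2 + (y_i-\hat{y}_i)^2\right] \\
&+ \lambda_{\text{coord}} \sum_{i=1}^{S^2}\sum_{j=1}^{B} \mathbb{1}_{ij}^{\text{obj}}
  \left[\left(\sqrt{w_i}-\sqrt{\hat{w}_i}\right)^2 + \left(\sqrt{h_i}-\sqrt{\hat{h}_i}\right)^2\right] \\
&+ \sum_{i=1}^{S^2}\sum_{j=1}^{B} \mathbb{1}_{ij}^{\text{obj}} \left(C_i-\hat{C}_i\right)^2
 + \lambda_{\text{noobj}} \sum_{i=1}^{S^2}\sum_{j=1}^{B} \mathbb{1}_{ij}^{\text{noobj}} \left(C_i-\hat{C}_i\right)^2 \\
&+ \sum_{i=1}^{S^2} \mathbb{1}_{i}^{\text{obj}} \sum_{c \in \text{classes}} \left(p_i(c)-\hat{p}_i(c)\right)^2
\end{aligned}
```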

Localisation is more important for the LROC Apollo images than for the Tanpopo aerogel colour images taken under a digital microscope4. In the Tanpopo images, particles and fragments stand out from the typically evenly hued blue and green backgrounds of the silica aerogels, so accurate discrimination between classes relied chiefly on the fourth and final terms of the loss function: the λnoobj-scaled term penalising false detections and the term assessing the classification error for each class c across the detected objects. For the LROC Apollo images, the first two terms, weighted by λcoord and penalising errors in the position (x, y) and size (w, h) of the predicted bounding boxes relative to the ground truth, are more crucial for precise localisation amid the many craters, shadows and other features surrounding the hardware on the Moon. The third term, which penalises errors in the object scores Ci and so distinguishes between object presence and absence, is relevant to both data sets7,8.
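To make the weighting concrete, here is a NumPy sketch of the same five-term loss for the simplified case of one predicted box per grid cell. The defaults λcoord = 5 and λnoobj = 0.5 are the values from the original YOLO paper, assumed here because the text does not state YOLO-ETA's settings, and the (S, S, 5 + C) tensor layout is our own choice rather than Turi Create's.

```python
import numpy as np


def yolo_loss(pred, target, lambda_coord=5.0, lambda_noobj=0.5):
    """Sum-squared YOLO loss with one box per cell (B = 1).

    pred, target: arrays of shape (S, S, 5 + C) holding, per grid cell,
    [x, y, w, h, C, p(c_1), ..., p(c_C)]; w and h must be non-negative.
    A cell is responsible for an object when its target confidence equals 1.
    Returns the total loss and a dict of the individual terms.
    """
    obj = target[..., 4] == 1.0
    noobj = ~obj
    xy = np.sum((pred[..., 0:2] - target[..., 0:2]) ** 2, axis=-1)
    wh = np.sum((np.sqrt(pred[..., 2:4]) - np.sqrt(target[..., 2:4])) ** 2, axis=-1)
    conf = (pred[..., 4] - target[..., 4]) ** 2
    cls = np.sum((pred[..., 5:] - target[..., 5:]) ** 2, axis=-1)
    terms = {
        "xy": lambda_coord * xy[obj].sum(),           # position error
        "wh": lambda_coord * wh[obj].sum(),           # size error
        "conf_obj": conf[obj].sum(),                  # object presence
        "conf_noobj": lambda_noobj * conf[noobj].sum(),  # false detections
        "class": cls[obj].sum(),                      # classification error
    }
    return sum(terms.values()), terms
```

Raising `lambda_coord` relative to the other weights shifts the optimisation toward localisation, the emphasis argued for the LROC images; raising `lambda_noobj` pushes harder against false detections, the emphasis described for Tanpopo.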

As in the Tanpopo work, various batch sizes were tested during training. Given the larger LROC images, we anticipated that larger batches, which demand more memory because more images are loaded simultaneously, might not be achievable, and that the resulting loss curve might be less smooth, indicating a less stable model. With the same batch size of 32, however, good convergence was achieved, with only a minor stutter in the curve and rapid convergence to a stable minimum of the loss function, without further memory trade-offs. With a batch size of 32 again balancing computational demands and learning stability, each epoch over the 1584 Apollo LROC training images comprises 1584/32 = 49.5, or approximately 50, iterations. Setting the maximum number of iterations to 500 therefore corresponds to roughly 500/50 = 10 epochs. After experimenting with various maximum iteration counts, 500 was found to be optimal, balancing model performance against computational efficiency (Fig. 9).
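The iteration and epoch bookkeeping can be written down directly. In Turi Create these two settings are, as we understand it, the `batch_size` and `max_iterations` arguments of `turicreate.object_detector.create`; the helpers below are plain arithmetic and do not call the library.

```python
def iterations_per_epoch(n_images, batch_size):
    """Number of mini-batches needed to see every training image once."""
    return n_images / batch_size


def epochs_completed(max_iterations, n_images, batch_size):
    """Passes over the training set implied by a fixed iteration budget."""
    return max_iterations * batch_size / n_images


print(iterations_per_epoch(1584, 32))             # 49.5
print(round(epochs_completed(500, 1584, 32), 2))  # 10.1
```

Using the unrounded 49.5 iterations per epoch gives slightly more than ten passes (about 10.1) over the training set.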

Halting training before overfitting (that is, before the model becomes excessively attuned to the training data) is particularly important for the Apollo site images, so that different types of spacecraft and spacecraft hardware can still be recognised in unseen scans of the lunar surface. Here, 500 iterations, compared with 2000 for Tanpopo, proved a useful basis for optimal model convergence.

As with Tanpopo, this choice was partly dictated by the Turi Create TinyYOLOv2 implementation, specifically its constraints on validation-set usage, which limited our ability to use traditional validation techniques to fine-tune the number of iterations. We therefore again relied on trial and error, together with runtime considerations, to determine the most effective training duration. Since the goal is automated on-device deployment in lunar orbit, this trial-and-error component of model creation is a practical trade-off: the balance of convenience and a reasonable degree of configurability makes YOLO-ETA’s implementation a practical tool for rapid development, while limiting more advanced experimentation and nuanced model optimisation. Evaluating the model on a test set that it has not encountered during training serves as a proxy for real-world, unseen data and gives a more realistic measure of real-world performance than the training set does.

The YOLO-ETA Turi Create training log shows the loss decreasing over iterations, a good sign that the model is learning from the training data: it starts at 7.5868 and falls to 0.920158 by iteration 500. The elapsed time per iteration is also consistent, indicating stable training performance (Fig. 9). Because the loss neither rises nor fluctuates significantly as training progresses, instability in the training process is unlikely; overfitting, which a falling training loss cannot by itself rule out, is assessed on the held-out test set. Based on our experience with the Tanpopo data sets, the first recourse for further improvement would be a more varied data set rather than an adjustment to the learning rate or a different optimisation algorithm.

The model’s performance was evaluated on the independent 20% test subset comprising 317 unseen LROC images. A detection was considered correct when the predicted bounding box achieved an Intersection-over-Union (IoU) > 0.5 with the ground-truth annotation. Evaluation metrics were computed as follows:

- True Positive (TP): Feature exists and is correctly detected.
- False Positive (FP): Detection reported, but no corresponding feature exists.
- False Negative (FN): Feature present but missed by the detector.
- Precision = TP / (TP + FP) – fraction of detections that are correct.
- Recall = TP / (TP + FN) – fraction of actual objects detected.
- F₁ = 2 · (Precision · Recall) / (Precision + Recall) – balanced measure of overall performance.

See Table 2 for YOLO-ETA performance on Apollo test data.
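The IoU matching rule and the metrics defined above can be sketched as follows. This assumes a single class (in practice matching is done per class), boxes given as (x_min, y_min, x_max, y_max), and greedy one-to-one matching in order of descending confidence, a common convention that the text does not specify; the function names are illustrative.

```python
def iou(a, b):
    """Intersection over Union of two boxes (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def match_detections(detections, truths, iou_threshold=0.5):
    """Greedy one-to-one matching; a detection is a TP if IoU > threshold.

    detections: list of (confidence, box); truths: list of ground-truth boxes.
    Returns (TP, FP, FN).
    """
    matched = set()
    tp = fp = 0
    for _, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best_k, best_iou = None, iou_threshold
        for k, truth in enumerate(truths):
            if k in matched:
                continue
            overlap = iou(box, truth)
            if overlap > best_iou:  # strictly greater, i.e. IoU > 0.5
                best_k, best_iou = k, overlap
        if best_k is None:
            fp += 1  # no unmatched ground truth overlaps enough
        else:
            matched.add(best_k)
            tp += 1
    return tp, fp, len(truths) - len(matched)


def precision_recall_f1(tp, fp, fn):
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


truths = [(0, 0, 10, 10), (20, 20, 30, 30)]
dets = [(0.9, (0, 0, 10, 10)), (0.8, (1, 1, 11, 11)), (0.7, (50, 50, 60, 60))]
tp, fp, fn = match_detections(dets, truths)
print(tp, fp, fn, precision_recall_f1(tp, fp, fn))
```

Note that a second, overlapping detection of an already-matched object counts as a false positive under this rule, which is one way duplicate boxes lower precision.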

YOLO-ETA performs best on Landing Modules, which have distinct geometry and larger pixel footprints. The higher FP rate in smaller classes reflects deliberate tuning of the model toward high recall, a design choice favouring potential detections for later human verification. The model’s balanced precision–recall F₁ ≈ 0.60 demonstrates that even with limited training data, a lightweight CNN can generalise effectively to unseen lunar imagery.

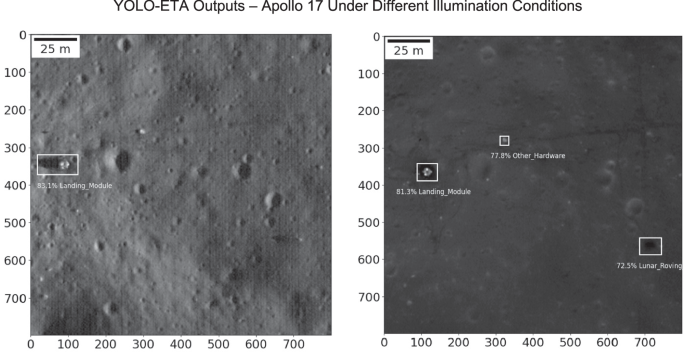

Although a few false positives were returned with high confidence, such as the Apollo 17 “Other Hardware” detection later verified as a rock (Fig. 10), these cases highlight the algorithm’s sensitivity to shadow geometry and albedo contrasts. We consider such instances valuable for iterative refinement rather than as model failures: adjusting thresholds to eliminate all false positives would risk suppressing genuine, but subtle, detections in unseen data.

In the left image (from LROC M131447374L), the elongated shadow of the descent stage is picked up together with the module itself and bounded and classed collectively as a Landing Module; the Lunar Roving Vehicle and other spacecraft hardware at known coordinates are not picked up, though the LRV in this image would also likely be a challenge for human detection. In the right image (from LROC M172717297R), under darker illumination, the algorithm assigns a slightly lower confidence score to the Landing Module detection but correctly picks up the LRV, while making an apparently spurious detection of a boulder as other spacecraft hardware; the algorithm appears to be ‘seeing’ contrast density, tracks or other indicators not readily distinguished by human observers. (NASA/GSFC/ASU) Units on the coordinate axes are pixels, at a spatial resolution of 0.22 m/pixel.

Implementing YOLO-ETA

After training, the YOLO-ETA model was applied to independent LROC NAC images to evaluate how well it generalises under varying illumination and viewing geometries. Test images covered the Apollo 17 landing site but came from orbits and Sun angles unseen by the model. The algorithm correctly detected and localised the Apollo 17 descent-stage landing module with confidence scores of up to 83%. Depending on illumination, the model sometimes grouped the elongated shadow of the module with the hardware itself as a single detection. This effect likely arises from YOLO-ETA’s sensitivity to sharp contrast boundaries. Under darker illumination, the model also identified the Lunar Roving Vehicle (LRV) and ancillary hardware, although with lower confidence. This suggests that the network associates contrast density and track morphology with anthropogenic features that are not always readily distinguishable by eye.

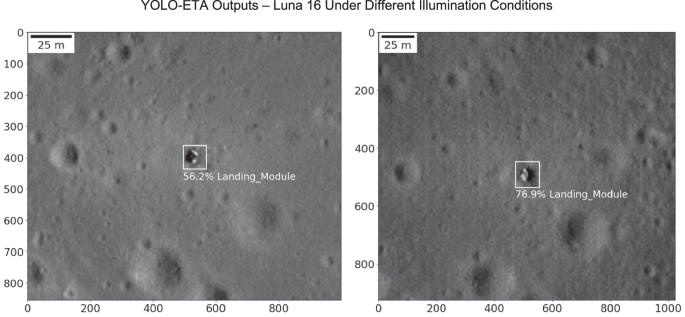

To test generalisation beyond the Apollo training sites, the trained model was applied without retraining to LROC NAC images of the landing site of Luna 16, the first robotic probe to return a lunar regolith sample37. Although the model had never encountered non-Apollo spacecraft during training, it successfully detected and localised the Luna 16 lander, classifying it as a Landing Module with confidence scores of up to 77% (Fig. 11). This independent test suggests that YOLO-ETA can recognise unfamiliar artefacts from learned spatial and radiometric cues rather than from memorised templates.

Though trained only on images of the Apollo landing sites, the model readily detects and localises the Luna 16 spacecraft, classifying it as a Landing Module with a confidence score of up to 76.9%. This score combines bounding-box prediction accuracy, object presence probability and strength of class prediction. (NASA/GSFC/ASU) Units on the coordinate axes are pixels, at a spatial resolution of 0.22 m/pixel.
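The caption’s combined score matches the YOLO formulation (refs 7, 8), where the class-specific confidence is Pr(Class_i | Object) · Pr(Object) · IoU. The sketch below decodes a single YOLOv2-style prediction. The box centre is σ(t_x) + c_x, the width is p_w·e^(t_w), the objectness σ(t_o) estimates Pr(Object)·IoU, and class probabilities come from a softmax. It assumes YOLO-ETA inherits these TinyYOLOv2 conventions, which is not stated in the text. The raw output values and the two-class list are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decode_prediction(t: List[float], cx: int, cy: int,
                      anchor: Tuple[float, float], classes: List[str]
                      ) -> Tuple[Tuple[float, float, float, float], Dict[str, float]]:
    """Decode one YOLOv2-style output vector t = [tx, ty, tw, th, to, class logits...]
    for grid cell (cx, cy) and anchor prior (pw, ph). Box values are in grid-cell units."""
    tx, ty, tw, th, to = t[:5]
    pw, ph = anchor
    box = (sigmoid(tx) + cx,       # centre x: offset constrained to lie inside the cell
           sigmoid(ty) + cy,       # centre y
           pw * math.exp(tw),      # width, scaled from the anchor prior
           ph * math.exp(th))      # height
    objectness = sigmoid(to)       # trained to estimate Pr(Object) * IoU(pred, truth)
    class_probs = softmax(t[5:])   # Pr(Class_i | Object)
    scores = {c: objectness * p for c, p in zip(classes, class_probs)}
    return box, scores


# Hypothetical raw outputs for one cell; class names follow those used in the text.
classes = ["Landing Module", "Other Hardware"]
box, scores = decode_prediction([0.0, 0.0, 0.0, 0.0, 2.0, 3.0, 0.0],
                                cx=6, cy=4, anchor=(1.5, 1.5), classes=classes)
print(box)  # prints: (6.5, 4.5, 1.5, 1.5)
print({c: round(s, 2) for c, s in scores.items()})
```

With these made-up values, the Landing Module score is roughly 0.84. Strong class evidence is discounted by the objectness term, so a confident class prediction alone does not yield a high final score.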

One objective of these experiments is to create an easy-to-implement, mobile system that can be deployed in space to detect objects autonomously in unseen data. YOLO-ETA is therefore optimised to train on a small number of images and to run a reduced number of convolutional layers. This yields fast, high-confidence identifications with modest computing resources (a few standard GPUs). At one extreme, YOLO models can generally be tuned to make successful identifications from a single (one-shot) training image. Conversely, the need for extensive training on hundreds of images can be a burden when implementing these types of CNN models. As an additional test, we therefore applied YOLO-ETA without further training to LROC tiles containing Surveyor 7. This is an especially challenging target given the surrounding boulder field, yet successful identifications were again made (Fig. 12). As in the Apollo landing-site experiments used to train the algorithm, the model is likely keying on the spacecraft’s distinctive shadow pattern to separate it from the surrounding boulders.

Without further training, YOLO-ETA correctly detects the Surveyor 7 lander with a combined confidence score greater than 58%, despite the complex, bouldery environment (a false-positive detection is made nearby at 40%). LROC image M175355093L (NASA/GSFC/ASU). Units on the coordinate axes are pixels, at a spatial resolution of 0.30 m/pixel.

QuickMap navigation

The model was next used in an exploratory search for unidentified artefacts in a 5 × 5 km area surrounding the historically uncertain coordinates of the Luna 9 landing site (7.08° N, –64.37° E)19. The search used the LROC QuickMap interface12,34 to access Narrow Angle Camera (NAC) image products covering this region under differing illumination and incidence angles. Several bright features are visible in the vicinity, but YOLO-ETA rejects them as spacecraft hardware, and they appear to be fresh craters. However, extending the search outward across multiple NAC image products produced a first detection. YOLO-ETA detected and localised a Landing Module (as trained on Apollo spacecraft) at 7.02907° latitude and -64.32867° longitude, with a confidence score of 43.8%, in LROC image M132071202LC. Similar, smaller objects of potential interest can also be seen by eye (see Fig. 1).
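To put the first detection in context, its offset from the historical Luna 9 coordinates can be estimated with the haversine great-circle formula. This sketch treats the Moon as a sphere with the IAU mean radius of 1737.4 km. It reads the quoted longitudes as signed east longitudes. The function name is ours, and this calculation is not part of the authors’ pipeline.

```python
import math

MOON_MEAN_RADIUS_KM = 1737.4  # IAU mean lunar radius; spherical-Moon assumption


def surface_distance_km(lat1: float, lon1: float, lat2: float, lon2: float,
                        radius_km: float = MOON_MEAN_RADIUS_KM) -> float:
    """Great-circle (haversine) distance between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * radius_km * math.asin(math.sqrt(a))


historical = (7.08, -64.37)       # historical Luna 9 coordinates quoted in the text
detection = (7.02907, -64.32867)  # first YOLO-ETA Landing Module detection
print(f"{surface_distance_km(*historical, *detection):.2f} km")  # prints: 1.98 km
```

The offset of about 2 km lies within the half-width (2.5 km) of the 5 × 5 km search box.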

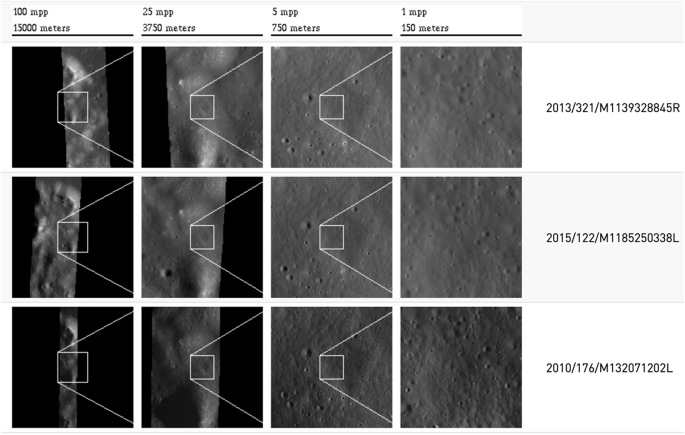

To investigate the potential landing site further and to test the YOLO-ETA model in the immediate vicinity of the initial detection, a pin was placed with the QuickMap drawing tool near the centre of the region of interest, at 7.03054° latitude and -64.32741° longitude. A search was then run for other NAC image products of similarly high resolution covering the pinned location. The search returns multiple images of the relevant LROC tiles containing the pinned point. Each comes with a progressive zoom from a 15 km frame at 100 m per pixel (mpp) down to a 150 m × 150 m frame of the area of interest, which helps locate that area within the full image tiles (Fig. 13).

The YOLO-ETA machine learning model identified an array of apparent spacecraft hardware centred around 7.03054° latitude and -64.32741° longitude at a resolution of 0.39 m/pixel. To test and validate this initial identification, a pin was placed at the centre of the area with the QuickMap drawing tool, and a search was run for similar Lunar Reconnaissance Orbiter Narrow Angle Camera image products. Thirty-one LROC NAC image tiles of the area of interest were returned (three representative examples are shown here). They were zoomed in from a 15,000 m frame at 100 m per pixel to a 150 m frame at 1 m per pixel. Individual images were then selected from each tile, further zoomed in to 0.22 m per pixel in 800 × 800-pixel increments, and input to the YOLO-ETA model (NASA/GSFC/ASU).
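QuickMap performs the pinned-location search interactively. Purely to illustrate the selection logic, the sketch below filters a catalogue of image footprints for those that contain the pin and meet a resolution cut. The record type, product IDs, footprint bounds and the 0.5 m per pixel cut-off are all hypothetical. Real NAC footprints are not axis-aligned latitude/longitude boxes. Only the pin coordinates come from the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Footprint:
    """Hypothetical, simplified image record: a lat/lon bounding box and pixel scale."""
    product_id: str
    lat_min: float
    lat_max: float
    lon_min: float  # signed east longitude, degrees
    lon_max: float
    m_per_px: float


def products_covering(pin_lat: float, pin_lon: float, catalogue: List[Footprint],
                      max_m_per_px: float = 0.5) -> List[str]:
    """IDs of products whose footprint contains the pin and whose scale is fine enough."""
    return [f.product_id for f in catalogue
            if f.lat_min <= pin_lat <= f.lat_max
            and f.lon_min <= pin_lon <= f.lon_max
            and f.m_per_px <= max_m_per_px]


# Invented records for illustration only.
catalogue = [
    Footprint("HYPOTHETICAL_A", 6.90, 7.20, -64.40, -64.30, 0.39),  # covers the pin
    Footprint("HYPOTHETICAL_B", 6.90, 7.20, -64.30, -64.20, 0.40),  # misses the pin
    Footprint("HYPOTHETICAL_C", 6.90, 7.20, -64.40, -64.30, 1.20),  # too coarse
]
print(products_covering(7.03054, -64.32741, catalogue))  # prints: ['HYPOTHETICAL_A']
```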

Of 31 image tiles examined, eight exhibited adequate resolution and lighting conditions for reliable inference. Across these, YOLO-ETA yielded multiple detections of candidate artificial objects, including the prominent feature centred near 7.03° N, –64.33° E with confidence scores up to 77%. These detections and their contextual analysis are described in detail in the Results section.
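One plausible reading of the “800 × 800-pixel increments” in the Fig. 13 caption is that each zoomed image was cut into 800 × 800-pixel crops for inference. The sketch below generates such crop windows and shifts edge windows inward so every crop is full-size. That edge-handling choice and the function names are assumptions, not the authors’ documented procedure. The example also gives a sense of scale: covering the whole 5 × 5 km search area at 0.22 m per pixel would take 841 crops.

```python
import math
from typing import Iterator, List, Tuple


def _starts(extent: int, size: int) -> List[int]:
    """Window start offsets along one axis; the last window is flush with the edge."""
    if extent <= size:
        return [0]
    starts = list(range(0, extent - size, size))
    starts.append(extent - size)  # shifted inward, so it may overlap its neighbour
    return starts


def tile_windows(width: int, height: int, size: int = 800
                 ) -> Iterator[Tuple[int, int, int, int]]:
    """Yield (x0, y0, x1, y1) crop windows covering a width x height image."""
    for y0 in _starts(height, size):
        for x0 in _starts(width, size):
            yield x0, y0, min(x0 + size, width), min(y0 + size, height)


# Scale check: the 5 x 5 km search area expressed at 0.22 m per pixel.
side_px = math.ceil(5000 / 0.22)  # 22728 pixels per side
n_crops = sum(1 for _ in tile_windows(side_px, side_px))
print(side_px, n_crops)  # prints: 22728 841
```

Shifting the final window inward, rather than zero-padding a partial crop, keeps every input the same size at the cost of some duplicated pixels along the image edges.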

Data availability

The trained model and all associated code needed to reproduce the results of this work are available at https://github.com/LewisJPinault/YOLO-ETA-Luna-9. The repository includes a README with an installation guide, the YOLO-ETA model, and a sample test image for running YOLO-ETA.

References

Rezaei, S. et al. Bridging gaps with computer vision: AI in (bio)medical imaging and astronomy. Astron. Comput. 51, 100921 (2024).

Kozlov, K. S. et al. Discovering organic reactions with a machine-learning-powered deciphering of tera-scale mass spectrometry data. Nat. Commun. 16, 2587 (2025).

Lieu, M. & Cheng, T.-Y. Machine learning methods in astronomy. Astron. Comput. 47, 100830 (2024).

Pinault, L. J., Yano, H., Okudaira, K. & Crawford, I. A. YOLO-ET: A Machine Learning model for detecting, localising and classifying anthropogenic contaminants and extraterrestrial microparticles optimised for mobile processing systems. Astron. Comput. 47, 100828 (2024).

Pinault, L. J. Searching for extraterrestrial artefacts on the Moon and in the solar system: detection strategies and techniques. PhD thesis, Birkbeck, University of London https://doi.org/10.18743/PUB.00054620 (2024).

Yamagishi, A. et al. Scientific targets of Tanpopo: astrobiology exposure and micrometeoroid capture experiments at the Japanese Experiment Module Exposed Facility of the International Space Station. Astrobiology 21, 1451–1460 (2021).

Redmon, J., Divvala, S., Girshick, R. & Farhadi, A. You only look once: unified, real-time object detection. In Proc. IEEE Conf. Comput. Vis. Pattern Recognit. 779–788 (2016).

Redmon, J. & Farhadi, A. YOLO9000: Better, faster, stronger. In Proc. IEEE Conf. Comput. Vis. Pattern Recognit. 7263–7271 (2017).

Jiang, P., Ergu, D., Liu, F., Cai, Y. & Ma, B. A review of YOLO algorithm developments. Procedia Comput. Sci. 199, 1066–1073 (2022).

Haase, I. et al. Mapping the Apollo 17 landing site area based on Lunar Reconnaissance Orbiter Camera images and Apollo surface photography. J. Geophys. Res. Planets 117, E00H20 (2012).

NASA Scientific Visualization Studio. Lunar Reconnaissance Orbiter (LRO) 10th Anniversary Video. https://svs.gsfc.nasa.gov/5001 (2024).

Robinson, M. S. et al. Lunar Reconnaissance Orbiter Camera (LROC) instrument overview. Space Sci. Rev. 150, 81–124 (2010).

Humm, D. C. et al. Flight calibration of the LROC Narrow Angle Camera. Space Sci. Rev. 200, 431–473 (2015).

Mahanti, P. et al. Inflight calibration of the Lunar Reconnaissance Orbiter Camera Wide Angle Camera. Space Sci. Rev. 200, 393–430 (2015).

Speyerer, E. J. et al. In-flight geometric calibration of the Lunar Reconnaissance Orbiter Camera. Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. XXXIX-B4, 511–516 (2012).

Robinson, M. S. Lunar Reconnaissance Orbiter Camera Experimental Data Record, LRO-L-LROC-2-EDR-V1.0, NASA Planetary Data System. https://lroc.im-ldi.com/data/ (2010).

Lesnikowski, A., Bickel, V. T. & Angerhausen, D. Automated discovery of anomalous features in ultralarge planetary remote-sensing datasets using variational autoencoders. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 17, 6589–6600 (2024).

Wagner, R. V. et al. Coordinates of anthropogenic features on the Moon. Icarus 283, 92–103 (2017).

Stooke, P. J. Identification of the SMART-1 spacecraft impact location on the Moon. Icarus 321, 112–115 (2019).

Pokorný, P. et al. Machine Learning Driven Detection Of 1 Billion+ Lunar Impact Craters In Permanently Shadowed Regions Using Shadowcam Data. https://ntrs.nasa.gov/api/citations/20250002414/downloads/MazaricoMachinePoster.pdf (2025).

Yeh, C. et al. Using publicly available satellite imagery and deep learning to understand economic well-being in Africa. Nat. Commun. 11, 2583 (2020).

Kerner, H. Machine Learning for Remote Sensing. https://ml-for-rs.github.io/iclr2025/ (2019).

NASA Space Science Data Coordinated Archive (NSSDC). Spacecraft Details: 1966-006A. https://nssdc.gsfc.nasa.gov/nmc/spacecraft/display.action?id=1966-006A (2024).

Anonymous. The first soft landing on the Moon. Proc. Acad. Sci. USSR Phys. Ser. 30, 741–744 (1966).

Anonymous. Results of television observations from the surface of the Moon. Rep. Acad. Sci. USSR 168, 1277–1280 (1966).

Sobornik (ed. Kemurdzhiana, A.L.) Movement over lunar and planetary surfaces. In Proc. 50th Anniversary of Lunar and Planetary Rovers Development, Moscow (IEEE, 1986).

Khartov, V. V. et al. New Russian lunar unmanned space complexes. Sol. Syst. Res. 45, 690–696 (2011).

Efanov, V. V. & Khartov, V. V. Proceedings of the 50th Sci. Conf. in memoriam of K. E. Tsiolkovskii and Stages of development of cosmonautics. In Abakukov, N. A., Sergeev, G. A. & Kanukov, L. N. (eds), 27–31 (Kaluga: Eidos, 2015).

Anonymous. Automatic interplanetary station “Luna-9”. Aviation Cosmonautics 3, 4–7 (1966).

Lockheed Electronics Company. Analysis of Luna 9 Photography. Prepared for Manned Spacecraft Center. https://ntrs.nasa.gov/api/citations/19720022465/downloads/19720022465.pdf (1968).

Hoppe, R. LROC QuickMap goes 3D! Lunar Reconnaissance Orbiter Camera News. https://www.lroc.asu.edu/news/977 (2017).

Clegg, R. N., Jolliff, B. L., Robinson, M. S., Hapke, B. W. & Plescia, J. B. Effects of rocket exhaust on lunar soil reflectance properties. Icarus 227, 176–194 (2014).

Stooke, P. J. The International Atlas of Lunar Exploration (Cambridge University Press, 2007).

Robinson, M. S. QuickMap – Try it out! Lunar Reconnaissance Orbiter Camera News. https://www.lroc.asu.edu/news/311 (2011).

Vasyukova, D. A Jupyter Widget For Annotating Images With Bounding Boxes. https://discourse.jupyter.org/t/a-jupyter-widget-for-annotating-images-with-bounding-boxes/9700 (2024).

Grishin, K., Mei, S. & Ilić, S. YOLO-CL: Galaxy cluster detection in the SDSS with deep machine learning. Astron. Astrophys. 679, A123 (2023).

Pillinger, C. T. & Gowar, A. P. The separation and subdivision of two 0.5 g samples of lunar soil collected by the Luna 16 and 20 missions. Philos. Trans. R. Soc. Lond. A 284, 137–143 (1977).

Acknowledgements

We thank our three reviewers, P. J. Stooke and two anonymous referees, for their insightful comments which have improved the manuscript. The authors also wish to thank the LROC QuickMap team at Arizona State University for the development of their highly useful image tools.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.


Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Pinault, L.J., Crawford, I.A. & Yano, H. Possible identification of the Luna 9 Moon landing site using a novel machine learning algorithm. npj Space Explor. 2, 4 (2026). https://doi.org/10.1038/s44453-025-00020-x


DOI: https://doi.org/10.1038/s44453-025-00020-x