Developers leave fingerprints in the code they write. Naming choices, indentation patterns, preferred APIs, and the way someone structures a loop or handles a pointer all carry traces of individual habit. Researchers have used these stylistic signals for years to identify the authors of anonymous code samples, sometimes with surprising accuracy. A team at the University of Massachusetts Dartmouth is now applying the same idea to a different question: can stylistic patterns also reveal which code is likely to contain security vulnerabilities?

Their model, called VulStyle, treats coding style as a signal alongside the code itself. The premise is that risky habits cluster. A developer who writes inconsistent buffer handling in one function may write it the same way elsewhere. Mixed naming conventions, unusual nesting, and irregular pointer use can correlate with the kinds of bugs that lead to memory corruption and other classic flaws in C and C++.

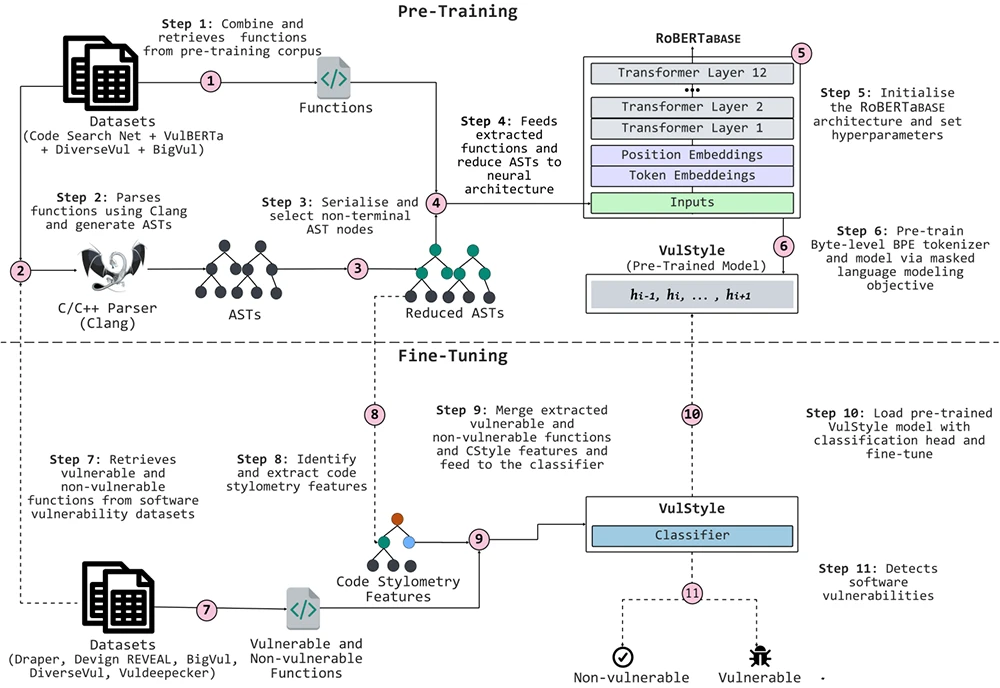

VulStyle’s approach (Source: Research paper)

A different angle on an old problem

Static analyzers have looked at vulnerable code for decades. More recent machine learning approaches have focused either on a program's tokens, meaning its keywords and operators, or on graph structures that capture how data and control flow through a function. VulStyle adds a third layer. It pulls out stylometric features such as expression types, declaration patterns, and statement structures, and combines them with a trimmed version of the code’s syntax tree and the original source text.
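To make the idea of stylometric features concrete, here is a minimal sketch that counts statement and expression types, measures nesting depth, and tallies naming conventions. It is an illustration under stated assumptions, not VulStyle's pipeline: it parses Python with the standard `ast` module as a stand-in for the C and C++ code the research targets, and the feature names, regular expressions, and `SAMPLE` function are all hypothetical.

```python
import ast
import re
from collections import Counter

# Hypothetical naming-convention patterns, chosen for this illustration only.
CAMEL = re.compile(r"^[a-z]+(?:[A-Z][a-z0-9]*)+$")
SNAKE = re.compile(r"^[a-z_][a-z0-9_]*$")

# Node types that count as one level of nesting in this sketch.
NESTING_NODES = (ast.If, ast.For, ast.While, ast.With, ast.Try)


def naming_style(name: str) -> str:
    """Classify an identifier as camelCase, snake_case, or other."""
    if CAMEL.match(name):
        return "camel"
    if SNAKE.match(name):
        return "snake"
    return "other"


def max_nesting(node: ast.AST, depth: int = 0) -> int:
    """Return the deepest level of nested loops and conditionals under node."""
    deepest = depth
    for child in ast.iter_child_nodes(node):
        child_depth = depth + 1 if isinstance(child, NESTING_NODES) else depth
        deepest = max(deepest, max_nesting(child, child_depth))
    return deepest


def style_features(source: str) -> dict:
    """Extract a few simple style counts from one piece of source code."""
    tree = ast.parse(source)
    statements = Counter()
    expressions = Counter()
    identifiers = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.stmt):
            statements[type(node).__name__] += 1
        elif isinstance(node, ast.expr):
            expressions[type(node).__name__] += 1
        # Collect distinct parameter names and assigned variable names.
        if isinstance(node, ast.arg):
            identifiers.add(node.arg)
        elif isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            identifiers.add(node.id)
    return {
        "statements": statements,
        "expressions": expressions,
        "naming": Counter(naming_style(name) for name in identifiers),
        "max_nesting": max_nesting(tree),
    }


# Made-up sample that deliberately mixes naming conventions.
SAMPLE = '''
def copy_items(src, dstBuf):
    totalCount = 0
    for item in src:
        if item:
            dstBuf.append(item)
            totalCount += 1
    return totalCount
'''

features = style_features(SAMPLE)
```

On this sample the sketch reports a nesting depth of 2 and an even split between camelCase and snake_case identifiers, the kind of mixed convention the article mentions. A real system would presumably feed such counts to a model alongside the syntax tree and tokens rather than reading them directly.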

The model was pre-trained on roughly 4.9 million functions across seven programming languages, then fine-tuned on five widely used vulnerability detection datasets. On some of those benchmarks, the combination of style, structure, and tokens performed better than detectors that relied on tokens alone. The authors report that style and structure complement each other: structural information anchors what the code does, and stylistic signals capture how the developer tends to write it.

The benchmark problem behind the numbers

The same paper points to a deeper issue in the field. VulStyle posts strong scores on some datasets and much weaker scores on others. On DiverseVul, a benchmark designed to address shortcomings in earlier vulnerability datasets, the model’s F1 score drops sharply. The authors cite recent work showing that several popular vulnerability detection benchmarks contain noisy labels that can inflate reported performance. Research in adjacent ML security domains shows the same pattern: a 2026 study found that ML-based malware detectors trained on one dataset frequently stumble when tested on another, even when both use the same standardized feature format.

For practitioners, this gap is the more useful finding. It suggests that headline accuracy figures in this corner of security ML often say more about dataset construction than about real-world detection capability. A model that performs well on one benchmark may behave very differently on code drawn from a different source.
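The effect of label quality on a headline score can be shown with a small worked example. The sketch below computes F1 from scratch and scores one fixed set of detector outputs against two label sets for the same functions. All values are made up for illustration and do not come from the paper or any benchmark; the variable names are hypothetical.

```python
def f1_score(labels, predictions):
    """F1 for the positive class (1 = vulnerable), computed from raw counts."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy data, invented for this example: one detector's outputs on eight functions.
predictions = [1, 1, 0, 1, 0, 0, 1, 0]

# Hypothetical noisy labels that happen to agree with the detector's habits.
noisy_labels = [1, 1, 0, 1, 0, 0, 0, 0]

# Hypothetical re-audited labels for the same eight functions.
cleaned_labels = [1, 0, 0, 0, 1, 1, 1, 0]

f1_noisy = f1_score(noisy_labels, predictions)      # 6/7, about 0.86
f1_cleaned = f1_score(cleaned_labels, predictions)  # 0.5
```

The detector has not changed between the two scores. Only the ground truth has, which is why a single benchmark figure says little without knowing how its labels were built.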

Open questions for security teams

The stakes for getting vulnerability detection right are rising. A recent Cloud Security Alliance briefing describes the shrinking window between vulnerability discovery and working exploit, driven in part by autonomous AI systems capable of finding zero-days at scale.

Two limitations of the approach stand out. The first is adversarial. The authors argue that style-aware detection is harder to evade because an attacker would need to coordinate changes across tokens, structure, and stylistic patterns at once, but they did not test this claim empirically. A determined attacker could run vulnerable code through a formatter, rename variables, and restructure expressions to see whether style-based signals survive. That experiment remains open.
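A rough version of that evasion test can be sketched in a few lines. The example below renames every parameter and assigned variable to a neutral placeholder, then checks what survives. It is an assumption-laden illustration, not an experiment from the paper: it again uses Python's `ast` module (with `ast.unparse`, available from Python 3.9) in place of a C or C++ toolchain, and `normalize`, `statement_shape`, and `SAMPLE` are hypothetical names.

```python
import ast
from collections import Counter


def bound_names(tree: ast.AST) -> list:
    """Distinct parameter and assigned-variable names, in first-seen order."""
    names = []
    for node in ast.walk(tree):
        if isinstance(node, ast.arg):
            names.append(node.arg)
        elif isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            names.append(node.id)
    return list(dict.fromkeys(names))  # drops duplicates, keeps order


class Renamer(ast.NodeTransformer):
    """Rewrite identifiers according to a fixed old-name to new-name mapping."""

    def __init__(self, mapping):
        self.mapping = mapping

    def visit_arg(self, node):
        node.arg = self.mapping.get(node.arg, node.arg)
        return node

    def visit_Name(self, node):
        # Names not in the mapping (for example builtins) are left alone.
        node.id = self.mapping.get(node.id, node.id)
        return node


def normalize(source: str) -> str:
    """Return source with local identifiers replaced by v0, v1, ..."""
    tree = ast.parse(source)
    mapping = {name: f"v{i}" for i, name in enumerate(bound_names(tree))}
    return ast.unparse(Renamer(mapping).visit(tree))


def statement_shape(source: str) -> Counter:
    """Count statement types, a structural feature that ignores names."""
    return Counter(
        type(node).__name__
        for node in ast.walk(ast.parse(source))
        if isinstance(node, ast.stmt)
    )


# Made-up sample with mixed naming conventions.
SAMPLE = '''
def copy_items(src, dstBuf):
    totalCount = 0
    for item in src:
        if item:
            dstBuf.append(item)
            totalCount += 1
    return totalCount
'''

normalized = normalize(SAMPLE)
```

After normalization the mixed naming convention is gone, while the statement counts are unchanged. That hints at which style signals a trivial rewrite can erase and which it cannot, though whether a trained detector's predictions shift is the question the authors leave open.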

The second limitation is more pressing for anyone watching how software is written in 2026. Stylometric signals depend on the existence of an individual style. Code generated by LLMs tends to be uniform, formatted, and stripped of personal habit. The authors acknowledge that stylometry collapses on templated and auto-generated code. With LLM-assisted development now common in production repositories, the window in which developer style remains a useful signal may be narrowing.

What it means in practice

VulStyle remains a research model at this stage. Its results suggest that stylistic patterns carry information about risk, and that combining them with structural and lexical features can sharpen detection on certain classes of bugs. The work is a reminder that signal sources matter, dataset choices matter more, and the assumption that any single benchmark reflects production conditions deserves scrutiny.
