Versatile, ready-to-run AI models open infinite possibilities

Accelerate development with a wide range of AI models optimized for the integrated NPU of VENTUNO Q – or customize them for your unique challenges! Powered by Edge Impulse and Qualcomm® AI Hub, this is your gateway to extensive AI libraries.

Local LLMs

Use Qwen for sophisticated natural language understanding entirely on-device, without cloud dependencies or data transmission.

Local VLMs

Qwen VLM combines visual perception with natural language understanding for image captioning, scene description, OCR, and more.

TTS & ASR

Leverage Melo TTS and Whisper to let offline devices understand natural speech, transcribe it, and give human-like responses.

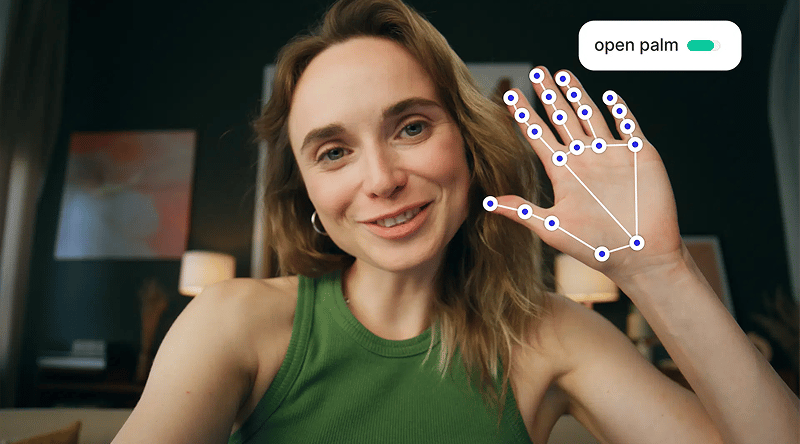

Gesture Recognition

Recognize hand gestures, finger movements, and sign language for touchless interfaces and human-robot interaction using MediaPipe.

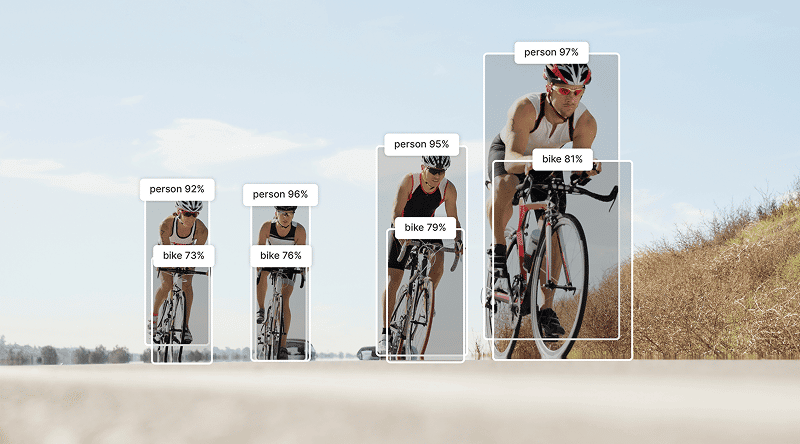

Object Tracking

Use YOLOX (You Only Look Once - eXtended) to track people, vehicles, or objects in real time across multiple camera views.
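As a rough illustration of how per-frame detections can be turned into tracks, the sketch below greedily matches new bounding boxes to existing tracks by intersection over union (IoU). It is a generic technique, not the tracker shipped with VENTUNO Q; the names `iou`, `associate`, and the `threshold` value are assumptions made for this example, and the boxes stand in for whatever a detector such as YOLOX would return.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def associate(
    tracks: Dict[int, Box],
    detections: List[Box],
    threshold: float = 0.3,  # hypothetical minimum overlap for a match
) -> Dict[int, Box]:
    """Greedily assign each detection to the best-overlapping unused track.

    Detections without a match start a new track ID. Tracks that receive
    no detection are dropped in this simplified sketch.
    """
    updated: Dict[int, Box] = {}
    unused = set(tracks)
    next_id = max(tracks, default=-1) + 1
    for det in detections:
        best_id, best_score = None, threshold
        for tid in unused:
            score = iou(tracks[tid], det)
            if score > best_score:
                best_id, best_score = tid, score
        if best_id is None:
            best_id = next_id
            next_id += 1
        else:
            unused.discard(best_id)
        updated[best_id] = det
    return updated
```

Calling `associate` once per frame with the previous result keeps IDs stable while objects overlap between frames. Production trackers usually add motion prediction and tolerate missed detections for a few frames.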

Pose Detection

Track body pose, joint positions, and movement patterns with PoseNet for fitness applications, safety monitoring, and interactive gaming.
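Once a pose model has produced keypoints, a common next step is to derive joint angles from them, for example to count repetitions in a fitness app. The sketch below assumes 2D keypoints given as `(x, y)` pairs; the function name `joint_angle` and the sample coordinates are illustrative and not part of any VENTUNO Q or PoseNet API.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # (x, y) keypoint in image coordinates


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Return the angle in degrees at joint b, between segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(*v1)
    n2 = math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("keypoints must not coincide with the joint")
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


# Hypothetical shoulder, elbow, and wrist keypoints forming a right angle.
elbow_angle = joint_angle((0.0, 1.0), (0.0, 0.0), (1.0, 0.0))
```

Thresholding such an angle over time (for instance, elbow below one value, then above another) is one simple way to detect a completed movement.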

Frequently Asked Questions

What makes VENTUNO Q different from other edge AI boards?

VENTUNO Q brings together up to 40 dense TOPS of AI compute with a dedicated real-time microcontroller in a unified dual-brain architecture. While competitors focus purely on AI inference, VENTUNO Q delivers both intelligence and deterministic actuation – essential for robotics, industrial control, and any application where AI must interact with the physical world. Arduino App Lab provides an accessible development experience that scales from beginner to professional level, while broad ecosystem compatibility (UNO shields, UNO carriers, Raspberry Pi HATs, Modulino nodes) accelerates prototyping.

Do I need to use Arduino App Lab, or can I develop with standard Linux tools?

Both options are fully supported. While Arduino App Lab streamlines AI and robotics development, VENTUNO Q itself is a complete Ubuntu/Debian Linux system. You can use standard IDEs and editors such as VS Code, PyCharm, Eclipse, Vim, and Emacs; Python virtual environments and package managers; Docker containers for isolated environments; and SSH for remote development and headless operation. Use any framework or toolchain you prefer! App Lab accelerates development but never restricts your workflow.

Which operating systems does VENTUNO Q support?

VENTUNO Q officially supports Ubuntu for predictable long-term support and extensive package ecosystems, and Debian with full upstream support for embedded-focused development.

How much storage can I add beyond the onboard 64 GB?

The M.2 connector supports NVMe SSDs.

What AI models and frameworks does VENTUNO Q support?

VENTUNO Q supports multiple AI deployment paths: Qualcomm® AI Hub pre-optimized models, Edge Impulse trained models, the Arduino App Lab curated model library, and third-party or custom inference engines.

Can I train my own custom AI models for VENTUNO Q?

Absolutely. Use Edge Impulse’s integrated platform to upload your training data, train models on Edge Impulse’s cloud infrastructure, automatically optimize and quantize them for VENTUNO Q hardware, and deploy directly into Arduino App Lab with one click. You can also bring models you have trained yourself and run them with third-party or custom inference engines.
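For readers unfamiliar with the quantization step mentioned above, the sketch below shows the general idea of affine int8 quantization: floating-point values are mapped to 8-bit integers through a scale and a zero point. This is a textbook illustration only; how Edge Impulse actually optimizes models for VENTUNO Q is not described here, and the function names are assumptions made for this example.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float, int]:
    """Map floats to int8 with an affine scheme: q = round(v / scale) + zero_point."""
    # Include 0.0 in the range so that zero is always exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0
    if scale == 0.0:
        scale = 1.0  # all values are zero; any scale works
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate floats from int8 values."""
    return [(q - zero_point) * scale for q in quantized]


# Hypothetical weights; the round trip loses at most about one quantization step.
weights = [-1.0, 0.0, 0.5, 1.0]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)
```

The trade-off is a small loss of precision in exchange for weights that are four times smaller than 32-bit floats and well suited to integer NPU arithmetic.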

Does VENTUNO Q support ROS (Robot Operating System)?

Yes, VENTUNO Q is compatible with ROS 2.

When will VENTUNO Q be available for purchase?

VENTUNO Q is coming soon! To ensure you’re among the first to get your hands on it, sign up for our availability alerts. We’ll notify you the moment it hits the shelves at the Arduino Store and our official resellers, such as DigiKey, Farnell, Macfos, Mouser, and RS. Stay tuned!

Qualcomm branded products are products of Qualcomm Technologies, Inc. and/or its subsidiaries. Arduino, VENTUNO, UNO, and Modulino are trademarks or registered trademarks of Arduino S.r.l.