Last month, TITLES hosted Model as Medium, in collaboration with Rhizome. The event brought together five artists, each with distinct practices, into a shared experiment: training custom models on their own work.

Model as Medium asked one central question: what changes when artists train models on their own work rather than relying on the undifferentiated aesthetics of general-purpose image generation? The evening centered on artist-trained models as a creative medium in their own right: not just tools for producing more images, but systems that can preserve a visual language, distort it, extend it, or hand it over to others in new ways.

Across the conversation, a few themes kept surfacing. One was constraint as craft: every artist worked with a small dataset of images, and the real labor sat in curation, captioning, and deciding what the model should not be able to do. Another was transformation: these models were most interesting not when they perfectly reproduced existing work, but when they generated a related world, a derivative language, or a productive misreading.

The artists and their models:

Louis’ model comes out of his sculpture practice. His current body of work consists of anthropomorphic sperm figures, cast as different personas — philosophers, brides, comedians. The sperms are his way of working around representation without cleanly negating it: a “bad figuration,” deliberately silly and deliberately blank, that stays opaque rather than resolving into any clear meaning. Louis was openly ambivalent about AI, but that ambivalence is part of what made the project interesting to him. His sperms are already a joke about copying and reproduction, reshuffling the same figure over and over, so, to him, training a model on them felt like an extension of the work’s internal joke.

balfua’s practice takes place inside an immersive world he calls Sayssiworld, a sprawling digital ecosystem populated by shapeshifting creatures. His work moves between CGI, animation, music, sculpture, VR, AI, and 3D printing, and is deeply rooted in worldbuilding. His TITLES model is not just a stylistic sampler but a way of opening a portal into a coherent cosmology. He considers works made by others using his model to be extensions of Sayssiworld.

Working from damaged negatives in a family photo archive stored for decades in South India, Aarati focused on discoloration, fungal branching, humidity, and other visible marks of age. For the model’s training data, they cropped out family members and concentrated instead on the damage itself, pairing the marked images with cleaned versions to build an image-edit model.

The model’s visual style is just the beginning of a larger conceptual project (currently beyond TITLES’ technical capability). Aarati imagines a system in which the image outputs accumulate decay as the model is used. Early outputs would remain relatively legible, while later ones would grow increasingly distorted and eventually become completely undecipherable. In other words, the model would not simply make images, but record its own history of use.
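One way to picture the accumulating-decay idea is the short Python sketch below. It is an illustration under stated assumptions, not Aarati’s design or anything TITLES has built: the names `decay_strength`, `apply_decay`, and `DecayingModel` are hypothetical, the half-life curve is an arbitrary choice, and "decay" is reduced to swapping grayscale pixels for random noise.

```python
import random


def decay_strength(use_count: int, half_life: int = 100) -> float:
    """Fraction of an output lost to decay after `use_count` prior uses.

    Assumed curve: starts at 0.0, reaches 0.5 after `half_life` uses,
    and approaches 1.0 as uses accumulate.
    """
    return 1.0 - 0.5 ** (use_count / half_life)


def apply_decay(pixels, strength, rng):
    """Replace each grayscale pixel with noise with probability `strength`."""
    return [
        [rng.randrange(256) if rng.random() < strength else value for value in row]
        for row in pixels
    ]


class DecayingModel:
    """Hypothetical wrapper that counts uses and decays each output accordingly."""

    def __init__(self, generate, half_life=100, seed=0):
        self.generate = generate  # stand-in for a real image model call
        self.half_life = half_life
        self.use_count = 0        # the model's "history of use"
        self.rng = random.Random(seed)

    def __call__(self, prompt):
        image = self.generate(prompt)
        strength = decay_strength(self.use_count, self.half_life)
        self.use_count += 1
        return apply_decay(image, strength, self.rng)
```

In this sketch the first output passes through untouched, and each later call is degraded a little more than the one before. A real version would presumably swap the noise for something closer to the fungal branching and discoloration in the archive, and would need the use count to persist across sessions.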

Maya Man approached her TITLES model through the logic of performance, simulation, and the unstable category of the “real.” Much of her practice is software-based and already concerned with online behavior, staged identity, and vernacular image systems. For this project, she drew from Star Quest, a work built from AI-generated video clips that re-stage the visual and emotional universe of Dance Moms. First, she built a database of hundreds of screenshots taken while rewatching the show. For each clip, she wrote prompts based on those screenshots and generated variations in an AI video model, before selecting a final clip. Her TITLES model was then trained on images from that already-synthetic world, making it, in her words, an “unauthorized spin-off.”

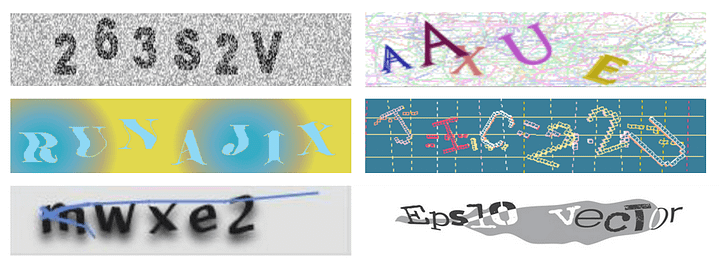

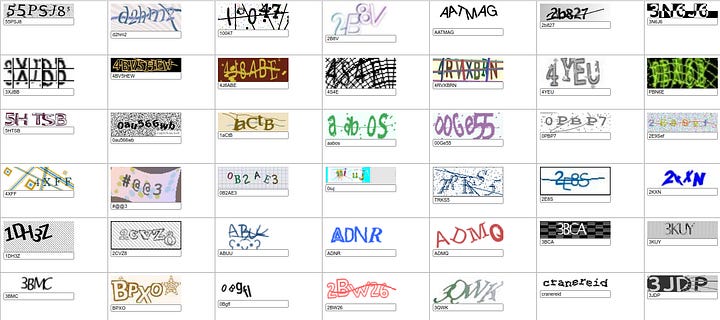

Alix Vernet’s practice centers on sculpture and urban language. She presses clay onto the surfaces of New York’s monuments and collects images of the city’s storefronts, building a library of letterforms drawn from public text. For her TITLES model, she was drawn to the found language of CAPTCHAs, which arrange words into accidentally poetic nonsense. Her model is the first component of what she hopes will become a “poem-place machine”: eventually, a user would enter an address and receive a CAPTCHA-styled poem composed from the text found around that location.