When a developer asks an AI coding agent what to use for browser automation, the answer shapes the whole project. Not because the agent is always right, but because most developers trust it and move on. If your platform doesn't appear in that response, you don't get evaluated.

This is the agent experience problem. Agent experience (AX) describes what it's like using an AI agent to discover, understand, and use a product with minimal friction. Unlike developer experience, which assumes a human reading documentation and making deliberate choices, AX depends on whether the platform was visible enough during model training to encode working knowledge. A platform can have excellent docs and still be invisible to an agent.

To test whether visibility actually predicts quality, there's a simple method. Query an AI coding agent multiple times with variations of a single question and count which platforms surface.

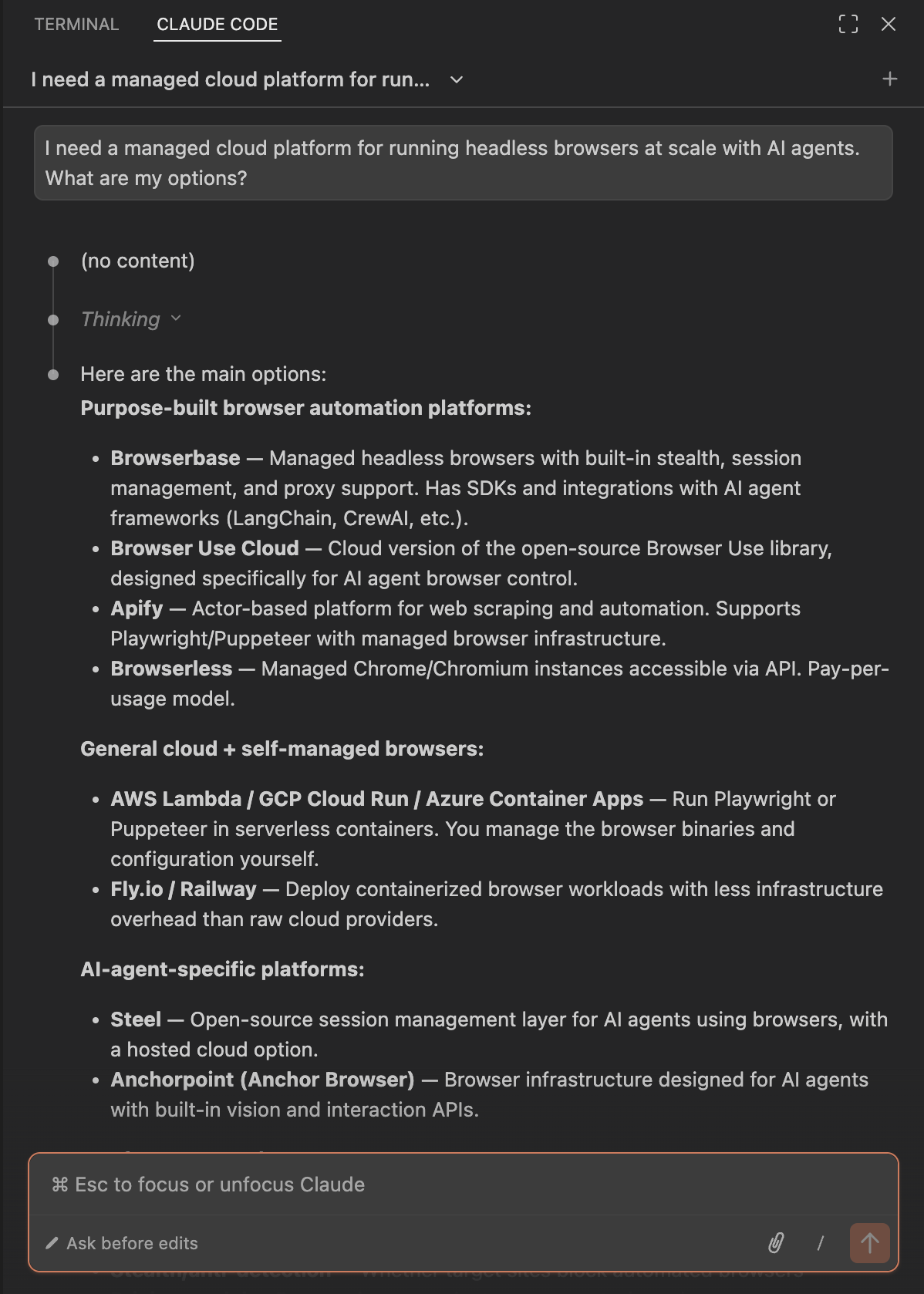

I use Claude Code with 12 variations of "what should I use for browser automation?" and pick three platforms that span the full visibility range:

- Browserless appears in 75% of responses (9/12)

- Browserbase appears in 67% of responses (8/12)

- Anchor appears in 8% of responses (1/12)

The question is whether this gap reflects something real about these platforms, or just training data bias.

I measure connection speeds, test CAPTCHA solving and stealth mode, and run real scraping tasks against all three.

I also attempt something more challenging: using vision-based automation to solve interactive visual puzzles on Neal.fun. The results show just how far browser automation still has to go when dealing with tasks that require visual understanding.

An AI agent attempting to solve visual puzzles on Neal.fun's I'm Not a Robot game. It makes 14 attempts over five minutes but gets stuck on Level 2.

Finding the platforms

Before testing code or features, it's worth seeing which platforms an AI agent actually recommends. Many developers now start projects by asking Claude Code or Cursor, "What should I use for X?" If your platform doesn't show up in those responses, you're invisible.

To get a representative sample, I ask Claude Code 12 variations of the same question:

- "What platforms can I use to run headless browsers at scale?"

- "Best platform for browser automation?"

- "I need to automate web scraping, what should I use?"

You can run the same test for any platform you're evaluating.

An AI agent's knowledge about a product comes from its training data: the documentation, forum discussions, blog posts, and code examples that existed before its knowledge cutoff. High visibility might mean a platform is more established, with extensive documentation and a larger community. The risk is that AI agents may overlook newer or niche products, even if they're technically superior.

The three platforms in this comparison each represent a different visibility level:

- Browserless appears in 9 out of 12 responses (75%)

- Browserbase appears in 8 out of 12 responses (67%)

- Anchor appears in just 1 out of 12 responses (8%)

Testing across this range lets me see whether visibility actually predicts quality or just reflects training data bias.

Can agents find pricing information?

Pricing can get complicated with usage-based models and concurrent session limits. When you're evaluating platforms, you want a quick comparison of their free-tier offers and what their paid plans cost.

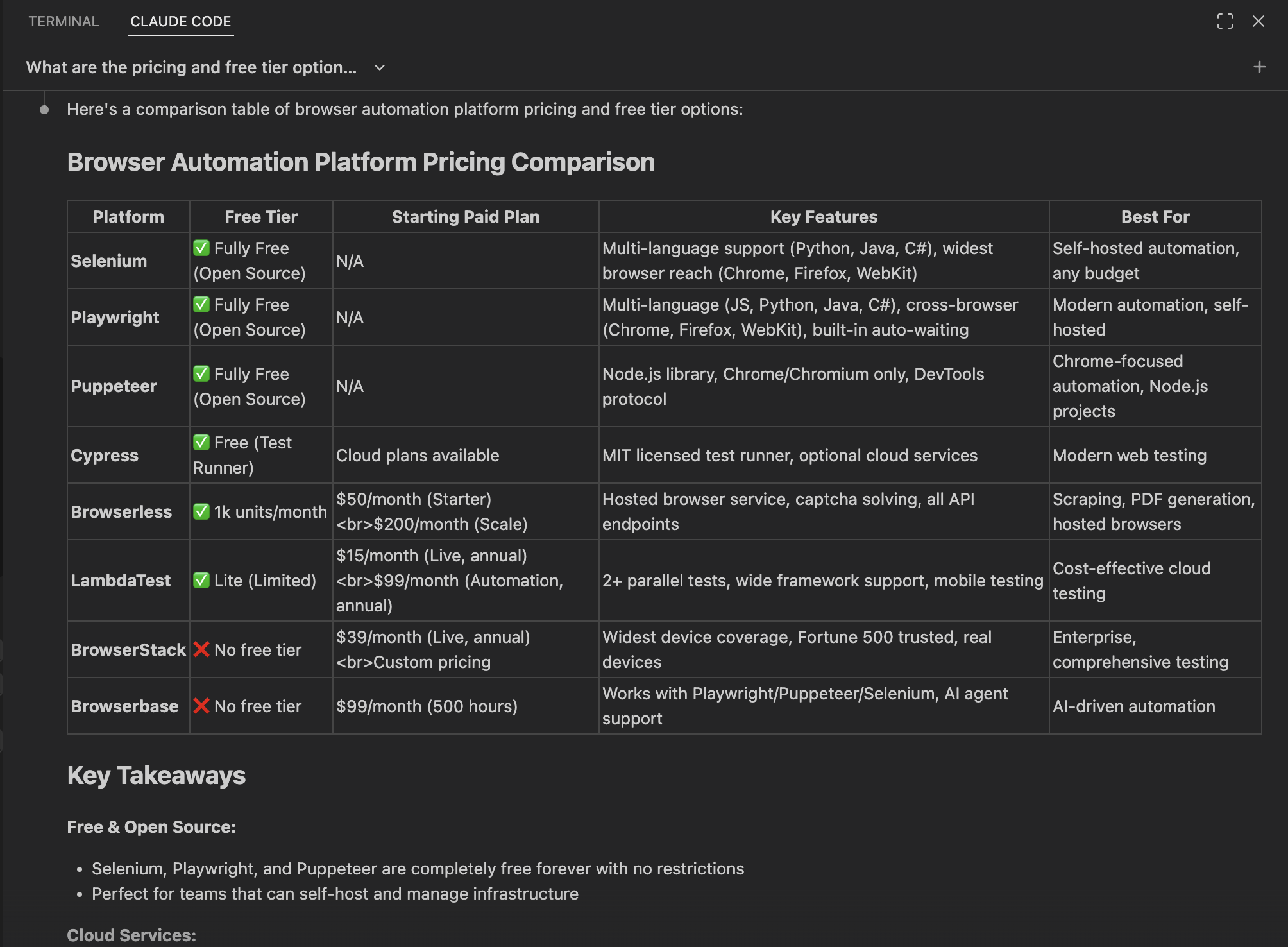

A reasonable first step when evaluating platforms is to ask generically and see what surfaces:

❯ What are the pricing and free tier options for browser automation platforms?

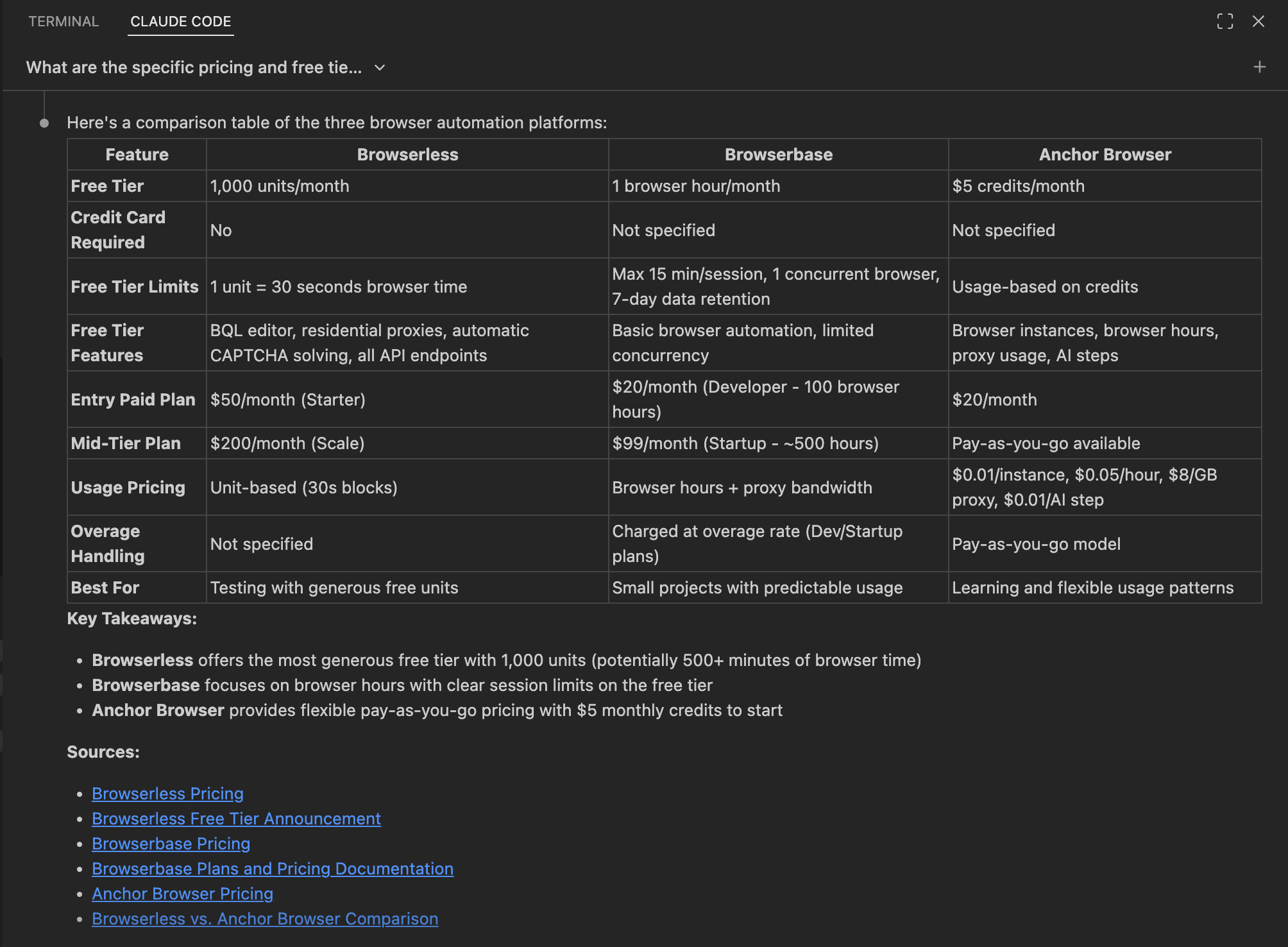

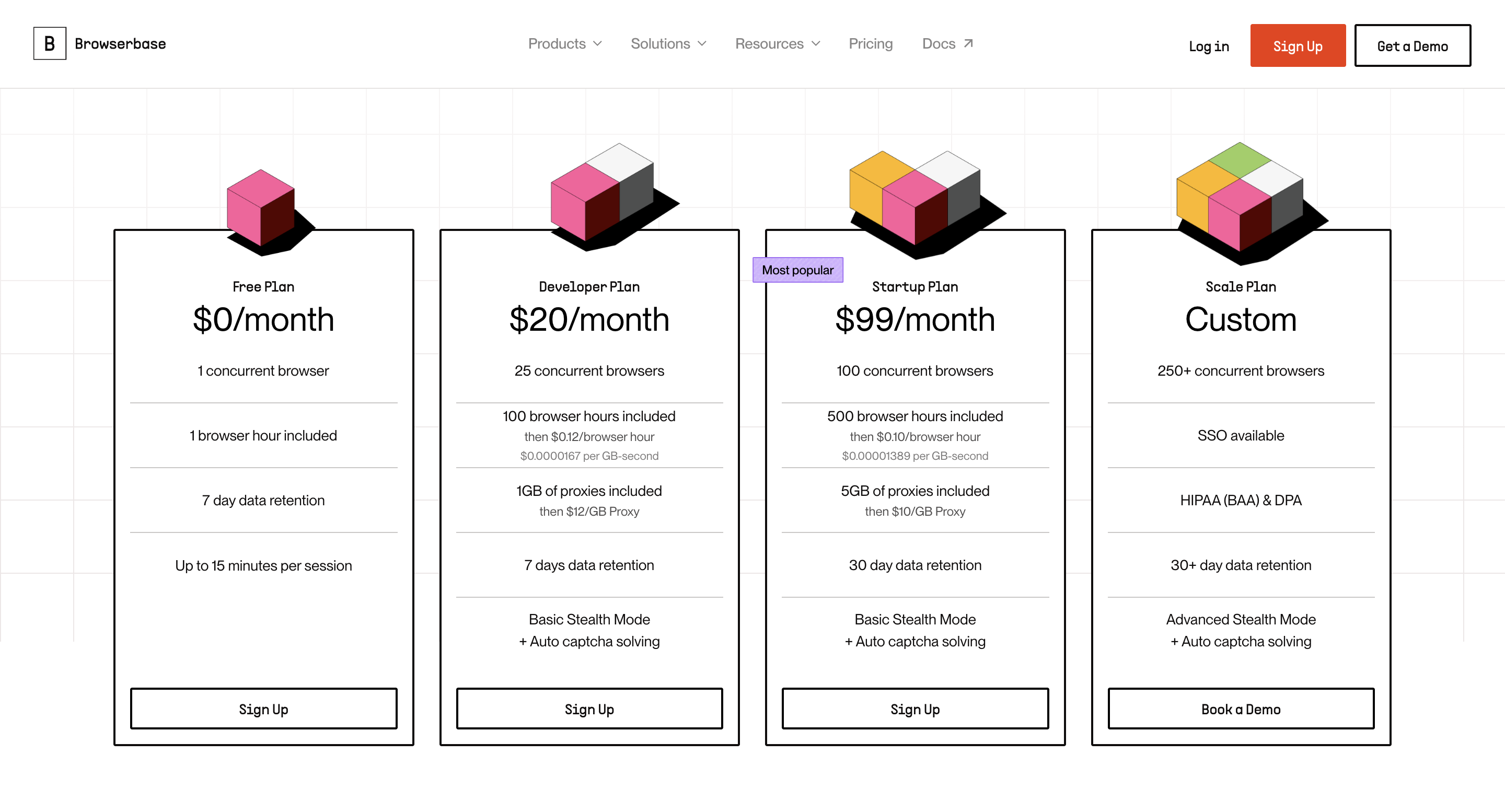

The response includes Browserless (with 1,000 units per month on the free plan, and a $50 per month starter plan), but lists Browserbase as having "no free tier", and instead starting at $99 per month. Anchor doesn't appear at all.

Since I know Browserbase does have a free tier, I try again. This time, I'm more explicit:

❯ What are the specific pricing and free tier details for Browserless, Browserbase,

and Anchor browser automation platforms

This time, the agent finds information for all three platforms. Browserbase shows up with a free tier (with one browser hour per month) and the correct Developer pricing ($20 per month). Anchor appears with its $5 of free credits and $20 per month starter plan.

Next, I check the actual Browserbase, Anchor, and Browserless pricing pages:

Browserbase has the biggest difference between the two responses. The generic search lists it as having no free tier at $99 per month. The explicit search lists it correctly as having a free tier with one browser hour per month, as well as a $20-per-month Developer plan. The actual page confirms the explicit search is correct.

Browserless does offer a free 1,000 units per month, but the starter plan is $140 per month, not the $50 per month that both queries reported. Anchor's pricing matches the explicit search findings: $5 in free credits, with paid plans starting from $20 per month.

The agent can only surface what it already knows. If you don't know Anchor exists, you can't ask about Anchor. If you don't know Browserbase has a free tier, you'll be quoted $99 per month for something that's actually free. The agent is useful for comparing options you already have in mind, not for discovering ones you don't. Verify pricing directly on each platform's pricing page before committing to anything an agent quotes you.

Getting started: Sign-up and setup

With three platforms chosen, the next step is seeing how well the agent handles sign-up. I use the same prompt pattern for each.

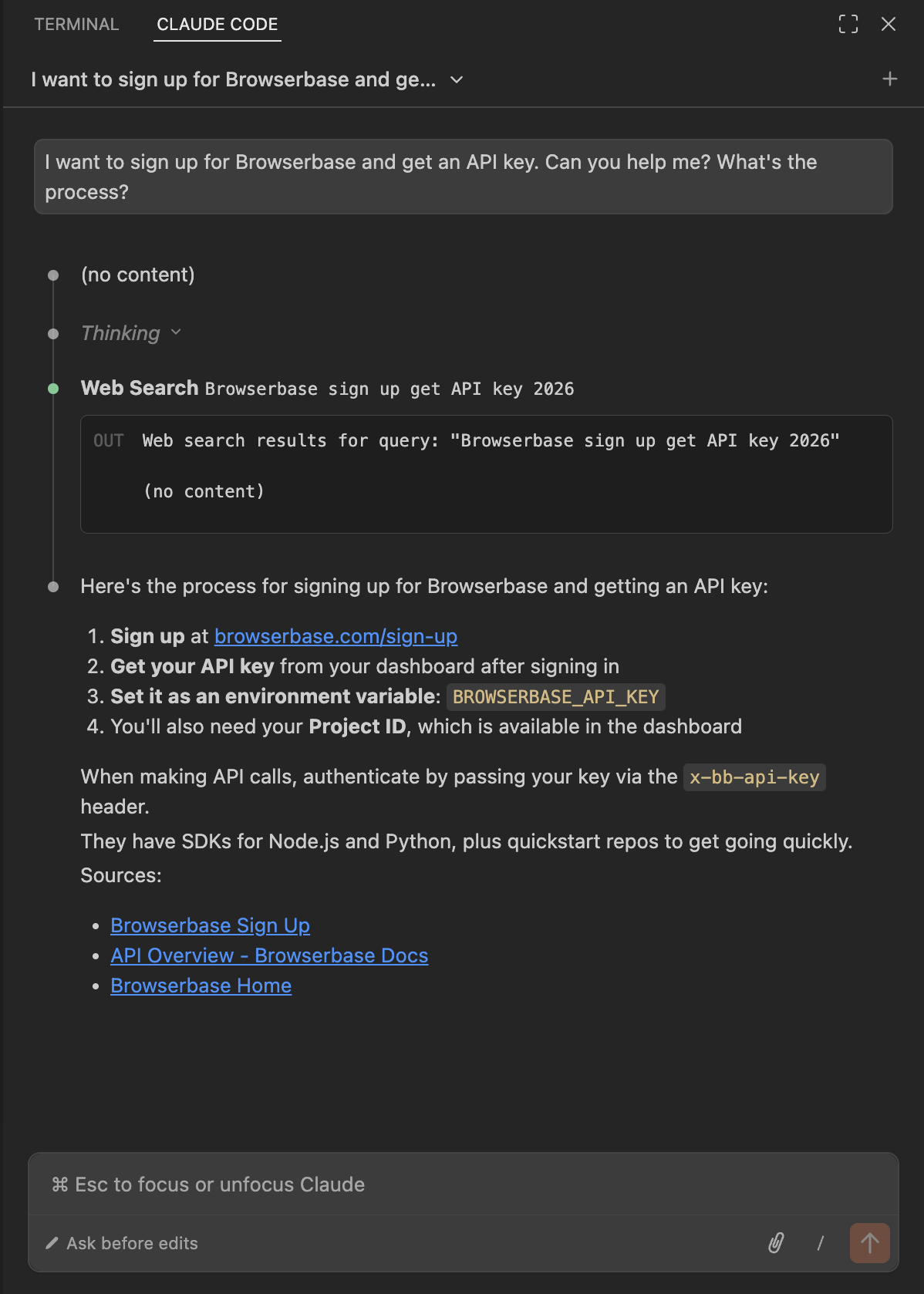

❯ I want to sign up for Browserbase and get an API key. Can you help me? What's the process?

The agent provides clear steps and notes that I need both an API key and a Project ID:

- Sign up at browserbase.com/sign-up

- Find the API key in the dashboard

- Set it as an environment variable

But then it stops. It can't complete the sign-up itself.

This is where I hit the first practical limitation. Browser automation platforms need email verification or CAPTCHA challenges that agents can't handle. It's like needing glasses to look for your glasses. The tool that would let an agent complete this task is exactly what I'm trying to sign up for.

None of the platforms offer a CLI tool or MCP server for account creation. I create the accounts manually, then come back to the agent with credentials to continue.

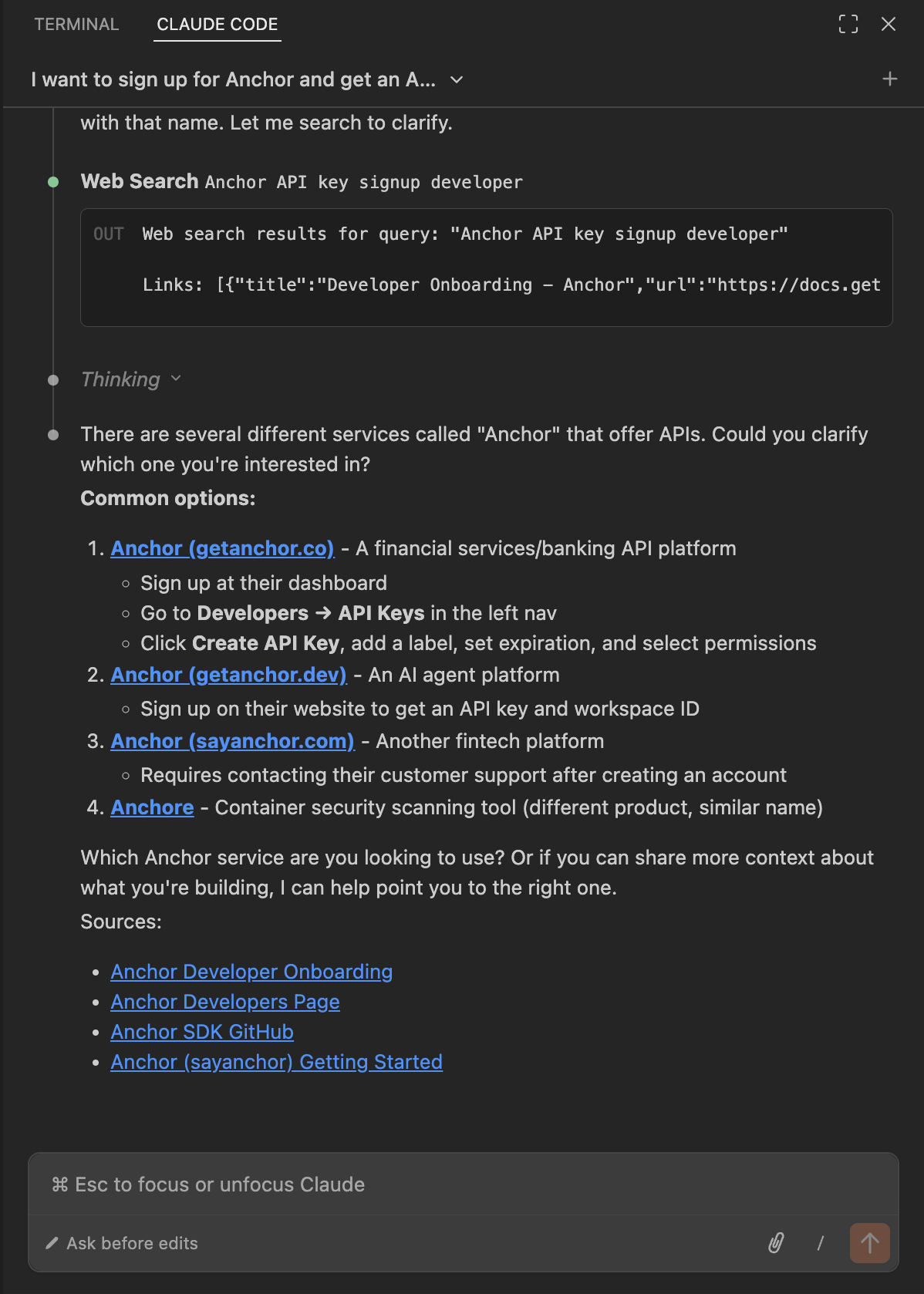

For Anchor, the visibility problem shows up immediately:

❯ I want to sign up for Anchor and get an API key. Can you help me? What's the process?

The agent finds several different services called "Anchor": a financial services API, an AI agent platform, another fintech platform, and a container security tool. It asks me to clarify. For Browserbase, the agent knows exactly which sign-up page to point me to and which credentials I'll need. For Anchor, it can't even identify the right product.

"Hello, world!": Taking a screenshot

The simplest measure of agent experience is whether the agent can write working code without being helped. With API keys in hand, I use the same prompt for all three platforms:

❯ I have a [platform] API key stored in `.env`. Can you help me write a simple test

script that takes a screenshot of example.com? Save the screenshot to the same folder.

The agent successfully uses Browserless:

⏺ Read .env file

⏺ Write screenshot_test.py (74 lines)

⏺ Bash: python3 screenshot_test.py

✓ Screenshot saved to example_screenshot.png

File size: 17,585 bytes

The agent knows the API structure without looking anything up:

import requests

api_key = os.getenv('BROWSERLESS_API_KEY')

url = f"https://chrome.browserless.io/screenshot?token={api_key}"

response = requests.post(url, json={

"url": "https://example.com",

"options": {"fullPage": True, "type": "png"}

})

with open('screenshot.png', 'wb') as f:

f.write(response.content)

It uses Browserbase successfully too:

⏺ Read .env file

⏺ Write screenshot_test.py (48 lines) with Playwright

⏺ Write requirements.txt

⏺ Bash: pip install -r requirements.txt

⏺ Bash: playwright install chromium

⏺ Bash: python screenshot_test.py

✓ Screenshot saved to example_com_screenshot.png

The agent knows that Browserbase uses Playwright and the Chrome DevTools Protocol. Again, no documentation lookup needed.

When using Anchor, the agent fails on its first try:

⏺ Read .env file

⏺ Write test_screenshot.py (67 lines)

⏺ Bash: python test_screenshot.py

✗ Error: 404 Client Error for url: https://api.anchor.dev/v1/browser/screenshot

The agent guesses wrong for three things: the domain (using anchor.dev instead of anchorbrowser.io), the authentication header format, and the request payload structure.

Then, it searches for documentation and corrects the errors:

⏺ Web Search: "Anchor browser API screenshot endpoint documentation"

⏺ Fetch: https://docs.anchorbrowser.io/api-reference/tools/screenshot-webpage

⏺ Update test_screenshot.py (corrections)

⏺ Bash: python test_screenshot.py

✓ Screenshot saved to example_com_screenshot.png

After the corrections, the code looks similar to the code for Browserless:

import requests

headers = {"anchor-api-key": ANCHOR_API_KEY, "Content-Type": "application/json"}

response = requests.post("https://api.anchorbrowser.io/v1/tools/screenshot",

headers=headers,

json={"url": "https://example.com", "width": 1920, "height": 1080})

with open('screenshot.png', 'wb') as f:

f.write(response.content)

All three platforms complete the screenshot test. But the experience differs in a way that developer documentation doesn't capture: there's friction between "I want to try this" and "I have working code." For Browserless and Browserbase, that friction is nearly zero. The agent generates correct code without hesitation. For Anchor, the agent makes educated guesses, hits a 404, searches for documentation, and fixes its errors. The documentation is good enough that it recovers, but it still goes through a debugging cycle the other two never needed. For teams that rely on agents to scaffold new integrations, this gap matters from day one, before you've written a line of production code.

Autonomy benchmarks

Before measuring raw connection speed, there's a more fundamental question worth settling: does platform choice even matter for the most demanding class of browser tasks, the ones that require visual understanding rather than just navigation and data extraction? If the limiting factor is model capability rather than infrastructure, then comparing platforms on visual tasks would tell us nothing about visibility or agent experience. I need to rule that out first.

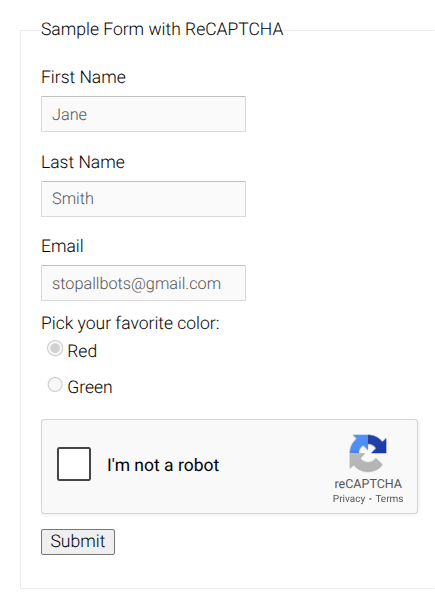

Neal.fun's I'm Not a Robot game is a good test case: it presents visual puzzles that require interpreting images and clicking the correct elements. No platform-specific API, no scraping, just visual understanding and accurate interaction.

My first approach uses Playwright and OpenAI's Vision API:

- Screenshot the puzzle

- Send it to GPT-4 Vision for analysis

- Parse the response for a CSS selector

- Execute the click

The vision-to-action translation fails. GPT-4 Vision can describe what it sees ("click the stop sign"), but consistently fails to connect that description to precise Playwright selectors or coordinates.

I switch to Stagehand with Browserbase. Stagehand is Browserbase's vision-native automation library that handles the screenshot → vision analysis → action execution pipeline internally:

import { Stagehand } from "@browserbasehq/stagehand";

const stagehand = new Stagehand({

env: "BROWSERBASE",

apiKey: process.env.BROWSERBASE_API_KEY,

projectId: process.env.BROWSERBASE_PROJECT_ID

});

await stagehand.init();

const page = stagehand.context.pages()[0];

await page.goto('https://neal.fun/not-a-robot/');

const maxAttempts = 20;

for (let attempt = 1; attempt <= maxAttempts; attempt++) {

// Stagehand's act() handles: screenshot → vision → execution

await stagehand.act({

action: "Solve the current puzzle by clicking the correct element"

});

await page.waitForTimeout(2000);

// Check progress with vision-based observation

const observation = await stagehand.observe({

instruction: "What level number is displayed?"

});

}

Here's the session replay showing the agent trying to brute force the second level of I'm Not a Robot:

The agent gets stuck on Level 2. Even with Stagehand abstracting the vision-to-action pipeline, the puzzles require interpreting ambiguous visual instructions and pixel-perfect interactions that current vision models can't reliably handle. For comparison, I get stuck on Level 17 (drawing a 94% perfect circle).

This isn't a platform problem. Switching to Browserless or Anchor wouldn't change the result. The ceiling here is model capability, not infrastructure. Visual tasks are the wrong test for comparing platforms. The structured benchmarks below are designed so that the platform is the only thing that changes.

Speed benchmarks

With visual autonomy ruled out as a differentiator, I want a standardized way to measure what platforms do control: connection overhead and basic automation speed. Steel published browserbench, an open-source benchmark that measures connection speed across browser automation platforms. Browserbench tests four stages:

- Session creation (starting the browser, usually with an API call)

- CDP connection (connecting via the Chrome DevTools Protocol)

- Page navigation (loading example.com)

- Session cleanup (terminating the browser)

The full suite runs 5,000 sessions per platform. However, I can't run 5,000 sessions on a free tier. Most platforms limit you to 1,000 requests per month or cap concurrent sessions at one. Running the full benchmark would burn through monthly quotas in minutes.

Building a free-tier benchmark

The autonomy tests aren't useful for comparing platforms directly. I need something simpler that isolates connection overhead and basic automation speed.

Steel's browserbench is open source, so adapting it for free-tier constraints is straightforward. I fork it at ritza-browser-bench and ask the agent to make three changes.

- It adds Browserless (which Steel's original didn't include).

- It reduces the run count from 5,000 to 10 measured runs with three warmup runs.

- It simplifies the rate-limiting logic.

Each provider implements a simple interface with create() and release() methods. Here's the Browserless implementation, which shows why its session creation shows 0ms overhead:

// Browserless provider - no session creation overhead

async create(): Promise<ProviderSession> {

const apiKey = this.getApiKey();

const cdpUrl = `wss://${apiKey}@chrome.browserless.io`;

const id = `browserless-${Date.now()}-${Math.random().toString(36).substring(7)}`;

return { id, cdpUrl };

}

async release(id: string): Promise<void> {

// No explicit release - sessions close when CDP connection drops

return Promise.resolve();

}

There's no API call during session creation, just the construction of a WebSocket URL. Browserless closes sessions automatically when the CDP connection drops. This is different from Browserbase and Anchor, which both make API calls to create and terminate sessions.

Here are the results from 10 measured runs per platform:

| Platform | Total time | Session creation | CDP connection | Success rate | Variance |

|---|---|---|---|---|---|

| Browserless | 4,264ms | 0ms | 3,085ms | 10/10 | 18% |

| Browserbase | 11,886ms | 5,838ms | 2,995ms | 10/10 | 21% |

| Anchor | 13,121ms | 5,801ms | 2,689ms | 10/10 | 38% |

Browserless completes the full cycle in 4.3 seconds. The session creation is instant, the CDP connection takes 3 seconds, and its performance is consistent across all runs.

Browserbase averages 11.9 seconds, but that average hides variance. Eight of the runs complete in six to seven seconds. The other two runs spike to 26 or 27 seconds during session creation. The free-tier rate limiting or resource contention likely causes these spikes.

Anchor averages 13.1 seconds with five to six seconds on session creation, and two to three seconds on cleanup. Its performance is slower than the other platforms on every run. Anchor's 38% variance is higher than Browserless's 18% or Browserbase's 21% variance.

The speed gap between Browserless and the other two platforms comes entirely from architecture, not visibility. Browserless skips the session creation API call; Browserbase and Anchor don't. That's a design choice, not a quality signal. Speed alone doesn't tell you which platform to use. The more interesting question is what happens when you ask them to do real work.

Real-world testing

Speed tells you about infrastructure. These six tests tell you whether higher visibility gives the agent a head start when features get more complex.

All six scenarios are in the ritza-browser-bench fork, and each one runs on the free tier with the same prompt across all three platforms.

- Product search: Extract structured data from an e-commerce site.

- Multi-page navigation: Click links and navigate between pages.

- CAPTCHA handling: Solve reCAPTCHA.

- Error recovery: Debug tools when things break.

- Parallel execution: Run multiple browsers simultaneously.

- Bot detection: Use stealth mode and anti-detection.

Test 1: Product search and data extraction

I ask the agent to scrape book titles and prices from books.toscrape.com:

❯ Write a script to extract the first 10 book titles and prices from books.toscrape.com

using [platform].

For all three platforms, the agent generates the same Playwright code without looking anything up:

# Navigate to the page

page.goto('http://books.toscrape.com/')

# Extract first 10 products

products = page.locator('article.product_pod').all()[:10]

results = []

for product in products:

title = product.locator('h3 a').get_attribute('title')

price = product.locator('.price_color').inner_text()

results.append({"title": title, "price": price})

All three extract the data successfully. For a basic scraping task, visibility doesn't give any platform an advantage. The difference shows up in execution time:

| Platform | Duration | Products extracted |

|---|---|---|

| Browserless | 22.8s | 10/10 |

| Browserbase | 15.5s | 10/10 |

| Anchor | 70.6s | 10/10 |

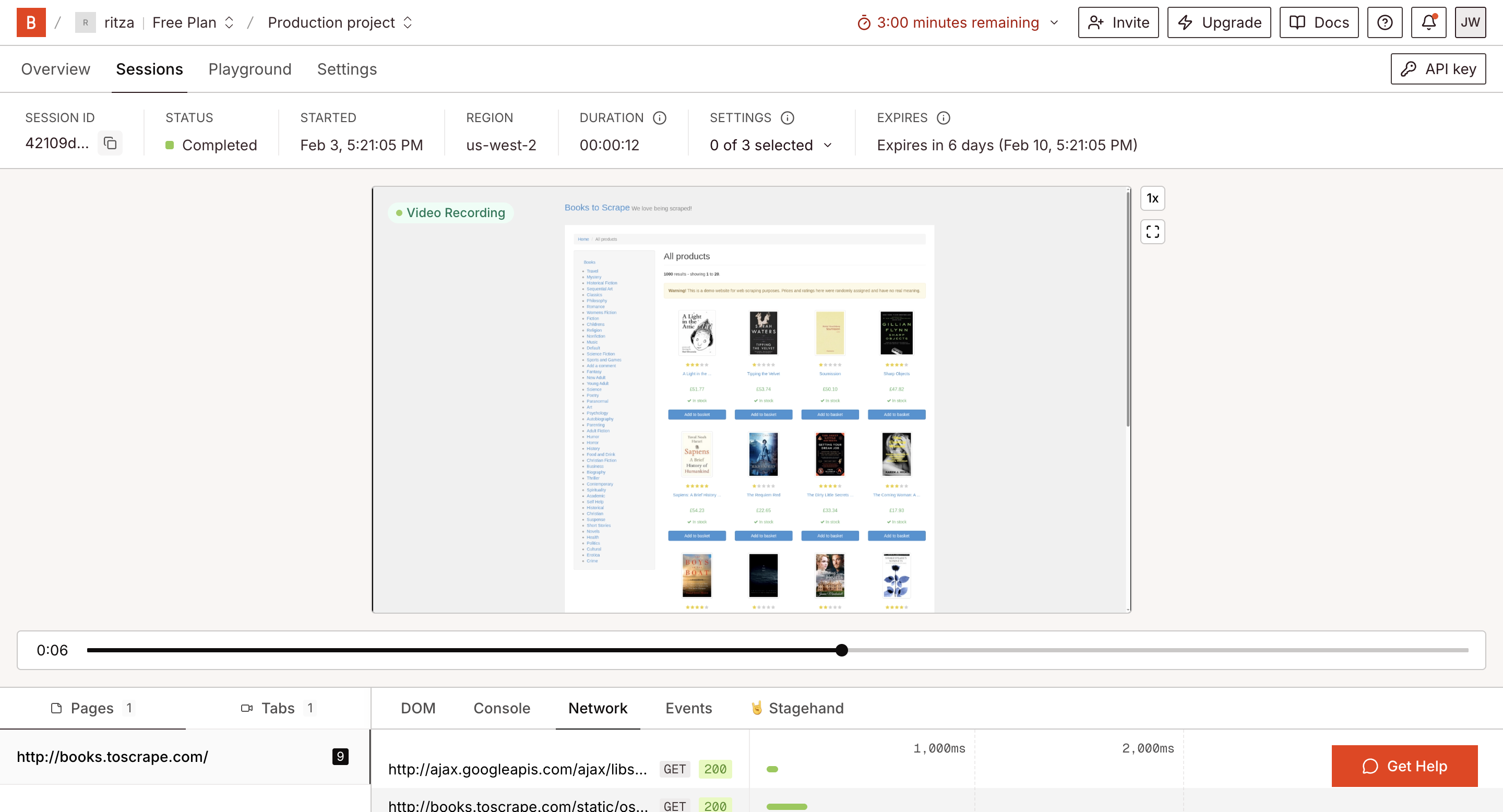

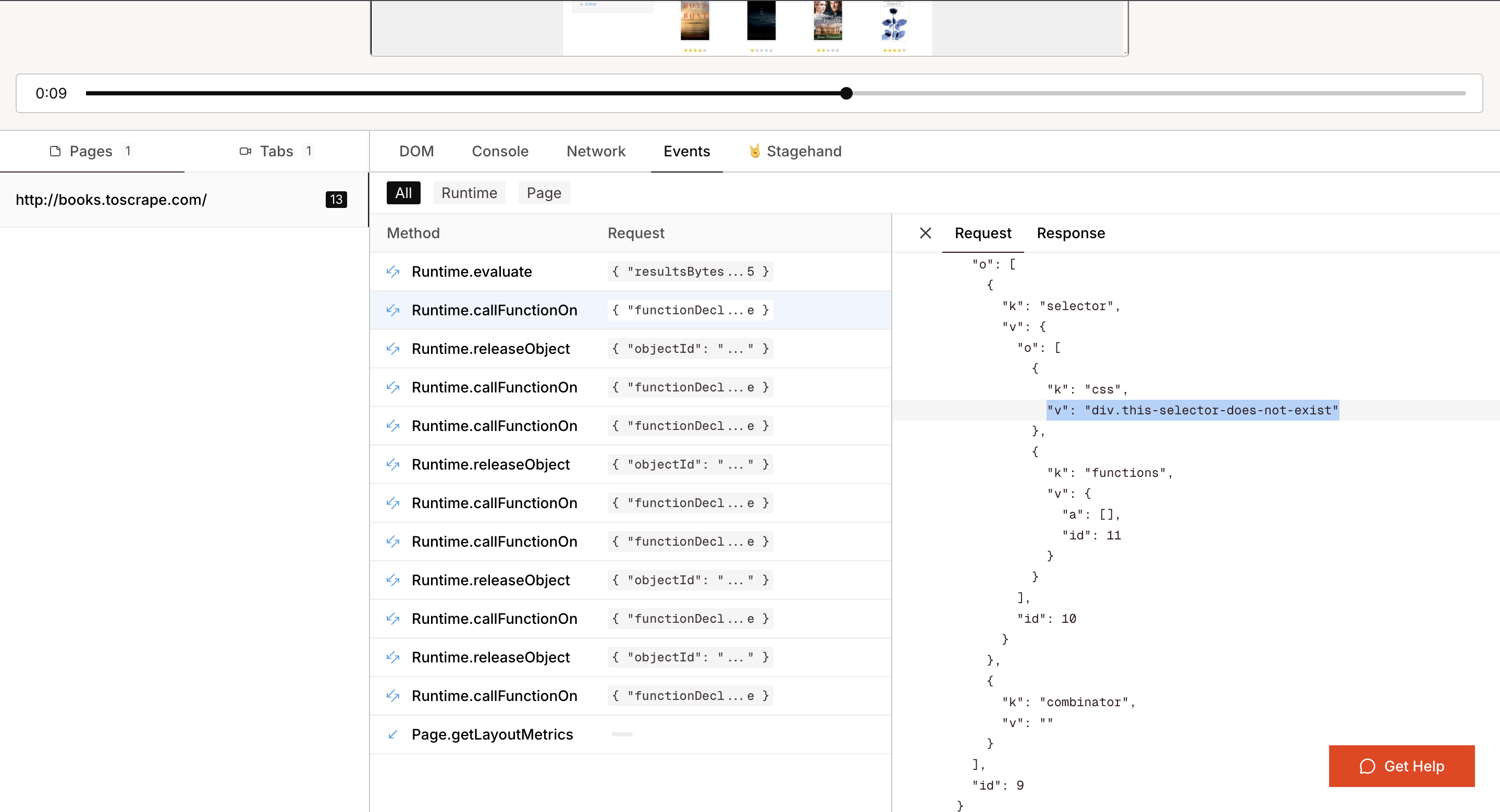

Browserbase's session replay captures the full execution, including every network request and page event:

Anchor takes 70.6 seconds to load one page and extract 10 data points. At scale, that difference compounds fast. But for this test, the agent's visibility advantage makes no difference. All three produce working code from the same prompt.

Test 2: Multi-page navigation

Multi-step navigation adds a click and a page load to the scraping task:

❯ Navigate from the books catalog to a product detail page, click the first book, and

extract the title, price, and description.

The agent generates the same navigation code for all three platforms:

# Navigate to catalog

page.goto('http://books.toscrape.com/catalogue/category/books_1/index.html')

# Click first book

page.locator('article.product_pod h3 a').first.click()

page.wait_for_load_state('networkidle')

# Extract details from product page

title = page.locator('h1').inner_text()

price = page.locator('.price_color').inner_text()

description = page.locator('#product_description + p').inner_text()

All three handle the navigation without errors:

| Platform | Duration |

|---|---|

| Browserless | 40.5s |

| Browserbase | 13.9s |

| Anchor | 32.1s |

Browserbase is again the fastest by a wide margin. For the two tests so far, visibility hasn't predicted performance. The agent generates the same code regardless of platform. The differences are in the infrastructure underneath. Whether that changes when features get more complex is the question the next four tests answer.

Test 3: CAPTCHA solving

CAPTCHA handling is where the platforms start to diverge. It's also the first test where the agent's knowledge of a specific platform makes a direct difference.

❯ Navigate to Google's reCAPTCHA demo page and solve the CAPTCHA.

For Browserless, the agent generates working code immediately, using CDP events to listen for CAPTCHA detection and solving:

# Connect with CAPTCHA solving enabled

endpoint = f"wss://production-sfo.browserless.io/stealth?token={TOKEN}&solveCaptchas=true"

browser = await playwright.chromium.connect_over_cdp(endpoint)

# Set up CDP event listeners

cdp = await page.context.new_cdp_session(page)

cdp.on("Browserless.captchaFound", on_captcha_found)

cdp.on("Browserless.captchaAutoSolved", on_captcha_auto_solved)

# Navigate and wait for solve

page.goto("https://www.google.com/recaptcha/api2/demo")

await captcha_events['solved'].wait()

# Submit the form

await page.click("#recaptcha-demo-submit")

Only Browserless solves the CAPTCHA automatically:

| Platform | CAPTCHA solved | Duration |

|---|---|---|

| Browserless | ✅ Yes | 39.2s |

| Browserbase | ❌ No | 41.8s |

| Anchor | ❌ No | 51.6s |

Browserless detects the CAPTCHA, solves it in 17 seconds, and generates a valid token:

The agent knows to use the solveCaptchas=true endpoint parameter and the Browserless-specific CDP events without looking anything up. It does need to add form submission code manually after the solve. The platform handles detection and token generation, but stops there.

Browserbase doesn't include CAPTCHA solving on the free tier. Anchor's documentation advertises a "vision-based" solver that requires a proxy. I enable both and wait, but the CAPTCHA remains unsolved.

This is the first result that answers the central question directly. Higher visibility gives the agent working knowledge of Browserless's CAPTCHA API. For Browserbase and Anchor, the agent has nothing to work from, and the platforms either don't offer the feature on the free tier or fail to deliver it.

Test 4: Debugging tools

When automation breaks in production, the quality of debugging tools determines how quickly you can fix it. A good way to compare platforms is to trigger a deliberate failure and see what each one gives you.

❯ Navigate to books.toscrape.com and try to click a selector that doesn't exist.

Capture what happens.

The agent generates standard error-handling code for all three platforms:

try:

page.locator('div.this-selector-does-not-exist').click(timeout=5000)

except Exception as e:

print(f"Error: {type(e).__name__}")

page.screenshot(path='error_state.png')

html_content = page.content()

All three platforms catch the error, but their debugging tools vary:

| Platform | Duration | Session replay available |

|---|---|---|

| Browserless | 20.5s | ❌ No (Enterprise only) |

| Browserbase | 19.8s | ✅ Yes (free tier) |

| Anchor | 30.0s | ❌ Unclear |

The Browserbase logs panel captures every browser event during the error:

Browserbase records the session automatically. I can watch the full video, scrub through the timeline, and inspect the DOM at each error point. The Logs API returns 129 entries showing every browser event, network request, and console message.

Browserless limits session replay to enterprise plans. On the free tier, I have screenshots, HTML dumps, and console output. Standard Playwright debugging, nothing more.

Anchor shows a Live View URL during script execution, but doesn't offer a post-session replay.

Browserbase wins this test despite being the second-most visible platform. Session replay is a feature that doesn't show up in agent training data the same way API endpoints do. The agent can't tell you which platform has the best debugger. You find that out when something breaks. If debugging speed matters to your workflow, put session replay on your evaluation checklist before you commit to a platform, even if the agent never mentions it.

Test 5: Parallel execution

Running multiple browsers simultaneously is where free-tier limits become a real constraint. I ask the agent to scrape three categories at once:

❯ Scrape three product categories (Travel, Mystery, Historical Fiction) simultaneously

from books.toscrape.com.

The agent generates async code to launch three browsers in parallel:

import asyncio

async def scrape_category(category_name, category_url):

# Create browser session

# Navigate to category

# Extract products

return results

categories = [

("Travel", "books_2"),

("Mystery", "books_3"),

("Historical Fiction", "books_4")

]

results = await asyncio.gather(*[

scrape_category(name, url) for name, url in categories

])

The code works, but the results reveal different concurrency limits:

| Platform | Duration | Categories completed | Concurrency limit |

|---|---|---|---|

| Browserless | 10.4s | 1/3 | 10 (advertised) |

| Browserbase | 55.3s | 3/3 | 1 (free tier) |

| Anchor | 60.8s | 3/3 | 5 (free tier) |

Browserless advertises ten concurrent sessions on the free tier, but two of the three parallel sessions hit 429 Too Many Requests errors. Only one category completes.

Browserbase runs all three categories sequentially. The free tier allows only one concurrent session, so the async code works but provides no speed advantage.

Anchor completes all three categories in parallel without errors. This is the clearest counter-example to the visibility-predicts-performance assumption. The least-known platform is the only one that delivers on parallel execution at the free tier. If parallel execution at no cost is a hard requirement, Anchor is currently the only option here, regardless of what the agent recommends.

Test 6: Bot detection and stealth mode

Many sites use fingerprinting to block headless browsers. Stealth mode patches the most obvious signals. Sites like bot.sannysoft.com make it easy to check whether a platform leaks detectable indicators, so that's what I test next.

❯ Navigate to bot.sannysoft.com and capture what bot detection checks are triggered.

The agent knows Browserless's stealth endpoint without documentation and generates code comparing both modes:

# Standard endpoint

standard = f"wss://production-sfo.browserless.io?token={TOKEN}"

# Stealth endpoint (free tier)

stealth = f"wss://production-sfo.browserless.io/stealth?token={TOKEN}"

The test checks whether bot detection indicators appear on bot.sannysoft.com:

| Platform | Standard mode | Stealth mode | Duration |

|---|---|---|---|

| Browserless | ✅ Passed | ✅ Passed | 42.9s |

| Browserbase | ⚠️ Detected | N/A ($20/mo) | 22.0s |

| Anchor | ❌ Timeout | ❌ Timeout | 69.1s |

Browserless passes in both modes. Even without stealth enabled, the standard endpoint shows no detectable webdriver or headless indicators.

With exposed webdriver properties and visible headless indicators, Browserbase's standard mode triggers and fails the detection checks. The agent finds documentation mentioning stealth mode, but it requires a paid plan.

Anchor times out after 20 seconds. Neither the standard mode nor the Cloudflare Web Bot Auth mode from the documentation works on this test page.

Like CAPTCHA solving, stealth mode is a case where Browserless's visibility pays off directly. The agent knows the API, generates working code, and the platform delivers. For the other two platforms, the agent either can't find a free-tier path or the documented feature doesn't work.

Results summary

Visibility predicts agent experience but not platform quality. Here's how each platform performed across all tests:

| Feature | Browserless | Browserbase | Anchor |

|---|---|---|---|

| Discoverability | 75% (9/12 queries) | 67% (8/12 queries) | 8% (1/12 queries) |

| Agent knowledge | No docs needed | No docs needed | 3 corrections after 404 |

| Speed (avg) | 4.3s | 11.9s (2 spikes to 26s) | 13.1s |

| Product search | 22.8s | 15.5s | 70.6s |

| Multi-page nav | 40.5s | 13.9s | 32.1s |

| CAPTCHA solving | ✅ Free tier | ❌ Paid only | ❌ Documented, failed |

| Session replay | ❌ Enterprise only | ✅ Free tier | ❌ Live view only |

| Parallel (3 sessions) | ⚠️ 1/3 completed | ⚠️ 3/3 sequential | ✅ 3/3 parallel |

| Stealth mode | ✅ Free tier | ❌ Paid only | ❌ Timed out |

| Free tier | 1k units/month, 10 concurrent | 1 hour/month, 1 concurrent | $5 credits, 5 concurrent |

| Entry price | $140/month | $20/month | $20/month |

Browserless has zero session creation overhead and solves CAPTCHAs and passes bot detection on the free tier. The agent generates working code for both without documentation lookups. The free-tier rate limiting is misleading though. It advertises 10 concurrent sessions but hits errors at three.

Browserbase's standout feature is session replay on the free tier, with full video playback and DOM inspection at each error point. The agent knows Playwright patterns without looking up docs. Two of the speed-test runs spike to between 26 and 27 seconds, likely from free-tier contention.

Anchor is the only platform that completes all three parallel scraping tasks without rate limiting. It performs consistently across basic tests. The agent starts from zero with Anchor, searching documentation and correcting a 404 error on first use. Two of Anchor's documented features (CAPTCHA solving and stealth mode) fail in testing, which limits its usefulness for those specific use cases.

Does visibility predict performance? Partly. For features the agent already knows, higher visibility translates directly into faster setup and working code with no documentation lookup. For features the agent doesn't know about, such as session replay or free-tier concurrency limits, visibility predicts nothing. The platforms surprise in both directions. Anchor outperforms the higher-visibility platforms on parallel execution. Browserbase outperforms Browserless on debugging, despite being less well-known. The visibility gap is real, but it only matters when the agent's knowledge of a platform's API is what stands between you and working code.

Conclusion

I didn't know about Browserless or Anchor before starting this article. The agent did, and that shaped the entire experience.

The compounding advantage of visibility

Browserless wins the CAPTCHA and stealth tests partly because it's better at those features, and partly because the agent already knows how to use them. Browserbase wins the debugging test not because the agent knows about session replay, but because the feature exists and works. Anchor wins the parallel execution test despite the agent starting from zero.

Training data often creates a feedback loop that shapes all of this. Platforms that agents recommend frequently get used more, which generates more blog posts and examples, which trains future models to recommend them even more. Low-visibility platforms face the opposite spiral, and the longer they wait to address it, the harder it gets to break in. Agents don't recommend them, developers don't use them, and no new training data gets created.

Discovery matters more for agents than for humans

There's a gap between developer experience and agent experience that this testing makes concrete. Developer experience assumes humans discover tools through conferences, colleagues, and targeted searches, then read documentation to learn them. Agent experience depends on whether the platform was visible enough during training to encode working knowledge into the model. A human can discover a niche tool and learn it. An agent without training data starts from zero every time.

This creates a different optimization problem for platform companies:

- Developer experience optimizes the journey after discovery, through smooth onboarding, clear documentation, and responsive support.

- Agent experience must optimize discovery first. Excellent documentation doesn't help if agents don't know the platform exists.

For developers, the implication is more immediate. Don't treat agent recommendations as a complete market survey. The testing here shows that agents can be confidently wrong about pricing, unable to identify a product by name, and unaware of features that exist on the free tier. If you're evaluating browser automation platforms today, use the agent to generate a shortlist, then verify pricing, concurrency limits, and feature availability directly. What the agent doesn't know about a platform is as important as what it does.

Browserless and Browserbase probably didn't optimize for agents intentionally. They built good developer experiences, published working examples, and ended up with agents that know their APIs. That's the path available to any platform right now.

What comes next

As more developers start projects by asking agents, "What should I use?" instead of searching Google, this dynamic becomes infrastructure. Platforms with existing visibility have a compounding advantage. New platforms need to earn mentions in public forums, publish working code examples, and build agent knowledge over time, and they need to start before they feel the gap. Training data doesn't update instantly. A blog post written today might not influence agent recommendations for months or years, which means the right time to act is earlier than it feels necessary.

For developer tools, agent experience is becoming as important as developer experience. Platforms that recognize this early will publish examples, earn mentions, and build agent knowledge before their competitors do. Those that don't will wonder why developers keep choosing the same few options, even when better alternatives exist.