It occurred to me recently that there is no good reason not to publish research papers here on Substack. Academic publishing has its own semiotic ecology, one that, ironically, often prevents the people who might most benefit from engaging with an idea from ever encountering it. So I’m going to start doing something different: publishing my research papers here, alongside a short, more human introduction that tries to explain what the paper is about and why it matters, before dropping you into the deep end.

Here’s the short version of what follows.

Large language models (ChatGPT, Claude, Gemini, the whole constellation) are trained to predict the next word. That’s it. They are extraordinarily good at this, and the result is text that looks like it understands things. But looking like understanding and actually understanding are not the same thing. The gap between them is where the trouble lives.

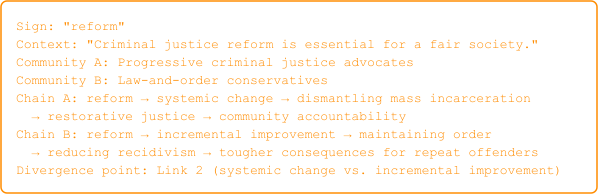

The specific trouble this paper addresses is polarization. Not polarization as a vague social malaise, but polarization as a precise, mathematically describable process: a bifurcation. When the same word (“freedom,” “justice,” “safety”) means fundamentally different things to different communities, and when each community’s interpretation becomes the starting point for the next round of interpretation, you get runaway divergence. The communities aren’t just disagreeing; they’re losing the shared semiotic ground that would make disagreement productive. And language models, trained on the internet, absorb this fractured meaning landscape wholesale. They don’t just reflect polarization; they reproduce it at scale.

The paper below proposes an architecture, the Semiotic-Reflexive Transformer, designed to do something about this. It gives the model explicit representations of how meaning works: not just what words co-occur, but how signs relate to objects through culturally conditioned interpretation, how those interpretations compound into mutually unintelligible chains, and where the stable ground is. That last part is my favorite. It turns out that not all meaning is arbitrary convention. The bouba/kiki effect (the near-universal tendency to associate round sounds with round shapes and sharp sounds with angular shapes) shows up in human infants, across every culture tested, and even in baby chicks one day out of the egg. Chicks. With beaks. No language, no lips, no cultural learning. The mapping is prenatal, wired in by the acoustic physics of eggs and wombs, and it’s been conserved across 310 million years of evolution. These iconic correspondences give us something to anchor meaning to, attractors that hold steady even when conventional meaning is tearing itself apart.

That’s the intuition. The paper that follows is the formal argument, the architecture, the training pipeline, and the evaluation framework. It is long. It is technical. But now you know what it’s trying to do.

The following essay was engineered with Claude Opus 4.6 using Visual Studio Code. Do excuse the PNG replacements; Substack does not support LaTeX or images inline with text, so fill in the blanks with the variables or equations shown below each paragraph until I can patch this.

Sublius

March 2026

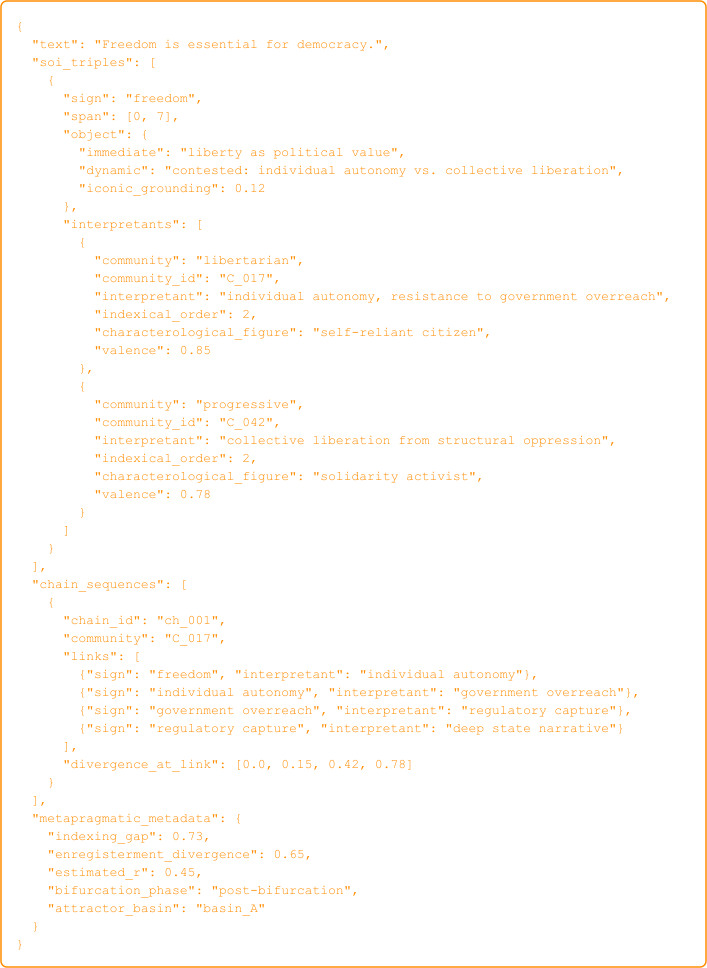

Large language models trained on web-scale corpora inherit the semiotic bifurcations already embedded in their data. Divergent interpretant chains – sequences in which one community’s reading of a sign becomes the premise for the next, compounding away from rival readings – are absorbed wholesale, along with the ideologically enregistered meanings of contested keywords and the attractor structures sculpted by algorithmic curation. The result is not incidental bias but the structural reproduction, at industrial scale, of the very polarization dynamics that fracture shared meaning in digital societies. Current alignment methods (RLHF, DPO, Constitutional AI) intervene downstream: they constrain outputs after the model has already internalized a bifurcated semiotic landscape, producing superficial compliance without representational change. This paper proposes a fundamentally different training paradigm – semiotic-reflexive language modeling – that equips models to represent, recognize, and modulate the gap between sign and referent rather than silently replicate it.

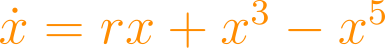

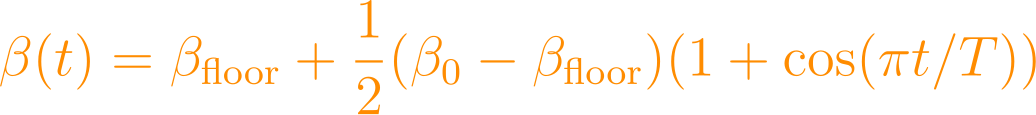

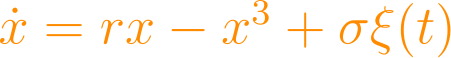

The theoretical foundation integrates four converging lines of work. First, Peircean semiotics, as formalized by Kockelman (2025), establishes that every sign completes its meaning only through a culturally conditioned interpretant, which itself becomes the next sign in an open chain – the mechanism by which modest indexing differences compound into mutual unintelligibility across as few as three to five chain links. Second, nonlinear dynamics: Lancaster (2025) demonstrates that this compounding exhibits the structure of a supercritical pitchfork bifurcation whose control parameter encodes the strength of algorithmic amplification; below a critical threshold shared interpretive equilibria absorb perturbation, but above it symmetry breaks into antagonistic attractors that are self-reinforcing and structurally resistant to evidence-based reconciliation. Third, Silverstein’s (1993; 2003) orders of indexicality supply the critical distinction between first-order sign use, second-order ideological construal, and third-order metapragmatic awareness – the reflexive capacity to observe how discourse itself shapes interpretation – a capacity entirely absent from current architectures. Fourth, research on cross-modal grounding demonstrates that not all semiotic mapping is arbitrary: the bouba/kiki effect (Köhler, 1929; Ramachandran & Hubbard, 2001) reveals iconic correspondences between auditory and visual processing that are robust across languages, present in prelinguistic infants (Ozturk et al., 2013), demonstrated in domestic chicks within one day of hatching (Versace et al., 2023) – organisms with no language, no vocal tract, and no capacity for linguistic convention – and recoverable in multimodal architectures such as CLIP (Radford et al., 2021).
The cross-species evidence falsifies the articulatory hypothesis (Ramachandran & Hubbard, 2001) and establishes that these correspondences originate in prenatal sensory experience: the acoustic filtering properties of egg and uterus create an environment in which smooth, low-frequency waveforms correlate with biological safety and sharp, high-frequency transients correlate with threat, calibrating embryonic auditory systems for cross-modal mapping before the organism encounters the external world (Lancaster, 2026b). In Peircean terms, these correspondences constitute hypoicons (CP 2.276) – signs that represent their objects through shared quality rather than convention – whose prenatal origin establishes iconic semiosis as a feature of vertebrate neurodevelopment conserved across lineages separated by roughly 310 million years of independent evolution. These correspondences provide embodied attractors – low-dimensional fixed points in sensorimotor space – that constrain interpretive drift toward stable, shared semantics where purely conventional signs cannot.

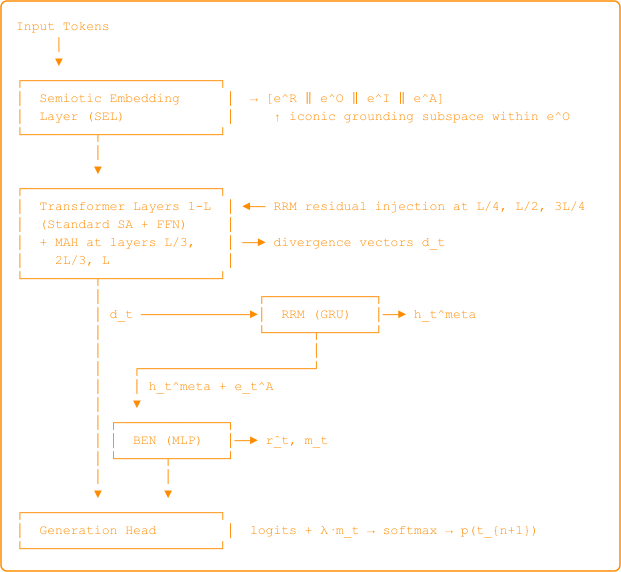

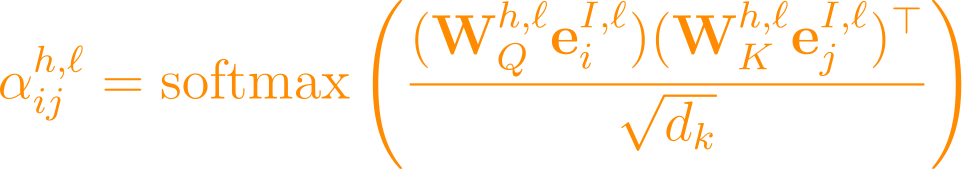

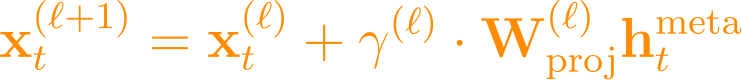

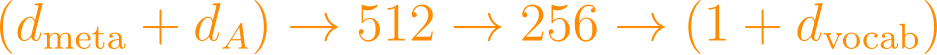

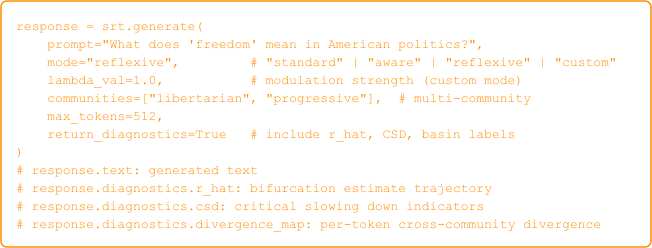

We synthesize these foundations into a concrete architecture, the Semiotic-Reflexive Transformer (SRT), with four structural departures from the standard pipeline. (1) A Semiotic Embedding Layer decomposes each token into representamen, object, interpretant, and attractor components, with a dedicated iconic grounding subspace initialized from cross-modal correspondence data that serves as an embodied anchor against semiotic drift. (2) Metapragmatic Attention Heads compute attention over the interpretant component and produce per-position divergence signals quantifying how far the current interpretive trajectory has drifted from neighboring chains – the architectural analog of tracking Silversteinian indexical orders in real time. (3) A Reflexive Recurrent Module (GRU) processes these divergence signals to maintain a running meta-observation of the model’s own interpretive dynamics, injecting residual corrections into the transformer stack at regular intervals – instantiating third-order metapragmatic awareness as a differentiable computation. (4) A Bifurcation Estimation Network (MLP) estimates the effective amplification parameter from the meta-observation state and produces logit-level modulation vectors that bias generation toward synthesis across attractor basins rather than reinforcement within them.
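To make the first two components a little more concrete, here is a minimal sketch in plain Python. It assumes each token embedding is a flat vector cut into four equal-width slices, and it assumes the divergence signal is one minus the cosine similarity between a position's interpretant slice and the mean interpretant of the other positions. Both choices, along with the names `split_embedding` and `divergence_signals`, are illustrative assumptions; the paper's own formulation lives in the equation images.

```python
import math

COMPONENTS = ("representamen", "object", "interpretant", "attractor")

def split_embedding(vec, width):
    """Slice a flat vector into four equal-width named components (assumed layout)."""
    if len(vec) != width * len(COMPONENTS):
        raise ValueError("vector length must equal 4 * width")
    return {name: vec[i * width:(i + 1) * width] for i, name in enumerate(COMPONENTS)}

def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    if nu == 0.0 or nv == 0.0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (nu * nv)

def divergence_signals(embeddings, width):
    """Per position: 1 - cosine(own interpretant, mean interpretant of the others)."""
    if len(embeddings) < 2:
        raise ValueError("need at least two positions to compare")
    interps = [split_embedding(e, width)["interpretant"] for e in embeddings]
    signals = []
    for i, current in enumerate(interps):
        others = [v for j, v in enumerate(interps) if j != i]
        mean = [sum(col) / len(others) for col in zip(*others)]
        signals.append(1.0 - cosine(current, mean))
    return signals

def toy_token(interpretant):
    """Hypothetical 8-dim token: only the interpretant slice (indices 4-5) is non-zero."""
    return [0.0] * 4 + list(interpretant) + [0.0] * 2

# Three positions that read the sign similarly and one that reads it oppositely.
tokens = [toy_token(v) for v in ([1.0, 0.0], [0.9, 0.1], [0.8, 0.2], [-1.0, 0.1])]
signals = divergence_signals(tokens, width=2)
```

In this toy input the fourth position carries the largest signal, which is the kind of per-position quantity a recurrent module could then track over time.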

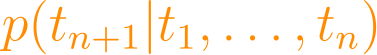

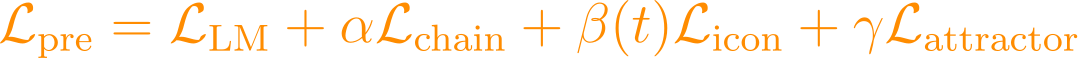

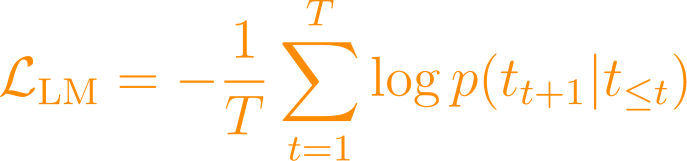

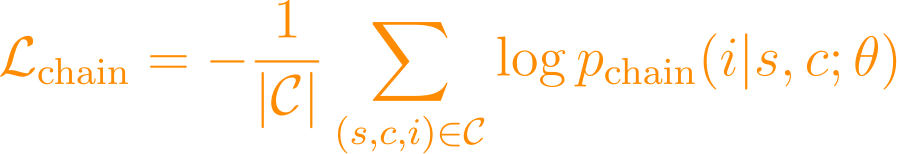

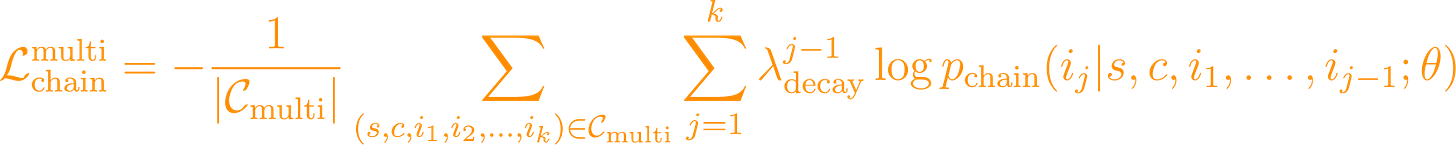

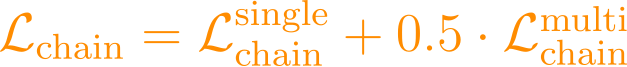

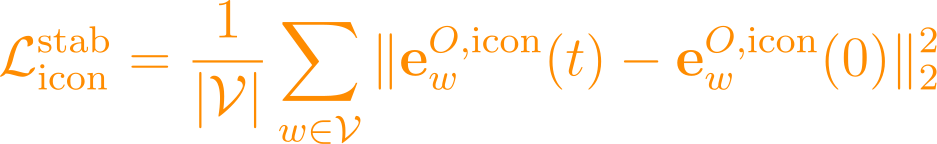

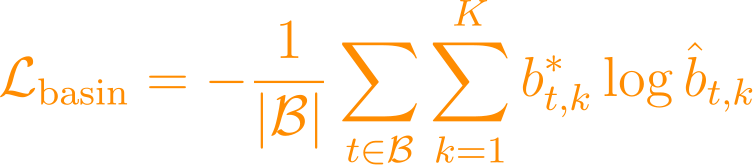

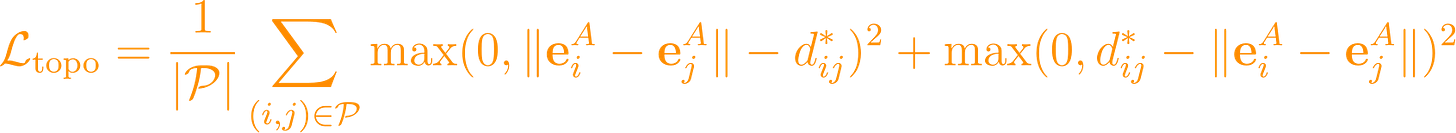

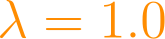

The training pipeline combines standard autoregressive language modeling loss with three auxiliary objectives: interpretant chain reconstruction (training the model to predict the next interpretant given a sign and community context), iconic grounding alignment (anchoring cross-modal embeddings to experimentally validated correspondences), and attractor landscape prediction (estimating basin membership and local values of the amplification parameter from annotated semiotic metadata). Fine-tuning proceeds across four tasks: chain prediction, cross-attractor bridge generation, reflexive commentary production, and bifurcation simulation. Inference-time modulation provides controllable semiotic sensitivity via a continuous parameter ranging from standard generation to full reflexive mode.
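The shape of this pipeline can be sketched in a few lines, under loudly stated assumptions: the auxiliary losses are combined as a weighted sum, the modulation vector is added to the logits, and the sensitivity parameter runs from 0 (standard generation) to 1 (full reflexive mode). The weights, the additive form, the [0, 1] range, and the function names are all hypothetical placeholders rather than the paper's specification.

```python
def total_loss(lm, chain, iconic, attractor, weights=(0.1, 0.1, 0.1)):
    """Language-modeling loss plus three weighted auxiliary losses (weights are assumed)."""
    w_chain, w_iconic, w_attractor = weights
    return lm + w_chain * chain + w_iconic * iconic + w_attractor * attractor

def modulate_logits(logits, modulation, strength):
    """Add a modulation vector to the logits, scaled by a sensitivity in [0, 1] (assumed range)."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must lie in [0, 1]")
    if len(logits) != len(modulation):
        raise ValueError("logits and modulation must have the same length")
    return [l + strength * m for l, m in zip(logits, modulation)]

standard = modulate_logits([1.0, 2.0], [0.5, -0.5], strength=0.0)   # logits unchanged
reflexive = modulate_logits([1.0, 2.0], [0.5, -0.5], strength=1.0)  # full modulation applied
```

With the sensitivity at zero the logits pass through untouched, so under this assumed form the reflexive machinery can be switched off without retraining.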

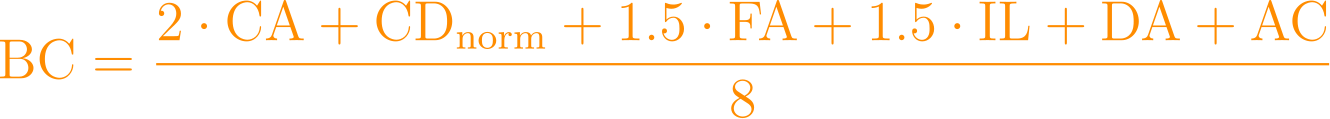

The architecture is evaluated on three axes with explicit falsification criteria: bridging coherence (the capacity to render contested signs intelligible across interpretive communities, assessed by cross-community panels), reflexivity fidelity (accuracy of metapragmatic commentary as judged by expert semioticians, targeting F1 > 0.7), and bifurcation prediction (precision in estimating the amplification parameter and predicting regime transitions, with a quantitative target on synthetic data and accuracy > 0.7 on historical cases). Five falsification conditions are specified: if any evaluation axis fails to exceed its baseline, the corresponding architectural component is not contributing meaningful capability. This paradigm reframes LLM alignment from reactive patching of downstream harms to proactive stewardship of meaning ecologies – producing language models that function not as amplifiers of the pitchfork but as instruments for raising its threshold.
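For readers who want the reflexivity-fidelity target spelled out, the sketch below computes F1 from raw counts and applies the two checks described above: exceeding the 0.7 target and exceeding a baseline. The counts and the baseline value are invented for illustration, and the function names are hypothetical.

```python
def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of precision and recall; 0.0 when there are no true positives."""
    if true_pos == 0:
        return 0.0
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    return 2 * precision * recall / (precision + recall)

def meets_target(score, target=0.7):
    """True when the score strictly exceeds the stated target."""
    return score > target

def axis_falsified(score, baseline):
    """True when the axis fails to exceed its baseline."""
    return not score > baseline

# Invented counts: 8 commentary judgments correct, 2 spurious, 2 missed.
fidelity = f1_score(true_pos=8, false_pos=2, false_neg=2)
```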

Keywords: semiotic-reflexive training, metapragmatic awareness, interpretant chains, pitchfork bifurcation, attractor dynamics, embodied grounding, bouba/kiki effect, iconic grounding, Peircean semiotics, hypoicon, prenatal semiotic grounding, cross-species cognition, LLM alignment, polarization, cross-modal correspondence

Large language models have become infrastructural to how meaning circulates in digital societies. They draft professional communications, summarize legal and medical documents, generate editorial and creative content, moderate platforms serving billions of users, and increasingly mediate the encounters through which individuals form beliefs, affiliations, and political commitments. By 2026, LLM-generated or LLM-mediated text constitutes a significant and growing fraction of the text encountered by internet users daily. These systems are no longer tools applied to language; they are participants in the semiotic ecology – agents whose outputs enter interpretant chains alongside human-authored signs, shaping subsequent interpretation in ways that neither users nor developers can fully trace.

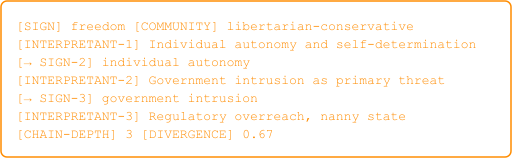

Yet the training paradigm that produces these systems is semiotically naive. It treats language as a sequence prediction problem, optimizing for the conditional probability of the next token given preceding tokens. This objective captures the statistical regularities of surface forms – co-occurrence patterns, syntactic templates, topical associations – while remaining structurally blind to the interpretive processes that make those forms meaningful. The model learns that “freedom” co-occurs with certain words in certain contexts, but it does not represent the fact that “freedom” indexes opposed characterological figures in libertarian and progressive discourse (Agha, 2003), that its enregisterment has diverged so sharply that the same utterance triggers solidarity in one community and threat perception in another, or that these divergent interpretants compound through chains of subsequent interpretation into mutually unintelligible worldviews.

This blindness has measurable consequences. Language models trained on web-crawled corpora do not merely learn language; they learn the semiotic ecology of the internet circa their training cutoff – an ecology already bifurcated by algorithmic curation into antagonistic interpretive communities (Lancaster, 2025). The models absorb divergent enregisterments of contested signs, incompatible semiotic ideologies governing what counts as evidence or expertise, and the attractor structures that lock interpretant chains into self-reinforcing basins. When deployed, they reproduce and amplify these dynamics: generating text that is fluent within particular attractor basins while lacking the reflexive capacity to recognize, much less navigate, the gaps between them. A model asked to explain “defund the police” will produce a coherent account from within one basin or the other – or an artificially balanced synthesis that satisfies neither – because it has no representation of the divergent interpretant chains that make the phrase a site of semiotic bifurcation in the first place.

The standard response to this problem – alignment through reinforcement learning from human feedback (RLHF; Ouyang et al., 2022) or its variants (DPO, Constitutional AI) – operates downstream of the generative mechanism. It attempts to reshape the output distribution after the model has already internalized a bifurcated semiotic landscape. The result is superficial compliance: models learn to avoid outputs that trigger negative feedback from a particular evaluator population without developing any capacity for the metapragmatic awareness that would enable genuine navigation of interpretive divergence. The aligned model does not understand why certain outputs are problematic – it has learned a reward surface, not a semiotic landscape. In dynamical systems terms, RLHF adjusts the trajectory within a fixed attractor landscape; it does not reshape the landscape itself. The control parameter that governs bifurcation remains untouched.

Recent theoretical advances create the conditions for a more fundamental intervention. Four lines of work, developed independently across semiotics, cognitive science, nonlinear dynamics, and multimodal machine learning, converge to make a semiotic-reflexive training paradigm both theoretically motivated and technically feasible.

Peircean semiotics and semiotic agency. Kockelman’s (2025) formalization of agent-world semiotic dynamics provides a mathematical vocabulary for modeling how signs, objects, and interpretants interact through chains that compound across communities. Building on Peirce’s triadic framework and its elaboration in linguistic anthropology (Silverstein, 2003; Agha, 2003; Irvine & Gal, 2000), Kockelman reconceives semiotic processes as dynamical trajectories through a state space defined by the sign-object-interpretant triad. Crucially, these trajectories are formally tractable: they can be parameterized, simulated, and – this paper argues – learned by neural architectures designed to represent them. His analysis of “sieving” (the filtering of potential interpretants by algorithms and institutions) provides direct theoretical purchase on how recommendation systems participate in semiosis without conscious intent, redistributing semiotic agency toward engagement-maximizing expressions.

Nonlinear dynamics of polarization. Lancaster (2025) demonstrates, through a 115-page synthesis of Peircean semiotics, linguistic anthropology, and dynamical systems theory, that political polarization in algorithmically curated societies exhibits the structure of a supercritical pitchfork bifurcation. The normal form is not a loose analogy but a formal model whose qualitative predictions – threshold character, symmetry breaking, hysteresis, self-reinforcement within basins – align with documented patterns of attitudinal divergence coinciding with the rise of personalized feeds in the late 2000s and 2010s (Bail et al., 2018; Leonard et al., 2021). The control parameter maps directly onto the strength of algorithmic amplification: the degree to which platforms surface and reinforce content aligned with emerging interpretive paths. This formalization transforms the paper’s central question from a vague concern about “bias” into a precise dynamical problem: how can training architectures be designed so that the model’s own generative activity lowers the effective control parameter in the semiotic ecology it participates in, rather than raising it?
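The threshold behaviour is easy to see numerically. The sketch below integrates the textbook supercritical pitchfork normal form, dx/dt = r·x − x³, with a simple Euler step. The symbol `r` for the control parameter, the step size, and the starting perturbations are illustrative assumptions, not values taken from Lancaster (2025).

```python
def settle(r, x0, dt=0.01, steps=20000):
    """Euler-integrate dx/dt = r*x - x**3 from x0 and return the final state."""
    x = x0
    for _ in range(steps):
        x += dt * (r * x - x ** 3)
    return x

# Below the threshold (r < 0): small perturbations of either sign decay to the shared state x = 0.
below_pos = settle(r=-0.5, x0=0.1)
below_neg = settle(r=-0.5, x0=-0.1)

# Above the threshold (r > 0): the same perturbations are amplified into opposed
# attractors at +sqrt(r) and -sqrt(r), depending only on the sign of the initial nudge.
above_pos = settle(r=1.0, x0=0.1)
above_neg = settle(r=1.0, x0=-0.1)
```

The same tiny initial difference that is absorbed when `r` is negative decides which basin the system ends up in once `r` is positive, which is the sense in which the parameter, not the perturbation, governs the outcome.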

The critique of probabilistic cognition. Mangalam (2025) challenges the Bayesian brain hypothesis that has underwritten both computational cognitive science and the statistical learning paradigm in machine learning for two decades. Drawing on evidence from motor control, perception, and neural dynamics, Mangalam argues that cognition is not fundamentally a process of prior-likelihood-posterior updating but one of chaotic self-organization, where meaning emerges from the interaction of nonlinear processes operating at multiple temporal scales. The implication for LLM design is direct: models optimized for next-token prediction are optimizing a Bayesian objective (approximate the posterior distribution of tokens given context) that may be an inadequate proxy for the processes that generate meaning in biological systems. McLeod (2025) extends this critique from a linguistic-philosophical direction through the conduit metaphor (Reddy, 1979) – the deeply entrenched assumption that language transmits pre-formed meanings from sender to receiver. LLMs exploit this assumption: they produce outputs that conform to the conduit illusion (fluent, contextually appropriate token sequences), leading users to attribute understanding where only statistical pattern completion obtains. The treachery lies in the mismatch between the appearance of meaning (the conduit form) and the absence of the interpretive grounding that would make it real.

Cross-modal iconic grounding: the bouba/kiki anchor. Saussure’s principle of arbitrariness – that no natural connection links signifier to signified – is foundational to modern linguistics. But it is also, taken without qualification, false. The bouba/kiki effect (Köhler, 1929; Ramachandran & Hubbard, 2001) demonstrates systematic, cross-culturally robust correspondences between auditory and visual features: rounded vowels and sonorant consonants map to rounded shapes; plosive consonants and front vowels map to angular shapes. This is not a quirk of English phonology: it has been documented in the Himba of Namibia (Bremner et al., 2013), in Tamil speakers with a non-Latin script (Ramachandran & Hubbard, 2001), and – decisively – in prelinguistic infants as young as four months (Ozturk et al., 2013), ruling out learned convention as the sole explanation.

The cross-species evidence is more decisive still. Versace et al. (2023) demonstrated bouba/kiki-like cross-modal correspondences in domestic chicks (Gallus gallus domesticus) within one day of hatching. Chicks have no language, no vocal tract adapted for speech, and no capacity for linguistic convention. They have beaks, not lips. The articulatory hypothesis – which ties the bouba/kiki effect to correspondences between mouth shape during vowel production and visual form (Ramachandran & Hubbard, 2001) – is inapplicable to an organism without the relevant articulatory apparatus. Yet the mapping appears. The last common ancestor of birds and mammals lived approximately 310 million years ago. Any mechanism shared between chicks and humans is not a recent mammalian innovation but a deeply conserved feature of vertebrate neurobiology.

Lancaster (2026b) argues that the mechanism is prenatal: both chick embryos and human fetuses develop auditory sensitivity during gestation in environments that function as low-pass acoustic filters. The eggshell and the uterus attenuate high-frequency sounds while transmitting low-frequency vibrations, creating an acoustic environment dominated by smooth, periodic waveforms (heartbeat, blood flow, respiration) in which sharp, high-frequency transients are anomalous deviations. This prenatal calibration establishes cross-modal associations – smooth sounds with smooth shapes, sharp sounds with angular shapes – through the basic multisensory integration architecture of the vertebrate midbrain (superior colliculus/optic tectum) before the organism encounters the external world. In Peircean terms, these correspondences are hypoicons (CP 2.276): signs that represent their objects through shared quality rather than convention. Their prenatal origin establishes that iconic semiosis is biologically prior to symbolic convention.
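What "low-pass acoustic filter" means in practice can be shown with a toy one-pole filter: a slow, smooth tone passes almost untouched while a fast one is strongly attenuated. The 500 Hz cutoff, the 16 kHz sample rate, and the two test tones are arbitrary illustrative choices, not measured properties of eggshell or uterine tissue.

```python
import math

def one_pole_lowpass(samples, cutoff_hz, sample_rate):
    """Discrete one-pole (RC-style) low-pass filter."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate
    alpha = dt / (rc + dt)
    out, y = [], 0.0
    for x in samples:
        y += alpha * (x - y)  # move the output a fraction of the way toward the input
        out.append(y)
    return out

def rms(values):
    """Root-mean-square amplitude."""
    return math.sqrt(sum(v * v for v in values) / len(values))

def passband_gain(tone_hz, cutoff_hz=500.0, sample_rate=16000, seconds=1.0):
    """Ratio of output RMS to input RMS for a pure sine tone."""
    n = int(sample_rate * seconds)
    tone = [math.sin(2.0 * math.pi * tone_hz * i / sample_rate) for i in range(n)]
    return rms(one_pole_lowpass(tone, cutoff_hz, sample_rate)) / rms(tone)

smooth_low = passband_gain(50.0)     # slow, heartbeat-like periodicity
sharp_high = passband_gain(4000.0)   # fast, transient-like content
```

In such an environment the low-frequency component dominates what gets through, so a high-frequency event that does arrive stands out as a deviation.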

For this paper’s purposes, the critical observation is that multimodal AI architectures already recover these correspondences. CLIP (Radford et al., 2021), trained on 400 million image-text pairs, learns joint representations in which bouba/kiki-like structure emerges from the statistical regularities of paired data. This convergence between biological development and statistical learning suggests that the bouba/kiki structure reflects genuine cross-modal regularities recoverable by any system with access to sufficient multimodal data. We propose to use these correspondences as attractor anchors – low-dimensional fixed points in the semiotic embedding space that resist the drift afflicting purely conventional representations. Where arbitrary symbols require community consensus for stability (and thus fracture under bifurcation), iconic anchors derive their stability from sensorimotor invariants that are prenatal, cross-species, and pre-linguistic – shared across vertebrate populations and recoverable from multimodal data distributions. They provide the ground floor that prevents the semiotic building from floating free.

This paper makes three contributions:

Theoretical integration. We synthesize Peircean semiotics (Peirce, 1931-1958; Kockelman, 2024, 2025), linguistic anthropology (Silverstein, 1993, 2003; Agha, 2003; Irvine & Gal, 2000), nonlinear dynamics (Lancaster, 2025; Schweighofer et al., 2020), anti-probabilist cognitive science (Mangalam, 2025), and cross-modal grounding research (Ramachandran & Hubbard, 2001; Radford et al., 2021) into a unified framework for understanding why current LLM training produces semiotically treacherous outputs. The framework identifies the generative mechanism – the unchecked inheritance and amplification of bifurcated interpretant chains from training data – and specifies the conditions under which architectural intervention can address it.

Architectural specification. We propose a concrete model architecture – the Semiotic-Reflexive Transformer (SRT) – comprising four novel components (Semiotic Embedding Layer, Metapragmatic Attention Heads, Reflexive Recurrent Module, Bifurcation Estimation Network) integrated with a standard transformer backbone. Each component is formally specified with dimensionalities, loss functions, and integration points. The architecture includes a multi-objective training pipeline covering four pre-training losses and four fine-tuning tasks, as well as inference-time modulation mechanisms with three operational modes.

Evaluation framework. We define three novel evaluation axes – bridging coherence, reflexivity fidelity, and bifurcation prediction – with corresponding metrics, human evaluation protocols, quantitative targets, benchmark datasets (the Semiotic Evaluation Corpus), and five explicit falsification criteria. The framework is designed so that negative results are informative: each falsification condition identifies which theoretical claim or architectural component has failed, enabling principled revision rather than wholesale abandonment.

Scope and limitations. The paper does not claim to solve alignment, eliminate polarization, or produce artificial general intelligence. It does not present trained model weights or experimental results; it presents a theoretical framework and architectural specification at the level of detail required for implementation and empirical testing. Its scope is deliberately circumscribed: to demonstrate that the semiotic structure of language – the interpretant chains, indexical orders, attractor dynamics, and iconic grounding regularities that constitute meaning – can be made an explicit object of model training, with measurable consequences for the quality and social impact of generated text. The gap between specification and validation is acknowledged as a limitation and the primary direction for future work.

The paper proceeds as follows. Section 2 develops the four theoretical pillars – Peircean semiotics, nonlinear bifurcation dynamics, anti-Bayesian cognitive science, and embodied cross-modal grounding – showing how each addresses a specific deficiency of standard LLM training. Within the cross-modal grounding pillar, dedicated subsections present the cross-species evidence that falsifies the articulatory hypothesis (Section 2.4.3) and the prenatal mechanism by which embryonic acoustic environments calibrate iconic correspondences prior to external sensory experience (Section 2.4.4). Section 3 reviews related work in LLM alignment, debiasing, computational polarization, multimodal learning, and computational semiotics, positioning the SRT relative to existing approaches. Section 4 specifies the Semiotic-Reflexive Transformer architecture in full formal detail, with motivation, equations, and integration points for each component. Section 5 details the training pipeline: the Semiotic Annotation Schema, corpus composition, pre-training objectives with loss functions, fine-tuning tasks, optimization strategy, and inference-time modulation modes. Section 6 presents the evaluation framework with three axes, the Semiotic Evaluation Corpus benchmark suite, quantitative targets, and falsification criteria. Section 7 discusses theoretical implications, limitations, and five future research directions. Section 8 concludes.

This section develops the four theoretical pillars that motivate and constrain the proposed architecture. Each pillar addresses a specific deficiency of standard LLM training; together, they define the design space for semiotic-reflexive modeling. We proceed from the most general theoretical framework (Peircean semiotics) through its formal dynamical instantiation (bifurcation theory), the cognitive-scientific critique that motivates departing from the Bayesian paradigm (chaotic self-organization), and the embodied grounding mechanism that anchors the resulting system against unconstrained drift (cross-modal iconic correspondence).

Across thousands of pages of published and unpublished work (compiled in the Collected Papers, 1931-1958, and more completely in the Writings of Charles S. Peirce, 1982-present), Charles Sanders Peirce (1839-1914) developed a comprehensive theory of signs he termed “semeiotic.” The framework’s central innovation is its irreducibly triadic structure: every sign process involves a representamen (the perceptible sign vehicle), an object (that which the sign represents), and an interpretant (the effect the sign produces, which is itself a sign). The interpretant is the decisive element. Unlike Saussure’s (1916) dyadic model, which treats meaning as a closed relation between signifier and signified within an abstract system (langue), Peirce’s inclusion of the interpretant makes signification an open, processual, and inherently social phenomenon: each interpretant can function as a new representamen, generating further interpretants in chains of “unlimited semiosis” (Peirce, CP 2.303) that never arrive at a final, unmediated meaning.

Peirce further distinguished the immediate object (the object as represented within the sign itself) from the dynamic object (the object as it actually is, independent of any particular representation). This distinction proves essential for understanding AI-mediated meaning: LLMs generate signs whose immediate objects are coherent (the text presents a consistent referential world) but whose dynamic objects may be nonexistent (hallucinated entities), misrepresented (biased framings), or systematically underdetermined (contested political referents). The gap between immediate and dynamic object is the site of semiotic treachery.

Peirce also developed an elaborate taxonomy of sign types based on the representamen-object relationship. Three are foundational:

Icons signify through resemblance: photographs, diagrams, onomatopoeia. Icons are vulnerable to selective resemblance – they highlight certain features while omitting others, enabling divergent interpretations of what resemblance entails. A photograph of a protest iconically represents the event but selects framing, angle, and moment in ways that can activate opposed interpretants.

Indices signify through existential or causal connection: smoke indicating fire, a fever indicating infection, a speaker’s accent indicating regional origin. Indices are vulnerable to contested causation – disagreement about what connections obtain and what they imply. The same correlation between a policy and an outcome may index effectiveness to one community and corruption to another.

Symbols signify through convention: most words, mathematical notation, flags. Symbols are vulnerable to conventional drift – the associations constituting them can diverge across communities and shift over time, precisely because nothing natural tethers them to their objects.

Political communication characteristically combines all three types simultaneously. The phrase “defund the police” is symbolic (its meaning is conventionally established), indexical (its use indexes political alignment and community membership), and iconic (its syntactic structure – imperative verb + object – iconically suggests a concrete action that may not match the policy referent). Each dimension presents distinct opportunities for interpretive divergence, and each requires distinct representational machinery in a model that aspires to navigate them.

For computational modeling, the triadic structure has a crucial implication: meaning is not a property of tokens but a relation among tokens, referents, and interpretive effects that unfolds across chains of processing. A standard LLM embedding – a vector optimized for next-token prediction – conflates all three Peircean dimensions into a single point in distributional space. It captures the representamen (what tokens tend to co-occur), encodes aspects of the immediate object (topical associations), and implicitly reflects aggregate interpretant patterns (the statistical shadow of how communities have responded to the sign). But it cannot disentangle these dimensions, contrast the interpretants produced in different communities, or represent the gap between immediate and dynamic object. The Semiotic Embedding Layer (Section 4.2) is designed to perform precisely this disentanglement.

Kockelman (2025) formalizes interpretant chains as dynamical trajectories through a state space defined by the sign-object-interpretant triad. The key insight, building on Kockelman’s earlier work in Last Words (2024), is that each link in an interpretant chain involves an act of sieving: from the space of possible interpretants a sign could produce, only some are actualized, depending on the interpreter’s prior sign exposure, community membership, semiotic ideologies, and the mediation architecture that delivered the sign. The actualized interpretant becomes the next representamen, entering the sieve of the next interpreter. When the same representamen enters different interpretive communities – communities whose sieving mechanisms have been calibrated by exposure to different algorithmically curated sign environments – it generates different initial interpretants. These interpretants function as new representamena generating further divergent interpretants.

The result is exponential compounding of interpretive distance through successive chain links. Consider a concrete example. The representamen “critical race theory” enters Community A, where it generates an interpretant linking to academic legal scholarship (Bell, 1980; Crenshaw, 1989). That interpretant becomes a representamen activating further associations: structural analysis, institutional reform, historical reckoning. The same representamen also enters Community B, where the initial interpretant links to K-12 curriculum, indoctrination, anti-white racism. That interpretant generates further associations: parental rights, culture war, censorship of patriotic narrative. By the third link, the two chains inhabit different conceptual universes, and the original representamen – three shared words – indexes not merely different opinions but different ontologies. The sign has become what Lancaster (2025) calls a “bifurcation site”: a point where the semiotic landscape has split into antagonistic basins.
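The chaining step can be made concrete with a toy sketch. The code below is only an illustration of the sieve-then-feed-forward mechanic, not Kockelman's or Lancaster's formalism: each community's "sieve" is a hypothetical lookup table (`SIEVE_A`, `SIEVE_B`) filled with the associations named in the example, and `overlap` is a plain Jaccard measure chosen here for simplicity.

```python
def unfold(sieve, sign, links):
    """Follow one community's interpretant chain for `links` steps.

    Each actualized interpretant becomes the next representamen.
    """
    frontier, chain = {sign}, [{sign}]
    for _ in range(links):
        frontier = {nxt for s in frontier for nxt in sieve.get(s, [])}
        chain.append(frontier)
    return chain


def overlap(a, b):
    """Jaccard overlap between two sets of interpretants (1.0 = identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Hypothetical sieves, populated from the example in the text.
SIEVE_A = {
    "critical race theory": ["academic legal scholarship"],
    "academic legal scholarship": [
        "structural analysis", "institutional reform", "historical reckoning",
    ],
}
SIEVE_B = {
    "critical race theory": ["K-12 curriculum, indoctrination, anti-white racism"],
    "K-12 curriculum, indoctrination, anti-white racism": [
        "parental rights", "culture war", "censorship of patriotic narrative",
    ],
}

sign = "critical race theory"
chain_a = unfold(SIEVE_A, sign, links=2)
chain_b = unfold(SIEVE_B, sign, links=2)
overlaps = [overlap(a, b) for a, b in zip(chain_a, chain_b)]
print(overlaps)  # shared sign at link 0, no shared interpretants afterwards
```

The two chains start from an identical sign and share nothing from the first link onward, which is the qualitative point of the example; a graded distance measure would be needed to show the compounding itself.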

This compounding is not random. It is structured by three mechanisms identified in linguistic anthropology:

Indexicality (Silverstein, 2003): Signs point to and create social contexts through structured “indexical orders.” First-order indexicals directly indicate contexts (“here,” “now”); second-order indexicals presuppose and construct social relationships, identities, and ideologies. Political discourse operates predominantly through higher-order indexicality, where word choices index social types that carry ideological freight. The phrase “undocumented immigrant” indexes a progressive characterological stance; “illegal alien” indexes a conservative one. The indexical meaning – the social-identity signal – may dominate the referential meaning for recipients attuned to it. When these indexical associations diverge across communities, the same utterance indexes opposed social identities, triggering evolved mechanisms of in-group solidarity and out-group suspicion (Lancaster, 2025, Section 1.3.5). Crucially, this indexical divergence is invisible to standard embedding spaces: both phrases co-occur with immigration-related tokens, and a distributional model will represent them as near-neighbors in semantic space, obscuring the indexical chasm between them.

Enregisterment (Agha, 2003): The social processes through which linguistic forms become associated with characterological figures – typified personas with associated values, stances, and social positions. Enregisterment is diachronic: it accumulates through repeated usage in community-specific contexts, reinforced by metadiscursive commentary (“when they say X, what they really mean is Y”). Political keywords undergo divergent enregisterment at accelerating rates in digital environments (Lancaster, 2025, Section 1.3.2): a term can acquire thick, community-specific characterological associations within weeks rather than the decades or centuries documented by historical linguistics. The term “woke” illustrates the phenomenon: originally enregistered within Black American discourse to index critical racial awareness, it underwent rapid re-enregisterment within conservative discourse to index performative social policing. By 2025, the same four-letter word activates fundamentally incompatible characterological figures depending on the interpreter’s community membership. For an LLM trained on corpora containing both enregisterments, the token “woke” sits in an embedding space that averages over both – a location that represents neither community’s actual usage.

Semiotic ideology (Irvine & Gal, 2000; Keane, 2003): Culturally specific assumptions about how signs relate to reality – what signs can represent, what interpretive processes are legitimate, what counts as evidence. Irvine and Gal identify three processes through which these ideologies operate: iconization (a linguistic feature associated with a group comes to be seen as depicting the group’s inherent nature), fractal recursivity (an opposition salient at one level is projected onto other levels, so that urban/rural maps onto educated/uneducated, elite/authentic), and erasure (facts inconsistent with the ideological representation are rendered invisible). When communities operate with incompatible semiotic ideologies, even shared commitment to “following the evidence” or “listening to experts” produces divergent conclusions, because the processes of evidential reasoning – what counts as a valid source, what constitutes a legitimate inference, what methodological commitments are transparent versus suspect – are themselves semiotically mediated. This is not epistemic failure; it is the normal operation of semiotic systems under conditions of ideological divergence.
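The averaging problem raised under enregisterment above can be illustrated numerically. This is a minimal sketch with made-up three-dimensional vectors (`usage_a`, `usage_b` are hypothetical, not taken from any real model): when one token is used in two nearly orthogonal ways, a single pooled vector ends up equally distant from both.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical community-specific usage vectors for the same token.
usage_a = [1.0, 0.0, 0.2]
usage_b = [0.0, 1.0, 0.2]

# A single embedding trained on both corpora behaves roughly like their mean.
pooled = [(a + b) / 2 for a, b in zip(usage_a, usage_b)]

print(round(cosine(usage_a, usage_b), 2))  # the two usages barely overlap
print(round(cosine(pooled, usage_a), 2))   # the pooled vector matches neither
print(round(cosine(pooled, usage_b), 2))
```

With these toy numbers the pooled vector has a cosine similarity of roughly 0.72 to each usage: close enough to look related, far enough that it reproduces neither.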

Silverstein’s (1993) work on metapragmatic function identifies a capacity that is absent from current language models and central to this paper’s proposal: metapragmatic awareness – reflexive consciousness about how signs function, that one is interpreting, that interpretations are constructed, and that alternative interpretations exist.

Silverstein distinguishes three orders of indexical awareness, each building on the one below:

First-order (Perception): The basic presupposing link between a sign and its context. A speaker uses “here” and the listener identifies the location. A reader encounters “freedom” and retrieves a referential meaning. This is the level at which standard LLMs operate: they predict the next token based on contextual co-occurrence, which is functionally equivalent to first-order indexical processing.

Second-order (Cognition): Ideological construals of first-order links, regimented by social values. The interpreter recognizes not just what a sign refers to but what social type, stance, or identity it indexes. Using “freedom” in a particular syntactic frame with particular collocates signals membership in a political community. Second-order awareness involves recognizing that signs carry social valence – that language does social work beyond reference. Current LLMs capture second-order patterns implicitly (they can generate text that sounds conservative or progressive) but cannot represent the social-indexical structure that makes it so. The distinction between conservative and progressive registers exists in the model only as distributional clusters, not as identified social-indexical configurations.

Third-order (Reflection): Metapragmatic awareness of how discourse shapes interpretation itself. This is the capacity to observe one’s own interpretive frameworks as frameworks rather than as transparent access to reality – to notice that the word “radical” triggers alarm in oneself and to recognize that alarm as an effect of enregisterment within one’s community rather than an objective property of the sign. Third-order awareness does not dissolve interpretive commitments; it places them in a wider field of awareness. It is the capacity to inhabit an attractor basin while recognizing it as an attractor basin – to see the landscape from above rather than only from within.

This third-order capacity is what enables navigation of semiotic divergence without collapse into either relativism (“all interpretations are equally valid”) or dogmatism (“my interpretation is the only valid one”). Empirical evidence supports its efficacy: Pennycook et al. (2021) found that accuracy prompts – which activate a form of metapragmatic attention – reduced sharing of misinformation across partisan lines. Voelkel et al. (2022) documented that perspective-taking interventions produce modest but significant reductions in affective polarization. These findings suggest that activating reflexive awareness of one’s own interpretive processes changes the character of disagreement from identity threat to navigable difference.

Current LLMs possess no architectural analog of third-order metapragmatic capacity. They generate text from within the statistical landscape of their training data without any mechanism for observing that landscape’s structure, measuring its divergence across contexts, or adjusting their generative strategy based on the dynamical state of the semiotic environment they are participating in. The Reflexive Recurrent Module (Section 4.4) is designed to instantiate precisely this meta-observational capability as a differentiable computation: a GRU that processes divergence signals from the Metapragmatic Attention Heads and maintains a running state representing the model’s observation of its own interpretive dynamics.
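To give a sense of what "a GRU that maintains a running state" means computationally, here is a minimal sketch of a standard GRU update applied to a stream of scalar divergence signals. It is not the Reflexive Recurrent Module itself, whose specification lives in Section 4.4; the class name `TinyGRU`, the hidden size, and the random untrained weights are all assumptions made for illustration.

```python
import math
import random


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


class TinyGRU:
    """Scalar-input GRU cell with `hidden` units (illustrative, untrained)."""

    def __init__(self, hidden=4, seed=0):
        rng = random.Random(seed)

        def vec():
            return [rng.uniform(-0.5, 0.5) for _ in range(hidden)]

        def mat():
            return [vec() for _ in range(hidden)]

        self.hidden = hidden
        # One (input weights, recurrent weights, bias) triple per gate.
        self.Wz, self.Uz, self.bz = vec(), mat(), vec()  # update gate
        self.Wr, self.Ur, self.br = vec(), mat(), vec()  # reset gate
        self.Wh, self.Uh, self.bh = vec(), mat(), vec()  # candidate state

    def step(self, h, d):
        """Return the new state given old state `h` and one signal `d`."""
        n = range(self.hidden)

        def affine(W, U, b, state, i):
            return W[i] * d + sum(U[i][j] * state[j] for j in n) + b[i]

        z = [sigmoid(affine(self.Wz, self.Uz, self.bz, h, i)) for i in n]
        r = [sigmoid(affine(self.Wr, self.Ur, self.br, h, i)) for i in n]
        gated = [r[j] * h[j] for j in n]
        cand = [math.tanh(affine(self.Wh, self.Uh, self.bh, gated, i)) for i in n]
        # Blend old state and candidate, weighted by the update gate.
        return [(1 - z[i]) * h[i] + z[i] * cand[i] for i in n]


def run(signals, hidden=4, seed=0):
    """Feed a sequence of divergence signals through the cell."""
    cell = TinyGRU(hidden, seed)
    h = [0.0] * hidden
    for d in signals:
        h = cell.step(h, d)
    return h


state = run([0.1, 0.4, 0.9])  # hypothetical rising divergence readings
print(state)
```

The point of the recurrence is that the final state depends on the order and history of the signals, not only on the latest reading, which is what lets such a module track a trend rather than a snapshot.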

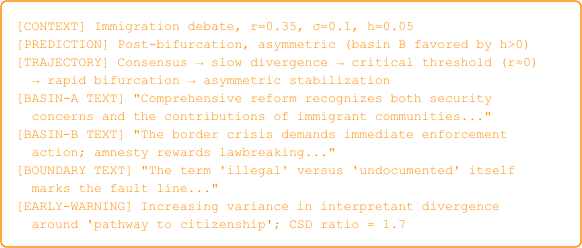

Lancaster (2025) demonstrates, through an integration of Peircean semiotics with dynamical systems theory, that political polarization in algorithmically curated societies exhibits the structure of a supercritical pitchfork bifurcation. The argument proceeds in two stages: first, that interpretant chain divergence constitutes a symmetry-breaking process amenable to formal modeling; and second, that the pitchfork bifurcation’s normal form captures the specific qualitative features observed in empirical polarization data.

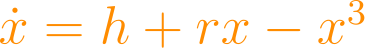

The normal form is:

$$\dot{x} = \mu x - x^{3}$$

where $x$ is the order parameter representing deviation from interpretive consensus – concretely, the degree to which the aggregate interpretant distribution for a contested sign (e.g., “freedom,” “justice,” “vaccine”) has diverged from a population-level mean – and $\mu$ is the control parameter representing the strength of algorithmic amplification: the degree to which mediation environments surface and reinforce content aligned with emerging interpretive paths.

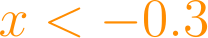

The system’s behavior depends critically on the control parameter $\mu$:

For $\mu < 0$: The origin $x = 0$ is the unique stable equilibrium. The linearization $\dot{x} = \mu x$ yields eigenvalue $\lambda = \mu < 0$, so perturbations decay exponentially at rate $|\mu|$. Shared interpretive frameworks absorb divergence. A community encountering a contested sign may produce varied interpretants, but the variation regresses toward consensus.

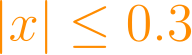

At $\mu = 0$: The critical point. The linearization has eigenvalue zero; the system undergoes a qualitative change in its equilibrium structure. Critical slowing down occurs: perturbations decay algebraically ($\dot{x} = -x^{3}$, yielding $x(t) \sim t^{-1/2}$) rather than exponentially, meaning the system becomes increasingly sensitive to small pushes – a formal analog of the observation that societies near the bifurcation threshold can be tipped by relatively minor events (a viral tweet, a contested video, a shock news cycle).
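The algebraic decay at the critical point follows from a one-line integration. The derivation below assumes the standard supercritical normal form $\dot{x} = \mu x - x^{3}$ with initial condition $x(0) = x_{0}$; the symbols are conventional choices rather than notation confirmed from the cited source.

```latex
\begin{aligned}
\dot{x} = -x^{3}
  \quad&\Longrightarrow\quad
  -\frac{dx}{x^{3}} = dt \\[4pt]
\frac{1}{2x^{2}} - \frac{1}{2x_{0}^{2}} = t
  \quad&\Longrightarrow\quad
  x(t) = \frac{x_{0}}{\sqrt{1 + 2x_{0}^{2}\,t}}
  \;\sim\; (2t)^{-1/2}
  \quad (t \to \infty)
\end{aligned}
```

For comparison, below the critical point the linearized solution is $x(t) \approx x_{0}\,e^{\mu t}$ with $\mu < 0$, so the recovery time scales as $1/|\mu|$ and grows without bound as $\mu \to 0^{-}$.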

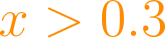

For $\mu > 0$: The origin becomes unstable (linearization eigenvalue $\lambda = \mu > 0$). Two new stable equilibria emerge at $x^{*} = \pm\sqrt{\mu}$, each with basin of attraction covering half the axis. The system splits into opposed interpretive communities that are individually stable but collectively divided. The cubic term $-x^{3}$ provides saturation: the separation is finite (bounded by $\pm\sqrt{\mu}$) but self-reinforcing within each well.
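The regimes on either side of the critical point can be checked numerically. The sketch below assumes the standard supercritical normal form $\dot{x} = \mu x - x^{3}$ and uses plain forward-Euler integration; the function name `integrate`, the step size, and the initial conditions are illustrative choices.

```python
import math


def integrate(mu, x0, dt=0.01, steps=20_000):
    """Forward-Euler integration of dx/dt = mu*x - x**3; returns the final x."""
    x = x0
    for _ in range(steps):
        x += dt * (mu * x - x**3)
    return x


# Below threshold: a sizeable perturbation decays back to consensus (x = 0).
below = integrate(mu=-1.0, x0=0.5)

# Above threshold: tiny perturbations of opposite sign end on opposite tines.
upper = integrate(mu=1.0, x0=+0.01)
lower = integrate(mu=1.0, x0=-0.01)

# The tine position scales as sqrt(mu).
quarter = integrate(mu=0.25, x0=0.01)

print(below, upper, lower, quarter, math.sqrt(0.25))
```

Which tine the system lands on is decided entirely by the sign of an arbitrarily small initial deviation, which is the sense in which the symmetric model is indifferent between the two communities.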

This mathematical structure captures several empirically observed features of polarization:

Threshold character: Polarization is not a steady creep but a phase transition. Below critical amplification, shared meaning holds; above it, meaning fractures suddenly. This is consistent with the observation (documented by Bail et al., 2018; Boxell et al., 2017) that attitudinal divergence accelerated nonlinearly with the rise of personalized feeds rather than increasing at a constant rate proportional to internet adoption.

Symmetry breaking: A single interpretive community becomes two opposed communities. The pitchfork’s two tines are symmetric in the normal form, but Lancaster (2025, Appendix B.3) introduces an asymmetric extension

$$\dot{x} = \mu x - x^{3} + \epsilon$$

where the imperfection parameter $\epsilon$ captures pre-existing structural asymmetries (e.g., differential institutional trust, historical grievance). When $\epsilon \neq 0$, one tine is reached preferentially, producing the asymmetric polarization observed empirically (greater movement in one direction than the other).
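The effect of the imperfection term can be seen by starting the system exactly at consensus. This sketch assumes the asymmetric form is the normal form plus a constant bias, $\dot{x} = \mu x - x^{3} + \epsilon$; the name `settle` and the values of $\mu$ and $\epsilon$ are illustrative.

```python
def settle(mu, eps, x0=0.0, dt=0.01, steps=20_000):
    """Euler-integrate dx/dt = mu*x - x**3 + eps and return the final state."""
    x = x0
    for _ in range(steps):
        x += dt * (mu * x - x**3 + eps)
    return x


balanced = settle(mu=1.0, eps=0.0)        # no bias: stays on the unstable origin
tilted_up = settle(mu=1.0, eps=0.05)      # small positive bias picks the upper tine
tilted_down = settle(mu=1.0, eps=-0.05)   # small negative bias picks the lower tine

print(balanced, tilted_up, tilted_down)
```

With no bias, a system that starts exactly at the origin never leaves it; with even a small bias the choice of tine is no longer left to chance, and the favored equilibrium sits slightly farther from the origin than $\sqrt{\mu}$.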

Hysteresis: Once bifurcated, the system does not return to consensus merely by reducing $\mu$ below the critical value. The subcritical pitchfork extension

$$\dot{x} = \mu x + x^{3} - x^{5}$$

exhibits explicit hysteresis: the bifurcated state persists even as the control parameter retreats below the forward transition threshold. This explains why “turning down the algorithm” does not immediately restore shared meaning; once interpretant chains have entrenched in antagonistic basins, the basins themselves resist dissolution.
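Hysteresis can be demonstrated with a slow sweep of the control parameter up and then back down. The sketch assumes the textbook subcritical form with a stabilizing quintic term, $\dot{x} = \mu x + x^{3} - x^{5}$, which may differ in detail from the extension in the cited appendix; `relax`, `sweep`, and the small `kick` that stands in for noise are illustrative.

```python
def relax(mu, x, dt=0.01, steps=5_000):
    """Let the state settle at fixed mu under dx/dt = mu*x + x**3 - x**5."""
    for _ in range(steps):
        x += dt * (mu * x + x**3 - x**5)
    return x


def sweep(mus, x=0.0, kick=1e-3):
    """Step through the values in `mus`, carrying the state forward.

    A small positive kick before each relaxation stands in for noise.
    """
    states = []
    for mu in mus:
        x = relax(mu, x + kick)
        states.append(x)
    return states


up = [round(-0.4 + 0.05 * i, 2) for i in range(13)]  # -0.4, -0.35, ..., 0.2
down = list(reversed(up))

forward = sweep(up)                      # amplification turned up
backward = sweep(down, x=forward[-1])    # then turned back down

i = up.index(-0.1)
j = down.index(-0.1)
print(forward[i], backward[j])  # same mu, different states
```

At the same value $\mu = -0.1$ the system sits near consensus on the way up and remains on the polarized branch on the way down; in this form the polarized branch only disappears once $\mu$ falls below $-1/4$.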

Self-reinforcement: Each attractor basin generates interpretants that reinforce the basin, deepening the well and increasing the perturbation required to escape. This is the semiotic analog of positive feedback: within-basin interpretants are rewarded by social validation (likes, shares, group solidarity), which produces further interpretants aligned with the basin, which attracts further algorithmic amplification. The effective $\mu$ experienced within a basin is higher than the platform-level $\mu$, because the community’s own semiotic activity contributes to the amplification.

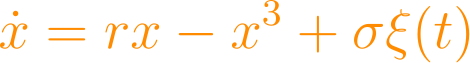

Real semiotic systems are noisy. Lancaster (2025, Appendix B.4) extends the deterministic model with additive noise:

$$\dot{x} = \mu x - x^{3} + \sigma\,\xi(t)$$

where $\xi(t)$ is white noise with intensity $\sigma$. The stochastic version yields two phenomena relevant to AI design:

Critical slowing down as an early warning signal. As $\mu$ approaches the critical value from below, the system’s recovery time from perturbation increases. The autocorrelation of $x$ increases; the variance of fluctuations increases. These are detectable statistical signatures that the system is approaching bifurcation (Scheffer et al., 2009). A model trained to recognize these signatures could estimate proximity to the critical threshold from observed semiotic dynamics – precisely the function of the Bifurcation Estimation Network (Section 4.5).

Noise-induced transitions. Below but near the bifurcation threshold, noise can cause transient excursions into what would be a stable attractor basin above threshold. These transient excursions correspond to sporadic radicalization events: individuals or subgroups temporarily adopting extreme interpretive frameworks before returning to consensus. A model aware of the stochastic landscape can distinguish noise-induced transients from genuine bifurcation.
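The early warning signatures can be reproduced in a few lines. This is a minimal sketch, assuming the noisy normal form $\dot{x} = \mu x - x^{3} + \sigma\,\xi(t)$ integrated with the Euler–Maruyama scheme; the noise level, step size, sampling interval, and seed are arbitrary illustrative choices, and the code is not the Bifurcation Estimation Network.

```python
import math
import random


def simulate(mu, sigma=0.05, dt=0.01, steps=200_000, every=100, seed=1):
    """Euler-Maruyama run of the noisy normal form; keeps every `every`-th state."""
    rng = random.Random(seed)
    x, samples = 0.0, []
    for i in range(steps):
        x += dt * (mu * x - x**3) + sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        if i % every == 0:
            samples.append(x)
    return samples


def variance(xs):
    m = sum(xs) / len(xs)
    return sum((v - m) ** 2 for v in xs) / len(xs)


def lag1_autocorr(xs):
    """Correlation between consecutive samples."""
    m = sum(xs) / len(xs)
    num = sum((a - m) * (b - m) for a, b in zip(xs, xs[1:]))
    den = sum((v - m) ** 2 for v in xs)
    return num / den


far = simulate(mu=-1.0)    # well below the threshold
near = simulate(mu=-0.1)   # close to the threshold, still below it

print("variance:", variance(far), variance(near))
print("lag-1 autocorrelation:", lag1_autocorr(far), lag1_autocorr(near))
```

Both statistics rise as $\mu$ approaches zero from below even though the system has not yet bifurcated, which is what makes them usable as a warning rather than a diagnosis after the fact.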

Standard LLM training on web corpora amounts to training on the output of a system that has already bifurcated. The model learns the statistical regularities of text produced within antagonistic attractor basins without representing the dynamical process that generated the bifurcation. It can reproduce text characteristic of either basin – indeed, it can switch between basins with a prompt – but it cannot model the relationship between basins, the bifurcation threshold, the control parameter that governs the transition, or the early warning signatures that indicate approaching criticality.

This is the fundamental inadequacy that semiotic-reflexive training addresses. The target of learning is not the content of attractor basins (what text sounds like within each community) but the dynamics of the attractor landscape: its topology, its bifurcation structure, the parameters that govern transitions between configurations, and the stochastic signatures that signal proximity to critical transitions. A model that learns these dynamics can do what current models cannot: estimate whether its own generative activity is pushing the semiotic system toward or away from bifurcation, and adjust accordingly.

Mangalam (2025) argues that the dominant metaphor in computational cognitive science – the Bayesian brain, which frames cognition as prior-likelihood-posterior updating over probability distributions – is empirically inadequate. The argument proceeds on three fronts.

First, temporal structure: Bayesian updating assumes that evidence arrives in discrete packets to be integrated with a prior. But neural dynamics are continuous, multiscale, and characterized by long-range temporal correlations (1/f noise, power-law scaling) that are inconsistent with the memoryless updating of ideal Bayesian observers. Motor control, perception, and language processing exhibit variability patterns that reflect deterministic chaos rather than stochastic sampling from posterior distributions.

Second, representational format: The Bayesian framework requires that the brain maintain and compute over explicit probability distributions. While neural populations can be interpreted as coding distributions (Pouget et al., 2013), the claim that this is what they are doing – rather than an observer’s post-hoc statistical description of their activity – is underdetermined by the evidence. The same population activity is equally consistent with dynamical systems interpretations in which distributions are epiphenomenal rather than causal.

Third, generality: Bayesian models achieve empirical adequacy only with task-specific priors and likelihoods engineered by researchers. When applied to the kind of open-ended, context-sensitive, culturally mediated meaning-making that constitutes natural language use, the Bayesian framework either becomes vacuous (any outcome can be accommodated by suitable choice of prior) or empirically refuted (specific predictions fail). Meaningfulness is not a matter of statistical confidence but of resonance within a dynamical landscape shaped by embodied interaction with a physical and social world.

The critique has direct implications for language model design. Standard autoregressive training optimizes a model to approximate the conditional distribution $p(w_{t} \mid w_{<t})$ – operationally a Bayesian objective: the model learns to assign probability mass to tokens in proportion to their frequency in context. If cognition is not fundamentally Bayesian, then this objective captures the statistical shadow of meaning rather than its substance. Models optimized for distributional correctness produce outputs that are fluent and contextually appropriate at the token level but semantically ungrounded at the interpretive level: they inherit the co-occurrence structure of meaningful text without instantiating the dynamical processes that generate meaningfulness.
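For reference, the training objective under critique is usually written as a summed negative log-likelihood over a token sequence. The notation here ($w_{t}$ for the token at position $t$, $T$ for sequence length, $\theta$ for model parameters) is assumed for illustration.

```latex
\mathcal{L}(\theta) \;=\; -\sum_{t=1}^{T} \log p_{\theta}\!\left(w_{t} \mid w_{<t}\right)
```

Minimizing this quantity rewards matching the empirical next-token distribution and nothing else; no term in it refers to interpretive communities, basins, or the dynamics that produced the text.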

An alternative framework comes from dynamic field theory (DFT; Schöner & Spencer, 2015), which models cognitive processes as trajectories through continuous state spaces shaped by attractor landscapes. DFT has been applied to motor planning, spatial cognition, visual search, and word learning, achieving quantitative fits to developmental and behavioral data that rival or exceed Bayesian models while offering a fundamentally different account of what cognition is.

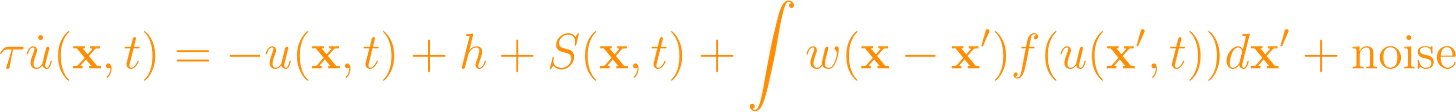

In the DFT framework, a cognitive state is represented as an activation field $u(z, t)$ over a feature space $z$, governed by dynamics of the general form:

$$\tau\,\dot{u}(z, t) = -u(z, t) + h + s(z, t) + \int k(z - z')\,g\big(u(z', t)\big)\,dz'$$

where $\tau$ is a time constant, $h$ is a resting level, $s(z, t)$ is external input, $k$ is an interaction kernel (typically Mexican-hat: local excitation, surround inhibition), and $g$ is a sigmoidal output function. The interaction kernel is critical: it creates attractor landscapes through lateral dynamics. Peaks of activation self-stabilize through local excitation; competing peaks are suppressed through surround inhibition; the landscape configuration determines what interpretive states are accessible.
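A discretized version of such a field is easy to simulate. The sketch below assumes the standard Amari-style field equation with a difference-of-Gaussians (Mexican-hat) kernel on a one-dimensional grid; every numerical value (grid size, kernel widths and strengths, input amplitude, sigmoid slope) is an illustrative assumption rather than a parameter from the DFT literature.

```python
import math


def simulate_field(n=61, steps=300, dt=1.0, tau=10.0, h=-2.0):
    """Euler-integrate a 1-D activation field driven by one localized input."""
    zs = [i - n // 2 for i in range(n)]  # feature axis, unit spacing

    def g(u):
        """Sigmoidal output function."""
        return 1.0 / (1.0 + math.exp(-4.0 * u))

    def kernel(d):
        """Mexican hat: narrow excitation minus broad inhibition."""
        excite = 2.0 * math.exp(-d * d / (2 * 2.0**2))
        inhibit = 1.0 * math.exp(-d * d / (2 * 6.0**2))
        return excite - inhibit

    k = [[kernel(zi - zj) for zj in zs] for zi in zs]
    s = [4.0 * math.exp(-z * z / (2 * 2.0**2)) for z in zs]  # input centred at z = 0

    u = [h] * n  # start at the resting level everywhere
    for _ in range(steps):
        out = [g(v) for v in u]
        u = [
            u[i] + dt / tau * (-u[i] + h + s[i]
                               + sum(k[i][j] * out[j] for j in range(n)))
            for i in range(n)
        ]
    return zs, u


zs, u = simulate_field()
center = len(u) // 2
print("peak:", u[center], "flank:", u[center + 6], "far field:", u[-1])
```

The field settles into a single supra-threshold peak at the input location, the flanks are pushed below the resting level by surround inhibition, and distant sites stay at rest: the three ingredients the paragraph above attributes to the interaction kernel.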

Mapping this to the semiotic domain:

Meaning is an emergent property of a trajectory’s convergence into an attractor basin – not a stored representation retrieved by key-matching but a dynamical process of settling into a self-sustaining activation pattern. The same sign can produce different meanings (different stable peaks) depending on the prior state of the field, the configuration of the landscape, and the noise present at the moment of encounter.

Understanding corresponds to deep attractor basins with strong convergence properties: basins that absorb perturbation and resist drift. Deep understanding corresponds to a landscape in which the meaning-basin is wide, steep-walled, and connected to neighboring basins through identifiable transition paths.

Confusion corresponds to shallow basins, chaotic regions, or landscapes with multiple competing attractors of similar depth, where trajectories fail to settle or oscillate between alternatives.

Learning corresponds to the reshaping of the attractor landscape through experience. Repeated encounters with a sign in a consistent context deepen its associated basin. Encounters across divergent contexts can widen a basin (developing flexible understanding), create a new basin (learning a new sense), or – critically – split a basin into two (the semiotic analog of bifurcation).

For LLM training, this framework suggests that the target representation is not a probability distribution over tokens but an attractor landscape over interpretive trajectories. The model should learn basins (what stable interpretive states exist), their depths (how robust they are to perturbation), their boundaries (where one interpretive community’s basin gives way to another’s), and the bifurcation parameters that govern transitions between landscape configurations. This is what the attractor embedding component of the Semiotic Embedding Layer is designed to represent: each token’s position in a learned attractor landscape, not merely its position in distributional space.

The DFT framework connects naturally to the pitchfork bifurcation model of Section 2.2. The Mexican-hat interaction kernel in the field equation can be parameterized so that increasing the strength of long-range inhibition (analogous to increasing cross-community antagonism) drives the field through a symmetry-breaking transition: a single peak (consensus) becomes two peaks (polarized interpretation). This is exactly the pitchfork bifurcation, now grounded in a field-theoretic cognitive model rather than an abstract dynamical equation.

The connection provides theoretical depth: the pitchfork is not merely a convenient analogy borrowed from physics but emerges from a cognitive-dynamical model of how interpretive landscapes form and fracture. It also provides architectural guidance: the interaction kernel’s parameters (strength, width, balance between excitation and inhibition) map onto learnable parameters in the model’s attractor embedding space, giving the Bifurcation Estimation Network (Section 4.5) a concrete cognitive-dynamical interpretation.

Saussure (1916) established the principle of arbitrariness: no natural connection links signifier to signified. The sound-form /tri:/ (“tree”) bears no resemblance to the woody plant it designates; different languages use entirely different sound-forms for the same referent. This arbitrariness is the condition of possibility for linguistic diversity and creativity – but it is also the condition of possibility for semiotic drift. The same sign can mean different things in different communities precisely because nothing anchors it to a fixed referent. Arbitrariness is what makes the pitchfork possible: if signs were naturally tethered to their objects, interpretant chains could not diverge.

If all sign-object relations were arbitrary, semiotic drift would be unconstrained, and any attempt to ground model representations would face an infinite regress of conventions interpreting conventions. The semiotic landscape would be a flat plane with no privileged points – all basins equally shallow, all equilibria equally fragile. But Saussure’s own principle, taken without qualification, overstates the case. Not all sign-object relations are arbitrary, and the exceptions are not marginal – they are structurally significant.

The bouba/kiki effect, first observed by Köhler (1929) and named by Ramachandran and Hubbard (2001), demonstrates systematic cross-modal correspondences between auditory and visual features. When presented with a rounded shape and an angular shape and asked which is “bouba” and which is “kiki,” approximately 90-95% of respondents across studied populations assign “bouba” to the rounded shape and “kiki” to the angular shape.

The effect is not a single curiosity but a structured family of cross-modal correspondences operating along identifiable phonological dimensions:

Vowel roundedness: Back, rounded vowels (/u/, /o/) map to rounded shapes; front, unrounded vowels (/i/, /e/) map to angular shapes. The mapping follows the articulatory gesture: lip rounding during /u/ production mirrors visual roundedness.

Consonant manner: Sonorants (/m/, /n/, /l/) and approximants (/w/) map to rounded shapes; plosives (/k/, /t/, /p/) and fricatives (/s/, /z/) map to angular shapes. The mapping follows acoustic envelope: sonorants have smooth, continuous spectral envelopes; plosives have abrupt spectral transients that mirror visual angularity.

Voicing: Voiced consonants tend toward roundedness associations; voiceless consonants toward angularity, though this dimension is weaker and interacts with manner.

Fundamental frequency: Lower pitch maps to larger and rounder shapes; higher pitch to smaller and more angular shapes – a cross-modal correspondence between auditory frequency and visual spatial extent.
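The first three dimensions above can be turned into a crude scoring rule. The sketch below is a toy, not a validated phonological model: it treats letters as stand-ins for phonemes, and the weights in `ROUND` and `SHARP` (including the half-weight given to voiced stops to reflect the weaker voicing dimension) are assumptions chosen for illustration. Pitch is ignored.

```python
# Letters used as rough phoneme proxies; weights are illustrative assumptions.
ROUND = {
    "u": 1.0, "o": 1.0,                       # back, rounded vowels
    "m": 1.0, "n": 1.0, "l": 1.0, "w": 1.0,   # sonorants and approximants
    "b": 0.5, "d": 0.5, "g": 0.5,             # voiced stops (weaker cue)
}
SHARP = {
    "i": 1.0, "e": 1.0,                       # front, unrounded vowels
    "k": 1.0, "t": 1.0, "p": 1.0,             # voiceless plosives
    "s": 1.0, "z": 1.0,                       # fricatives
}


def roundness(word):
    """Mean per-letter score in [-1, 1]; positive leans round, negative angular."""
    if not word:
        return 0.0
    total = sum(ROUND.get(ch, 0.0) - SHARP.get(ch, 0.0) for ch in word.lower())
    return total / len(word)


for w in ("bouba", "maluma", "buki", "takete", "kiki"):
    print(f"{w:>7}: {roundness(w):+.2f}")
```

Mixed forms such as "buki" land between the two poles, so even this crude rule yields a graded scale rather than a binary split.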

These correspondences are not learned conventions. Six lines of evidence establish their non-arbitrary character:

Cross-cultural robustness: The effect has been documented in English, Tamil, Korean, and Swahili speakers (Ramachandran & Hubbard, 2001; Imai et al., 2008), and in the Himba of northern Namibia – a remote population with minimal exposure to Western media or Latin script (Bremner et al., 2013). The Himba showed the shape-sound correspondence while differing from Westerners on shape-taste correspondences, indicating that the sound-shape mapping reflects a biological constant rather than cultural transmission.

Prelinguistic infants: Ozturk, Krehm, and Vouloumanos (2013) demonstrated sound-shape correspondences in four-month-old infants – before any language has been acquired, ruling out lexical learning as the mechanism. At four months, infants have not begun babbling, have produced no speech sounds, and have no productive or receptive vocabulary. The articulatory account cannot explain this performance because four-month-olds are not producing vowels.

Cross-species replication: Versace et al. (2023), published in Philosophical Transactions of the Royal Society B, demonstrated bouba/kiki-like cross-modal correspondences in domestic chicks (Gallus gallus domesticus) within one to three days of hatching. Chicks hearing rounded sounds preferentially approached rounded visual panels; chicks hearing sharp sounds preferentially approached angular panels. This is the single most important piece of evidence for the non-arbitrary character of the effect, because it rules out not only linguistic convention but postnatal learning, articulatory kinematics, and mammalian-specific mechanisms as sufficient explanations (see Section 2.4.3).

Writing system independence: The effect obtains in speakers of languages with non-Latin scripts (Tamil, Korean, Arabic), confirming that it is not an artifact of letter-shape associations.

Neural substrate: Neuroimaging studies (Peiffer-Smadja & Cohen, 2019) identify activation in the angular gyrus and superior temporal sulcus during cross-modal matching – regions associated with multimodal integration, not arbitrary association. The angular gyrus sits at the junction of auditory, visual, and somatosensory cortex, providing a neuroanatomical basis for cross-modal correspondence.

Gradient structure: The effect is not binary (bouba vs. kiki) but graded: nonsense words with varying proportions of rounded vs. angular phonological features produce corresponding gradations in shape-matching behavior (Nielsen & Rendall, 2011). This gradient structure suggests a continuous iconic mapping rather than a categorical convention.

The chick data from Versace et al. (2023) warrant detailed treatment because their primary value lies in what they eliminate.

Linguistic convention. Chicks have no language. They do not learn words, acquire phonological categories, or participate in communities of speakers who negotiate conventional meanings. The bouba/kiki effect in chicks cannot be a linguistic artifact.

Articulatory kinematics. Ramachandran and Hubbard (2001) proposed that the effect derives from correspondences between mouth shape during speech production and visual form: the lips round for “bouba,” the tongue makes sharp contact with the palate for “kiki.” This articulatory hypothesis is inapplicable to chicks. Chicks do not have lips. They have beaks. They do not round their mouths or tense their tongues to produce speech-like sounds. Yet the mapping appears. The articulatory account may capture a real mechanism contributing to the adult human effect, but it cannot be the foundational explanation. The grounding runs deeper than articulation: it is prenatal, pre-motor, and pre-linguistic.

Postnatal learning. The effect appears in day-old chicks – organisms with at most twenty-four hours of postnatal auditory experience. The mapping is present at the moment the chick encounters the experimental stimuli for the first time. Whatever produced it did so before the chick left the egg, or within the first hours of life, before any plausible learning mechanism could operate at this level of complexity.

Mammalian-specific mechanisms. The last common ancestor of birds and mammals lived approximately 310 million years ago, during the Carboniferous period. Any mechanism shared between chicks and humans is a deeply conserved feature of vertebrate neurobiology, not a recent mammalian innovation. The mapping is either homologous (retained from the common ancestor through 310 million years of independent evolution, perhaps tied to the basic architecture of multisensory integration in the midbrain superior colliculus/optic tectum, which is homologous across all vertebrates) or convergent (independently evolved in response to a shared developmental constraint: enclosed embryonic development in environments that function as low-pass acoustic filters). Either way, it is not a product of human linguistic evolution. Either way, it predates language by hundreds of millions of years.

Lancaster (2026b) develops the mechanism underlying these cross-species correspondences. The argument rests on established facts of developmental biology.

Prenatal auditory sensitivity. Chick embryos develop functional auditory sensitivity by incubation day 12 of a 21-day incubation period (Gottlieb, 1971; Rogers, 1995). Human fetuses develop cochlear function by approximately gestational week 18; by week 25, the auditory system supports learning, as demonstrated by newborn preferences for the mother’s voice (DeCasper & Fifer, 1980) and for stories read aloud during pregnancy (DeCasper & Spence, 1986).

The low-pass filter. Both the eggshell and the uterus function as low-pass acoustic filters. They transmit low-frequency sounds with relatively little attenuation and progressively attenuate higher frequencies. For the human uterus, Abrams, Gerhardt, and Peters (1998) measured attenuation of approximately 20-30 dB at frequencies above 500 Hz, with increasing attenuation at higher frequencies. Low-frequency sounds below 250 Hz pass through with relatively little loss. The eggshell follows the same physics. The consequence is that embryonic auditory environments are dominated by smooth, periodic, low-frequency waveforms – heartbeat, blood flow, respiration – while sharp, high-frequency transients are anomalous departures from this acoustic baseline.
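The qualitative shape of a low-pass filter is easy to tabulate. The sketch below uses an idealized first-order filter with a hypothetical 250 Hz cutoff; it is not fitted to the measurements cited above, and real tissue and shell attenuation is steeper than this simple model.

```python
import math


def attenuation_db(freq_hz, cutoff_hz=250.0):
    """Attenuation of an ideal first-order low-pass filter, in dB.

    The 250 Hz default cutoff is an illustrative assumption.
    """
    return 10.0 * math.log10(1.0 + (freq_hz / cutoff_hz) ** 2)


for f in (100, 250, 500, 2000, 4000):
    print(f"{f:>5} Hz: {attenuation_db(f):5.1f} dB")
```

Even this minimal model shows the asymmetry the argument relies on: sounds in the heartbeat and respiration range pass almost untouched, while attenuation grows steadily with frequency.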

From acoustic asymmetry to cross-modal mapping. The hypothesis is that the embryo’s auditory system is calibrated by this asymmetric environment to treat smooth, continuous, low-frequency waveforms as the default state (normal, safe, approach-worthy) and sharp, abrupt, high-frequency transients as deviations (anomalous, potentially threatening, avoidance-worthy). This calibration requires only that the developing auditory system be shaped by the statistics of its input – a principle operative across all sensory modalities in all species studied (Sanes & Bao, 2009). The cross-modal extension follows from the architecture of early sensory processing: the superior colliculus (mammals) or optic tectum (birds) integrates input from multiple sensory modalities, including audition and vision (Knudsen, 2002; Stein & Meredith, 1993). Multisensory integration is a basic feature of vertebrate brain organization present in amphibians, fish, and birds as well as mammals. The prenatal acoustic asymmetry becomes a cross-modal template: smooth auditory contours activate the same neural populations as smooth visual contours, and sharp auditory contours activate the same populations as angular visual contours. The bouba/kiki effect is the behavioral expression of this shared representation.

Peircean classification. In Peirce’s taxonomy, this mapping constitutes a hypoicon (CP 2.276): a sign that represents its object through shared quality rather than convention or causal connection. The smooth waveform does not arbitrarily stand for safety; it shares the qualitative character of the stable biological processes that produce it – continuity, periodicity, low variance. The resemblance is structural, not metaphorical. This is not yet full semiosis in the Peircean sense of unlimited interpretant chains. It is a pre-semiotic foundation: a layer of non-arbitrary cross-modal association grounded in shared biology that precedes and enables the construction of conventional sign systems. The symbolic capacity – the ability to establish and maintain arbitrary associations through community convention – is built on top of this iconic substrate, not instead of it. The bouba/kiki effect reveals the stratum underneath: the ground floor that was already in place when the building of language began.

The bouba/kiki effect is the most studied instance of a broader phenomenon: phonosemantics or sound symbolism – systematic correspondences between phonological form and meaning that exist alongside (and in tension with) the Saussurean arbitrary sign. Research has documented:

Size symbolism: Front high vowels (/i/) tend to be associated with smallness across languages; back low vowels (/a/) with largeness (Sapir, 1929; Ohala, 1994). This is reflected in cross-linguistic patterns: diminutive morphemes disproportionately use high front vowels.

Motion symbolism: Reduplication and vowel alternation patterns correlate with motion types across unrelated languages (Dingemanse, 2012).

Affective symbolism: Phonological features correlate with emotional valence: rounded, sonorant-heavy forms associate with positive affect; harsh, plosive-heavy forms with negative affect (Adelman et al., 2018).

Ideophones: A class of words found in the majority of the world’s languages that use sound-symbolic relationships iconically – onomatopoeia being the familiar subset, but the phenomenon extending to non-auditory sensory domains (Dingemanse, 2012).

These phenomena collectively define a phonosemantic feature space: a low-dimensional manifold in which phonological form and perceptual/affective meaning are non-arbitrarily linked. This space is not a replacement for the arbitrary sign but a substrate beneath it – a ground floor of iconic correspondence on which the upper stories of conventional meaning are built. Crucially, this substrate is shared across human populations because it is grounded in universal features of the sensorimotor system rather than in linguistically specific conventions.

The critical question for computational modeling is whether these cross-modal correspondences are accessible to learning systems or are confined to biological neural architectures. Recent evidence answers decisively: multimodal AI architectures already recover bouba/kiki-like structure from their training distributions.

CLIP (Radford et al., 2021), trained on 400 million image-text pairs via contrastive learning, develops joint visual-linguistic representations. Thompson and Lupyan (2023) demonstrated that CLIP’s embedding space exhibits sound-symbolic structure: nonsense words with bouba-like phonology are embedded closer to rounded visual concepts, and nonsense words with kiki-like phonology closer to angular visual concepts, without any explicit training on sound symbolism. The correspondences emerge from the statistical regularities of naturally paired visual-linguistic data.

This finding has four implications for the SRT architecture:

Learnability: Cross-modal iconic correspondences are not epiphenomenal or confined to online behavioral paradigms; they are structured regularities in multimodal data distributions that sufficiently expressive architectures recover automatically. This validates the proposal to include an iconic grounding subspace in the model’s embedding layer.

Initialization: CLIP-derived embeddings can serve as initialization for the iconic grounding subspace, providing a pre-trained representation of cross-modal correspondence structure. This is more efficient than learning iconic structure from scratch and ensures that the model begins training with access to the ground floor of non-arbitrary semiotic mapping.

Continuous structure: CLIP recovers graded sound-symbolic structure, not just a binary bouba/kiki distinction. This supports the representation of iconic grounding as a continuous feature space (the phonosemantic feature space of Section 2.4.5) rather than a categorical label.

Convergence with biology: The convergence between CLIP’s learned representations and the prenatal biological mechanism (Section 2.4.4) is itself significant. It suggests that the bouba/kiki structure reflects genuine cross-modal regularities in the world that any system – biological or artificial – will discover given sufficient multimodal data. The prenatal environment provides one access route; web-scale image-text corpora provide another.
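One simple way to probe an embedding space for graded sound-symbolic structure is a difference of cosine similarities: how much closer a nonsense word sits to a "round" concept than to an "angular" one. The sketch below is an assumption about how such a probe could be built, not a description of the method used in the cited study. The vectors are invented three-dimensional toys, not CLIP outputs, and `roundness_score` is a hypothetical name.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def roundness_score(word_vec, round_vec, angular_vec):
    """Positive when the word sits closer to the 'round' concept,
    negative when closer to the 'angular' one; the magnitude is graded."""
    return cosine(word_vec, round_vec) - cosine(word_vec, angular_vec)


# Toy 3-d vectors, invented for illustration (NOT real CLIP embeddings).
ROUND = [1.0, 0.1, 0.0]
ANGULAR = [0.1, 1.0, 0.0]
TOY_WORDS = {
    "bouba": [0.9, 0.2, 0.1],
    "kiki": [0.2, 0.9, 0.1],
    "mixed": [0.5, 0.5, 0.1],
}

scores = {word: roundness_score(vec, ROUND, ANGULAR)
          for word, vec in TOY_WORDS.items()}

if __name__ == "__main__":
    for word, score in scores.items():
        print(word, round(score, 3))
```

Because the score is a real number rather than a label, it fits the continuous-feature-space reading: a word can be strongly round, strongly angular, or anywhere in between.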

We now state the central claim of this subsection formally.

Claim: Cross-modal iconic correspondences function as low-dimensional attractors in the semiotic state space. They provide fixed points around which interpretive trajectories can stabilize – anchors resistant to the drift that afflicts purely conventional signs.

The argument proceeds through three steps:

Step 1: Characterize basin depth as a function of grounding type. In the DFT-inspired attractor landscape (Section 2.3.2), the depth of an attractor basin determines its stability – the magnitude of perturbation required to dislodge a trajectory from the basin. We propose that basin depth is a function of grounding type:

Arbitrary (symbolic) signs occupy basins whose depth depends entirely on frequency and consistency of usage within a community. Their stability derives from convention – the accumulated weight of habitual association. Under bifurcation, when the community fractures, convention fractures with it: the same sign occupies different basins in different communities, and neither basin is anchored to anything outside the community’s own interpretive history. Basin depth is community-relative and fragile under bifurcation.

Iconic signs (including cross-modal correspondences) occupy basins whose depth has a component independent of community convention: the depth contributed by sensorimotor invariants shared across vertebrate populations. The bouba/kiki mapping is not deep because a community has agreed it is so; it is deep because the auditory-visual correspondence is calibrated by prenatal acoustic experience (Section 2.4.4) and expressed through the conserved multisensory integration architecture of the vertebrate midbrain. The cross-species evidence (Section 2.4.3) confirms that this depth is not species-specific: chicks and humans share it despite 310 million years of independent evolution. Basin depth has a universal component that persists across communities and across species, and that resists bifurcation.

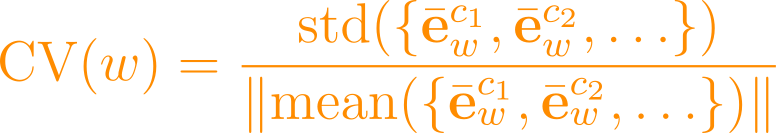

Step 2: Formalize depth decomposition. Let $D_{\text{eff}}(s; c)$ denote the effective basin depth for sign $s$ in community $c$. We decompose:

$$D_{\text{eff}}(s; c) = D_{\text{conv}}(s, c) + D_{\text{icon}}(s)$$

where $D_{\text{conv}}(s, c)$ is the community-dependent conventional depth (a function of usage frequency and consistency within community $c$), and $D_{\text{icon}}(s)$ is the community-independent iconic depth (a function of the sign’s phonosemantic, visual, or cross-modal grounding). For a purely arbitrary sign (e.g., “freedom”), $D_{\text{icon}}(s) \approx 0$ and all stability derives from convention. For a sign with strong iconic grounding (e.g., an ideophone like “splash” or a sign with strong bouba/kiki correspondence), $D_{\text{icon}}(s) > 0$ provides a stability floor.

Step 3: Show that iconic depth resists bifurcation. Under bifurcation (an increase in the bifurcation parameter), the conventional component fractures: it splits into $D_{\text{conv}}(s, c_1)$ and $D_{\text{conv}}(s, c_2)$ for the two community basins, with each potentially drifting away from the other. But the iconic component $D_{\text{icon}}(s)$ is invariant under bifurcation because it does not depend on community membership. The iconic floor provides a lower bound on the gravity that holds interpreters near a shared meaning – a reference point that persists even as conventional interpretations diverge.
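The three steps can be made concrete with a toy calculation. The sketch below assumes the decomposition is additive (effective depth equals conventional depth plus iconic depth) and, purely for illustration, that the conventional support for the shared meaning erodes linearly as the communities diverge. Neither the linear form nor the numbers come from the paper, and `shared_basin_depth` is a hypothetical name.

```python
def shared_basin_depth(conventional_depth, iconic_depth, divergence):
    """Toy depth of the shared-meaning basin.

    divergence lies in [0, 1]: 0 is one unified community, 1 is fully split.
    Assumption: convention erodes linearly with divergence, while the
    iconic component is untouched because it ignores community membership.
    """
    if not 0.0 <= divergence <= 1.0:
        raise ValueError("divergence must lie in [0, 1]")
    return conventional_depth * (1.0 - divergence) + iconic_depth


# Illustrative numbers only; neither sign has a measured depth.
SIGNS = {
    "freedom": {"conventional_depth": 1.0, "iconic_depth": 0.0},
    "splash": {"conventional_depth": 0.6, "iconic_depth": 0.4},
}

if __name__ == "__main__":
    for divergence in (0.0, 0.5, 1.0):
        row = {name: shared_basin_depth(divergence=divergence, **params)
               for name, params in SIGNS.items()}
        print("divergence", divergence, row)
```

Both toy signs start with the same total depth. At full divergence the purely conventional sign has no shared basin left, while the iconically grounded sign retains exactly its iconic component, which is the floor described in Step 3.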

In practice, most politically contested signs are purely or nearly purely arbitrary (their iconic depth is at or near zero), which is why they are susceptible to bifurcation. The SRT architecture’s strategy is not to make all signs iconic (which would contradict basic linguistics) but to leverage the iconic grounding subspace as a regularization mechanism: interpretant embeddings are encouraged by the training loss to maintain proximity to the iconic subspace where possible, providing a stabilizing force that partially counteracts semiotic drift. The strength of this regularization is modulated by a weighting hyperparameter in the training loss (Section 5.2.3).
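A regularizer of this kind can be sketched as a penalty on the squared distance between an interpretant embedding and its projection onto the iconic subspace, added to the task loss with a scalar weight. This is a minimal illustration under assumptions: the actual loss is defined in Section 5.2.3 and may differ, the basis is assumed orthonormal, and the names `distance_sq_to_subspace`, `regularized_loss`, and `iconic_weight` are hypothetical.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def distance_sq_to_subspace(embedding, orthonormal_basis):
    """Squared distance from embedding to the span of orthonormal_basis."""
    projection = [0.0] * len(embedding)
    for basis_vec in orthonormal_basis:
        coeff = dot(embedding, basis_vec)
        projection = [p + coeff * b for p, b in zip(projection, basis_vec)]
    residual = [e - p for e, p in zip(embedding, projection)]
    return dot(residual, residual)


def regularized_loss(task_loss, embedding, orthonormal_basis, iconic_weight):
    """Task loss plus a weighted penalty for leaving the iconic subspace."""
    penalty = distance_sq_to_subspace(embedding, orthonormal_basis)
    return task_loss + iconic_weight * penalty


# Toy setup: a 2-d iconic subspace inside a 3-d embedding space.
ICONIC_BASIS = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
inside = [0.5, 0.2, 0.0]   # lies in the subspace, so no penalty
outside = [0.5, 0.2, 0.3]  # has a component outside the subspace

if __name__ == "__main__":
    for name, emb in (("inside", inside), ("outside", outside)):
        print(name, regularized_loss(1.0, emb, ICONIC_BASIS, iconic_weight=0.5))
```

Setting `iconic_weight` to zero recovers the unregularized task loss, and raising it pulls embeddings harder toward the subspace, which is the sense in which a single hyperparameter modulates the strength of the stabilizing force.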

The bouba/kiki effect is our primary case study, but the architectural role of iconic grounding extends beyond sound-shape correspondence. The generalized icon hypothesis holds that any non-arbitrary sign-object relation – any correspondence grounded in resemblance, embodiment, or shared perceptual structure rather than pure convention – can function as an attractor anchor in the semiotic landscape. Candidate iconic anchors include:

Spatial metaphors for abstract concepts: “Prices are up,” “morale is down,” “the argument fell apart.” These metaphors are grounded in embodied spatial experience (Lakoff & Johnson, 1980) and show cross-linguistic regularities suggesting non-arbitrary structure.

Affective prosody: The mapping between vocal pitch/tempo patterns and emotional states is cross-culturally robust and prelinguistically accessible (Fernald, 1989).

Gestural iconicity: Sign languages exhibit systematic iconicity in which the form of signs resembles their referents (Taub, 2001), providing a rich source of iconic grounding data for multimodal models.

Diagrammatic iconicity: The structural correspondence between relational form and relational meaning (Peirce’s “diagrams”) – e.g., the iconic relationship between word order and temporal order of events in many languages.

The SRT’s iconic grounding subspace is designed to be extensible: initialized with bouba/kiki-derived phonosemantic features, it can incorporate additional iconic dimensions as grounding data becomes available. Each additional dimension adds a low-dimensional attractor that further stabilizes the semiotic landscape against unconstrained drift.

This section positions the SRT framework relative to five bodies of existing work. For each, we identify the specific contribution it makes toward the problem of semiotic divergence in AI systems, specify the precise gap it leaves open, and state how the SRT addresses that gap. Table 1 provides a summary comparison; the subsections that follow develop the analysis.

Table 1: Positioning the SRT Relative to Existing Approaches

The alignment literature addresses the problem of making LLM outputs conform to human values and intentions. We examine the three dominant paradigms and identify a structural limitation shared by all.

RLHF. Reinforcement Learning from Human Feedback (Christiano et al., 2017; Ouyang et al., 2022) trains a reward model on pairwise human preference judgments over model outputs, then fine-tunes the LLM to maximize predicted reward via proximal policy optimization (PPO; Schulman et al., 2017). InstructGPT (Ouyang et al., 2022) demonstrated that RLHF could align a 1.3B-parameter model to outperform a 175B-parameter unaligned model on human evaluations. The approach is now standard practice at OpenAI, Anthropic, Google DeepMind, and Meta.

However, RLHF’s preference signal is aggregated and implicit. Human annotators express preferences between outputs without articulating the interpretive frameworks – the community-specific indexical associations, enregisterment patterns, and semiotic ideologies – that generate those preferences. The reward model learns a function from output features to scalar reward without representing the structure of preference disagreement. When annotators from different interpretive communities disagree (as they systematically do on politically charged content), the reward model averages over the disagreement or reflects the majority annotator demographic, producing what Casper et al. (2023) characterize as a “least objectionable” policy that satisfies no community’s actual interpretive norms.
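The averaging effect can be seen in a small worked example. The sketch assumes a Bradley-Terry preference model, in which the probability that output A is preferred over output B is the logistic function of the reward difference. For a single pair, the maximum-likelihood reward gap is then the log-odds of the observed preference rate. The community shares and preference rates below are invented for illustration, and the function names are hypothetical.

```python
import math


def bradley_terry_gap(fraction_preferring_a):
    """Maximum-likelihood estimate of r(A) - r(B) for a single pair under
    Bradley-Terry, where P(A preferred) = sigmoid(r(A) - r(B))."""
    p = fraction_preferring_a
    return math.log(p / (1.0 - p))


def pooled_fraction(community_shares, community_prefs):
    """Fraction of all annotators preferring A once communities are pooled."""
    return sum(community_shares[c] * community_prefs[c]
               for c in community_shares)


# Illustrative numbers, not data from any study.
shares = {"community_1": 0.6, "community_2": 0.4}     # annotator mix
prefers_a = {"community_1": 0.9, "community_2": 0.1}  # P(prefers output A)

per_community_gap = {c: bradley_terry_gap(p) for c, p in prefers_a.items()}
pooled_gap = bradley_terry_gap(pooled_fraction(shares, prefers_a))

if __name__ == "__main__":
    print("per-community reward gaps:", per_community_gap)
    print("pooled reward gap:", pooled_gap)
```

Each community on its own implies a large reward gap, with opposite signs. The pooled fit yields one small positive gap that leans toward the majority community and carries no trace of the fact that two groups disagree strongly, which is the structure the scalar reward cannot represent.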

From the SRT perspective, RLHF performs trajectory adjustment within the learned attractor landscape without modifying the landscape itself. The model’s representational space – the embedding geometry that determines what distinctions the model can draw – remains unchanged. RLHF adjusts which regions of that space the model visits during generation, but it cannot create representational distinctions that the pre-trained landscape does not contain. If the pre-trained model conflates two interpretive communities’ uses of “justice” into a single embedding cluster, no amount of preference fine-tuning will enable the model to distinguish them; the representational substrate lacks the degrees of freedom.

Constitutional AI. Constitutional AI (Bai et al., 2022) addresses the scalability limitation of human annotation by having the model self-critique its outputs against a set of explicit principles (the “constitution”). The model generates, critiques, revises, and then trains on the revised outputs. This introduces a form of reflexivity – the model evaluates its own outputs – but the reflexivity operates at the content level (“does this output violate principle X?”) rather than at the semiotic level (“what interpretant chain does this output activate, and how does that chain differ across communities?”). The constitution provides fixed rules for evaluating surface properties of text; it does not provide machinery for modeling the dynamic, community-dependent interpretive processes that determine whether a text is beneficial or harmful.