For some spicier takes, see Anthony Moser’s “I Am An AI Hater”, Jenny Zhang’s “choosing friction”, Rusty Foster’s “A.I. Isn’t People”, or (if you have most of an afternoon to read it) Ed Zitron’s “The Case Against Generative AI”. Emily Bender and Alex Hanna’s podcast interview with Paris Marx is also excellent.

Hello, it’s me: I’m a generative AI vegetarian.

The tech industry is convinced this is the future; every app on my phone and most of the apps on my computer want me to use their new AI features.

I don’t want any of them. I want to write my own emails. I want to write my own (mediocre) software code. I want to learn and think and ponder with other humans, not with a text-prediction system built by consuming all the text on the internet.

If you’re stoked about generative AI tools, that’s cool. A friend of mine runs workshops across Canada teaching people how to use AI systems. Friends in the government tech space have built really interesting and thought-provoking things with AI. People find these tools useful and fascinating. In my day job, I’m keen on helping people use AI tools to make government data more accessible. I’m not here to cut you down; I’m not a generative AI vegan, after all. (Sorry, vegans!)

If you’re a government department or public institution looking at adopting AI tools at scale, well, I’ve got some real strong opinions for you. (As it says on the tin: criticize systems, not people.) But I’ll save those for another blog post.

What is generative AI vegetarianism?

When I planned on writing this post (in April 2024!) I figured a background explainer on “what is generative AI” would be a good place to start. It’s 2026, and if you somehow haven’t already collided with your phone’s messenger apps or phone camera or your office suite at work telling you to summarize or generate or edit something with AI, well, I’d love to hear how you did it.

Generative AI tools are software products that produce text, images, or other media by generating it from a user request and a very large quantity of underlying data. LLM-based chatbots like ChatGPT are the best-known.

I often describe software as made up of three things: interfaces, data, and math. In the case of generative AI tools:

- The interface is pretty minimal (a chatbot or similar text interface, or a voice-recognition-based interface like Siri or Alexa).

- The data, for most of these generative AI products, is the astounding part: comprehensively-scraped copies of all of the publicly available text on the internet, millions of scanned books, transcriptions of YouTube movies and other videos, and so on.

- And the math, in most cases, is based on “transformers”, mathematical operations that predict, for example, what words might come next after a series of previous words. It’s just a lot more math and a lot, a lot more data than most software products.

That’s generative AI in a nutshell.
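If it helps to see the "predict what word comes next" idea in (mediocre) code, here is a toy sketch in Python. To be loud about the assumptions: this is not a transformer and not how any real product works. It only counts which word most often follows another in a tiny made-up sentence. The names `train_bigrams` and `predict_next` and the sample text are all invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical "training data": one short sentence instead of the whole internet.
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # "cat" follows "the" more often than "mat" or "sofa"
print(predict_next(model, "zebra"))  # never seen in the corpus, so None
```

Real systems look at far more than the one previous word, and they learn from vastly more text, but the basic move is the same: given what came before, pick a likely next word.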

Generative AI vegetarianism, simply put, is avoiding generative AI tools as much as you can in your day-to-day life. For me, that means:

- Turning off all of the optional AI settings I can find: turning off Microsoft Copilot in my office suite (over and over again). Turning off Google Gemini on my phone. Turning off Apple Intelligence on my laptop.

- Not using any of the built-in AI features that I can’t turn off. (Hello, WhatsApp and Facebook Messenger. Yes, I’m bitter about it.)

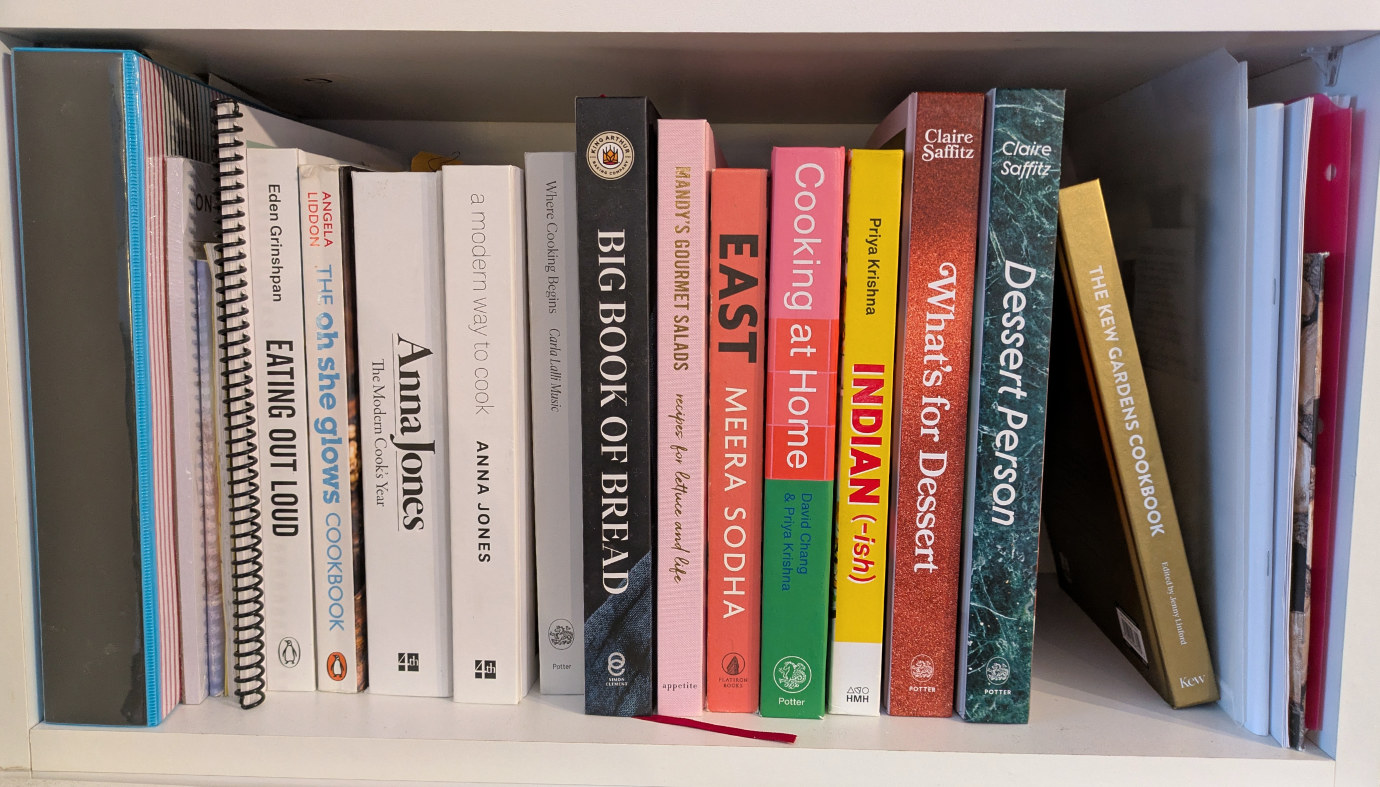

- Not consuming or re-sharing articles, photos, music, or videos that other people have produced with generative AI.

- Choosing software products that don’t have AI features, and supporting companies and organizations and creators that deliberately avoid generative AI tools.

It’s pretty easy! (Despite the tech industry’s best efforts.) And this isn’t even a particularly extreme position (see the spicier takes and vegan jokes above).

“AI” is a nebulous, marketing-driven label. I still listen to algorithmically-recommended music playlists; Google Photos still helpfully/creepily organizes my family photos using facial recognition; I appreciate that spellcheck and spam detection in my email inbox exist. I still think that computer vision systems and OCR and procedurally-generated art that predates the current wave of generative AI tools are all pretty neat. If someone made an AI tool that could correctly parse government ATIP request PDFs into Markdown format reliably, I would use it for sure.

But generative AI tools like ChatGPT or Microsoft Copilot or Claude or Gemini? I don’t want any of them, full stop.

Why generative AI vegetarianism?

Why choose generative AI vegetarianism? Just like real-life vegetarianism, there are a bunch of good reasons. (Full disclosure: I’m not a real-life vegetarian! My wife is.) You can choose the reasons that resonate most with you.

Reasons to adopt generative AI vegetarianism:

Generative AI tools have a dial for bias, discrimination, and racism that you can’t control. From their source training material to the political alignment of major AI companies’ leadership to their adoption by fascist actors to consistently biased output, there are a lot of red flags. Their negative effects fall disproportionately on women and historically marginalized groups, and contribute to a society-wide degradation in being able to understand what’s real.

Generative AI tools lead to a loss of critical thinking, creativity, and skill. Building skills and craft takes time and effort. It takes difficulty; short-circuiting that with generative AI tools makes it harder to become a creative and thoughtful person.

Generative AI tools are optimized to create what’s expected and predictable instead of what’s insightful. They have an “inbuilt tendency toward cliché” given that they’re, in large part, a big text-prediction system. Summaries of content by generative AI tools exclude the most counter-intuitive and unexpected (and therefore useful) parts.

The generative AI industry is destructive to occupations and human vocations that I value: writers, illustrators, musicians, and all kinds of other artists. I want our society to celebrate and support the livelihoods of more creative people; instead these jobs are rapidly disappearing.

Generative AI tools shift more power to the already powerful. More precarious employment as the result of companies adopting AI tools has already led to worse working environments and widespread layoffs (particularly in the technology industry). A constant theme that employees are replaceable or worth less is damaging to everyone across an organization. Meanwhile, many AI tools depend on very low-paid, exploitative manual human labour (often in developing countries) to actually work.

The process for creating and improving generative AI tools (scraping vast quantities of information without compensating the original creators) incentivizes restricting rather than sharing information. It also damages open source movements and collaborative human efforts (like Wikipedia) that society as a whole benefits from.

Frequently using generative AI tools or building them into an organization’s processes or workflows introduces a very real risk of vendor lock-in, where it becomes impossible to switch companies or become less dependent on the tools even if they become more expensive or less useful.

Generative AI tools are easily used as an “accountability sink”: harmful decisions can be made while obscuring the human decision-makers behind them. They can be used as a cover for decisions that cause harm either at an individual or at a societal level, and make it harder for civil society groups, opposition politicians, or the media to figure out who is responsible.

Generative AI tools, and the data centres and equipment that power them, use vast quantities of electricity and other resources. The rapid expansion of new data centres is already disrupting communities with minimal if any local benefits in return.

You don’t have to get behind the entire list here; if one or two of these resonate, that’s enough to become a generative AI vegetarian.

It’s a lifestyle choice

Overall: it feels freeing. I like it. It’s easier to say “no” to generative AI tools as an entire category instead of having to draw a more ambiguous line and use them for some purposes but not others. I just don’t want to use them at all.

And if this sounds too preachy, again, I’m not here to judge you. But if, likewise, you’re not a fan of generative AI tools, I want to make it easier to put that into words. To be able to say, if someone says “check out this new AI thing!”: naw, it’s cool, I’m vegetarian. And to hope that, over time, some subset of companies and organizations will lean into that, and make deliberately AI-free books and music and videos and video games, just like vegetarian restaurant options.

I want to create things that are good. I want to value quality and craft in the things that other people make.

And you could say, “hey, generative AI tools are getting better all the time, look at how high-quality this latest generative AI book or picture or song is.” It’s still just pretending, and meanwhile, it’s taking space away from an actual human creating an actual human creative work that means something.

As Mandy Brown wrote recently about specialization and automated systems:

Impossible not to think here of the rise of labor unions in the tech industry and the subsequent rapid (and surely coincidental) deployment of so-called AI which—unlike nearly every prior technological development in software—arrived with mandates for its use and threats of punishment for the noncompliant. …

But simply watching work happen, without any of the creative, autonomous activity that would occur if they were doing the work themselves, gives rise to a degree of boredom and stupefaction that can be physically painful and spiritually debilitating. Anyone who has experienced the pleasure of creative work is likely to greatly resist that reduction; better to create workers who have never known such things.

There is a whole industry, a lot of money and political power, behind the push to put generative AI tools in front of everyone. It makes our society more fragile and, cumulatively, it makes us worse people.

We can do better. Generative AI vegetarianism isn’t much, but it’s a small and useful step.

Simon Willison (a data scientist and AI researcher who also coined the term “prompt injection”) first described the concept of being “AI vegan” in a 2022 blog post. David Joyner wrote a similarly-themed article on “AI Veganism” in 2025. Many friends on Mastodon posted ideas that helped form this piece. I learned today that Mastodon links from 2024 – when I first started drafting this! – don’t, uh, stick around reliably. Mastodon is still great.