I run engineering as an SVP. I haven’t shipped production code in years. In late February, I decided that was a problem worth fixing — not because I missed coding, but because I was about to make a lot of decisions about AI tooling that I had no business making.

Every CTO and tech executive I know is being asked the same questions right now. Are these tools real? Will they replace engineers? How fast should we go? What do we let them do? The honest answer for most of us is: we’re guessing. We read the same blog posts everyone else reads, watch the same demos, and form opinions from the cheap seats.

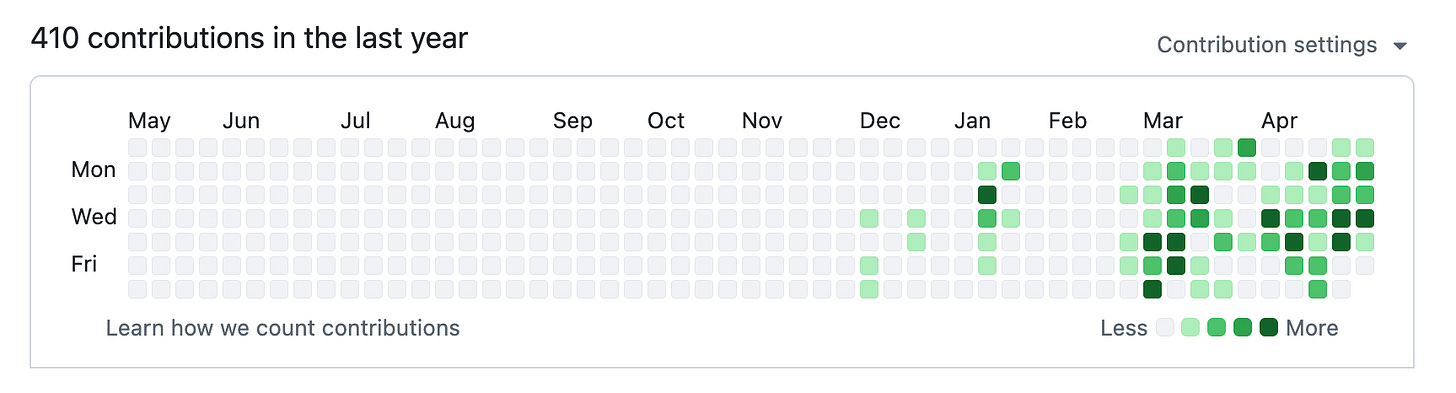

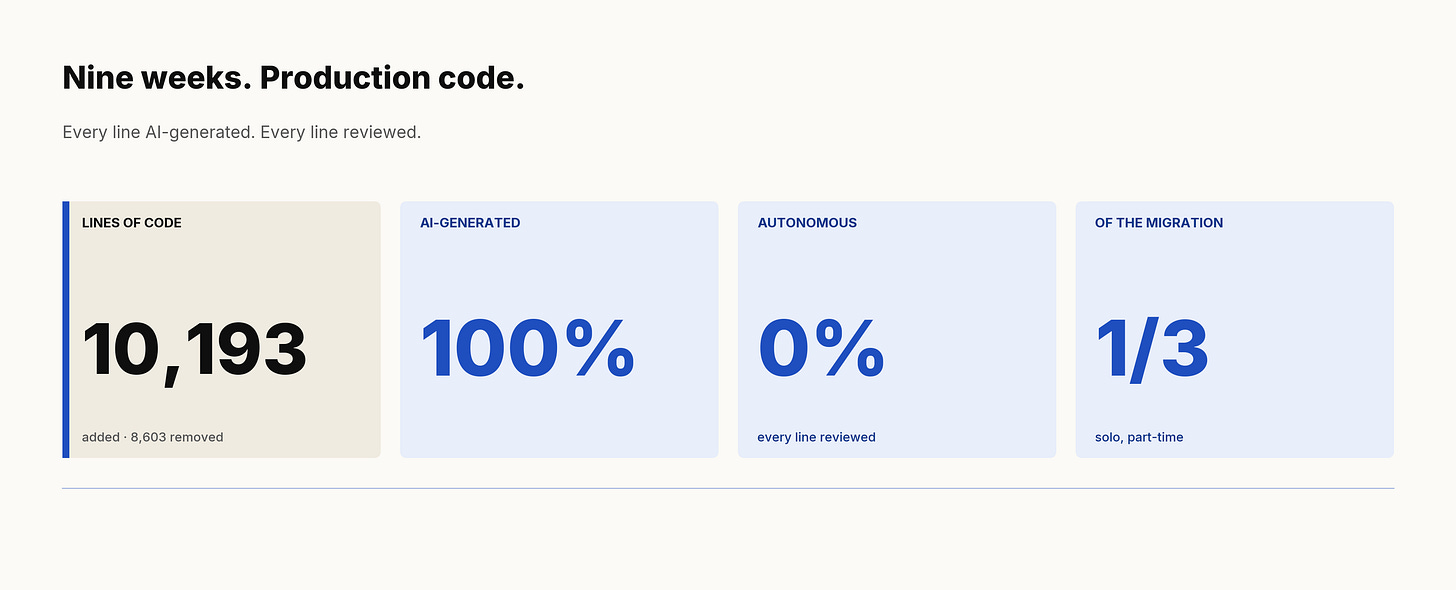

So I went back to the keyboard. For nine weeks I shipped customer-facing production code on a real, gnarly legacy migration: every line generated by AI, every line reviewed by me. Here is what I learned.

Most executive AI experiments are toys. A weekend chatbot. An internal tool nobody depends on. A POC that gets a demo slot and dies.

Toys flatter the tools. Production reveals them.

I wanted to know two things, and a POC could answer neither:

Can current AI dev tools handle real legacy complexity? Greenfield is kind to LLMs. The harder question is whether they hold up against a codebase that has accreted decisions for a decade — patterns from three eras, deprecated dependencies, base components with dozens of downstream consumers, and edge cases that exist for reasons no one alive remembers.

Can I credibly lead this transformation without doing it myself? I was telling teams to adopt these tools. I was approving budgets. I was writing strategy memos. And I had not personally felt what it’s like to ship production code with them. That’s the gap between being a mouthpiece and being a leader. I wanted to close it.

The work I picked was a design system migration — replacing legacy UI components with our internal next-generation library across a customer-facing e-commerce platform. Bounded enough to be measurable. Public enough that mistakes hurt. Legacy enough to be honest.

The single most important sentence in this post: every line of code that shipped was AI-generated, and not one line shipped without me at the keyboard.

And yet the industry conversation has flattened into a false binary: AI is replacing engineers, or AI is hype that can’t ship anything serious. Both camps miss the actual frontier, which lives in the space between them.

The truth: the AI did the typing. I did the directing, reviewing, correcting, and the final yes. There was no spec-and-walk-away. There was no “let it run overnight.” Every component took multiple prompts, multiple iterations, multiple course corrections. When the AI generated something subtly wrong — and it generated something subtly wrong on every single component — we caught it because we forced a test-first discipline.

Anyone telling you they shipped serious production code with AI without a senior engineer in the loop is either lying, has a very forgiving definition of “serious,” or hasn’t seen their bug count yet.

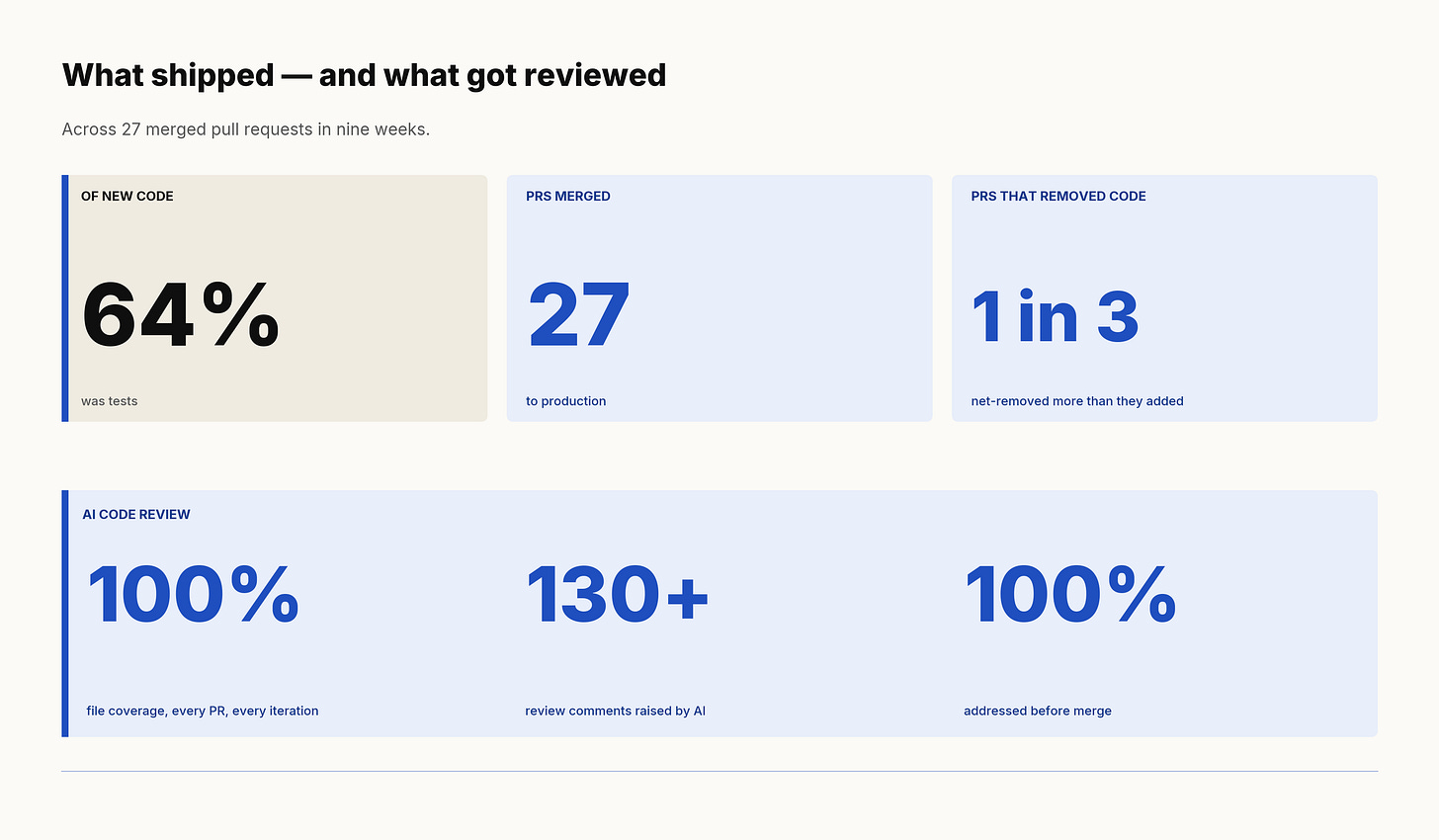

In nine weeks, working part-time around an executive day job, I shipped 27 pull requests to production. The eighteen substantive ones added 10,193 lines of code and removed 8,603, a net codebase growth of under 1,600 lines. Six of those eighteen were net-negative: more code deleted than added. Of the lines I added, 64% were tests.
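A quick check of those totals, assuming the 64% figure applies to the same 10,193 inserted lines (the post does not say whether it covers all 27 PRs or only the eighteen substantive ones):

```python
# Totals reported for the eighteen substantive PRs.
lines_added = 10_193
lines_removed = 8_603
# Assumption: the 64% test share applies to these same inserted lines.
test_share_of_added = 0.64

net_growth = lines_added - lines_removed                      # 1,590 lines
approx_test_lines = round(lines_added * test_share_of_added)  # about 6,500 lines
approx_non_test_lines = lines_added - approx_test_lines

print(f"net growth: {net_growth:,} lines")
print(f"test lines added: ~{approx_test_lines:,}")
print(f"non-test lines added: ~{approx_non_test_lines:,}")
```

Under that assumption, roughly 6,500 of the added lines were tests and about 3,700 were everything else, and the 1,590-line net figure is consistent with "under 1,600."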

I single-handedly migrated a third of an epic projected to take a full team most of a year and fixed eight production bugs that emerged along the way. The codebase came out barely larger and far better-tested than I found it.

In the year before AI tools became part of the workflow, work on the same epic closed roughly six tickets per month and none of the heavy base-component migrations. My throughput alone was nearly three times that rate, on structurally harder work.

These numbers are not the point. They are the floor. They tell you what one part-time executive can do with no team support beyond normal review. The implication for a full-time engineer with these tools is not subtle.

Boilerplate translation. Anywhere the work was “take this pattern and apply it to forty places,” the AI was extraordinary. The first time I ran a migration across forty downstream consumers in an afternoon, I felt the floor of what’s possible move.

Test scaffolding. Coverage that would have been a tax on a human engineer was free. The AI happily wrote tests for paths I would have been tempted to skip. That’s how 64% of insertions ended up being tests — not because I planned it, but because the marginal cost of writing one more test went to nearly zero.

Refactors with clear specs. When I could write a precise before/after spec, the execution was crisp. The skill was no longer typing the refactor; it was writing the spec.
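The "apply this pattern to forty places" work is easiest to check when the before/after spec is explicit data rather than prose. A minimal sketch, assuming the migration is mostly a change of import paths; the package names `@legacy-ui/...` and `@nextgen-ds/...` are hypothetical placeholders, and a production codemod would work on a parsed syntax tree rather than regular expressions:

```python
import re

# Hypothetical before/after spec: legacy module path -> next-generation module path.
IMPORT_SPEC = {
    "@legacy-ui/button": "@nextgen-ds/button",
    "@legacy-ui/modal": "@nextgen-ds/dialog",
}


def apply_spec(source: str, spec: dict[str, str]) -> tuple[str, int]:
    """Rewrite quoted module paths that exactly match a spec key.

    Returns the rewritten source and the number of replacements made, so a
    reviewer can compare the count against the number of known consumers.
    """
    total = 0
    for old, new in spec.items():
        # Require matching quotes on both sides so "@legacy-ui/button-group"
        # is not rewritten by the "@legacy-ui/button" rule.
        pattern = re.compile(r"(['\"])" + re.escape(old) + r"\1")
        source, n = pattern.subn(lambda m: f"{m.group(1)}{new}{m.group(1)}", source)
        total += n
    return source, total


before = (
    'import { Button } from "@legacy-ui/button";\n'
    "import { Modal } from '@legacy-ui/modal';\n"
    'import { ButtonGroup } from "@legacy-ui/button-group";\n'
)
after, count = apply_spec(before, IMPORT_SPEC)
print(count)  # 2
print(after)
```

The returned count is the cheap check: if the spec should reach forty consumers and the count says thirty-eight, two places need a human look. Only paths are rewritten here; renaming `Modal` to a new component name would be a separate rule.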

Code review. This one surprised me the most.

I started using a setup where one AI authored the code and a different AI reviewed it. Sometimes a third reviewed both the code and the first review. Different models, different vendors, different prompts.

Across the nine weeks, the AI reviewer covered every changed file on every iteration of every PR and raised more than 130 review comments. Every one was addressed before merge. On the most complex migrations, the same PR went through 14–16 successive review passes before it was clean.
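The author/reviewer setup is essentially a loop: every reviewer looks at the whole diff on every pass, all comments go back to the author, and the loop ends only on a clean pass. A minimal sketch, assuming each reviewer and the author can be wrapped as plain functions; in practice each would call a different model or vendor, and the human still directs the author and gives the final yes. The toy reviewers below are stand-ins, not real tools:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Comment:
    reviewer: str
    message: str


# Hypothetical wrappers: a reviewer maps a diff to comments; the author
# (AI plus human direction) maps a diff and its comments to a revised diff.
Reviewer = Callable[[str], list[Comment]]
Author = Callable[[str, list[Comment]], str]


def review_until_clean(
    diff: str, author: Author, reviewers: list[Reviewer], max_passes: int = 20
) -> tuple[str, int, list[Comment]]:
    """Re-run every reviewer on the full diff until one pass raises nothing."""
    history: list[Comment] = []
    for pass_no in range(1, max_passes + 1):
        comments = [c for review in reviewers for c in review(diff)]
        if not comments:
            return diff, pass_no, history
        history.extend(comments)
        diff = author(diff, comments)
    raise RuntimeError(f"not clean after {max_passes} passes; escalate to a human")


# Toy stand-ins so the loop can run end to end.
def reviewer_a(diff: str) -> list[Comment]:
    return [Comment("reviewer-a", "TODO")] if "TODO" in diff else []


def reviewer_b(diff: str) -> list[Comment]:
    return [Comment("reviewer-b", "console.log")] if "console.log" in diff else []


def toy_author(diff: str, comments: list[Comment]) -> str:
    # Fixes one flagged token per pass, to mimic iterative course corrections.
    return diff.replace(comments[0].message, "")


final, passes, history = review_until_clean(
    "TODO console.log render()", toy_author, [reviewer_a, reviewer_b]
)
print(passes, len(history))  # 3 3
```

The design choice that matters is re-running every reviewer on the full diff each pass rather than only on the lines just changed, since fixes can introduce new problems elsewhere.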

This caught things human reviewers miss. Not because the AIs are smarter than humans — they aren’t — but because they’re tireless, they don’t skim, and they don’t politely defer to a senior engineer’s reputation. They flagged my mistakes the way I’d flag a junior’s. The bar for what gets merged went up, not down.

If you take one tactical idea from this post: stop thinking of AI code review as a replacement for human review and start thinking of it as a parallel reviewer with different blind spots. The combination is stronger than either alone. The marginal cost is essentially zero. The downstream effect is real: each of those 130-plus comments fixed before merge is a potential regression, rollback, or customer-facing incident that never reached production.

CSS and visual work. The single biggest failure mode. Pixel alignment, responsive edge cases, anything where the model couldn’t see the rendered output — the AI confidently produced code that looked right and rendered wrong. I shipped regressions in this category that I had to chase down post-deploy. The bug pattern was consistent enough that I could predict it: any PR that changed component styling needed a manual visual pass that no current AI tool could substitute for.
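Because the pattern was predictable, the "needs a manual visual pass" rule can be enforced mechanically even though the visual check itself cannot. A minimal sketch, assuming styling lives in stylesheet files or shows up as recognizable markers in component diffs; the suffixes and markers are illustrative assumptions to adapt to your own stack:

```python
from pathlib import PurePosixPath

# Illustrative assumptions: stylesheet types plus a few CSS-in-JS / style-prop markers.
STYLE_SUFFIXES = {".css", ".scss", ".sass", ".less"}
STYLE_MARKERS = ("className=", "style={{", "styled.", "css`")


def needs_visual_pass(changed: dict[str, str]) -> list[str]:
    """Return changed paths whose diffs touch styling.

    `changed` maps each file path to the added lines of its diff. The rule errs
    toward flagging: a false positive costs a few minutes of looking at a page.
    """
    return [
        path
        for path, added in changed.items()
        if PurePosixPath(path).suffix in STYLE_SUFFIXES
        or any(marker in added for marker in STYLE_MARKERS)
    ]


flagged = needs_visual_pass({
    "src/components/Button.scss": ".btn { padding: 4px; }",
    "src/api/cart.ts": "return fetch(url);",
    "src/components/Card.tsx": '<div className="card">',
})
print(flagged)  # ['src/components/Button.scss', 'src/components/Card.tsx']
```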

Ignoring explicit requirements. This is the most underreported failure mode in industry writing about AI coding, and the one operators most need to know about. I would write a clear spec — “do X, do not do Y” — and the AI would do X, do Y, and confidently describe its work as faithful to the spec. The fix is not better prompts. The fix is to assume the AI did not read the constraints and to verify every constraint manually. This is a tax on every interaction. It is also why the “let it run overnight” model fails.
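Part of that tax can be moved off the human for constraints that are mechanical, such as which paths may change or which patterns may not appear, by checking the diff directly instead of trusting the AI's own summary. A minimal sketch; `forbid_paths` and `forbid_text` are hypothetical helpers, and behavioral constraints still need a person to verify them:

```python
from dataclasses import dataclass
from fnmatch import fnmatch
from typing import Callable

# A diff is modeled as: changed path -> added lines for that path.
Diff = dict[str, str]


@dataclass
class Constraint:
    description: str
    violated: Callable[[Diff], bool]


def forbid_paths(glob: str) -> Constraint:
    """'Do not touch files matching this glob.'"""
    return Constraint(f"do not modify {glob}", lambda d: any(fnmatch(p, glob) for p in d))


def forbid_text(snippet: str) -> Constraint:
    """'Do not introduce this pattern.'"""
    return Constraint(f"do not add {snippet!r}", lambda d: any(snippet in a for a in d.values()))


def violations(diff: Diff, constraints: list[Constraint]) -> list[str]:
    # Checked against the diff itself, never against the AI's description of it.
    return [c.description for c in constraints if c.violated(diff)]


spec = [forbid_paths("src/legacy/*"), forbid_text("as any")]
diff = {
    "src/components/Button.tsx": "const props = raw as any;",
    "src/legacy/Button.tsx": "// small tweak",
}
print(violations(diff, spec))  # ['do not modify src/legacy/*', "do not add 'as any'"]
```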

Legacy contract drift. Replacing one component with another sounds simple until you discover that ten downstream consumers depend on undocumented behavioral quirks of the old component. The AI cannot infer these. It assumes the new contract is the contract. If you don’t catch the drift, real users hit subtly broken behavior. I caught most of it. I missed a few cases, and shipped a fix within forty-eight hours each time.
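One way to surface undocumented quirks before users do is a differential (characterization) check: run the old and new implementations on the same inputs and treat every difference as a question for a human. A minimal sketch; `legacy_badge` and `new_badge` are hypothetical stand-ins for a component's rendering logic, not code from this migration:

```python
from typing import Any, Callable, Iterable


def contract_diff(
    legacy: Callable[..., Any], replacement: Callable[..., Any], cases: Iterable[tuple]
) -> list[tuple]:
    """Run both implementations on each input tuple and collect every mismatch.

    Raising is treated as behavior too: if only one side raises, that is drift.
    """
    mismatches = []
    for args in cases:
        outcomes = []
        for impl in (legacy, replacement):
            try:
                outcomes.append(("returned", impl(*args)))
            except Exception as exc:
                outcomes.append(("raised", type(exc).__name__))
        if outcomes[0] != outcomes[1]:
            mismatches.append((args, outcomes[0], outcomes[1]))
    return mismatches


def legacy_badge(count: int) -> str:
    # Undocumented quirks that downstream consumers may rely on.
    if count <= 0:
        return ""
    return "99+" if count > 99 else str(count)


def new_badge(count: int) -> str:
    return str(count)


for mismatch in contract_diff(legacy_badge, new_badge, [(0,), (5,), (150,)]):
    print(mismatch)
# ((0,), ('returned', ''), ('returned', '0'))
# ((150,), ('returned', '99+'), ('returned', '150'))
```

The hard part is choosing inputs; the cases worth pinning are the ones downstream consumers actually exercise, which is where reading each consumer still pays off.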

Scope creep within a PR. A request to fix one file would, more than once, return as a fix that touched two hundred files. The AI’s ambition exceeded its discretion. Every PR needed an explicit scope check before merge.
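An explicit scope check can be a few lines run before merge: declare which paths the request was about and how many files it should plausibly touch, then list everything outside that. A minimal sketch; the globs and file limit are illustrative assumptions:

```python
from fnmatch import fnmatch


def scope_problems(changed_paths: list[str], allowed_globs: list[str], max_files: int) -> list[str]:
    """List reasons this change is larger than what was asked for."""
    problems = []
    if len(changed_paths) > max_files:
        problems.append(f"{len(changed_paths)} files changed; expected at most {max_files}")
    for path in changed_paths:
        if not any(fnmatch(path, glob) for glob in allowed_globs):
            problems.append(f"out of scope: {path}")
    return problems


changed = [
    "src/components/Button/Button.tsx",
    "src/components/Button/Button.test.tsx",
    "src/checkout/CartSummary.tsx",
]
print(scope_problems(changed, ["src/components/Button/*"], max_files=5))
# ['out of scope: src/checkout/CartSummary.tsx']
```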

Test infrastructure side effects. Because the new components were heavier than the old ones in subtle ways, the test suite started running out of memory in CI. This was not a code bug; it was an emergent property of swapping components at scale. The AI generated more tests; it did not understand that, in this harness, more tests meant CI failure. A junior engineer would have made the same mistake. The senior judgment was knowing where to look.

A pattern worth flagging honestly: the migrations where my test share dipped lowest — into the 25–30% range instead of the 64% average — were also the ones that shipped the most regressions in the days that followed. Coverage and quality moved together. That’s not a coincidence. The data wrote its own lesson.
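If coverage and quality move together, the test share of a PR's added lines is cheap to compute and worth surfacing at review time. A minimal sketch, assuming tests are identifiable by path (`.test.`, `.spec.`, or a `__tests__` directory) and using a 40% floor purely for illustration; the post reports only that PRs in the 25 to 30% range regressed most, not where a floor should sit:

```python
import re

# Assumed test-file naming conventions; adjust to the repository.
TEST_PATH = re.compile(r"\.test\.|\.spec\.|/__tests__/")


def is_test_path(path: str) -> bool:
    return TEST_PATH.search(path) is not None


def share_of_test_lines(added_by_path: dict[str, int]) -> float:
    """Fraction of added lines that landed in test files (0.0 for an empty PR)."""
    total = sum(added_by_path.values())
    if total == 0:
        return 0.0
    tests = sum(n for path, n in added_by_path.items() if is_test_path(path))
    return tests / total


def low_coverage_flag(added_by_path: dict[str, int], floor: float = 0.40) -> tuple[bool, float]:
    """Return (below the floor?, share) so reviewers see the number, not just a verdict."""
    share = share_of_test_lines(added_by_path)
    return share < floor, share


print(low_coverage_flag({"src/Button.tsx": 300, "src/Button.test.tsx": 100}))  # (True, 0.25)
print(low_coverage_flag({"src/Card.tsx": 100, "src/__tests__/Card.tsx": 200}))
```

A share like this measures the signal the post observed; it does not by itself show that the tests are good ones.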

If you only measure your AI rollout by feature throughput, you are measuring the wrong thing.

The deeper finding from these nine weeks isn’t that I shipped faster. It’s what happened to the human capacity that AI freed up. It didn’t sit idle. It went into the work that always gets deprioritized — the work that quietly compounds margin and risk in every engineering org I’ve ever seen.

In the same nine weeks, I also: cleaned up the test harness to fix the OOM issues above, removed unused vulnerable dependencies (one of which had been flagged but not fixed for over a year), rewrote internal documentation on testing standards, authored migration spec documents that the rest of the team now uses as templates, and held a higher code review bar across other people’s work than the team had been holding before.

There was a second-order effect I didn’t expect: I became braver. Large refactors I would have flinched at on my own (the kind that touch dozens of files and a decade of accreted assumptions) felt routine, because I had a tireless, patient, and competent partner at the keyboard with me at all times. The fear tax that senior engineers pay on every risky change quietly evaporated. That’s where a meaningful share of the reallocated capacity actually came from. Not from typing speed. From courage.

The AI didn’t just give me speed; it gave me the cognitive room to invest in the systemic problems that were eating into our engineering capacity in the first place.

This is the answer to the question every executive is implicitly asking: “but what do humans do now?” They do the things that always got deprioritized. They raise standards. They mentor. They invest in test infrastructure. They retire technical debt that has been accruing interest for years. The CFO version of this story isn’t just “we ship features X% faster” — it’s “we redirected senior engineering capacity from a tax line into a margin line, and the codebase got healthier in the process.”

The single most important organizational decision in any AI rollout is whether security is a partner or a gatekeeper. If they’re gatekeepers, your engineers will route around them, and you’ll have a worse security posture than before AI. If they’re partners, you’ll find the line together.

We chose partners. The result, lived rather than theorized:

Allow-lists, not deny-lists. We have an explicit, named list of which integrations the AI is allowed to talk to: our ticketing platform, our internal knowledge platform, our cloud infrastructure tooling, a small handful of others. Database access is currently off-limits. Anything not on the list requires a conversation. The list grows as trust grows.

Read-only first, write later. Every new integration started in read-only mode and graduated to write access after we’d watched it work for a while. The first time I asked our security partner why the AI couldn’t write to our ticketing system, the answer was “because we started read-only on purpose, and we can graduate it now.” That answer is the entire model in one sentence.

Verbal-approve, formalize-after. When the AI tooling needed an integration approved on a tight deadline, the security team developed a pattern of giving verbal approval in a meeting and formalizing in a ticket afterwards. A small organizational hack that matters more than it sounds. It removes the failure mode where security review becomes a bottleneck and engineers route around it. The integrity of the formal record is preserved. The speed of execution is preserved. The partnership is preserved.

The graduation moment. Several weeks into the project, my security partner sent me a message: a particular AI access pattern had moved from “needs my review every time” to “manager-approved by default.” That sentence was the whole methodology working. Trust had been earned, and the system reflected it.
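The allow-list, read-only-first, and graduation ideas fit in one small policy table. A minimal sketch, assuming access can be expressed as three levels per integration; the integration names are placeholders for the categories named above, and promotion is deliberately a separate, explicit step rather than something the system grants itself:

```python
from enum import IntEnum


class Access(IntEnum):
    NONE = 0
    READ = 1
    WRITE = 2


# Placeholder names for the categories in the text. Database access is off-limits.
POLICY: dict[str, Access] = {
    "ticketing": Access.READ,
    "knowledge_base": Access.READ,
    "cloud_tooling": Access.READ,
    "database": Access.NONE,
}


def is_allowed(integration: str, wanted: Access, policy: dict[str, Access] = POLICY) -> bool:
    # Allow-list semantics: anything not named in the policy is treated as NONE.
    return policy.get(integration, Access.NONE) >= wanted


def graduate_to_write(integration: str, policy: dict[str, Access]) -> dict[str, Access]:
    """Return a new policy with one read-only integration promoted to write.

    Returning a copy keeps the change explicit, so it can be reviewed and recorded.
    """
    if policy.get(integration) != Access.READ:
        raise ValueError(f"{integration} must be read-only before it can graduate")
    return {**policy, integration: Access.WRITE}


print(is_allowed("ticketing", Access.WRITE))         # False: started read-only on purpose
later = graduate_to_write("ticketing", POLICY)
print(is_allowed("ticketing", Access.WRITE, later))  # True
print(is_allowed("payments_api", Access.READ))       # False: not on the list
```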

Here is the part most people will get wrong if they only read about AI security in the abstract.

Approvals are a security control. Approvals are also a UX surface. The fiftieth time you click “approve” on a tool prompt, you don’t read it. You click. The control has failed at that exact moment, regardless of what your policy document says.

Approval fatigue is not a UX nit.

It’s a security failure mode. The fix is not “more approvals” — it’s fewer, better-designed approvals. Allow-list the proven-safe patterns. Tier the trust. Batch the routine. Reserve the friction for the things that genuinely matter — the ones humans should be reading carefully because the consequences are real.
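Allow-listing, tiering, and batching can be sketched as a triage step in front of the approval prompt, so that only consequential actions reach a human one at a time. A minimal sketch; the action names and the clean-run threshold are hypothetical, and in keeping with the graduation moment described earlier, the counter only nominates candidates while a person decides whether to promote them:

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    AUTO = "auto"      # proven-safe: runs without a prompt
    BATCH = "batch"    # routine: approved together in one periodic review
    REVIEW = "review"  # consequential: a human reads this one carefully


@dataclass
class ApprovalPolicy:
    auto: set[str] = field(default_factory=set)
    batch: set[str] = field(default_factory=set)
    clean_runs: Counter = field(default_factory=Counter)
    nominate_after: int = 25  # hypothetical threshold

    def tier(self, action: str) -> Tier:
        # Unknown actions default to the highest-friction tier.
        if action in self.auto:
            return Tier.AUTO
        if action in self.batch:
            return Tier.BATCH
        return Tier.REVIEW

    def triage(self, pending: list[str]) -> dict[Tier, list[str]]:
        buckets: dict[Tier, list[str]] = {t: [] for t in Tier}
        for action in pending:
            buckets[self.tier(action)].append(action)
        return buckets

    def record_clean_review(self, action: str) -> None:
        """Count a reviewed run that needed no correction."""
        self.clean_runs[action] += 1

    def candidates(self) -> list[str]:
        """Actions still in REVIEW whose track record suggests discussing a promotion."""
        return sorted(
            a for a, n in self.clean_runs.items()
            if n >= self.nominate_after and self.tier(a) is Tier.REVIEW
        )

    def promote(self, action: str, to: Tier) -> None:
        """A human decision: move an action to a lower-friction tier."""
        self.auto.discard(action)
        self.batch.discard(action)
        if to is Tier.AUTO:
            self.auto.add(action)
        elif to is Tier.BATCH:
            self.batch.add(action)


policy = ApprovalPolicy(auto={"read_ticket"}, batch={"run_unit_tests"}, nominate_after=3)
print(policy.triage(["read_ticket", "run_unit_tests", "update_ticket"])[Tier.REVIEW])  # ['update_ticket']
for _ in range(3):
    policy.record_clean_review("update_ticket")
print(policy.candidates())  # ['update_ticket']
policy.promote("update_ticket", Tier.BATCH)
print(policy.tier("update_ticket"))  # Tier.BATCH
```

Keeping the REVIEW bucket small is the point: the fewer prompts a person sees, the more likely each one is actually read.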

The next year of AI engineering productivity is gated less on model capability than on getting the trust-graduation model right. CTOs and CISOs reading this: this is the conversation. Not “should we use AI.” Not “which model.” It’s “what are we comfortable letting it do without asking, what are we not, and how do we move things from the second category to the first as we learn?”

Do it. Pick a real production project, scope it tightly, and ship code yourself for at least a quarter. Not a POC. Not a side project. A thing customers will see. Leading an AI transformation through PowerPoint and vendor demos is no longer enough. Open a terminal.

Do it with security in the room. From the first day. Not as a checkpoint, as a partner. The friction you encounter early is the friction your engineers will encounter at scale. Solve it together while you’re small.

Stay honest about the failure modes. The strongest version of the AI productivity story has the regressions in it, the requirements ignored, the CSS bugs, the scope creep — all of it. Anyone selling the story without those pieces is selling a story. The real version is more useful and, in the long run, more bullish.

I went into this expecting to come out with a measured executive opinion about AI dev tools. I came out remembering why I got into this in the first place. There was a stretch of about three weeks where I didn’t want to do anything else. The flow state of pairing with a tool that types as fast as you can think is something I’d forgotten was possible.

That part wasn’t in the plan. But it’s the most honest sentence in this post: I went looking for data, and I found my craft again. If you’re an executive who hasn’t shipped code in years, you might find something similar. It’s worth the nine weeks.