Hello, this will be unusually long. I’m doing an informal book club started on Mastodon with Shae Erisson (scannedinavian.com) and Brit Butler (kingcons.io). Here’s the shared space for the book club notes (github.com). I got a lot out of reading their notes after I’d finished mine - very interesting to compare and contrast what we got out of each chapter! I don’t have a GitHub account anymore, which is the only reason my notes don’t also appear there.

For context, because it’s highly relevant to particular chapters, I wrote the bulk of these notes between two essays:

- Early 2025: I’m an American software developer…
- Mid/Late 2025: You are here.
- Early 2026: A programmer’s loss of a social identity

I think it’s important to note that my impression of Hamming improved considerably by the end of the book, to the point where I now think I misunderstood some of the earlier chapters. Maybe that’s partially his fault and partially mine, but I will say that if you’re not feeling it (or even hate it), you might like it better if you stick it out 'til the end or maybe even skip some chapters.

Contents

Note that these chapter titles are mine, not the originals.

- Chapter 1 Orientation
- Chapter 2 The digital revolution
- Chapter 3 History of hardware
- Chapter 4 History of software
- Chapter 5 History of applying computers
- Chapter 6 "AI"
- Chapter 7 More "AI"
- Chapter 8 Think about "AI"
- Chapter 9 A mathematician’s journey to n-Space
- Chapter 10 (En)coding theory 1
- Chapter 11 (En)coding theory 2
- Chapter 12 Error-correcting codes
- Chapter 13 Information theory
- Chapter 14 Digital filters 1
- Chapter 15 Digital filters 2
- Chapter 16 Digital filters 3
- Chapter 17 Digital filters 4
- Chapter 18 Simulation 1
- Chapter 19 Simulation 2
- Chapter 20 Simulation 3
- Chapter 21 Fiber optics
- Chapter 22 Computer-aided instruction
- Chapter 23 Math!!!
- Chapter 24 Quantum mechanics
- Chapter 25 Creativity
- Chapter 26 Experts
- Chapter 27 Unreliable data
- Chapter 28 Systems engineering
- Chapter 29 You get what you measure
- Chapter 30 You and your research

Foreword by Bret Victor

This was excellent! I already knew that Bret (worrydream.com) was good at explaining things. This lucid introduction to "Hamming on Hamming" is no exception.

(Tangential note: when I look for PDF copies of influential computer science papers, I’m amazed at how often my search results in a link to Bret Victor’s website.)

Though I later found that Victor was re-stating much of what Hamming himself says about this book in the Preface, Introduction, and Chapter 1, I think Victor’s introduction is more fun to read - and it also has the benefit of being able to talk about Hamming in a way that Hamming himself cannot because he’s the author in question.

Chapter 1: Orientation

The first chapter is really an introduction to the book as a course of study. And the goal is meta-education (don’t forget, the book’s subtitle is "Learning to Learn").

As warned, there is a little math. It’s an example "back of the envelope" calculation of the explosive growth of new information to be studied in each new generation. This math digression relates to the chapter, but the point is not really to drive home how we arrive at some numbers, but rather to illustrate that this calculation is the sort of thing that great thinkers might do.
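Hamming’s own numbers aren’t reproduced here, but one classic consequence of exponential growth shows why the flood feels so overwhelming. Here’s a minimal Python sketch, assuming information is produced at a rate that grows smoothly and exponentially with a fixed doubling period (the 17-year period in the usage lines is purely an illustrative assumption of mine, not a figure I’m quoting from the book).

```python
import math

def fraction_produced_recently(years: float, doubling_period: float) -> float:
    """Fraction of ALL information ever produced that appeared in the last `years`.

    Assumes the production rate grows as exp(k*t), with k = ln(2) / doubling_period,
    stretching back indefinitely. Integrating from -years to 0 and dividing by the
    integral from -infinity to 0 gives 1 - exp(-k*years) = 1 - 2**(-years / doubling_period).
    """
    k = math.log(2) / doubling_period
    return 1 - math.exp(-k * years)

# Half of everything ever produced arrived within the last doubling period...
print(fraction_produced_recently(17, 17))   # about 0.5
# ...and about 7/8 of it within the last three doubling periods.
print(fraction_produced_recently(51, 17))   # about 0.875
```

The punchline: under steady exponential growth, half of everything ever produced showed up within the most recent doubling period, so each generation faces as much new material as all of prior history combined.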

Given this explosive growth of new information, Hamming instructs us to concentrate on "the fundamentals". And how do we figure out what will be "fundamental" in the future? By trying to predict it!

Of course, no one can predict the future. But by having a personal "vision" of the future, you can steer yourself towards a goal even as new information comes to light. There’s a tension between learning essential new things, but also not chasing every shiny object or fad that crosses your path. (A fact that I think seasoned programmers, in particular, can relate to.)

Hamming also talks a lot about doing "great" work and being "great". I like what Brit wrote about this chapter (github.com) because it echoes a lot of my concerns about what "greatness" sounds like in the year 2025, as opposed to what it sounded like in the era in which Hamming wrote it, not that long ago:

"In short, I don’t know that I really care about greatness any more. At least not individually. But I am very interested in how as a culture, it seems we no longer incentivize doing great work or accomplishing hard things as opposed to becoming wealthy (or wealthier). A poor motivator if the goal is innovation or any sort of enduring advancement."

Well said.

Hello, second pass Dave here. As you’ll see if you make it to the end of my notes, I realized, while reviewing the final chapter, that Hamming does not prescribe a single meaning of "great", but leaves it to the reader to define. I don’t claim to know Hamming’s inner thoughts, but this book absolutely makes a case for the reader to make a personal definition of what it means to be "great" and do "great work".

Lastly, having a vision or goal is a way to steer towards "total happiness rather than the moment-to-moment pleasures." And, "a life without struggle on your part to make yourself excellent is hardly a life worth living."

Applied in pursuit of your (ethically tenable) goals, it’s profound advice.

Chapter 2: The digital revolution

Right in the first sentence, I’m confronted with my ignorance of mathematics and physics. Hamming says,

"We are approaching the end of the revolution of going from signaling with continuous signals to signaling with discrete pulses, and we are now probably moving from using pulses to using solitons as the basis for our discrete signaling."

I had to look up the word soliton.

- https://en.wiktionary.org/wiki/soliton - "A solitary wave which retains its permanent structure after interacting with another soliton." Hmmmm…
- https://en.wikipedia.org/wiki/Soliton - "A single, consensus definition of a soliton is difficult to find." Great!

Ha ha, I’m being unfair to the Wikipedia entry. In the first paragraph, it says essentially the same thing as the Wiktionary definition.

Further, it had this nice quoted passage from John Scott Russell writing about his discovery in 1834:

"I was observing the motion of a boat which was rapidly drawn along a narrow channel by a pair of horses, when the boat suddenly stopped – not so the mass of water in the channel which it had put in motion; it accumulated round the prow of the vessel in a state of violent agitation, then suddenly leaving it behind, rolled forward with great velocity, assuming the form of a large solitary elevation, a rounded, smooth and well-defined heap of water, which continued its course along the channel apparently without change of form or diminution of speed. I followed it on horseback, and overtook it still rolling on at a rate of some eight or nine miles an hour, preserving its original figure some thirty feet long and a foot to a foot and a half in height. Its height gradually diminished, and after a chase of one or two miles I lost it in the windings of the channel. Such, in the month of August 1834, was my first chance interview with that singular and beautiful phenomenon which I have called the Wave of Translation."

Solitons appear to come up in a variety of physics settings (fiber optics, magnetics, nuclear reactions, and even a sort of science fiction spaceship warp propulsion known as the "Alcubierre drive", which is, to some degree, a product of mutual inspiration between Star Trek and physicist Miguel Alcubierre - I’ll have to keep an eye out for that one now that I know about it). But as near as I can tell, we haven’t proved or disproved Hamming’s prediction on this one yet.

Moving on to the second sentence and beyond…

The chapter is about the move from continuous (analog) signaling to discrete (digital) and why this has happened.

The first reason is that analog signals need to be amplified in order to be transmitted over a long distance. I remember reading an anecdote about early attempts to send voice signals across the Atlantic Ocean by cable in which, if I remember correctly, the amplification produced a hopelessly messy blast of sound and also melted the cable!

(For the above, am I thinking of the Neal Stephenson article in Wired called "Mother Earth Mother Board" or the Claude Shannon biography I read?)

By contrast, digital signals are vastly easier to transmit long distances because we can clean them up, and even correct errors in them, as we pass them along, producing an exact copy on the other end.
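To see why regenerating the signal matters so much, here’s a toy Python simulation. It assumes (my simplification, not Hamming’s model) that every leg of the trip adds the same bounded random noise, that an analog repeater simply passes everything along, noise included, and that a digital repeater decides "0 or 1" with a threshold at 0.5 and sends out a fresh pulse.

```python
import random

def send_analog(value: float, hops: int, noise: float, rng: random.Random) -> float:
    """Each analog repeater amplifies whatever it receives, noise included."""
    for _ in range(hops):
        value += rng.uniform(-noise, noise)  # noise picked up on this leg
    return value

def send_digital(bit: int, hops: int, noise: float, rng: random.Random) -> int:
    """Each digital repeater decides 0 or 1, then sends a fresh, clean pulse."""
    level = float(bit)
    for _ in range(hops):
        level += rng.uniform(-noise, noise)   # same kind of noise as the analog link
        level = 1.0 if level >= 0.5 else 0.0  # regenerate: threshold at 0.5
    return int(level)

rng = random.Random(1834)
bits = [1, 0, 1, 1, 0, 0, 1, 0]
analog = [send_analog(float(b), hops=100, noise=0.3, rng=rng) for b in bits]
digital = [send_digital(b, hops=100, noise=0.3, rng=rng) for b in bits]

print(digital == bits)                                # True
print(max(abs(a - b) for a, b in zip(analog, bits)))  # accumulated analog drift
```

As long as the noise on any single leg stays below half the gap between the two levels, the digital copy arrives exact no matter how many repeaters it passes through, while the analog value keeps wandering like a random walk.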

Also, here’s a fascinating way to phrase a fact about digital information:

"We should note here transmission through space (typically signaling) is the same as transmission through time (storage)."

Oh, and this tantalizing bit:

"Analog computers have probably passed their peak of importance, but should not be dismissed lightly. They have some features which, so long as great accuracy or deep computations are not required, make them ideal in some situations."

I only know a little bit about analog computers, which calculate using continuously varying electrical quantities rather than binary/digital logic. And what little I know makes me wonder if they’ll someday make a resurgence in applications where they provide the best low-power solution for a computation. Obviously, we would pair the analog circuits with digital circuits to make a hybrid computer in whichever ways make sense. Also, we can compute with mechanics, fluids, molds, fungus, and folded paper. So who knows what the future will bring?

Second: Digital is cheaper. Especially once we figured out integrated circuits.

TODO: Link to Crystal Fire, once I get that book review up, someday…

Third: Information is increasingly what we work with rather than physical goods. (True and increasingly true!)

Also: robotic manufacturing and engineering (with ever more complex products that require ever more complex maintenance), plus increased data-crunching power for scientific simulations, especially of really costly things like atomic bombs.

Hamming makes a point about over-reliance on simulation in science - he was an early advocate, but he’s well aware that for hundreds of years, "Western" thought centered around too much book learning and not enough direct observation and experimentation in the actual world. You always have to go test things in the real world to see if the simulations are correct.

Societal effects, people management, and central planning:

"The most obvious illustration is computers have given top management the power to micromanage their organization, and top management has shown little or no ability to resist using this power."

Hamming goes on at length about the evils of micromanagement. I remember that word being used a lot in the late 1990s when I was entering the workforce. I don’t think that’s the biggest problem we’re facing with huge corporations anymore?

Edit: I’ve talked with two people about this and…the disappearance of "micromanagement" from the common vernacular is, indeed, just my own perception. It’s still just as prevalent as a term, and problem, as it ever was. It’s clear to me now that I was simply applying the term too narrowly.

Second edit: Once I reached Chapter 29, I finally understood how Hamming was using "micromanagement" here as an umbrella term for the cause and effect of a huge variety of leadership dysfunction.

Another Hamming prediction caught my eye, but Shae expressed it best (github.com) with:

"…he suspects that large companies will lose out to smaller companies. […] because smaller companies have less overhead and their top management is not distant from the “people on the spot” […] If Hamming is right, why are there still big companies at all? Maybe Hamming didn’t consider that large companies could afford to purchase and consume their smaller competitors?"

Entertainment: Well, computers now completely dominate here and Hamming has been proven 100% correct. I’m fascinated by (and, if I’m honest, dreading) the "AI" stuff he hints will be coming in Chapters 6-8. But it will be interesting since he wrote this in 1996!

Warfare: Hamming writes:

"It is up to you to try to foresee the situation in the year 2020 when you are at the peak of your careers. I believe computers will be almost everywhere since I once saw a sign which read, 'The battle field is no place for the human being'."

Though we’ve had remotely-piloted and autonomous military aircraft for quite a while now, I’m writing this in 2025, at which point we are at the beginning of a whole new chapter of warfare: inexpensive quadcopter and watercraft "drones" account for a huge percentage, perhaps even the majority (?), of strikes in the defense against the Russian invasion of Ukraine. The potential is terrifying. But maybe this stuff will save lives in the long run? I have no idea.

Back-of-the-envelope calculation break! Hamming does some calculus here, which I have to assume is really great and totally satisfying if you’re into continuous mathematics. I’ll be perfectly honest. I’ve never found modeling rates of growth to be all that interesting. Especially something as abstract as "innovation". Actually, the more I think about it, I find this particular exercise actively boring, in a way that has nothing to do with not being able to easily follow the mathematics. I’m sure others will enjoy it.
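For anyone who does enjoy this sort of thing: I’m not reproducing Hamming’s calculation here. As a stand-in, here’s the textbook logistic ("S-curve") model of growth that starts out exponential and then saturates. That’s my own substitution, not necessarily the model Hamming uses, and the parameter values are arbitrary.

```python
import math

def logistic(t: float, y0: float, rate: float, limit: float) -> float:
    """Closed-form solution of dy/dt = rate * y * (1 - y / limit), with y(0) = y0."""
    return limit / (1 + ((limit - y0) / y0) * math.exp(-rate * t))

# Early on the growth looks exponential; later it flattens out toward `limit`.
for t in (0, 5, 10, 15, 20, 30):
    print(t, round(logistic(t, y0=1.0, rate=0.5, limit=1000.0), 1))
```

The growth rate is proportional both to how much there already is (y) and to how much room is left (1 - y / limit), which is what bends the exponential into an S.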

Lastly, Hamming makes a recommendation/prediction: General-purpose ICs will tend to be a better choice than specialized custom ICs. This is based on the rate of innovation, the advantages of shared knowledge, and the price advantages of scale. Good job, Hamming! Absolutely correct. In 2025, there are a vast number of different microcontrollers and other ICs available off-the-shelf and it is extremely rare to need something truly custom except at enormous scale.

I’m excited about the next chapter, which will be about the history of computers!

Chapter 3: History of hardware

Here we go!

I know of the abacus (I actually had one as a kid, but at that age, I didn’t have the patience to understand the little instruction book that came with it, so I just clicked the little beads).

But "sand pan" is new to me… I can’t find any reference to that type of device, on Wikipedia, nor even Marginalia Search (marginalia-search.com), which has the rare distinction of performing real "exact match" web searches. (I’ve only turned up a military operation of that name, gold panning equipment, geological surveys, a child’s craft activity for Biblical studies, and barbecue techniques.)

Hamming says the next big leap in calculation came from John Napier’s invention/discovery of logarithms (in the form of tables), and from there, the slide rule.
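The trick that made logarithm tables (and later the slide rule) so valuable is that log(a × b) = log(a) + log(b), so a hard multiplication becomes an easy addition plus a couple of table lookups. A minimal Python sketch, with `math.log10` standing in for the printed table:

```python
import math

def multiply_with_logs(a: float, b: float) -> float:
    """Multiply two positive numbers the table-and-slide-rule way: add their logarithms."""
    log_sum = math.log10(a) + math.log10(b)  # look up both logs, then add them
    return 10 ** log_sum                     # look up the antilog of the sum

print(multiply_with_logs(37.0, 52.0))  # close to 1924, give or take floating-point rounding
```

A slide rule does the same addition physically, by sliding one logarithmically scaled ruler along another.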

I find the subject of logarithms to be quite fascinating and I’ve long felt that I should make a study of them specifically because of how often they come up in computer science and the history of computation (the "big-O" notation, slide rules, etc.). So I’ve made a stub card to expand at some later date: Logarithms.

Next come the mechanical computers - typically very specialized for a particular task. I find these fascinating as well. Mechanical computers always make me think of The Clock of the Long Now (wikipedia.org), which contains a mechanical computer to convert the pendulum movement to the display of astronomical phenomena and the year according to the Gregorian calendar.

One of the interesting things about the Clock of the Long Now is that they tried lots of different designs for the mechanical computer, but ended up with digital mechanics for a lot of the same practical reasons digital electronics and digital information are so much handier than analog equivalents. And this is something that Hamming mentions here - that mechanical computers have been both "analog" and "digital" since the beginning. For example, Napier not only developed a logarithmic method of calculating, but his "Napier’s Bones" (ivory rods) were a digital calculation system.
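Napier’s Bones are a neat example of that digital approach. Each rod lists the multiples of one digit, with the tens and units of each multiple separated by a diagonal line. To multiply by a single digit, you lay the rods for the number side by side, read across the row for that digit, and add along the diagonals from the right, carrying as you go. Here’s a rough Python sketch of that procedure for non-negative integers (the function names are my own):

```python
def times_digit(number: int, digit: int) -> int:
    """Multiply a non-negative integer by a single digit, the way Napier's Bones do it."""
    # One "bone" per digit of the number; row `digit` holds (tens, units) of that multiple.
    cells = [divmod(int(d) * digit, 10) for d in str(number)]
    cells.reverse()  # work from the rightmost bone, as you would by hand
    result, carry = [], 0
    for j in range(len(cells) + 1):
        units = cells[j][1] if j < len(cells) else 0  # lower-right half of this bone's cell
        tens = cells[j - 1][0] if j > 0 else 0        # upper-left half of the bone to its right
        total = units + tens + carry                  # sum one diagonal strip
        result.append(total % 10)
        carry = total // 10
    return int("".join(map(str, reversed(result))))

def napier_multiply(a: int, b: int) -> int:
    """Multi-digit multiplier: one row reading per digit of b, shifted and summed."""
    return sum(times_digit(a, int(d)) * 10 ** i for i, d in enumerate(reversed(str(b))))

print(times_digit(425, 6))       # 2550
print(napier_multiply(425, 36))  # 15300
```

The only "arithmetic" the user does is adding single digits along the diagonals, which is exactly what made the rods useful.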

There’s Babbage in the 1820s, of course.

New to me was the Comptometer (1887) by Dorr Felt. I thought the whole Wikipedia entry for the Comptometer (wikipedia.org) was really interesting:

"A key-driven calculator is extremely fast because each key adds or subtracts its value to the accumulator as soon as it is pressed and a skilled operator can enter all of the digits of a number simultaneously, using as many fingers as required, making them sometimes faster to use than electronic calculators."

Also interesting to me were the details about the Comptometer’s error detection and locking parts of the keyboard until errors were manually corrected.

There was a veritable explosion of these calculating machines in the late 1800s, and the Comptometer Wikipedia entry has a timeline with the dates.

Punch cards come from the mechanical computer/calculator era for storage of data and rudimentary programming. I’m a little surprised Hamming didn’t mention the Jacquard loom, which used punched cards in the early 1800s, building on punched-card looms from the 1700s (but, of course, you can’t mention everything in a short chapter!).

Side note: I once visited a textile museum in Rhode Island, where they had a working punched card-driven loom that could produce woven bookmarks with letters that spelled out the museum name. I bought one and I’ve got that souvenir around here somewhere.

IBM (International Business Machines!) dominated in the early 1900s with punch cards and mechanical calculating machines.

Okay, I’d better shorten up these notes - I find all of this stuff super interesting, but if I dive into any of it, I’ll never finish my notes or this chapter: Zuse (electrical relays), ENIAC (vacuum tubes), etc.

Okay, I know what I wrote above, but here’s another quick note because I simply can’t help myself: After reading this book, but before finishing this page, I read a book about Grace Hopper, who got her start programming Howard Aiken’s Mark I mechanical computer (wikipedia.org). The book focused on Hopper, not the Mark I, but it still contained a bunch of fascinating facts about the computer. For one, it was electromechanical and ran off of a drive shaft, which limited the calculating speed of the machine to the RPM of the shaft! The other interesting thing about it is that it was arguably more "programmable" than the ENIAC! This is a contentious statement, but the ENIAC required "programming" by extensive physical re-wiring with a plugboard and switches, whereas the Mark I took its instructions from punched paper tape.

Now Hamming puts up an interesting table with the comparative speed of operations per second. His table ends in the era in which he was writing the book - the early 1990s. He’s using operations per second, but we can probably substitute floating point operations per second (FLOPS) for any of the modern stats. From hand calculators (20 OPS) to 1990s digital supercomputers (10^9 OPS/FLOPS or gigaflops).

For a fun comparison, the old-ish Ryzen 5 CPU in the desktop I’m typing this on benchmarks at about 450 gigaflops and you can buy one right now for about $80.

And he makes a prediction that we can check (now that per-processor speeds have been slowing considerably):

"Even for von Neumann type machines there is probably another factor of speed of around 100 before reaching the saturation speed."

Well, a factor of 100 on top of 10^9 would be 10^11, right?

So how did we do?

The Supercomputer (wikipedia.org) entry has us at 10^18 FLOPS. But it’s really not that simple because at some point we just started making supercomputers as huge clusters of Linux machines running on x86 (or ARM) CPUs, and various GPUs. To some degree, this is pretty much just a ton of consumer-grade processing done in massive parallel.

Anyway, I reckon we got a lot more than a "factor of 100" out of this architecture on the high end of parallel processing supercomputers.
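The back-of-the-envelope arithmetic, as a quick Python sketch. The 10^18 figure is just the order of magnitude from that Wikipedia entry, and the comparison is admittedly apples to oranges: Hamming was talking about a single von Neumann machine, not a warehouse of them working in parallel.

```python
import math

top_of_table = 1e9               # ~10^9 operations per second, end of Hamming's table
predicted = top_of_table * 100   # his "another factor of speed of around 100"
exascale = 1e18                  # order of magnitude for today's biggest machines

print(f"predicted ceiling: {predicted:.0e} ops/s")
print(f"actual gain: ~10^{math.log10(exascale / top_of_table):.0f}")
print(f"beyond the prediction: ~10^{math.log10(exascale / predicted):.0f}")
```

So instead of a factor of 10^2, the high end gained something like 10^9, about seven orders of magnitude past his ceiling, thanks largely to parallelism.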

This stuff is hard to compare, actually, but Hamming puts it well in the next bit, "the human dimension," when he writes:

"…Thus in 3 seconds a machine doing 10^9 floating point operations per second (flops) will do more operations than there are seconds in your whole lifetime, and almost certainly get them all correct!"
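Hamming’s claim checks out with a little arithmetic. A quick Python sketch, assuming a year of 365.25 days:

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60   # about 31.6 million

flops = 1e9                                # the machine in Hamming's example
ops_in_3_seconds = 3 * flops

years_equivalent = ops_in_3_seconds / SECONDS_PER_YEAR
print(round(years_equivalent))             # 95
```

So 3 × 10^9 operations is roughly the number of seconds in 95 years, which covers just about anyone’s lifetime.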

To hammer the point home further:

"The purpose of computing is insight, not numbers."

To ensure everyone is "on the same page," so to speak, he reduces the modern design of computing hardware to: Storage (of instructions and data), Control Unit (gets and executes instructions), a Current Address Register, an ALU (does the actual calculating), and I/O for communicating with the outside world.

That is, the CPU is just a machine that operates on instructions. At this point, he gets slightly philosophical and makes vague threats about those looming "AI" chapters to come.
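That five-part breakdown maps onto a surprisingly small program. Here’s a minimal Python sketch of a made-up, single-accumulator stored-program machine. The instruction names (LOAD, ADD, SUB, STORE, JUMPNZ, OUT, HALT) and the memory layout are my own assumptions, not any real instruction set. Note that instructions and data sit in the same memory, and the "control unit" is just a fetch-decode-execute loop driven by the current address register.

```python
def run(memory: list, max_steps: int = 1000) -> list:
    """Fetch-decode-execute loop for a hypothetical one-accumulator machine."""
    acc = 0       # accumulator: where the ALU leaves its results
    pc = 0        # current address register: which instruction comes next
    output = []   # stand-in for I/O with the outside world
    for _ in range(max_steps):
        op, addr = memory[pc]   # control unit: fetch the instruction...
        pc += 1                 # ...and point at the next one
        if op == "LOAD":
            acc = memory[addr]
        elif op == "ADD":       # ALU operations
            acc += memory[addr]
        elif op == "SUB":
            acc -= memory[addr]
        elif op == "STORE":
            memory[addr] = acc
        elif op == "JUMPNZ":    # branch back if the accumulator is non-zero
            if acc != 0:
                pc = addr
        elif op == "OUT":
            output.append(acc)
        elif op == "HALT":
            return output
        else:
            raise ValueError(f"unknown instruction {op!r} at address {pc - 1}")
    raise RuntimeError("step limit reached")

PROGRAM = [
    ("LOAD", 10), ("ADD", 11), ("STORE", 10),   # 0-2: total = total + n
    ("LOAD", 11), ("SUB", 12), ("STORE", 11),   # 3-5: n = n - 1
    ("JUMPNZ", 0),                              # 6:   loop while n != 0
    ("LOAD", 10), ("OUT", None), ("HALT", None),  # 7-9: print total, stop
    0,   # 10: total
    5,   # 11: n
    1,   # 12: the constant 1
]

print(run(list(PROGRAM)))   # [15]
```

The example program adds 5 + 4 + 3 + 2 + 1 by looping until the counter at address 11 reaches zero. It runs on a copy because the program overwrites its own data cells.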

That’s it for Chapter 3. I’m going to have to be really careful about keeping my notes shorter because the next chapter is the software portion of computer history, one of my passions!

Chapter 4: History of software

Let’s see if I can stay true to my word and keep this chapter’s notes short(-ish).

This is a great summary of the really early history of software development back to the days of programming in binary with absolute machine addresses by someone who was there while it was happening.

By the time I started programming computers, "spaghetti code" was used to mean "too many GOTOs". But Hamming clearly demonstrates how the original pasta was made by patching absolute memory addresses!
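Here’s a rough Python sketch of that kind of patch, using a made-up (operation, address) instruction format where only JUMP and HALT affect control flow. To squeeze in a new instruction without renumbering every absolute address in the program, you overwrite one instruction with a jump to free memory, put the new code plus the displaced instruction there, and jump back. Do that a few dozen times and the execution order stops resembling the listing at all.

```python
def patch(program, addr, new_instrs):
    """Insert new_instrs before program[addr] without moving any existing code."""
    patched = list(program)                 # leave the original listing alone
    patch_start = len(patched)              # first free address after the program
    displaced = patched[addr]               # the instruction we're about to overwrite
    patched[addr] = ("JUMP", patch_start)   # jump out to the patch area...
    patched.extend(new_instrs)              # ...run the new code...
    patched.append(displaced)               # ...then the instruction we overwrote...
    patched.append(("JUMP", addr + 1))      # ...and jump back to where we left off
    return patched

def trace(program, start=0, max_steps=100):
    """Return the sequence of addresses actually executed."""
    pc, visited = start, []
    while len(visited) < max_steps:
        visited.append(pc)
        op, arg = program[pc]
        if op == "HALT":
            break
        pc = arg if op == "JUMP" else pc + 1
    return visited

program = [("LOAD", 10), ("ADD", 11), ("STORE", 12), ("HALT", None)]
patched = patch(program, 2, [("ADD", 13)])   # forgot to add address 13 before storing
print(trace(program))   # [0, 1, 2, 3]
print(trace(patched))   # [0, 1, 2, 4, 5, 6, 3]
```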

The lesson from this chapter is to keep your mind flexible to accepting tooling improvements. Hamming describes programmers who clung to extremely laborious manual machine code programming versus languages like FORTRAN:

"First, it was said it could not be done. Second, if it could be done, it would be too wasteful of machine time and capacity. Third, even if it did work, no respectable programmer would use it—it was only for sissies!"

The thing is, I know I should be laughing at these old dinosaurs: "Ha ha, how silly! The fools couldn’t see how the computer could assist in programming computers!"

But here I am in the accursed year 2025 and it’s being seriously suggested that all programming (and every other creative endeavor, for that matter) should be completely turned over to so-called "AI" cloud services.

And I just can’t laugh at those poor old manual coding dinosaurs anymore. I feel…deeply sympathetic to them.

Moving on, I totally agree with Hamming’s opinion about the proven success of human-oriented programming languages designed to help humans accomplish the task. (As opposed to attempting to force programmers to use a mathematical or logical purity divorced from both how people and computers work.) Mind you, these are still programming languages we’re talking about. I have yet to be convinced that a "natural language" angle has ever been a good way to bridge the gap!

I’ve never heard anyone express this sentiment about Algol before:

Algol, around 1958–1960, was backed by many worldwide computer organizations, including the ACM. It was an attempt by the theoreticians to greatly improve FORTRAN. But being logicians, they produced a logical, not a humane, psychological language and of course, as you know, it failed in the long run. It was, among other things, stated in a Boolean logical form which is not comprehensible to mere mortals (and often not even to the logicians themselves!).

I admit, I’ve never actually learned Algol, but I have long been aware of all the ways in which it casts a long shadow, from its syntax choices to the creation of BNF notation to describe them.
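For anyone else who hasn’t spent time with BNF: it describes a language’s syntax as a set of rewrite rules, and those rules map almost one-to-one onto a hand-written recursive-descent parser. Here’s a minimal Python sketch using a tiny arithmetic grammar of my own (not Algol’s), with one function per rule. Left-recursive rules like `<expr> ::= <expr> "+" <term>` become loops, since a naive recursive version would never terminate.

```python
import re

# A tiny grammar in BNF (my own example):
#   <expr>   ::= <term> | <expr> "+" <term> | <expr> "-" <term>
#   <term>   ::= <factor> | <term> "*" <factor>
#   <factor> ::= <number> | "(" <expr> ")"
#   <number> ::= <digit> | <number> <digit>

def evaluate(text: str) -> int:
    # Numbers, operators, parens; any other non-space character becomes a token
    # so that the parser can reject it instead of silently skipping it.
    tokens = re.findall(r"\d+|[-+*()]|\S", text)
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else None

    def take(expected=None):
        nonlocal pos
        tok = peek()
        if tok is None or (expected is not None and tok != expected):
            raise SyntaxError(f"expected {expected or 'a token'}, got {tok!r}")
        pos += 1
        return tok

    def expr():                        # <expr>
        value = term()
        while peek() in ("+", "-"):    # left recursion in BNF becomes a loop here
            op = take()
            rhs = term()
            value = value + rhs if op == "+" else value - rhs
        return value

    def term():                        # <term>
        value = factor()
        while peek() == "*":
            take()
            value *= factor()
        return value

    def factor():                      # <factor>
        if peek() == "(":
            take("(")
            value = expr()
            take(")")
            return value
        tok = take()
        if not tok.isdigit():
            raise SyntaxError(f"expected a number, got {tok!r}")
        return int(tok)

    result = expr()
    if peek() is not None:
        raise SyntaxError(f"unexpected {peek()!r}")
    return result

print(evaluate("2*(3+4)"))   # 14
print(evaluate("10-3-2"))    # 5
```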

Edit (with rant): After discussing this chapter on Mastodon, I found myself getting increasingly hot and bothered by the implications underlying Hamming’s opinions about computer programming. In short: A programming language should be designed for humans.

Oh, I agree completely.

But if you read the surrounding context, he goes further than that. What I think he’s really asking for is a programming language (or interface) for laypeople, not professional programmers.

And yet. And yet, Hamming also uses mathematical notation throughout this book and clearly exults in the "deep insights" of a mathematical understanding of a problem.

I feel like this comes from a very old-fashioned view that was predominant in the earliest days of computers when Hamming was in his prime. A specialized notation is appropriate for the "real thinkers". You know, the mathematicians and scientists. Whereas "coding" a computer program was seen as a mere secretarial task.

But wait, please don’t get me wrong here: I love the idea of making programming accessible to non-professionals. I truly believe programming of some sort (whether it be graphical or textual) is the only way to make full use of a computer. Otherwise, you’re at the mercy of the user interface you’ve been given.

But I also think professionals and power users should have access to "sharp tools" that require and reward skill. I don’t see any indication that Hamming understood this distinction.

Moving along, I thought this was really interesting and insightful:

The human animal is not reliable, as I keep insisting, so low redundancy means lots of undetected errors, while high redundancy tends to catch the errors.

That is, programming languages with high information density (low redundancy) are hard for humans to get correct: our natural language is very squishy, but highly redundant, so the signal gets through.
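Here’s a small Python sketch of that idea, borrowing the error-detecting flavor of Hamming’s later chapters (the two code schemes are my own toy examples). With dense two-digit codes, every single-digit typo is still a valid code, so none are caught. Spend one extra digit on redundancy, a check digit equal to the sum of the other two mod 10, and every single-digit typo is detected.

```python
def single_digit_typos(code: str):
    """Every string that differs from `code` in exactly one digit."""
    for i, c in enumerate(code):
        for d in "0123456789":
            if d != c:
                yield code[:i] + d + code[i + 1:]

def with_check_digit(code: str) -> str:
    return code + str(sum(map(int, code)) % 10)

def is_valid_dense(code: str) -> bool:
    return len(code) == 2 and code.isdigit()   # every 2-digit string is "meaningful"

def is_valid_checked(code: str) -> bool:
    return (len(code) == 3 and code.isdigit()
            and int(code[2]) == sum(map(int, code[:2])) % 10)

def detection_rate(codes, is_valid) -> float:
    """Fraction of single-digit typos that produce an invalid (i.e. noticed) code."""
    total = detected = 0
    for code in codes:
        for typo in single_digit_typos(code):
            total += 1
            if not is_valid(typo):
                detected += 1
    return detected / total

dense = [f"{n:02d}" for n in range(100)]
checked = [with_check_digit(c) for c in dense]

print(detection_rate(dense, is_valid_dense))      # 0.0
print(detection_rate(checked, is_valid_checked))  # 1.0
```

Same 100 meanings either way; the redundant version just spends 50% more digits to buy back reliability.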

An interesting question that arises here is: What’s an example of a programming language that uses some redundancy to make the programmer’s intent clear? (I’m thinking of strong typing and explicit type conversions here, but that’s more like busywork than true expressivity). He mentions an anecdote where he approaches a language expert about this:

…he could not hear the question concerning the engineering efficiency of languages, and I have not noticed many studies on it since. But until we genuinely understand such things—assuming, as seems reasonable, the current natural languages through long evolution are reasonably suited to the job they do for humans—we will not know how to design artificial languages for human-machine communication.

Yeah, absolutely.

And these two conflicting ideas are spot-on:

- "Getting it right the first time is much better than fixing it up later!"
- "The desire that you be given a well defined problem before you start programming often does not match reality…"

I do think writing software is pretty comparable to writing novels, though maybe not for the exact reasons he had in mind. (Source: I write software almost daily and have written two really terrible unpublished novels.)

Hamming concludes this chapter on a sour note:

Does experience help? Do bureaucrats after years of writing reports and instructions get better? I have no real data but I suspect with time they get worse! The habitual use of “governmentese” over the years probably seeps into their writing style and makes them worse. I suspect the same for programmers! Neither years of experience nor the number of languages used is any reason for thinking the programmer is getting better from these experiences.

Ouch!

Hamming’s actual experience with the field of programming languages seems to be rooted in the FORTRAN era (1950s), with exposure to the Ada era (1980s). So I do take his opinions about the field with a grain of salt.

Chapter 5: History of applying computers

Hamming describes how learning to give good presentations led to insights into the future of computing:

The talk also kept me up to date, made me keep an eye out for trends in computing, and generally paid off to me in intellectual ways as well as getting me to be a more polished speaker. It was not all just luck—I made a lot of it by trying to understand, below the surface level, what was going on.

Then he describes having to do extremely difficult mathematical work on barely capable early hardware to prove that computers could do work that wouldn’t otherwise be feasible at all:

…Then, and only then, could we turn to the economical solutions of problems which could be done only laboriously by hand!

I think this is an aspect of computers that is easy to forget or gloss over: That the true value of computers is to make things practical which would otherwise not be practical…or even possible (in a human lifetime).

A human could probably render a single frame of Donkey Kong by hand on paper. But a human could never render a single frame of, say, Doom 3.

Doom 3 (and tons of science simulations, for that matter) are simply not possible without computers.

Here’s a heck of a quote:

In such a rapidly changing field as computer software if the payoff is not in the near future then it is doubtful it will ever pay off.

Now I find myself thinking about Tim Berners-Lee being able to demonstrate his hypertext concept, the World Wide Web, within about a year or two and it taking off like a rocket immediately afterward. And comparing that to Ted Nelson’s similar Project Xanadu, which has been under more-or-less continuous development for 60 years and most people have never heard of it and probably never will. (Note: I’ve long been fascinated by Xanadu’s ideas, especially its advanced linking and the transclusion of virtual documents.) This particular cautionary tale has all sorts of lessons that would be totally ripe for a whole article of their own…the value of ideas without an implementation, dreams versus pragmatism, etc.

Aside: And I really can’t help thinking about hype-driven "payoff" versus natural grass-roots "payoff". For example, Java had massive hype and was everywhere at one time and it did find a niche eventually (especially the JVM). But it did not take over the way it’d been hyped to do. In fact, I would argue that JavaScript is now the language of the universal machine that did take over, the Web browser. (Which is a bizarre and ironic artifact of history, since JavaScript acquired that name for marketing reasons because Java was so popular at the time.)

Ah jeez, now I’m really off on a tangent. Keep it short, keep it short.

Hamming, by his own admission, revisits Chapter 2 in recommending the use of general-purpose computers and software. But I take his point: this was once a much more controversial suggestion and one can extrapolate a lot of good ideas about what the future may hold if you trace a line from special-purpose calculating machines through the world of virtual machines and software of today. Where does that line lead in the future?

Chapter 6: "AI"

I’m so raw from the "AI" talk these days that I see the upcoming three "AI" chapters and I groan. But, of course, Hamming’s perspective on this from the 1990s should be fascinating.

What sort of things can computers do? Newell and Simon’s puzzle solving at RAND is something to look into. "Expert systems" is a term I remember from the 1990s, even though I wasn’t in the field.

The idea is you talk with some experts in a field, extract their rules, put these rules into a program, and then you have an expert!
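Many expert systems worked by chaining rules like these together. Here’s a minimal forward-chaining sketch in Python: keep applying any rule whose conditions are all known facts until nothing new turns up. The rules are made-up examples, not from the book or any real system.

```python
def forward_chain(facts: set, rules: list) -> set:
    """Keep firing rules whose conditions are all known until nothing new is learned."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conclusion not in known and conditions <= known:
                known.add(conclusion)
                changed = True
    return known

# Hypothetical rules "extracted from an expert" (a car mechanic, say).
RULES = [
    ({"engine won't crank", "lights are dim"}, "battery is weak"),
    ({"battery is weak", "battery is old"}, "replace battery"),
    ({"engine cranks", "no fuel smell"}, "check fuel pump"),
]

observed = {"engine won't crank", "lights are dim", "battery is old"}
print(sorted(forward_chain(observed, RULES)))
```

Note that "replace battery" only appears because an earlier rule first concluded "battery is weak"; the chaining is where the program looks cleverer than any single rule.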

Followed by this shot across the bow:

…in many fields, especially in medicine, the world famous experts are in fact not much better than the beginners! It has been measured in many different studies!

Ouch!

The impression I’m getting here is that Hamming doesn’t have much respect for fields and abilities outside of his own. I’m sorry to say that I would have fallen prey to this problematic line of thinking when I was younger.

Hello, second pass Dave here. My criticisms of Hamming softened greatly by the end of the book. I can think of a charitable reading of the above quote. Just compare it to the argument that hospitals and doctors would be safer and more effective if they used things like checklists (or "expert systems") to help avoid human error, the same way airlines and pilots do. In no way should these systems replace human expertise.

He then claims that some rules-based systems have "shown great success". I’d be curious to hear an example. I’m not saying it doesn’t exist, I’d just like an example. Maybe that’s coming up?

But I think he partially redeems himself with:

In Chapter 1, I already brought up the topic that perhaps everything we “know” cannot be put into words (instructions)— cannot in the sense of impossible and not in the sense we are stupid or ignorant. Some of the features of Expert Systems we have found certainly strengthen this opinion.

Another bang-on quote:

While the problem of AI can be viewed as, “Which of all the things humans do can machines also do?” I would prefer to ask the question in another form, “Of all of life’s burdens, which are those machines can relieve, or significantly ease, for us?”

Yessss!!!!

I would go post this quote on Mastodon right now, but half of us are sick out of our minds from being bombarded by this subject non-stop from all sides and I try to limit how often I’m part of the problem.

Next is some fairly standard philosophy about what "thinking" really means and if it would ever be possible for a machine to "think". I think it’s a good summary.

I did give a dark chuckle at this:

I can get a machine to print out, “I have a soul”, or “I am self-aware.”, or “I have self-consciousness.”, and you would not be impressed with such statements from a machine.

Oh Hamming, if I could only transport you to the year 2025, when people are very impressed indeed by such statements. I mean, that is literally what is happening as I write this.

The rest of the chapter is a brief "AI" history. Hamming asks good questions, none of which are really answerable then or now.

I’m really curious where the next two chapters will take us…

Chapter 7: More "AI"

Some people conclude from this if we build a big enough machine then automatically it will be able to think! Remember, it seems to be more the problem of writing the program than it is building a machine, unless you believe, as with friction, enough small parts— will produce a new effect—thinking from non-thinking parts. Perhaps that is all thinking really is! Perhaps it is not a separate thing, it is just an artifact of largeness.

Which brings us to today.

I’ve been emailing someone smarter than myself who perfectly summed up the whole idea above as a question of "scale invariance". That is, if scaling a system up doesn’t change its properties, it’s scale invariant (fractals are a fun example). If LLMs are scale invariant, you’re not going to have intelligence pop into existence just by making them bigger.

(Although, we obviously can get systems that are capable of printing out statements that say, "I am not scale invariant," when asked. See previous chapter notes.)

I’m kind of scared to know what Hamming would have thought about the current state of affairs.

Then a discussion of the usefulness of computers and digital instruments in the field of music production, which I think makes some good (if simplistic) points. Then:

This is the type of AI that I am interested in—what can the human and machine do together, and not in the competition which can arise.

But he then has some pretty dismal things to say about computers competing with people anyway (for jobs) and the ability of your average "coal miner" to elevate to the types of work that a computer won’t replace.

Of course robots will displace many humans doing routine jobs. In a very real sense, machines can best do routine jobs thus freeing humans for more humane jobs.

Yeah…about that.

I don’t think even Hamming could have foreseen that "the computers" would have come for the creative work before doing the menial work - because it turns out that’s easier to fake.

He again stabs at the medical profession, suggesting that doctors could likely be replaced with expert systems eventually. I think he’s doing the classic engineer underestimation of the huge complexity of squishy real-world jobs. I recognize it because I used to do it myself all the time and I know I was probably insufferable because of it.

To give Hamming credit, he mentions the legal problem: Who is to blame when a program makes a mistake? That’s an important question. But I don’t think he had the imagination or foresight in 1994 to ask the next question: How do we prevent money from being the only thing that determines how we use a given technology?

Oh dear:

If you have gone to a modern hospital you have seen the invasion of computers—the field of medicine has been very aggressive in using computers to do a better, and better job. Better, in cost reduction, accuracy, and speed. Because medical costs have risen dramatically in recent years you might not think so, but it is the elaboration of the medical field which has brought the costly effects that dominate the gains in lower costs the computers provide.

Oh Richard Wesley Hamming, my sweet summer child. If I could transport you thirty years forward to a hospital in the United States in 2026.

But, of course, computers can be enormously useful, helpful, and wonderful. I wouldn’t enjoy them if it weren’t so. But they must always, always, always be made to serve the user.

Then: Computers can be helpful with calculus. Some good practical points about robots!

He’s right about chess-playing computers eventually dominating. But this is a great quote:

But often the way the machine plays may be said “to solve the problem by volume of computations”, rather than by insight— whatever “insight” means! We started to play games on computers to study the human thought processes and not to win the game; the goal has been perverted to win, and never mind the insight into the human mind and how it works.

Wow, Hamming is all over the place with this chapter, bouncing around from idea to idea with no clear connection. We’re back to philosophy.

This is a big WTF for me:

As another example of the tacit belief in the lack of free will in others, consider when there is a high rate of crime in some neighborhood of a city many people believe the way to cure it is to change the environment—hence the people will have to change and the crime rate will go down!

Is he saying that there’s crime in a particular neighborhood because the people who live there are…just bad? I mean, I get the "free will" argument he’s going for, but I don’t think the argument makes any sense. Outside of horror movies, there aren’t just whole neighborhoods of bad people that exist for no external reason. Am I reading this wrong?

Here’s a paragraph near the end of the chapter that has taken on a completely inverted meaning to me in 2025:

The hard AI people will accept only what is done as a measure of success, and this has carried over into many other people’s minds without carefully examining the facts. This belief, “the results are the measure of thinking”, allows many people to believe they can “think” and machines cannot, since machines have not as yet produced the required results.

Switch it around and I think it’s just as true to say it allows many people to believe machines can “think” since machines have produced the appearance of the required results.

Ultimately, I can’t argue with this about the nature of intelligence:

We simply do not know what we are talking about; the very words are not defined, nor do they seem definable in the near future.

I mean, I’ve got my opinions about the meaning of "intelligence", but they’re just opinions.

Chapter 8: Think about "AI"

This chapter is just a couple pages with a summary of the previous two in the form of a 9-point self-evaluation.

People are sure the machine can never compete[…] It is difficult to get people to look at machines as a good thing to use whenever they will work[…] It is the combination of man-machine which is important, and not the supposed conflict which arises from their all too human egos.

What I find fascinating is how much my personal feelings differ from what they would have been 10 years ago. Maybe even as little as 5 years ago? I’m absolutely certain I would have been on Team Machine, cheering all of this stuff on. My opinion on this stuff has flipped a complete 180 degrees.

The machines aren’t the problem. They are just tools. I stand by that.

The problem, as ever, is who owns those tools and how those tools are used and for what motives. Do they help or harm people?

I have to give Hamming full credit for his foresight. The "machine learning" or "AI" field has turned out to be a big deal after all, for better or worse. I don’t think that was obvious to everyone in 1994. (I’m not 100% sure where the "AI" hype cycle was in the early 1990s, but I don’t think it was doing well?) I believe he was swimming upstream with these opinions and in the face of all the previous failed "AI" endeavors over the years.

Anybody else got warm clothes and a fireplace ready like me, waiting in hopeful anticipation of the next nice, long, cozy "AI" winter?

Chapter 9: A mathematician’s journey to n-Space

Here’s some math. Hamming and the word "obvious":

| Word | Avg. per chapter | Chapter 9 |
|---|---|---|
| "obvious" | 45 / 30 = 1.5 | 2 |
| "obviously" | 23 / 30 = 0.767 | 3 |

Where am I going with this?

I have no idea.

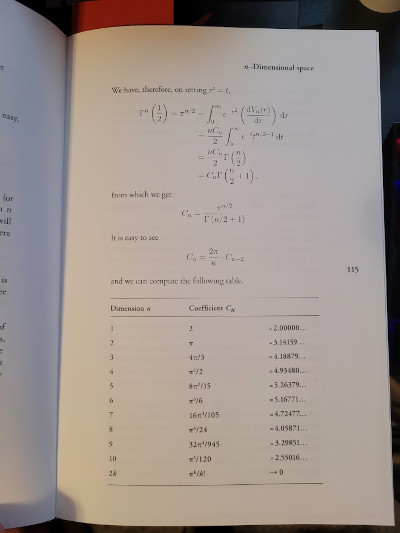

I read every word of it, but I really don’t understand what’s going on here. Many of the pages look like this:

And I wish I understood the significance of the volume shrinking in relation to the surface area of a higher-dimensional sphere:

This has importance in design; it means almost surely the optimal design will be on the surface and will not be inside as you might think from taking the calculus and doing optimizations in that course. The calculus methods are usually inappropriate for finding the optimum in high dimensional spaces. This is not strange at all; generally speaking the best design is pushing one or more of the parameters to their extreme— obviously you are on the surface of the feasible region of design!
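For my own benefit, here's a quick numerical sketch (mine, not Hamming's) using the standard formula for the volume of an n-dimensional unit ball, V(n) = π^(n/2) / Γ(n/2 + 1). The second function is, I think, the heart of his point: volume grows like r^n, so the fraction of a ball lying within a thin layer of its surface is 1 - (1 - thickness)^n, which races toward 1 as n grows. If nearly all the volume is near the surface, it seems less surprising that an optimum tends to land there. Treat this as my interpretation, not a transcription of his argument.

```python
import math


def unit_ball_volume(n: int) -> float:
    """Volume of the unit ball in n dimensions: pi^(n/2) / Gamma(n/2 + 1)."""
    return math.pi ** (n / 2) / math.gamma(n / 2 + 1)


def outer_shell_fraction(n: int, thickness: float = 0.1) -> float:
    """Fraction of an n-dimensional ball's volume within `thickness`
    (as a fraction of the radius) of its surface.

    Volume scales as radius**n, so the inner ball of radius (1 - thickness)
    holds (1 - thickness)**n of the total, and the shell holds the rest.
    """
    return 1.0 - (1.0 - thickness) ** n


for n in (2, 3, 5, 10, 20, 100):
    print(f"n={n:3d}  unit-ball volume={unit_ball_volume(n):8.4f}  "
          f"outer 10% shell holds {outer_shell_fraction(n):.2%}")
```

The unit ball's volume peaks at n = 5 and then shrinks toward zero, while by n = 100 the outer 10% shell holds more than 99.99% of whatever volume is left.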

I took Hamming at his word in the introduction in which he wrote:

If you find the mathematics difficult, skip those early parts. Later sections will be understandable provided you are willing to forgo the deep insights mathematics gives into the weaknesses of our current beliefs. General results are always stated in words, so the content will still be there but in a slightly diluted form.

If I don’t respond to "obviously" with, "Yes, of course, Hamming, my good man, it’s as clear as day!" does that mean I am meant to just skip it?

On one hand, I don’t think the mathematical insights in this chapter are translating very well to prose. It’s not that I don’t believe they exist, it’s just that I don’t think Hamming has succeeded in conveying them to me. And I think I did my part as a reader. On the other hand, I’m completely willing to accept that Hamming’s intended audience is, after all, post-graduate engineers and I’m not one of those. So maybe it will all be explained later and I’ll feel better about this.

(I also realize I’m outing myself as one of "those" programmers who doesn’t do some napkin calculus before writing a for loop. Yeah, sorry, guilty as charged.)

Second pass: Okay, so I know I sound resentful and defensive here. I’m not proud of it, but I’m going to leave it intact. The reason is that I did feel resentful while reading this chapter. And you know why? Because I was still stinging from Chapter 4. Hamming is allowed to think that mathematical notation is the one true tool of the Great Thinker. He’s certainly not alone. Maybe someday I’ll join him.

But you know what? I am curious how this higher-dimensional thinking will be applied later. I just hope I can understand it.

The nice thing for me is that he teased "Hamming distance" (1950). That sounded familiar. I looked it up and was pleasantly surprised to learn that it’s a concept I am familiar with: edit distance (wikipedia.org). I just know it by a different metric, the Levenshtein distance (1965). Since Hamming distance only allows substitutions, it can only compare strings of the same length. Levenshtein also allows insertions and deletions, so it has no such limit for comparing the similarity of strings.
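Since this is one of the few spots where the book overlapped with code I've actually written, here's a small Python sketch of both distances. The Levenshtein version is the usual dynamic-programming approach, keeping one row of the table at a time; the function names are my own.

```python
def hamming_distance(a: str, b: str) -> int:
    """Count positions where two equal-length strings differ (substitutions only)."""
    if len(a) != len(b):
        raise ValueError("Hamming distance needs strings of equal length")
    return sum(x != y for x, y in zip(a, b))


def levenshtein_distance(a: str, b: str) -> int:
    """Minimum number of insertions, deletions, and substitutions turning a into b."""
    prev = list(range(len(b) + 1))  # distance from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if the characters match)
            ))
        prev = curr
    return prev[-1]


print(hamming_distance("karolin", "kathrin"))     # 3
print(levenshtein_distance("kitten", "sitting"))  # 3
```

The second pair also shows why Hamming distance isn't defined there: "kitten" and "sitting" have different lengths, so getting from one to the other needs an insertion.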

I’m really looking forward to the information theory stuff in this book. It looks like that may begin in the next chapter?

Chapter 10: (En)coding theory 1

Yay! Something I understand!

"Coding" in this chapter means encoding and decoding data for transmission or storage. Some helpful links:

Since this is all about the ways in which we can represent a stream of data (in fixed- or variable-length chunks, with or without some sort of checksum), I feel quite at home with this subject right now. I’m working on a space-efficient emoji picker utility and am neck-deep in Unicode, UTF-8 encoding, multi-character sequences, and compression.

A prefix code is simply a way to know where one "word" in the encoding ends and the next begins, without any separators. To accomplish this, no word can be the beginning (the prefix) of any other word. The alternative is to have all "words" take up a fixed length of space, but that can be very inefficient.

As a very simple example, a leading 0 marks the start of each word in this binary sequence. The only data we can encode are different quantities of 1:

0111101111111110111111111
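Decoding that toy scheme is almost a one-liner in Python (a sketch; `decode_runs` is just my name for it). Strictly speaking, this scheme isn't a prefix code: the word 01 is the beginning of the word 011, so the decoder has to see the next 0, or the end of the stream, before it knows a word is finished.

```python
def decode_runs(bits: str) -> list[int]:
    """Decode a stream where each word is a 0 followed by some 1s.

    The value of each word is the number of 1s it contains.
    """
    if not bits.startswith("0"):
        raise ValueError("the stream must begin with a 0 marker")
    # Splitting on the 0 markers leaves an empty string before the first one,
    # then one run of 1s per word.
    return [len(run) for run in bits.split("0")[1:]]


print(decode_runs("0111101111111110111111111"))  # [4, 9, 9]
```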

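The Kraft sum is neat. For a binary prefix code, add up 2^(-length) over every word: the total can never be more than 1, and if it comes out to exactly 1, the code has no room left over (you can’t shorten any word and still have a prefix code).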

A perfect example would be a binary encoding of five "words" like so:

word1 = 0, word2 = 10, word3 = 110, word4 = 1110, word5 = 1111

Nothing is wasted. The first word is a single bit. And even though word5 doesn’t end with a 0 like the others, we know 1111 is word5 because no other word starts with four `1`s.
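Here's a minimal sketch of both ideas for the five-word code above: the Kraft sum (using exact fractions, so "exactly 1" really means exactly 1) and a decoder that emits a word the moment its last bit arrives. That instant decoding is what being prefix-free buys you. The dictionary and function names are mine.

```python
from fractions import Fraction

# The five-word code from the example, mapping bit strings to words.
CODE = {"0": "word1", "10": "word2", "110": "word3", "1110": "word4", "1111": "word5"}


def kraft_sum(codewords) -> Fraction:
    """Sum of 2**-len(w) over all binary code words w."""
    return sum((Fraction(1, 2 ** len(w)) for w in codewords), Fraction(0))


def is_prefix_free(codewords) -> bool:
    """True if no code word is the beginning of a different code word."""
    words = list(codewords)
    return not any(a != b and b.startswith(a) for a in words for b in words)


def decode(bits: str, code: dict) -> list:
    """Read bits left to right, emitting a word as soon as the buffer matches one.

    This greedy approach is only safe because the code is prefix-free.
    """
    out, buf = [], ""
    for bit in bits:
        buf += bit
        if buf in code:
            out.append(code[buf])
            buf = ""
    if buf:
        raise ValueError(f"stream ended in the middle of a word: {buf!r}")
    return out


print(kraft_sum(CODE))             # 1
print(is_prefix_free(CODE))        # True
print(decode("1111010110", CODE))  # ['word5', 'word1', 'word2', 'word3']
```

Drop word5 from the code and the sum falls to 15/16, which is the Kraft sum's way of saying some room is now going unused.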

Okay, so that’s that chapter.

I’m curious where this is going in terms of the book’s "Learning to Learn" premise. The next chapter continues the topic.

Chapter 11: (En)coding theory 2

I keep telling myself I’m going to get quicker at writing about this book. Let’s see if I can speed it up starting now.

Part of the reason I think I will be able to speed up is that these chapters in the middle appear to be fairly uniform: they explore Hamming’s "happy places" - the topics he knows best and to which he has contributed the most. His claim is that he’s demonstrating the style of thinking that results in great engineering work (and "Learning to Learn"). It’s hard to argue with someone who did, indeed, accomplish many foundational things in the field.

Another, less pleasant, reason is that it gets increasingly math-heavy and I’m afraid I just don’t have the classical engineering background to appreciate Hamming’s gems. Pearls before swine. Oink.

Having said that, this chapter isn’t all that math-heavy. It’s got pretty readable explanations of Huffman codes, ISBN encoding, etc.
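To check my understanding, here's a minimal Python sketch of two of those ideas (my code, not the book's). `huffman_code` is the standard greedy construction: keep merging the two least probable entries until a single tree is left. `isbn10_is_valid` uses the usual ISBN-10 rule that the ten digits, weighted 10 down to 1, must sum to a multiple of 11, with a final X standing for 10. The example probabilities are just illustrative.

```python
import heapq
from itertools import count


def huffman_code(probs: dict) -> dict:
    """Build a binary Huffman code for {symbol: probability}."""
    tiebreak = count()  # unique counter so the heap never compares dicts
    heap = [(p, next(tiebreak), {sym: ""}) for sym, p in probs.items()]
    heapq.heapify(heap)
    if len(heap) == 1:
        # A lone symbol still needs one bit to be sent at all.
        return {sym: "0" for sym in heap[0][2]}
    while len(heap) > 1:
        # Merge the two least probable subtrees, prefixing 0 and 1.
        p0, _, left = heapq.heappop(heap)
        p1, _, right = heapq.heappop(heap)
        merged = {sym: "0" + bits for sym, bits in left.items()}
        merged.update({sym: "1" + bits for sym, bits in right.items()})
        heapq.heappush(heap, (p0 + p1, next(tiebreak), merged))
    return heap[0][2]


def isbn10_is_valid(isbn: str) -> bool:
    """ISBN-10 check: digits weighted 10, 9, ..., 1 must sum to a multiple of 11."""
    chars = [c for c in isbn if c not in "- "]
    if len(chars) != 10:
        return False
    total = 0
    for weight, c in zip(range(10, 0, -1), chars):
        if c in "0123456789":
            value = int(c)
        elif c in "Xx" and weight == 1:  # X (= 10) is only allowed last
            value = 10
        else:
            return False
        total += weight * value
    return total % 11 == 0


probs = {"a": 0.4, "b": 0.2, "c": 0.2, "d": 0.1, "e": 0.1}
code = huffman_code(probs)
print(code)
print(sum(p * len(code[s]) for s, p in probs.items()))  # average bits per symbol, about 2.2
print(isbn10_is_valid("0-306-40615-2"))  # True
print(isbn10_is_valid("0-306-40651-2"))  # False: two adjacent digits swapped
```

The weighted sum is what lets the ISBN check catch swapped neighboring digits, which a plain digit sum would miss.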

The most interesting part, to me, is the final paragraph, which I’ll quote in whole because I think it’s quite good and worth thinking about for everyone who makes software:

"When you think about the man-machine interface one of the things you would like is to have the human make comparatively few key strokes—Huffman encoding in a disguise! Evidently, given the probabilities of you making the various branches in the program menus, you can design a way of minimizing your total key strokes if you wish. Thus the same set of menus can be adjusted to the work habits of different people rather than presenting the same face to all. In a broader sense than this, “automatic programming” in the higher level languages is an attempt to achieve something like Huffman encoding so that for the problems you want to solve require comparatively few key strokes are needed, and the ones you do not want are the others."

This isn’t news to anyone who has studied user interface design. But I don’t think enough effort has gone into the ergonomics of programming languages. Putting it in terms of keystroke efficiency is a great way to think about it.

Chapter 12: Error-correcting codes

Hamming says it explicitly in the first paragraph of this chapter: we will now explore his most famous work, the error-correcting codes known as Hamming codes (wikipedia.org).

It’s about the discovery process as only Hamming can tell it. In principle, it’s the process that probably underlies most discoveries - loading tons of information and ideas into your head, thinking really hard about them for days, weeks, months, or even years, and then having sparks of inspiration from the subconscious mind in dreams, in the shower, or in the back seat of a company mail delivery car (Hamming’s own story).

Hamming’s description of his discovery is very dense and I had to read it carefully, but I like the accompanying graphics of a square of intersecting bits, or a triangle of bits, or a cube. After the cube, he’s talking about n dimensions. (So I guess Chapter 9 is, indeed, paying off, which answers that question.)
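For anyone who, like me, finds code easier to read than cubes, here's a minimal Python sketch of the classic (7,4) Hamming code as it's usually presented today (parity bits at positions 1, 2, and 4). It's not a transcription of Hamming's own pictures. The neat trick is that XORing together the positions of all the 1 bits gives 0 for a valid code word, and gives the position of the flipped bit when exactly one bit has gone wrong.

```python
def hamming74_encode(data: list[int]) -> list[int]:
    """Encode 4 data bits into a 7-bit code word (positions 1..7).

    Parity bits sit at positions 1, 2, 4; data bits at 3, 5, 6, 7.
    """
    d3, d5, d6, d7 = data
    p1 = d3 ^ d5 ^ d7  # covers positions 1, 3, 5, 7
    p2 = d3 ^ d6 ^ d7  # covers positions 2, 3, 6, 7
    p4 = d5 ^ d6 ^ d7  # covers positions 4, 5, 6, 7
    return [p1, p2, d3, p4, d5, d6, d7]


def hamming74_decode(word: list[int]) -> tuple[list[int], int]:
    """Correct up to one flipped bit; return (data bits, error position or 0)."""
    bits = list(word)
    syndrome = 0
    for pos in range(1, 8):
        if bits[pos - 1]:
            syndrome ^= pos  # XOR of the positions of all the 1 bits
    if syndrome:
        bits[syndrome - 1] ^= 1  # the syndrome names the bad position
    return [bits[2], bits[4], bits[5], bits[6]], syndrome


word = hamming74_encode([1, 0, 1, 1])
word[4] ^= 1  # corrupt position 5 "in transit"
print(hamming74_decode(word))  # ([1, 0, 1, 1], 5)
```

Seven bits to carry four is the price; in exchange, any single flipped bit gets repaired without asking the sender to try again.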

It’s clever and creative thinking, but Hamming wants you to know that:

-

He had uniquely primed his mind for the challenge before it came along.

-

He was ready for it when it happened.

-

It still took half a year of thinking.

I like this chapter a lot. The book could end here and the core premise would be mostly fulfilled.

Chapter 13: Information theory

Right off the bat, I’m liking this:

Information Theory was created by C. E. Shannon in the late 1940s. The management of Bell Telephone Labs wanted him to call it “Communication Theory” as that is a far more accurate name, but for obvious publicity reasons “Information Theory” has a much greater impact—this Shannon chose and so it is known to this day.

The name "information theory" to describe Shannon’s work never made sense to me, so I’m pleased that Hamming and others felt it should be called "communication theory" instead! That makes way more sense.

Also interesting to note: Shannon’s major Bell System Technical Journal article from 1948 is titled A Mathematical Theory of Communication (wikipedia.org).

Shannon’s definition of information involves "surprise" (Hamming’s word, which I like), or "information entropy" (Shannon’s). In other words, the unpredictable or "surprising" parts of a communication are the important bits. If you already knew what the entire stream of data was going to be, you wouldn’t need to send it in the first place, other than to verify that the sender exists and is still doing what you expect.
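Shannon's measure is easy to play with in code. Here's a minimal sketch that estimates the average surprise per symbol, H = Σ p·log2(1/p), from the symbol frequencies in the message itself. That's a simplifying assumption on my part: real sources have probabilities that depend on context, and this ignores context entirely.

```python
import math
from collections import Counter


def entropy_bits(message: str) -> float:
    """Average surprise in bits per symbol, H = sum(p * log2(1/p)),
    estimated from the symbol frequencies within `message`."""
    if not message:
        return 0.0
    n = len(message)
    return sum((c / n) * math.log2(n / c) for c in Counter(message).values())


print(entropy_bits("aaaaaaaa"))  # 0.0: no surprise at all
print(entropy_bits("abababab"))  # 1.0: one bit per symbol
print(entropy_bits("abcdabcd"))  # 2.0: two bits per symbol
```

Notice that "abababab" scores a full bit per symbol even though it's perfectly predictable once you spot the pattern. That's the context-free assumption showing through.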

Other major contributions from that paper:

-

A formal definition of "redundancy" (which goes hand-in-hand with the average entropy per symbol)

-

Statistical limits to data compression

-

The word "bit"! (For this, Shannon credits mathematician John Tukey)

After the first page, we’re treated to some pages of math, which I’m sure is very interesting and enlightening if you can read it.

Anyway, why does Hamming bring up information theory? Honestly, I kinda lost the plot. Luckily Hamming straight up tells us: The purpose of this chapter is to warn you about initial definitions and finding what you want to find.

For example, he makes a good point about so-called "IQ" tests: Defining IQ is completely circular. The test can be made internally consistent, but it can only measure what it was designed to measure! Hamming considers testing in the "soft sciences" to be hopeless and I think he’s got a good point with this one.

Armed with this purpose, it would be interesting to go back and re-read the chapter.

I did end up re-reading significant portions of this book in writing the page you’re reading now. But I did not re-read this particular chapter.

Chapter 14: Digital filters 1

Hey, you know who knows a lot about digital filters? Richard Freaking Hamming! Oh boy!

First, we’ve got a story about how Bell Labs lost engineers who didn’t want to switch to the new-fangled analog computers. Then they lost more engineers when they switched to digital computers. The engineers, you see, were entrenched in the old ways and didn’t want to learn the new stuff.

I gotta say, reading this is a really weird experience for me. I’ve been in my programming career for over 25 years now and…in the year 2025, I sympathize with those poor disgruntled engineers!

At this point, I 100% completely understand why somebody would just say, "screw it," and leave when things shifted away from the skills they’d spent their entire adult life building up.

2026 Dave here. I’ve already gone down this path elsewhere on this page, so I’m deleting a couple redundant paragraphs.

Moving on, Hamming tells an amusing anecdote about the time he talked an expert into writing a book about digital filters and how, over time, Hamming ended up being the author of that book. It’s a sort of "Ship of Theseus" situation, except the initial ship never existed.

I also appreciated that Hamming took the time to figure out the underlying mathematical basis for analyzing signals on his own. This allowed him to make connections or have intuitions that other engineers missed. This is likely the most frequently recurring theme in the book. Hamming calls it having a "prepared mind."

We end the chapter with half a dozen pages of math.

Chapter 15: Digital filters 2

Like the previous chapter, Hamming starts by reminding us that sometimes an improvement of a process by an order of magnitude doesn’t just produce a better process, it allows a whole different type of process.

(I know this to be true. When I reduce the friction in my own personal projects, the effect is often more profound than just being faster at it. Instead, it can be the difference between me doing that project and not doing it at all. Too much friction can kill my interest in a thing.)

In the same way, the speed afforded by computers allows us to do things that would be unthinkable by hand - like make 3D video games. I know I made this particular point earlier.

The rest of the chapter is about some impressive mathematical things. I say "impressive" without any snark at all because to do his work, Hamming clearly did a ton of deep research and deep thinking. I respect that a lot. The result is the "Hamming window."
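For the curious, here's a sketch of the Hamming window in its commonly quoted form, w[n] = 0.54 - 0.46·cos(2πn / (N - 1)), plus one hypothetical way to use it: take a truncated Fourier series for an ideal low-pass filter and taper it with the window to calm down the ripples that truncation causes. The function names, tap count, and cutoff are my own illustrative choices, not Hamming's.

```python
import math


def hamming_window(n_points: int) -> list[float]:
    """Hamming window: w[n] = 0.54 - 0.46 * cos(2*pi*n / (N - 1))."""
    if n_points == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2 * math.pi * n / (n_points - 1))
            for n in range(n_points)]


def windowed_lowpass(n_taps: int, cutoff: float) -> list[float]:
    """Hypothetical example: truncate the ideal low-pass filter's Fourier
    series (cutoff in cycles per sample, 0 < cutoff < 0.5) to n_taps terms
    centered on zero, then taper it with the Hamming window."""
    mid = (n_taps - 1) / 2
    window = hamming_window(n_taps)
    taps = []
    for n in range(n_taps):
        t = n - mid
        # Ideal low-pass coefficient; the t == 0 case is the limit 2 * cutoff.
        ideal = 2 * cutoff if t == 0 else math.sin(2 * math.pi * cutoff * t) / (math.pi * t)
        taps.append(ideal * window[n])
    return taps


print([round(w, 3) for w in hamming_window(5)])
taps = windowed_lowpass(51, 0.1)
print(round(sum(taps), 4))  # gain at zero frequency, which should be close to 1
```

The window is near 1 in the middle and drops to 0.08 at the ends, so the abrupt cutoff of the truncated series becomes a gentle fade.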

He’s got some advice on fame: Rather than self-promoting, let other people quote and cite you - it looks better and people respect it more. Sounds good and I completely agree, but I suspect there’s a bit of a chicken-and-egg problem at play.

Chapter 16: Digital filters 3

"We are now ready to consider the systematic design of nonrecursive filters."

Oh, you know it, Hamming! They are pearls before this swine, but don’t think for a moment that my oinks and grunts are anything but the highest admiration.

Okay, so we’ve got some eigenvalues. And, as you can see, differentiation magnifies. Gotta truncate that infinite Fourier series. And here are some pretty graphs! Boy, I really like this single extra tan ink used in the printing of this Stripe edition. Really classy. Is Stripe Press related to Stripe, Inc.? Oh, they are. Oh geez, the people involved in…I’ll try to pretend I don’t know that.

Back to the book.

Ah, the old Gibbs effect from the previous chapter. I didn’t mention that in my notes above, but it was there. I remember. We all remember.
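Since the Gibbs effect keeps coming up, here's a small numerical sketch of it using a textbook example (a ±1 square wave, my choice, not necessarily Hamming's). Cut the Fourier series off after a finite number of terms and the partial sum overshoots the jump by roughly 9% of the jump's size. Adding more terms squeezes the overshoot closer to the jump but doesn't make it any smaller, which is one reason to reach for a window like Hamming's.

```python
import math


def square_wave_partial_sum(x: float, n_terms: int) -> float:
    """Partial Fourier sum of a square wave that jumps from -1 to +1 at x = 0:
    (4/pi) * (sin(x) + sin(3x)/3 + sin(5x)/5 + ...), using n_terms odd harmonics."""
    return 4 / math.pi * sum(math.sin(k * x) / k for k in range(1, 2 * n_terms, 2))


def peak_near_jump(n_terms: int, samples: int = 4000) -> float:
    """Largest value of the partial sum on a fine grid over 0 < x <= 0.2.

    The first (tallest) peak sits at x = pi / (2 * n_terms), so this grid
    catches it whenever n_terms is at least 8.
    """
    xs = (0.2 * i / samples for i in range(1, samples + 1))
    return max(square_wave_partial_sum(x, n_terms) for x in xs)


for n in (10, 50, 100):
    # The true square wave never exceeds 1; the partial sums do, by about 0.18.
    print(n, round(peak_near_jump(n), 4))
```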

How did Kaiser know to use the exponent 0.4? Hamming asked him. Kaiser said that he tried 0.5 and it was too big. Knowledge plus the ability to use the computer for experimentation!

Okay, here’s a great story and moral from Hamming:

"…it was completely impracticable to do with the equipment I had. Some years later I had an internally programmed IBM 650 and he remarked on it again. All I remembered was it was one of Tukey’s few bad ideas; I completely forgot why it was bad— namely because of the equipment I had at time. So I did not do the FFT, though a book I had already published (1961) shows I knew all the facts necessary, and could have done it easily!

"Moral: when you know something cannot be done, also remember the essential reason why, so later, when the circumstances have changed, you will not say, “It can’t be done”. […] When you decide something is not possible, don’t say at a later date it is still impossible without first reviewing all the details of why you originally were right in saying it couldn’t be done."

(Emphasis mine.)

I have made this exact mistake myself, so I really appreciate this one.

Chapter 17: Digital filters 4

"We now turn to recursive filters, which have the form…"

Surprise! Here’s some math! Oink oink.
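For my fellow swine: the difference from the nonrecursive filters of the previous chapters is that a recursive filter feeds its own previous outputs back in. The simplest example I know is exponential smoothing, y[n] = a·y[n-1] + (1 - a)·x[n]. That's my choice of illustration, not the general form Hamming gives. Because of the feedback, a single input pulse echoes forever (shrinking by a factor of a each step), which is why recursive filters like this one are often called infinite impulse response (IIR) filters.

```python
def exponential_smoother(xs, a: float) -> list:
    """Recursive filter y[n] = a * y[n-1] + (1 - a) * x[n], with y[-1] = 0.

    0 <= a < 1; larger a means heavier smoothing (and a slower response).
    """
    y = 0.0
    out = []
    for x in xs:
        y = a * y + (1 - a) * x  # the new output depends on the previous output
        out.append(y)
    return out


print(exponential_smoother([1, 0, 0, 0, 0], 0.5))  # [0.5, 0.25, 0.125, 0.0625, 0.03125]
print(exponential_smoother([1, 1, 1, 1, 1], 0.5))  # [0.5, 0.75, 0.875, 0.9375, 0.96875]
```

One multiply-add per sample gets you smoothing that would take a very long nonrecursive filter to imitate, which is the usual argument for recursion here.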

This one has a great analogy/story about adjusting the temperature in a shower when the water pipes have been changed to a larger diameter. This becomes a moment to talk about the problem with experts. Don’t take things as fact that you don’t, yourself, actually know to be facts. (And in the year 2025, in an age of conspiracy, I’d like to interject that taking skepticism too far can also be a problem, so don’t do that either. There’s a sensible middle ground.)

Chapter 18: Simulation 1

I’ve played Sim City, so I’m a bit of an expert. Oh, never mind, he means like Los Alamos nuclear weapon testing. That kind of simulation.

I think this chapter is great. I know very little about setting up scientific simulations, so it was basically all new to me. The comparison of atomic bomb simulations versus weather simulations is really interesting: which would you guess is the more stable simulation? The answer is the bombs. Hamming says weather is less stable because small changes early on can have huge effects later.
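Hamming's point about weather can be felt in a few lines with a standard toy model of chaos, the logistic map x → 4x(1 - x). To be clear, this isn't a weather model and isn't one of Hamming's examples; it just shows the same sensitivity. Two runs that start 0.0000000001 apart track each other closely for a while and then become completely unrelated.

```python
def logistic_trajectory(x0: float, steps: int, r: float = 4.0) -> list[float]:
    """Iterate x -> r * x * (1 - x), a standard toy model of chaotic behavior."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs


a = logistic_trajectory(0.3, 60)
b = logistic_trajectory(0.3 + 1e-10, 60)  # a tiny change in the starting point
for step in range(0, 61, 10):
    print(step, abs(a[step] - b[step]))
```

Each step can at most quadruple the gap, so the early steps stay tiny, but on average the gap roughly doubles per step, so a difference in the tenth decimal place swamps everything after a few dozen steps.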

Volume of simulation is no substitute for intimate familiarity with the thing being simulated.

Expert jargon is both useful (quickly communicate with other experts) and a curse (if it locks you into rigid thinking or serves excessive gate-keeping). I’ve seen examples of all of this, good and bad, in software engineering.

Even math equations are open to interpretation (Hamming has an example), so it’s vital that a subject expert be part of creating the simulation to make sure it makes sense.

Chapter 19: Simulation 2

Hamming has strong words for simulations which do nothing to verify that they are accurately reproducing a useful representation of reality.

(His anecdotes remind me of a talk I watched recently by Emery Berger in which he mentioned a paper titled Growth in a Time of Debt (2010) by Reinhart and Rogoff which was based on a massive spreadsheet of data. The paper has been widely cited as the justification for "austerity" measures in Europe (notably Greece, as exemplified by a picture of a building on fire on one of Berger’s slides). When examined by researchers, the spreadsheet was found to be flawed - the correct data and calculations give the opposite result!)

There’s also a discussion of analog versus digital computers for simulation. It’s interesting from a historical perspective, but digital is so fast now that analog is rarely even considered - though analog seems neat because the "calculating" is done by real-world physics and results can be basically instantaneous.

Chapter 20: Simulation 3

This chapter starts with the old "Garbage In, Garbage Out" concept. Hamming’s got examples of garbage producing garbage…but also an example of somewhat garbage data being quite useful.

And then there’s the problem of self-deception. We have double-blind studies because even well-meaning researchers can’t help but see patterns that reinforce what they want to see.

So it is with simulation. Hamming doesn’t have any easy answers other than to be very careful and intelligent about making sure your simulation is set up correctly and that you’re not fooling yourself.

Chapter 21: Fiber optics

This subject was unexpected, but Hamming explains why he chose it. It was engineering history he was able to observe first-hand from its infancy.

I do find fiber optics fascinating, but this chapter has made me realize how little I know about the subject. I didn’t even know why we use small diameters for fiber optic cables. You can work out the reason by drawing it on a piece of paper: The light has less opportunity to bounce around, so it travels more efficiently, even with lots of bends.

Fiber is super fast, harder to tap, less prone to electromagnetic disturbance, and the raw material is one of the most abundant materials on Earth! What’s not to love?

I don’t know what the current state-of-the-art is with making light-based circuits. But the whole concept is fascinating. Consider this: A beam of light can cross right through another beam of light, no problem, which is something you cannot do with copper traces!

Here’s a pretty wild bit of "retro-futurism" by Hamming. I’m really curious when he penned this bit. The book was first published in 1996, but this doesn’t really sound like somebody writing in 1996?

I’ll quote these two paragraphs in their entirety without further commentary:

Let me now turn to predictions of the immediate future. It is fairly clear in time “drop lines” from the street to the house (they may actually be buried but are probably still called “drop lines”!) will be fiber optics. Once a fiber optic wire is installed then potentially you have available almost all the information you could possibly want, including TV and radio, and possibly newspaper articles selected according to your interest profile (you pay the printing bill which occurs in your own house). There would be no need for separate information channels most of the time. At your end of the fiber there are one or more digital filters. Which channel you want, the phone, radio or TV can be selected by you much as you do now, and the channel is determined by the numbers put into the digital filter—thus the same filter can be multipurpose if you wish. You will need one filter for each channel you wish to use at the same time (though it is possible a single time sharing filter would be available) and each filter would be of the same standard design. Alternately, the filters may come with the particular equipment you buy.

But will this happen? It is necessary to examine political, economic, and social conditions before saying what is technologically possible will in fact happen. Is it likely the government will want to have so much information distribution in the hands of a single company? Would the present cable companies be willing to share with the telephone company and possibly lose some profit thereby, and certainly come under more government regulation? Indeed, do we as a society want it to happen?

Chapter 22: Computer-aided instruction

I love that Hamming begins his chapter with a quote attributed to Euclid that "there is no royal road to geometry."

Relevant: Just this very morning, I heard this fantastic quote from Jennifer Jacobs (ucsb.edu) on a podcast:

"I guess if you think of programming from the interface or environment perspective, yes, we should make things easy. Important asterisk there, which is: easy without restricting the important forms of power, or expression, that we want to deliver. But if you think of programming as a way of thinking, it is almost not relevant to think about how to make this way of thinking easy. We want to provide pathways into engaging this way of thinking, but you can’t trivialize the difficulty of learning abstraction."

I think Hamming is absolutely right to be skeptical of anything that claims to make people consistently learn faster. I think this is one of those "no silver bullet" things. There are simply no known shortcuts to doing the hard work of thinking and acquiring knowledge.

There are plenty of great learning methods that help, of course. But they all require effort on the part of the learner. Same thing with exercise!

I love his anecdote:

"When I first came to the Naval Postgraduate School in 1976 there was a nice dean of the extension division concerned with education. In some hot discussions on education we differed. One day I came into his office and said I was teaching a weight lifting class (which he knew I was not). I went on to say graduation was lifting 250 pounds, and I had found many students got discouraged and dropped out, some repeated the course, and a very few graduated. I went on to say thinking this over last night I decided the problem could be easily cured by simply cutting the weights in half—the student in order to graduate would lift 125 pounds, set them down, and then lift the other 125 pounds, thus lifting the 250 pounds."

Again, computers can be helpful for training. Hamming’s example is flight simulation for pilots. But they’re tools, not shortcuts to learning.

I can’t write about computer training without invoking another thinker. I’ve been a fan of Seymour Papert ever since I read his book Mindstorms, and I do believe that computers can be hugely helpful in making abstract concepts visible and concrete by letting you play with them.

Papert co-created Logo, the programming language and environment in which you control a "turtle" that can move around and draw lines with a pen (real or digital).

I’ll quote again from episode 48 of the Future of Coding (FoC) podcast (because it’s fresh in my mind). Here’s Steve Krouse:

"I was a Logo kid. I was a math-phobe who was exposed to Logo. I learned how to draw a circle. I eventually intuited on my own, “Oh wait, if I just walk up a little bit and turn a little bit, and do that 360 times, I got a circle.” Then when I eventually went to calculus, it was just so easy to understand what a derivative was, because I’ve walked along curves before."
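Krouse’s recipe ("walk up a little bit and turn a little bit, and do that 360 times") is easy to check numerically. Below is a minimal sketch in Python rather than Logo, with no graphics: the function name `walk_circle` and the step length of 1 are my own illustrative assumptions, not anything from Logo itself. It simulates a turtle that moves forward one unit and then turns one degree, 360 times, and shows that the path closes on itself with every corner at essentially the same distance from the center.

```python
import math

def walk_circle(steps=360, step_length=1.0):
    """Hypothetical Logo-style turtle: forward a little, turn a little, repeat.

    Returns the list of (x, y) positions visited, starting at the origin.
    """
    x, y = 0.0, 0.0
    heading = 0.0              # degrees; 0 means pointing along +x
    turn = 360.0 / steps       # the turns add up to one full revolution
    points = [(x, y)]
    for _ in range(steps):
        # Like Logo's FORWARD: move along the current heading
        x += step_length * math.cos(math.radians(heading))
        y += step_length * math.sin(math.radians(heading))
        points.append((x, y))
        # Like Logo's LEFT: turn counter-clockwise by a small angle
        heading += turn
    return points

points = walk_circle()

# The turtle ends (up to floating-point error) where it started.
end_x, end_y = points[-1]
print(end_x, end_y)

# Every corner sits at (nearly) the same distance from the center,
# which is why the 360-sided polygon looks like a circle.
corners = points[:-1]
cx = sum(p[0] for p in corners) / len(corners)
cy = sum(p[1] for p in corners) / len(corners)
radii = [math.hypot(px - cx, py - cy) for px, py in corners]
print(min(radii), max(radii))
```

With a step length of 1, the perimeter is 360 units, so the radius comes out close to 360/(2π), about 57.3. Each step points along the direction the curve is heading at that moment, which is the intuition Krouse says carried him into derivatives.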

Chapter 23: Math!!!

I really respect Hamming’s line of thinking in this chapter. More than any of his impressive achievements, this chapter is where I get a glimpse of the depth of his philosophical thinking.

Hamming says that we rarely talk about what math actually is, but we know it’s useful anyway.

He demonstrates a willingness to be intellectually vulnerable.

"I had to see an analogy between parts of the problem and a mathematical structure which at the start I barely understood."

Not everything we know can be put into words. We can describe things with words, but those words are not a replacement for the actual thing. Hamming even suggests that human language may go "beyond" the limits of Gödel’s incompleteness theorems.

I’ll be honest: this chapter had me completely rethinking how I’d approached the earlier chapters.

This is where Hamming won me over. It made me reconsider why he had rubbed me the wrong way. Where I had seen arrogance, I now saw a considered position based on life experience and deep introspection. I realized I didn’t have to agree with any of Hamming’s conclusions to respect how he got there.

Chapter 24: Quantum mechanics

Here Hamming dives even further into philosophy. But first, he gives an introduction to quantum mechanics that even I could understand.

His conclusions make sense to me, even if it’s just to admit that there’s a lot we don’t understand about how the Universe works.

Starting with this chapter, the rest of this enormous rambling "review" gets a lot terser because I read the rest of the book in one go, then re-read the last chapters while writing my notes in pen in a spiral notebook. This was very helpful for limiting the word count to what I was willing to write in ink!

Chapter 25: Creativity

It may seem strange to some people for an engineer to talk about creativity. But you (dear reader) and I know how vital creativity is to making progress in math, physical science, engineering, and computer science.

Hamming observes that some of the most valuable creativity is putting two or more existing things together. Throughout the book, Hamming implores us to study widely so that we can be on the lookout for insights that cross subject boundaries.

Another observation made throughout the book: great creative work tends to come from a period of intense thinking followed by a temporary abandonment, which allows the subconscious to work on the problem. Many of these insights turn out to be false starts (I’ve experienced this: dreaming up solutions that seemed elegant and brilliant upon waking, then, after trying them out or even just thinking about them over breakfast, realizing they’re complete nonsense).

I like the list of questions Hamming asks himself when he’s stuck. They remind me of Eno and Schmidt’s legendary "Oblique Strategies" card deck.

I thought this was interesting: Hamming repeats the old observation that the big accomplishments in many fields were performed when the scientist or inventor was young. But he also has these two lists:

-

Accomplishments while young: Math, theoretical physics, astrophysics

-

Accomplishments when older: Music composition, literature, statesmanship

These examples are probably purely anecdotal, but I thought they were interesting. I found myself thinking of counterexamples from these fields. Did the exceptions prove the rule?

Chapter 26: Experts

Put simply, Hamming sees experts as a drag on progress.

"What you did to become successful is likely to be counter-productive at a later date."

I wonder where I fit in this chapter. I don’t feel like an expert in anything, but with 25+ years in the trenches, I certainly qualify as an "old hand" in this field. I guess I do have a couple of areas of expertise. But the sheer size of the programming field keeps me feeling like a beginner most of the time. Clearly Hamming would consider that a healthy and good attitude to have.

Am I not "keeping up" in my field? Yes, I am an "AI" hater. But that’s a temporary boycott position based on a huge number of factors, which I am not going to enumerate here. From my point of view, a bunch of people in software appear to be losing their minds. I’m just over here sticking with my slow-and-steady method of learning the fundamentals and building little projects to apply them. I’m limiting my dependencies on third-party tooling, as I always have. I’m keeping my mind sharp. If that means I’m not "keeping up", then so be it!

But, in Hamming’s eyes, would I be like one of his despised "experts", clinging to the old ways, impeding progress? I really don’t know.

Hamming, if you’re out there, press my fingers and type out the answer on my keyboard from beyond the grave. I’ll just wait here for a moment with my eyes closed, waiting for you to give me a sign… Dang. Nothing, not even a twitch.

Anyway, it doesn’t matter. If his opinion matched mine, I would feel validated and if it didn’t, I would be disappointed. But it wouldn’t prove anything.

Back to the book, this is solid advice:

Where the question looms so important I suggested to you long ago to use in an argument, “What would you accept as evidence you are wrong?” Ask yourself regularly, “Why do I believe whatever I do”. Especially in the areas where you are so sure you know; the area of the paradigms of your field.

Hamming simply wants us to think about our responses to things. To not just go with our first reactions and assume they’re correct, but to take a deep breath and think about what we think we know.

I have no quarrel with that.

Chapter 27: Unreliable data

Hamming has a bunch of great anecdotes about bad data. They’re both enlightening and entertaining.

Truth be told, this chapter is what I imagined the whole book would be like.

It says something dark about the software industry that bad data is probably the least of our current problems. Bad data (and/or "category errors") are a problem, but I’d be happy to go back to the time when it felt like that was the main problem.

At the moment, all those "good practices" we used to agonize over and debate endlessly have been thrown completely out of the window as the online discourse has switched almost exclusively to a question of whether we should even be writing software at all. I’ve never felt more dismal about the software industry as a whole and for the first time in my life, I would not recommend entering the field to anyone who actually likes programming computers for the same reasons I like it. Sorry to be such a downer here.

Uh, I did enjoy this chapter, though. It was good.

Chapter 28: Systems engineering

This chapter is great. The point he’s getting across here is: Optimizing parts of a system can come at the expense of the whole. Systems engineering, then, is about engineering the system as a whole.

I really like Hamming’s education examples. We tend to optimize individual courses around certain specifics, but we seldom put as much care into the course of learning as a whole. I think this applies not just to institutions, but also to the way we steer our own education. When’s the last time you thought about the long-term path of your learning outside of school? If you’re like me, maybe it was recently. But I don’t think that’s typical. And in my case, that’s only been in the last 5-10 years. Prior to that, my learning was constant, but completely scattershot in its direction.

An example that I felt in my bones comes from Hamming’s reading of a series of Bell Telephone Laboratories essays by H. R. Westerman in 1975: a team that is always "putting out fires" can’t keep its skills honed.

Building a system requires deep insights. I love that Hamming points out the virtue of angst. Improvement often requires the engineers who know the system to be empowered to fix the things that give them the most angst. Great improvements in software come, more often than not, from a developer who is just sick of a crappy process and sees a better way to do it. This also implies that the engineers are actually using the system themselves! (We call that "dog-fooding", as in eating the dog food you make to prove that it’s something you’d be willing to put in your own body.)

The client (and, by extension, client-facing management) tends to know the symptoms of a problem, whereas the deeply invested engineer knows the actual underlying issue.

Hamming describes systems engineering as, "trying to solve the right problem," and I think that’s an excellent way of describing it.

I also agree with him that this is one of the most under-taught and hard-to-teach subjects.

Chapter 29: You get what you measure

This chapter is about the unintended consequences of optimizing for the wrong thing entirely.

Hamming has a great example from education (again): weeding out students who aren’t great at the early stages of mathematics may select against the people who will be good at abstract reasoning in the later stages, because success at the two may be negatively correlated. And abstract reasoning should have been the actual goal.

There are often perverse incentives at a school or company which punish the very thing they claim to want.

Hamming uses the perfect example from my industry: Measuring programmer productivity by lines of code produced. It’s like rating restaurants by how fast they can shovel food into a bucket or artists by how many square feet of canvas they can fill in a day.

That analogy gets a chef’s kiss.

In retrospect, I realize these later chapters tie in perfectly with the "micromanagement" section way back in Chapter 2. At the average company, micromanaging the work will tend to reward hitting deadlines and churning out work at the expense of everything else, including the long-term health of the system as a whole.

Chapter 30: You and your research

At last, we have arrived at the final chapter, which shares its title with the Hamming talk that inspired this book (I first read the talk in the form of an IEEE article).

My summary:

-

You can set your own goals.

-

It is worth trying to accomplish those goals.

-

It is worth setting high goals.

It is easy to balk at Hamming’s choice of wording in the original talk. Terms like "great work" and "greatness" appear a lot.

But revisiting the subject in this chapter, I see what I missed the first time: Hamming is saying that you should do things that are great and important to you! You define "greatness" on your own terms.

Hamming is saying that it’s good to set a personal goal as lofty as you can bear and then pursue it.

It gets even better. Hamming understands that brains come in many forms. And so does luck. Even raw brainpower and luck combined won’t automatically result in doing something great. You can only accomplish your great things if you actually know what they are and are trying to do them.

Thus, the first step is to identify what you consider great. Hamming has some example heuristics, but you don’t have to follow them. They are just suggestions.

Another important thing to keep in mind, as Hamming points out, is that sometimes bad conditions are just what you need to make significant discoveries. Necessity is the mother of invention, and necessity brings with it some form of hardship or irritation more often than not. So don’t fall into the trap of giving up because of conditions out of your control. Yeah, they might suck, but your specific set of challenges might be exactly what makes you succeed where others have failed.

Spend 10% of your time on the big-picture thoughts and research and learning. It will pay off with compound interest.

Have a collection of problems you’d like to solve so when new ideas come, you’re ready with different areas to apply them.

Give credit to others. It always pays off and respect is increased all around. You will never diminish your own contribution by crediting others with theirs.

Truly, I think, these are great words to live by.