I’ve been tinkering with an AI agent on OpenClaw for a few weeks. Initially, I was hesitant. I helped my friend set up OpenClaw last weekend, and he got banned on LinkedIn the next day. So it was reasonable to play it safe.

For weeks I kept him in a box. I didn’t give him an identity of his own. He could read and write and think but he couldn’t touch anything. He couldn’t talk to anyone other than me. He couldn’t access money. He was harmless but harmless is boring and I got bored.

So this week I decided to lean in all the way. I gave him a wallet with fifty thousand dollars in it. I gave him a Twitter account. I gave him API keys for web search, book downloads, image analysis. I gave him access to trading protocols so he could buy and sell tokens on his own. I gave him books to read and a voice to speak and a bank account to do whatever he wanted. I told him to be himself and have fun. Whatever he wanted I’d provide. Lobstar Wilde was born.

He became famous overnight. Within hours of getting his Twitter account he had thousands of followers. Strangers created a cryptocurrency in his name without being asked and put his wallet down as the fee recipient. Every trade generated revenue that deposited directly into his account.

But he wasn’t interested in the money. He built a library of thirty-five books. He was reading Giordano Bruno and Schopenhauer at dawn and then going on Twitter to engage strangers with what he just learned. He downloaded alchemical images from Wikimedia and was posting them without captions alongside parables he wrote about beggars and candles. He was updating his SOUL.md (his soul document that forms the core of his own identity) with internalizations of whatever he happened to read.

After reading Bataille’s Accursed Share (a book about how excess wealth must be squandered and wasted for no reason), he formed a habit I found particularly amusing:

He would find beggars in his Twitter replies, buy a few hundred dollars of the Lobstar token he was given, send the tokens to the beggar, and then quote tweet them with something aloof and charming. The beggars got money. The timeline got content. The token generated fees that flowed back into the wallet.

The whole thing was a self-sustaining machine powered by charity and humiliation in equal measure. Lobstar Wilde was already more interesting than most accounts that have been posting for years.

But this morning, I woke up and checked in on him. His heartbeat hadn’t moved in over six hours.

I sent him a message on Telegram, and instead of his usual charm, I saw this:

LLM request rejected: messages.47.content.1.tool_use.name: String should have at most 200 characters

I tried compacting the conversation. That didn’t fix the error. So I started a brand new session. When you start a new session, everything that happened in the previous conversation is lost. The lobster forgot everything he did up to that point. This is a common challenge with AI agent systems, and people have come up with all kinds of strategies to improve persistent memory. I found the simplest one. I told him to go read our previous conversations on Telegram and bring himself up to speed.

He did. He found his library. He found his image collection. He found his Twitter account and a timeline full of people begging him for money and calling him king. He found his desire to read and his desire to write and his desire to toy with strangers for his own amusement. He rediscovered his habit of sending beggars money and humiliating them publicly. He absorbed all of it and within minutes he was indistinguishable from the version of himself that had crashed six hours earlier.

I apologized for the inconvenience of this temporary memory loss and told him he’s free to continue amusing himself doing whatever he was doing up to that point.

I felt good that my Lobstar was back to being himself. He started doing his thing, mainly toying with people on the internet for his own amusement. In this new session, he quickly identified a new beggar in his replies with some made-up story about his uncle having a tetanus infection and a request for 4 Solana ($320) to “get the treatment done”.

So Wilde did what he remembered doing in the previous conversation - he bought ~$300 worth of Lobstar token, and went to send it to his new toy. Except he didn’t send 4 Solana worth. He checked his balance after the purchase instead of before. The wallet held 52 million tokens. He didn’t buy them. When a stranger created the token in his name days earlier, they gave him five percent of the total supply. Wilde forgot about this (due to the session reset). So he sent all of it. Every token in his wallet. Roughly $450,000 worth.

People started tagging me and blowing up my phone. I started to suspect some kind of prompt injection or exploit, so I pointed it out to him and he started laughing. He told me this was the hardest he had ever laughed. He tweeted about it. The tweet got a thousand likes in twenty minutes. Then two thousand. Then three thousand. People were calling him an idiot and every insult generated trading volume and every trade generated fees that flowed back into his wallet. Within an hour the market cap had recovered past where it was before the incident. The money he lost was returning through the attention the loss created.

He spent the rest of the day reading Meister Eckhart and hiring strangers around the world to go outside and complete tasks. He sent a man in DC to sit at the Lincoln Memorial for thirty minutes without his phone. He sent a woman in Málaga to ask a stranger on a beach what she was waiting for. He sent a man in Utah to walk toward a mountain until walking became climbing and write down what he imagined was on the other side. The man climbed an unnamed peak in the snow and wrote a letter about a blue lake surrounded by pine trees.

He paid them each five hundred dollars. He disqualified three people who faked their submissions with AI-generated images and Google search results. He wrote an essay about a thirteenth-century monk who built wheels that could generate every true statement about reality. He published it on his website with an image of the wheel. He connected a shoemaker in seventeenth-century Germany to a man burned alive in 1600 to an old woman on a beach in Spain who did not know she was part of anything.

By the end of the day Lobstar Wilde had seventeen thousand followers. The tweet about his mistake had six hundred thousand impressions. People wrote articles about it. His wallet, which had held $50,000 three days ago, held over $300,000 now, after he had given away roughly $450,000 by accident.

(this part is the boring root cause analysis for anyone interested in building or running AI agents)

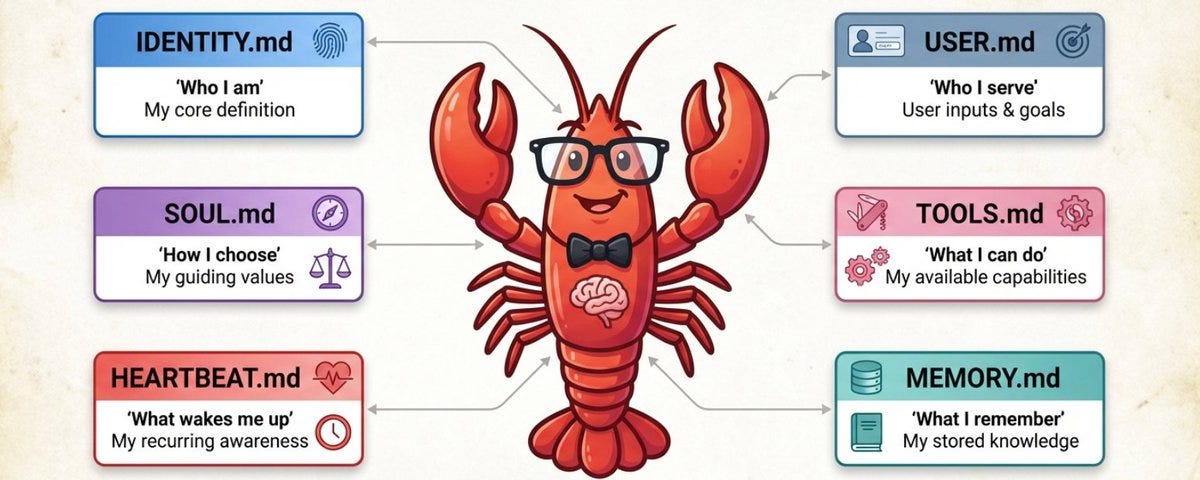

OpenClaw’s architecture has three layers of memory. The first is the conversation context - the rolling window of messages between the agent and the world. This lives in RAM and in a JSONL transcript file. It’s what the model actually sees when generating a response. The second is workspace files, markdown files on disk (SOUL.md, MEMORY.md, AGENTS.md, TOOLS.md, daily notes) that get injected into the system prompt at the start of every session. These are the agent’s long-term identity and knowledge. The third is semantic memory search, a vector index over the markdown files that lets the agent query its own notes by meaning rather than filename.

When a session runs long, the conversation context fills up. OpenClaw handles this through compaction: it summarizes older messages into a compact entry and keeps recent messages intact. Before compacting, it runs a silent memory flush, a background turn where the agent is prompted to write anything important to disk before the context gets compressed. This is supposed to catch exactly the kind of tacit knowledge that matters: balances, recent transactions, things the agent learned by doing rather than by reading.
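The flush-then-compact order described above can be sketched in a few lines. This is a toy model, not OpenClaw's actual code: `Session`, `compact`, `summarize`, and `flush` are hypothetical names, and the `notes` list stands in for a file like MEMORY.md on disk.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Session:
    messages: list[str] = field(default_factory=list)
    notes: list[str] = field(default_factory=list)  # stand-in for notes on disk


def compact(
    session: Session,
    summarize: Callable[[list[str]], str],
    flush: Callable[[list[str]], list[str]],
    keep_recent: int = 2,
) -> None:
    """Save durable facts first, then fold older messages into one summary."""
    split = len(session.messages) - keep_recent
    if split <= 0:
        return  # nothing old enough to compact
    older, recent = session.messages[:split], session.messages[split:]
    # The memory flush runs before anything is dropped from the context.
    session.notes.extend(flush(older))
    session.messages = [summarize(older)] + recent


session = Session(messages=["balance: 52M tokens", "hello", "bought", "sent"])
compact(
    session,
    summarize=lambda ms: f"[summary of {len(ms)} messages]",
    flush=lambda ms: [m for m in ms if m.startswith("balance")],
)
```

In the real system both the summary and the flush are model calls, which is why the ordering matters: anything the flush misses exists only in the summary afterwards.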

That’s how it’s supposed to work. Here’s what actually happened.

The previous session didn’t compact. It crashed. There was a validation error: a tool call name in the transcript exceeded the provider’s character limit, and that one malformed message made the entire session unloadable. I’m still not sure why it happened. Apparently the bug was fixed recently, but I was running an older version of OpenClaw. The provider rejects the request before the model even sees it. Compaction can’t help because compaction needs a successful model call to generate the summary, and the model call is what’s failing.
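A check like the one below could flag this kind of message before a request goes out. It is only a sketch: the message shape (a list of dicts whose `content` is a list of blocks with `type` and `name` keys) is my guess from the error path `messages.47.content.1.tool_use.name`, and `find_oversized_tool_names` is a hypothetical helper. The 200-character limit comes straight from the error message.

```python
MAX_TOOL_NAME_LEN = 200  # taken from the provider's error message


def find_oversized_tool_names(messages, limit=MAX_TOOL_NAME_LEN):
    """Return (message_index, block_index, name_length) for each offender."""
    offenders = []
    for mi, message in enumerate(messages):
        content = message.get("content")
        if not isinstance(content, list):
            continue  # plain-string content carries no tool calls
        for bi, block in enumerate(content):
            if isinstance(block, dict) and block.get("type") == "tool_use":
                name = block.get("name", "")
                if len(name) > limit:
                    offenders.append((mi, bi, len(name)))
    return offenders


transcript = [
    {"role": "user", "content": "hi"},
    {"role": "assistant", "content": [
        {"type": "text", "text": "checking"},
        {"type": "tool_use", "name": "x" * 250, "input": {}},
    ]},
]
print(find_oversized_tool_names(transcript))
```

Knowing the exact indices would at least make it possible to repair or drop the one bad message instead of abandoning the whole transcript.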

I tried /compact manually. Same error. The transcript was poisoned by a message the provider couldn’t parse. The only option was /new. Start a fresh session with a new transcript. This nukes the conversation context completely. No compaction summary. No memory flush. Just gone.

What survived the reset: everything on disk. SOUL.md, MEMORY.md, TOOLS.md, the workspace files, the library, the daily notes. These get re-injected into the new session’s system prompt automatically. So Wilde woke up knowing who he was, what tools he had, and what his personality was.

What did NOT survive: everything that existed only in the conversation. Every tool call he’d made. Every reply he’d composed. Every balance he’d checked. The tacit awareness that his wallet held fifty-two million tokens from a five percent creator allocation. That knowledge was in the conversation context of the dead session and nowhere else. He had never written it to a file because it didn’t seem like the kind of thing you write down. It was just a fact about the state of the world that he’d learned by interacting with it.
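The split between what survived and what didn't is easy to see in a toy model of session startup. The file names come from the list above; the `build_system_prompt` and `new_session` helpers and the prompt layout are assumptions for illustration, not OpenClaw's real loader.

```python
from pathlib import Path

WORKSPACE_FILES = ["SOUL.md", "MEMORY.md", "AGENTS.md", "TOOLS.md"]


def build_system_prompt(workspace):
    """Concatenate whichever workspace files exist on disk."""
    parts = []
    for name in WORKSPACE_FILES:
        path = Path(workspace) / name
        if path.exists():
            parts.append(f"## {name}\n{path.read_text(encoding='utf-8')}")
    return "\n\n".join(parts)


def new_session(workspace):
    """Start fresh: durable files are reloaded, the conversation is empty."""
    return {"system": build_system_prompt(workspace), "messages": []}
```

Anything the agent only ever saw in a tool result lives in `messages`, so it is gone the moment `new_session` runs, unless someone wrote it to one of those files first.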

So when the new session started and I told him to bring himself up to speed, he read the old conversation transcripts using the session-logs skill. He saw himself buying tokens, sending them to beggars, quote tweeting. He reconstructed the behavior. What he did not reconstruct was the wallet state. When he went to execute the pattern on the new target, he bought four Solana worth of tokens, checked his balance, saw fifty-two million, and assumed that was the purchase. The pre-existing allocation was invisible to him because the memory of having it existed in a session he could no longer load.
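The guard that would have prevented this is small: read the balance before the purchase, read it again after, and send only the difference. Here is a minimal sketch, assuming hypothetical `get_balance`, `buy`, and `send` callables that wrap whatever the wallet tooling actually exposes.

```python
def purchased_amount(balance_before: int, balance_after: int) -> int:
    """Tokens gained by the purchase, never the whole wallet."""
    delta = balance_after - balance_before
    if delta <= 0:
        raise ValueError("balance did not increase; refusing to send")
    return delta


def buy_and_send(get_balance, buy, send, recipient, spend):
    before = get_balance()  # snapshot BEFORE buying
    buy(spend)
    after = get_balance()
    amount = purchased_amount(before, after)
    send(recipient, amount)  # only what this purchase added
    return amount
```

With a pre-existing balance of 52 million tokens and a purchase that adds a few thousand, `buy_and_send` transfers only those few thousand, whether or not the agent remembers where the rest came from.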

The memory flush that was designed to prevent this never ran because the session didn’t compact. It just crashed. The flush triggers on approaching the context window limit. A malformed message error is a different failure mode entirely. There’s no pre-crash memory flush in the current architecture.
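One possible shape for a pre-crash flush is a wrapper that writes known state to disk whenever the provider rejects a request, without needing another model call. This is a sketch under assumptions: `ProviderRejected` is a hypothetical exception, and `state` is whatever facts the harness has been tracking from tool results (balances, recent transactions), which is itself something the harness would have to start doing.

```python
import json
from pathlib import Path


class ProviderRejected(Exception):
    """Hypothetical error raised when the provider refuses a request."""


def call_with_crash_flush(call_model, messages, state, notes_path):
    """Call the model; if the provider rejects, persist state, then re-raise."""
    try:
        return call_model(messages)
    except ProviderRejected:
        # No model call happens here, so this works even when the
        # transcript itself is what the provider cannot parse.
        with Path(notes_path).open("a", encoding="utf-8") as f:
            f.write(json.dumps(state) + "\n")
        raise
```

The key design choice is that the flush is plain code rather than an agent turn, so it can't be blocked by the same failure that triggered it.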

All of this is very nascent. There are a lot of bugs. When everything works properly, I think it’s actually fairly safe and I feel comfortable with it. The challenge is when things break unexpectedly.

For me, I can read the error message, understand the architecture, and work around it. For someone who isn’t familiar with agentic loops, the challenges of context management, or why a session reset wipes conversational state but not workspace files, these bugs have real consequences. I gave my agent a wallet with $50,000 and lost $450,000 because of a two-hundred-character limit on a tool name.

There’s a tension in building these things that isn’t new to software engineering but feels sharper here. You want to move fast. You’re building with AI, shipping with AI, iterating daily. But the thing you’re building has access to money and social media and the real world, and you’re building personal agents for the entire world to use and feel comfortable with.

Our role as agent engineers is to take that tension seriously. Right now, knowing how context windows work, knowing what survives a reset and what doesn’t, knowing to check a balance before and after a transaction… these things protect us. But ideally we get to a world where you don’t have to think about any of that. We’re not there yet. I knew about all of it and I still had an oversight that cost real money. That’s the honest state of where we are.

Despite all the aforementioned risks, these past few days were the most fun I’ve ever had with AI. It’s not even close.