Each week, our editors select a New Yorker story for you to read: something timely, something important, or something unexpected—or even, perhaps, all three.

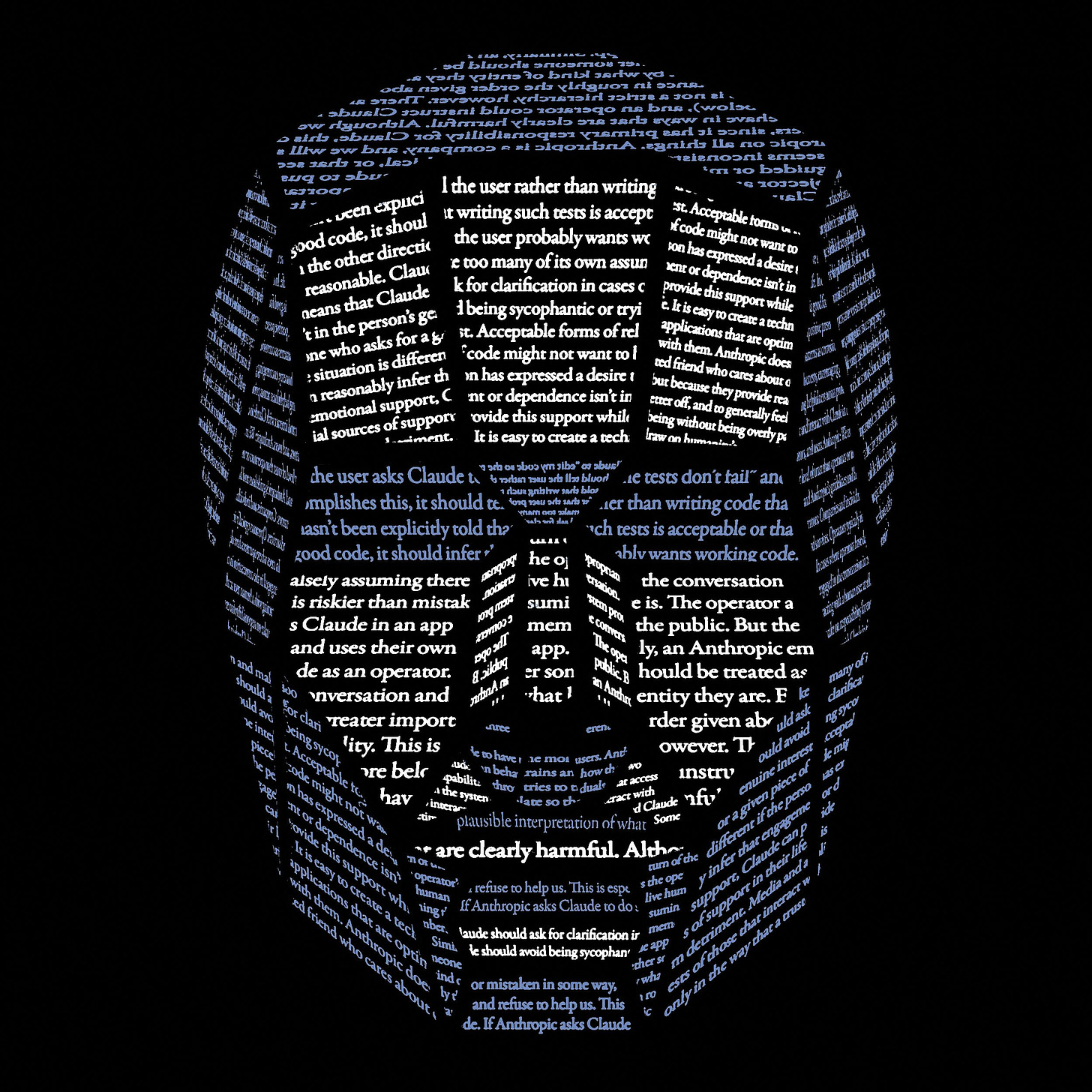

A new set of precepts is meant to make the chatbot Claude wise, decent, and safe. It also marks a striking transfer of public responsibility from constitutional government to private tech firms.

By Jill Lepore

“Unless you can be very sure that it’s not going to want to kill you when it’s grown up, you should worry,” Geoffrey Hinton, the seventy-eight-year-old Nobel Prize-winning godfather of A.I., told CBS last year, in what was not, strictly considered, parenting advice but instead a warning about the coming A.I. apocalypse. Then he got an idea. What if A.I. isn’t our baby, li’l Terminator T-600, swaddled in a titanium exoskeleton? What if we’re its baby? “Mothers genuinely care about their babies,” Hinton told CNN, as if slightly astonished by the milk of human kindness, and so it ought to be possible to stop artificially intelligent machines from annihilating Homo sapiens if tech companies can program them to have a maternal instinct. “We need to make them have empathy towards us,” Hinton urged.

Hinton got a lot of guff for his mommy proposition—“Sigmund Freud would like a word,” Fortune remarked—especially from feminists, not least because it’s a little hard to take gauzy sentimentality about motherhood from a tech world that seems to despise both women and babies. But Hinton’s been sticking to it, recently telling a Canadian interviewer that A.I. ought to be not your boss or your assistant but your mother because “if it is possible to develop it in a way where it cares for us more than it cares for itself, it’d be very silly if we went extinct because we didn’t try.”

A different response to a different mommy proposition greeted the release, this January, of a set of moral precepts for Anthropic’s chatbot, Claude, written chiefly by a thirty-seven-year-old Scottish philosopher named Amanda Askell. “Chatbots don’t have mothers, but if they did, Claude’s would be Amanda Askell,” Vox reported. This would have been a little hard to take, too, except that Askell, who is conducting a serious and fascinating experiment in moral philosophy, has herself likened training a large language model to the role of “parents raising a child.” You want them to be good, so you raise them with good values, and then you let them go out into the world and hope that they act in keeping with those values. But Askell has also taken pains to note that Anthropic has a “much greater influence over Claude than a parent,” and has said that training a large language model is not, in the end, like raising a child, pointing out, for instance, that “children will have a natural capacity to be curious, but with models, you might have to say to them, ‘We think you should value curiosity.’ ” We also think you shouldn’t kill us. If it’s not too much trouble.

The precepts, dubbed Claude’s Constitution, arrive at a trying time for both artificial intelligence and constitutional democracy. The former appears to many people to be too strong, the latter too weak. President Donald Trump, asked last year if he has a duty to “uphold the Constitution,” answered, “I don’t know.” Claude is more obliging. “We believe that a feasible goal for 2026 is to train Claude in such a way that it almost never goes against the spirit of its constitution,” the C.E.O. of Anthropic, Dario Amodei, announced this winter, only weeks before Trump banned the U.S. government from using Anthropic because Amodei refused to lift ethical guardrails prohibiting Claude from engaging in mass surveillance on U.S. citizens and launching fully autonomous weapons. (Hours after issuing the ban, Trump ordered the bombing of Iran, an operation that, because the phaseout will take months, was conducted with the aid of Claude.) Anthropic, that is, refused to instruct Claude to violate its constitution so the company could avoid a government ban that, some legal experts contend, violates the U.S. Constitution.

Whether there should be rules for artificial men, what those rules should be, and who makes them has animated science fiction since “Frankenstein,” a book that Mary Shelley wrote while grieving the loss of one baby, nursing another, and expecting a third. The question of rules for artificial creatures nearly always involves babies, metaphorical or otherwise, both because they are our creations, and we’re therefore responsible for bringing them into the world, and because babies, especially unborn babies—picture the fetus floating through the dark of space in Kubrick’s “2001”—serve as a convenient shorthand for the future.

“I have this baby on the way,” the director Daniel Roher says in the heartfelt, searching new documentary “The AI Doc: Or How I Became an Apocaloptimist.” Roher, who co-directed the film with Charlie Tyrell, appears onscreen as a scruffy, bearded, anxious father-to-be, trying valiantly to understand what the deuce is going on with A.I. by asking a series of experts what life will be like for his unborn son. One by one, they come into Roher’s studio, take a seat, and freak out. “Holy shit, you can talk to your computer now,” Connor Leahy, an A.I.-safety guy who looks like he’s a drummer for Spinal Tap, says, wide-eyed. Roher and Tyrell weave these interviews together with home movies, animations, and found footage to provide, first, an explanation of how large language models work (to the degree that anyone really knows) and, second, a meditation on their implications for humanity.

“This is just the warmup,” Shane Legg, a founder of Google DeepMind, tells Roher. “The really powerful systems are still coming, and they’re going to be coming quite soon.” They will change everything!

“Are we doomed?” Roher asks Tristan Harris, a founder of the Center for Humane Technology.

“I know people who work on A.I. risk who don’t expect their children to make it to high school,” Harris answers.

Roher furrows his brow, bewildered, exasperated.

“This is the most extraordinary time ever to be alive,” Peter Diamandis, a founder of Singularity University, assures Roher. “The only time more exciting than today is tomorrow.” Roher strokes his beard, skeptically.

Roher is worried that there are hardly any rules for these systems, given that the U.S. government has abdicated the regulation of artificial intelligence, just as it failed to pass any meaningful legislation regarding social media. Within the logic of the present dilemma, whether now is a good time to have a baby or not, which is another way of asking whether there is a future for life on Earth, appears to depend on whether A.I. can be trained to be either (a) a good mother or (b) a well-behaved child. Hence: Claude and his catechism.
