I grew up in Norway, where history education is a jumble of disconnected topics. Vikings one week, the Bronze Age the next, the French Revolution the third. By the time you finish school, you've heard all the names — Charlemagne, Constantinople, the Silk Road — but nothing connects to anything.

For most of my life, this didn't bother me much. Then I went to Crete.

Walking around Heraklion, we came across a massive fortress with walls several meters thick. A poster explained it had been besieged by the Ottomans for more than twenty years while held by the Venetians. I had lived in Italy, visited Venice many times, but never knew Venice had colonies. Then: "Before the Venetians, it was part of the Byzantine Empire." I just stood there. I could not tell you a single meaningful thing about the Byzantine Empire. Constantinople: does that have something to do with Rome? At what time? I was 42 years old.

I had read the big history books — Sapiens, Guns Germs and Steel — and they felt fascinating in the moment. But I retained almost nothing. Worse: I couldn't tell what was controversial. When everything is new, you can't see the choices the author is making. You don't have the background knowledge to think critically.

History is different from other kinds of reading. In a philosophy book, there are key ideas to extract. In a history book, every sentence contains new information — names, dates, places, causes. You feel cognitively overloaded, stressed because you feel like you should be remembering everything, and three months later it's all mush.

I had almost given up on reading nonfiction. The gap between what I understood while reading and what I could reconstruct months later felt demoralizing.

Petrarca emerged from years of following hunches, not knowing what would work.

2020 — Learning from Podcasts. ListenNotes, Otter.ai voice notes while walking, Roam Research. Already experimenting with voice capture for learning six years before AI. The discovery: speaking about what you're learning forces prioritization in a way that note-taking doesn't.

2024 — 12 Centuries in 12 Months. A century per month from Charlemagne to the present. Minimal goal: for each century, know the map, major wars, a paragraph summary. Inspired by the Italian liceo classico, where the curriculum moves synchronously across art, music, literature, philosophy, political history. I got so interested in Charlemagne that I stayed with him for several months and never followed through to the other centuries. But it was an early attempt at the systematic scaffold I was looking for.

2025 — 1814 Day by Day. If the 12 Centuries project was wide and thin — scanning across centuries — this was the opposite: zoomed into a single place and a single year, reading Norwegian newspapers from 1814 day by day through 2025. History through primary source immersion.

2026 — Alif: Arabic from Scratch. A single-user Arabic learning app. Sentence-first learning, spaced repetition, capturing from real sources. ~1,300 words in 8 weeks. The crucial lesson: spaced repetition never worked for me in isolation. But the moment it integrated with real reading workflows, it became powerful.

The thread: voice capture works. Bounded structure works. Integration with real workflows works. AI changes what's possible. Petrarca combines them — but the approach is open-ended and exploratory. I have a hunch about what a learning companion should do, and a lot of experimentation to find out.

What does it actually feel like to use this?

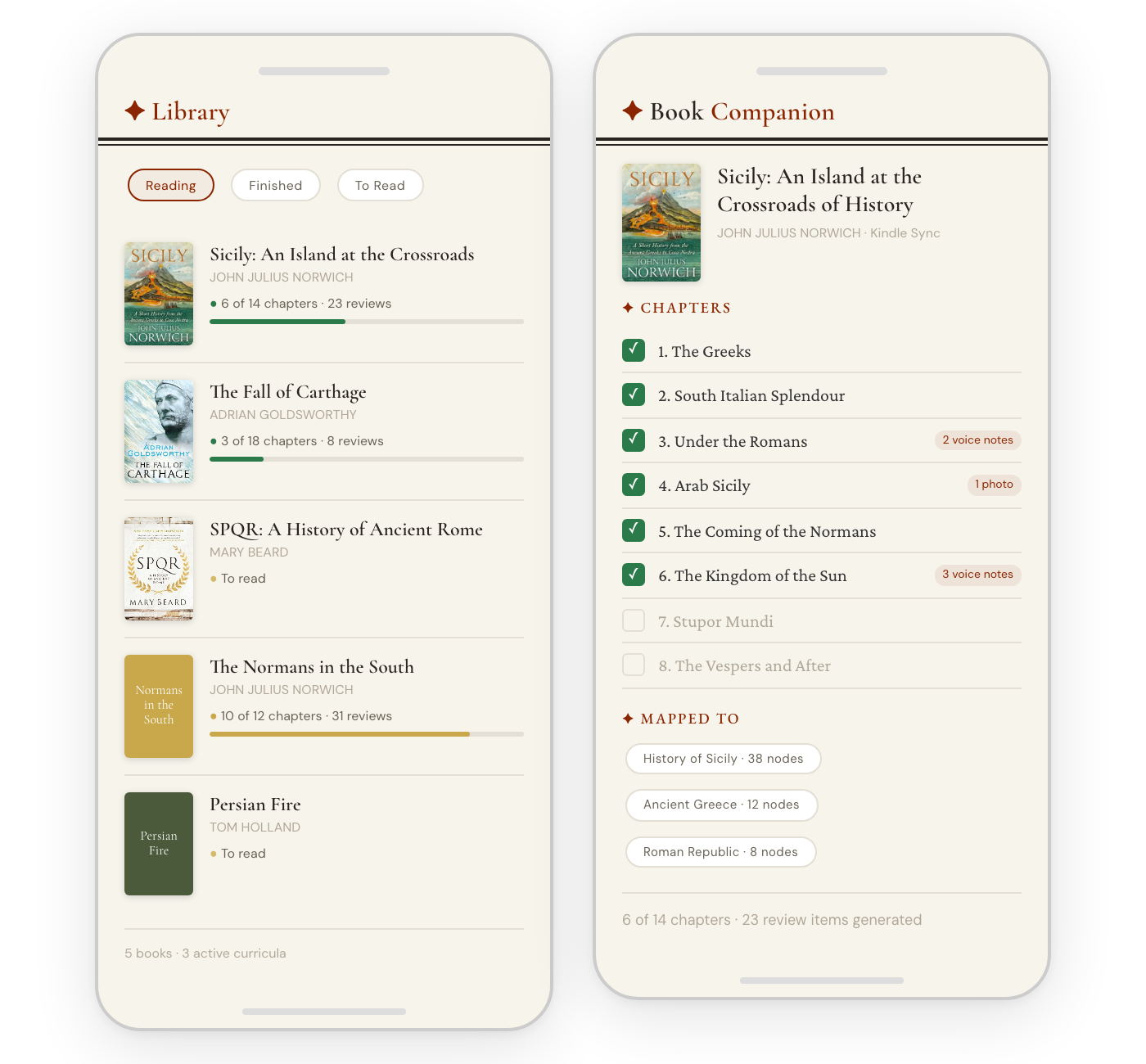

You started Norwich's Sicily last week. You snapped a picture of the front page of the book, and the system added it, researched it, and mapped it against your curricula. You read three chapters on the couch last night. While reading chapter 3, you tapped the margin and spoke a quick voice note about something Norwich said about Archimedes. In chapter 4, you photographed a page with a map of Arab trade routes — the app found the page number and OCR'd the text. No interruptions to the reading itself. The book is the book.

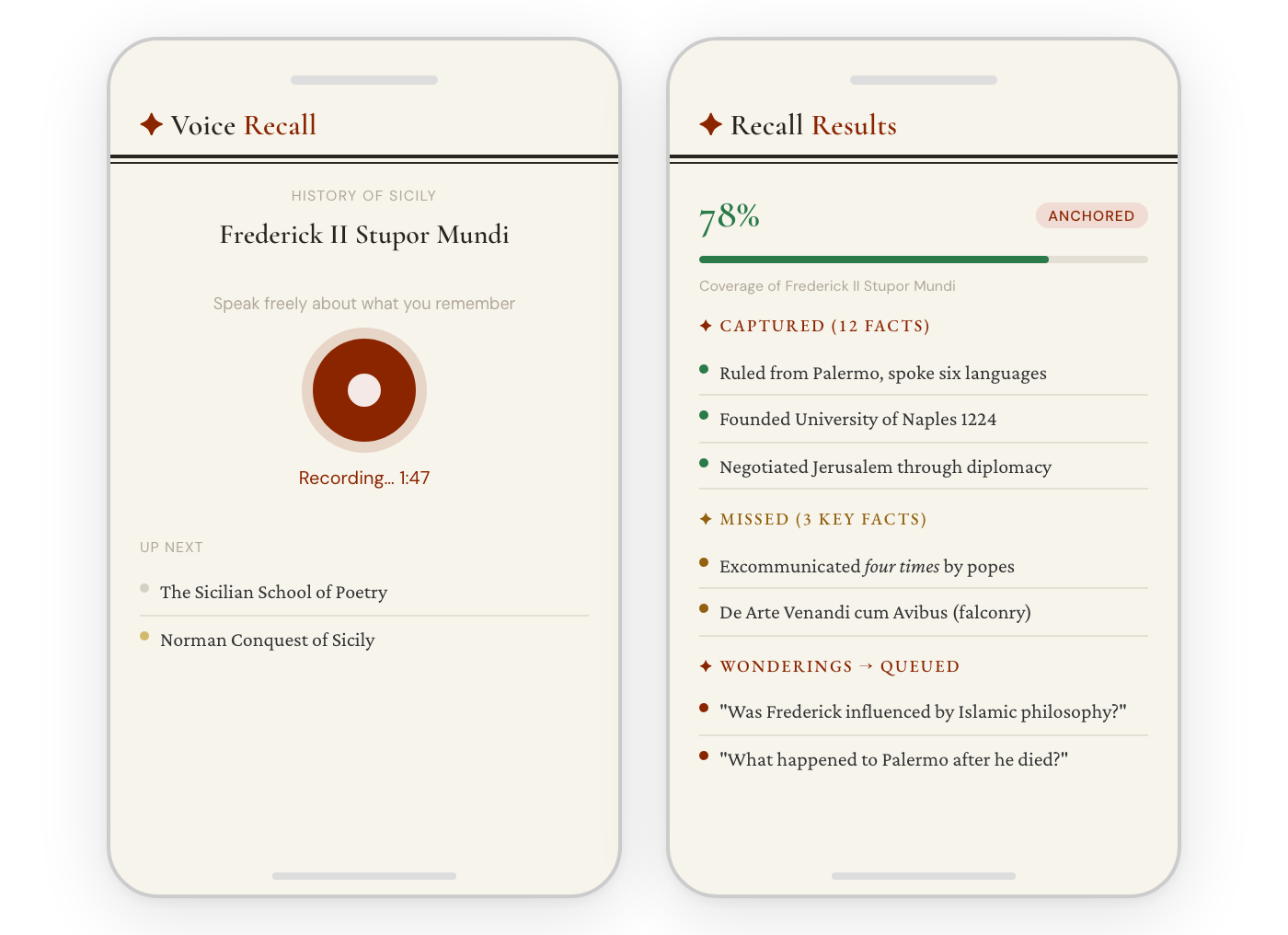

Getting in the car, you see voice prompts are available. "What do you know about Frederick II Stupor Mundi?" No hints, no description — that's the point: you have to recall it. You press record and talk for two minutes while driving. You mention his court in Palermo, the six languages, the Sixth Crusade. You wonder aloud: "Was he influenced by Islamic philosophy?"

78% coverage. Twelve facts captured, three missed. Two wonderings queued for the microlearning pipeline. By tomorrow, "Was Frederick influenced by Islamic philosophy?" will be a researched card. The missed facts get boosted priority in review. The things you forgot are exactly what you'll see next.

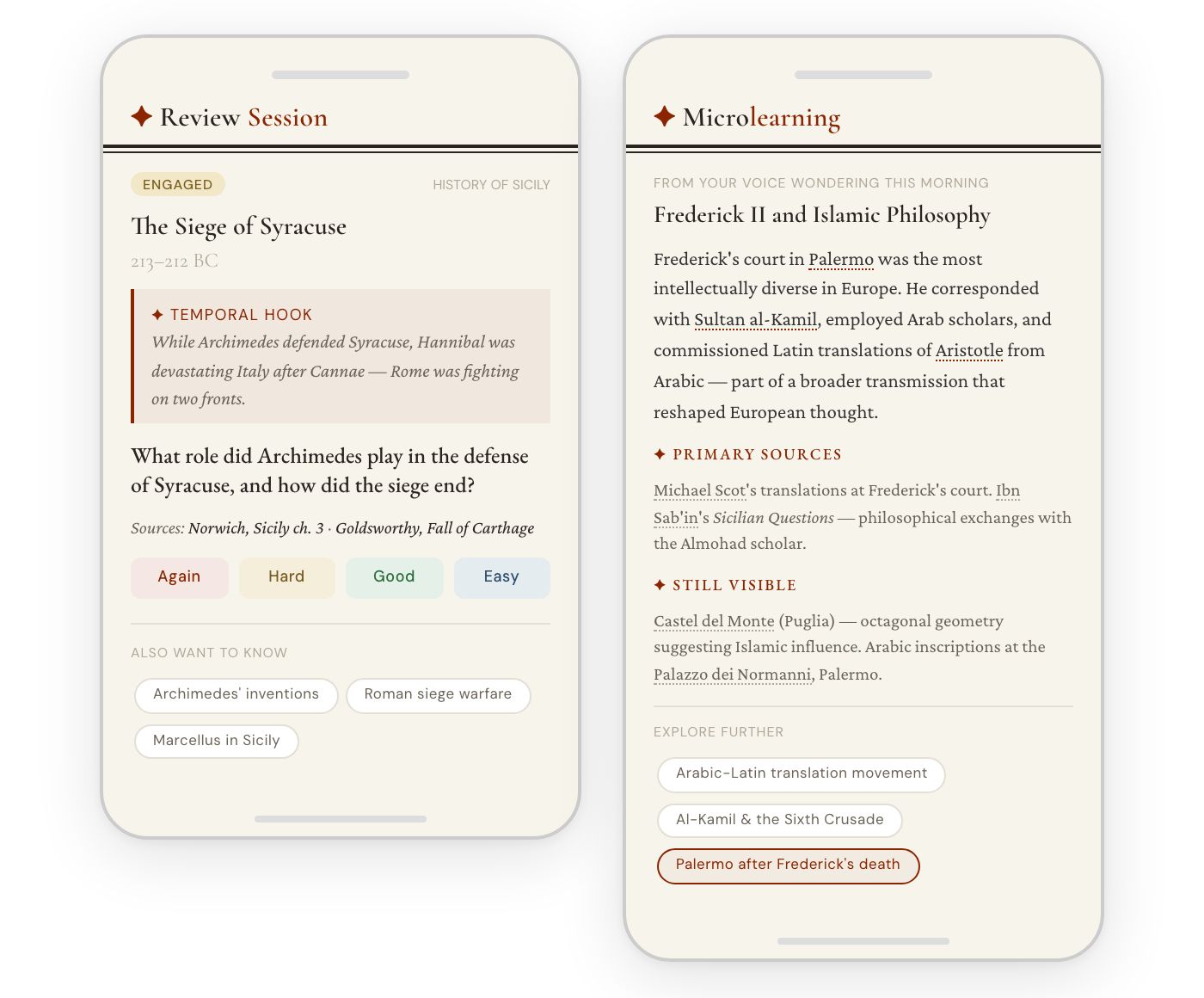

A review card asks about the Siege of Syracuse. The temporal hook helps: Archimedes and Hannibal on opposite sides of Rome's two-front war. You rate it "Hard." Next: a microlearning card triggered by your wondering this morning — Frederick II and Islamic philosophy, with primary sources and places you can visit. "Palermo after Frederick's death" — another wondering — is already queued.

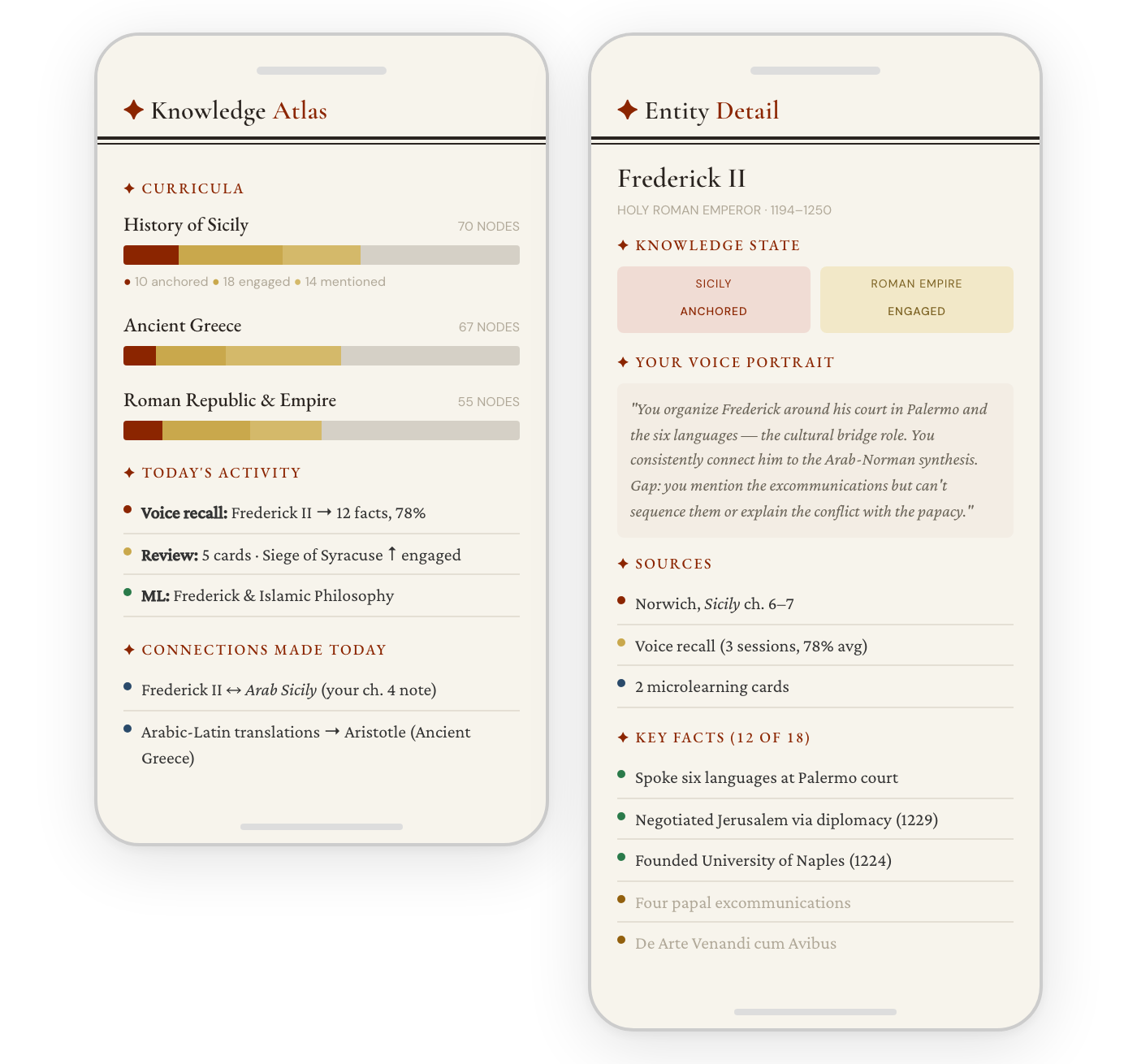

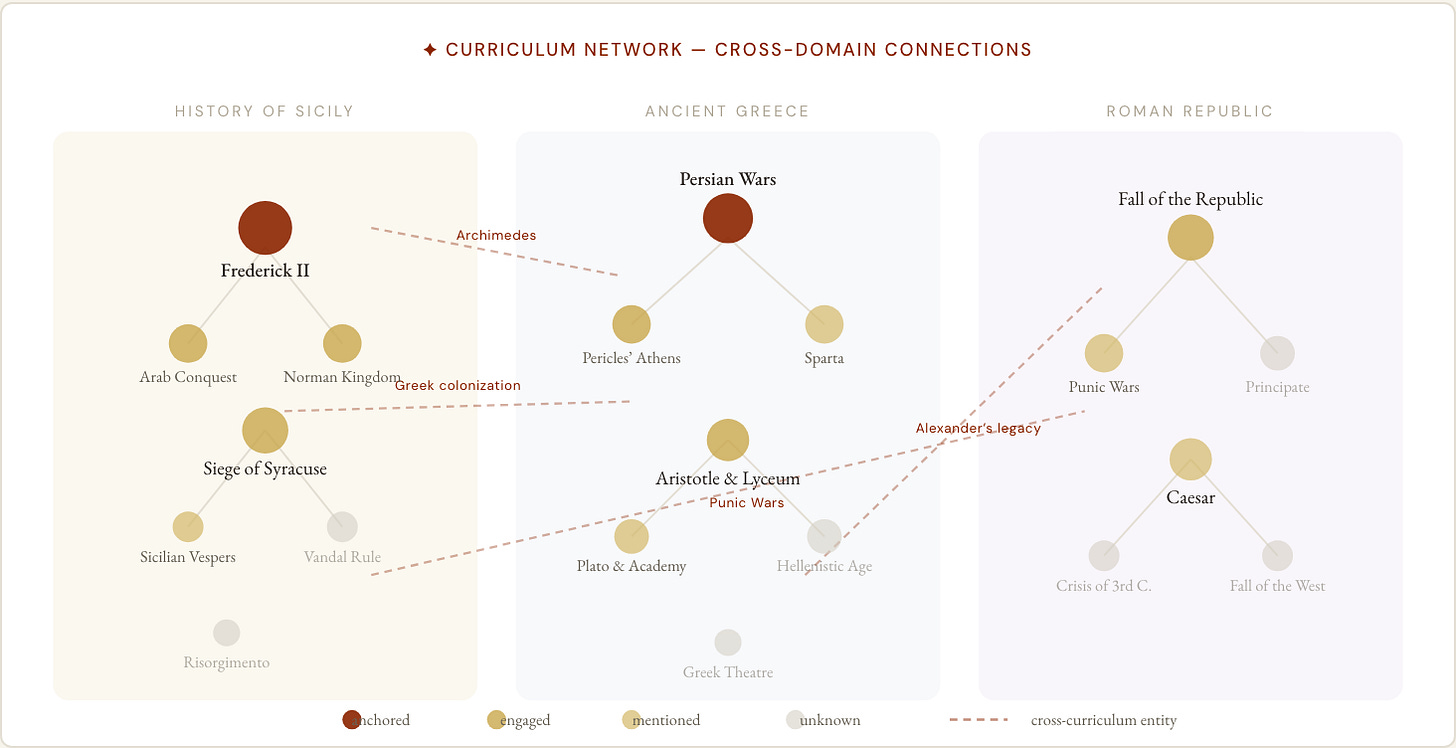

Three nodes moved from "mentioned" to "engaged." A new cross-curriculum connection: your voice recall about Frederick linked to the Arab Sicily chapter note, and the Arabic-Latin translations connected to Aristotle in Ancient Greece. The entity detail shows your personal voice portrait — how you organize what you know about Frederick, drawn from your actual words across sessions. The framing is always positive: here's what you know, here's how it's growing.

Eight minutes of your day. Tonight: three more chapters with a physical book on the couch, while your wife reads her novel.

Think of the Civilization game: the map is foggy except for the small area you've explored. You pick a spot and go deep — not a quick Wikipedia skim but multiple sources, historical fiction, documentaries, maps. You try to understand the people, the architecture, the living conditions. And from that patch of dry land, you expand to adjacent territory.

I described this in a short video using Charlemagne as my anchor. I started around 800 AD — the coronation as the new emperor of the Western Roman Empire. I ended up reading three historical novels in German, richly researched, covering the chronology but also the role of the church, the Germanic tribes, the pagan faiths. Then I could expand in every direction:

West to Spain — overrun by the Muslims, Charlemagne's failed expedition to Barcelona, the encounter with the Basques that became the Song of Roland.

East to Constantinople — Charlemagne's complex relationship with Empress Irene, the worry that his coronation would be seen as claiming the whole Roman Empire, the possible marriage between Charlemagne’s daughter and Irene’s son.

Further east to Baghdad — the Caliph who sent Charlemagne a white elephant that made it all the way to Germany and became a battle elephant (they found its bones in a river).

South to Italy, the Pope, the Langobards.

North to the Vikings — Alcuin, the great scholar at Charlemagne's court, came from Northumbria and wrote about the attack on Lindisfarne.

Backward in time: the fall of the Western Roman Empire, the migrating peoples. How did the Carolingians take power? How did the Pope end up having to beg Charlemagne for support, and crown him Emperor as thanks?

Forward in time: the split into Germany and France, the beginning of the Viking Age.

The same could be said about Caesar, Alexander, the Persian Wars. Each anchor opens adjacent territory. The knowledge grows outward like the explored area on a game map.

The anchors give you gist — deep, vivid, connected understanding. This is the most durable kind of historical knowledge. But specific facts matter too. Dates, names, sequences are the skeleton that everything hangs on.

An example. I picked up a Norwegian high school textbook on the French Revolution. It said people resented the church's taxes. And I thought: only about two centuries earlier, France had fought a devastating civil war between Protestants and Catholics. Tens of thousands killed. Not a word about it in that chapter. Does it matter? I don't know. But I couldn't have asked that question without knowing about the Wars of Religion. The factual scaffold made the critical question possible. What happened before what, and what happened at the same time in other places?

Sam Wineburg did a study of two historians reading the same Lincoln documents. The specialist — who knew the sequence of days within specific months — defined the interpretive issues in four minutes. The non-specialist spent forty minutes "cognitively flailing" before reaching a narrower interpretation. Same intelligence, same skills. The difference was the facts. Facts are the speed multiplier for deep thinking.

Michael Nielsen makes the same point from a different direction. Drawing on chess research: experts don't think differently, they chunk differently. A master recognizes 25,000–100,000 patterns as single units — expanded working memory. "Having more chunks memorized in some domain is somewhat like an effective boost to a person's IQ in that domain." If "the Punic Wars" is a rich chunk rather than an unfamiliar term, you can hold more of the argument in your head.

E.D. Hirsch extends this into a full theory of literacy: reading comprehension depends on specific background knowledge, not transferable reading skills. A striking study by Recht and Leslie gave students a passage about baseball: poor readers who knew baseball outperformed strong readers who didn't. Domain knowledge trumped reading ability.

This connects to cognitive load theory. Working memory holds roughly 7±2 items. But "items" can be large, richly-encoded chunks or small, disconnected fragments. Prior knowledge reduces cognitive load by letting you process larger units. When "the Investiture Controversy" is a rich chunk, I can hold the whole argument in working memory. Without it, each unfamiliar name consumes a slot and the argument overflows.

So hooks and facts form a virtuous cycle. Deep anchors give you gist. The factual scaffold gives you the skeleton. Each supports the other. Petrarca maintains both.

The Temporal Hook Hierarchy. From building Hamarquizen and my own experience, I've found four types of temporal connection, roughly ordered by how well they stick: (1) Anchoring to events I already know ("Archimedes died ~110 years after Alexander"). (2) Same-moment connections ("While Archimedes defended Syracuse, Hannibal was in Italy"). (3) Causal chains ("Archimedes' death was a consequence of Hannibal's invasion"). (4) Cross-domain surprises, but only if I already know the other domain. A connection to something unfamiliar isn't a hook, it's noise, so the system checks what I know before generating cross-domain links.
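A minimal sketch of that last check, assuming hooks are stored with a type and a target domain. The names `Hook`, `HOOK_RANK`, and `usable_hooks` are hypothetical, and the first hook in the example is invented purely to show the filter; none of this is taken from the Petrarca code.

```python
from dataclasses import dataclass

# Hook types in the order the text ranks them (lower rank = stickier).
HOOK_RANK = {"anchor": 1, "same_moment": 2, "causal": 3, "cross_domain": 4}

@dataclass(frozen=True)
class Hook:
    kind: str    # one of the keys in HOOK_RANK
    text: str
    domain: str  # the domain the hook points into

def usable_hooks(hooks, known_domains):
    """Drop cross-domain hooks into unfamiliar domains, then sort by rank."""
    kept = [
        h for h in hooks
        if h.kind != "cross_domain" or h.domain in known_domains
    ]
    return sorted(kept, key=lambda h: HOOK_RANK[h.kind])

hooks = [
    # Invented example of a hook into a domain the learner has not studied.
    Hook("cross_domain", "A link into an unfamiliar domain", "Unstudied domain"),
    Hook("causal", "Archimedes' death was a consequence of Hannibal's invasion", "Rome"),
    Hook("same_moment", "While Archimedes defended Syracuse, Hannibal was in Italy", "Rome"),
]
result = usable_hooks(hooks, known_domains={"Rome", "Ancient Greece"})
for h in result:
    print(h.kind, "->", h.text)
```

The cross-domain hook is silently dropped rather than shown with a warning, which matches the idea that an unfamiliar connection is noise rather than a weaker hook.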

The core challenge: map the knowledge I have, the interests I'm pursuing, and the gaps I haven't filled. History is impossibly fractal — you can zoom in forever. The system needs some way of answering: what are the important things to know?

Topic maps of 50–80 concepts per domain, generated by Claude Opus, checked against open-source textbooks and curricula. Ancient Greece 67 nodes, Rome 55, Sicily 70. Roughly what a good lecturer would say you should understand. Bounded scope creates achievable goals and visible progress. There's a tension: I might want to go deep on Frederick II or the Arab-Norman synthesis beyond any curriculum. The entity system and microlearning capture that depth. This balance will need revisiting.

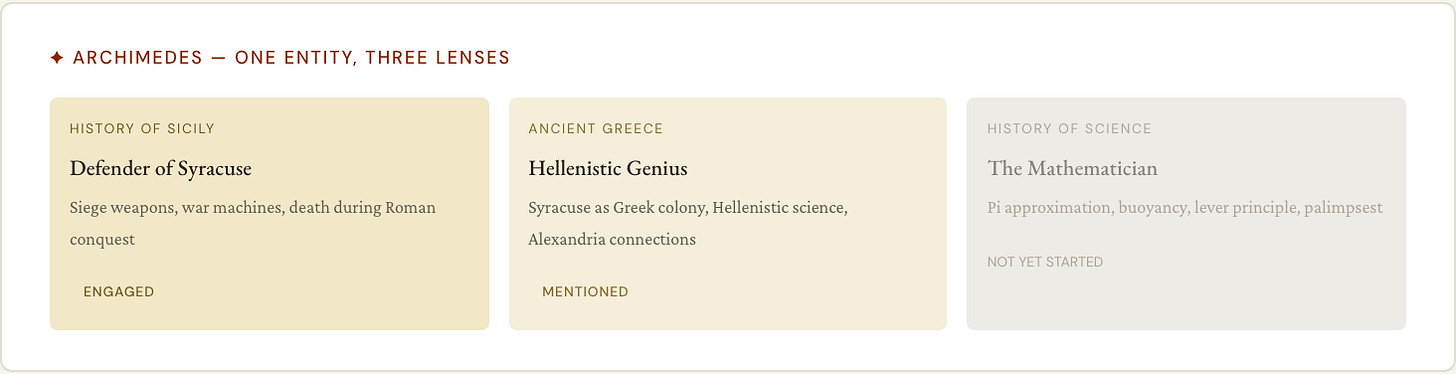

Archimedes exists once in the shared entity database, but the Sicily curriculum sees "Archimedes the defender of Syracuse" while History of Science would see "Archimedes the mathematician." Same entity, different knowledge states across curricula. When a node lights up in multiple curricula — a nexus point — those are the richest learning moments.
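One way to picture this is a single entity record with a separate view per curriculum. The sketch below is an assumption about the shape of the data, not the actual schema; `views`, `add_view`, and `nexus_points` are hypothetical names.

```python
from collections import defaultdict

# entity_id -> {curriculum: framing}. One entity, many curriculum views.
views = defaultdict(dict)

def add_view(entity_id, curriculum, framing):
    """Attach a curriculum-specific framing to a shared entity."""
    views[entity_id][curriculum] = framing

def nexus_points(min_curricula=2):
    """Entities that appear in at least `min_curricula` curricula."""
    return sorted(e for e, v in views.items() if len(v) >= min_curricula)

add_view("archimedes", "Sicily", "defender of Syracuse")
add_view("archimedes", "History of Science", "mathematician")
add_view("frederick_ii", "Sicily", "Stupor Mundi")

print(nexus_points())
```

Keeping the framing on the view rather than on the entity is what lets the same person carry different knowledge states in different curricula.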

Unknown → mentioned → engaged → anchored. Always positive: "here's what you already know." Scheduling models decay underneath, but the visible level never drops.
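A small sketch of how a visible level can be kept from dropping while a hidden estimate decays. The class name `NodeProgress` and the `retention` field are assumptions for illustration; the real scheduling model is not described here.

```python
LEVELS = ["unknown", "mentioned", "engaged", "anchored"]

class NodeProgress:
    def __init__(self):
        self.visible = 0       # index into LEVELS; only ever increases
        self.retention = 0.0   # hidden scheduling estimate; may go down

    def observe(self, level, retention):
        """Record new evidence: ratchet the visible level, overwrite retention."""
        self.visible = max(self.visible, LEVELS.index(level))
        self.retention = retention

    @property
    def label(self):
        return LEVELS[self.visible]

p = NodeProgress()
p.observe("engaged", retention=0.9)
p.observe("mentioned", retention=0.3)  # weaker evidence later on
print(p.label, p.retention)
```

The scheduler can still react to the low retention value by surfacing the node sooner, while the learner only ever sees the level move forward.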

Tracks books from Kindle (automatic sync) and physical books (photograph the cover). Maps content to curriculum nodes. While reading, I can tap and speak, and the app knows which chapter I'm in. I can photograph a page (finds page number, OCRs text). These captures are lightweight enough not to interrupt reading. One knowledge item per curriculum node, not per chapter: a second book on the same topic adds a source, not a duplicate. I’ve also prototyped integration with podcasts, but I find that simply hitting record after listening to a podcast and listing what I remember or found most interesting is usually enough.
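The "one item per node" rule can be sketched as an upsert keyed on the curriculum node. This is an illustration under assumed names (`items`, `record_reading`) and placeholder book titles, not the app's storage layer.

```python
items = {}  # node_id -> {"sources": [book titles]}

def record_reading(node_id, book):
    """Create the node's knowledge item once; later books only add a source."""
    item = items.setdefault(node_id, {"sources": []})
    if book not in item["sources"]:
        item["sources"].append(book)
    return item

record_reading("siege_of_syracuse", "Norwich, Sicily")
record_reading("siege_of_syracuse", "A second book on Sicily")  # placeholder title
record_reading("siege_of_syracuse", "Norwich, Sicily")          # re-reading: no duplicate

print(len(items), items["siege_of_syracuse"]["sources"])
```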

Go for a walk, talk about what you know about a prompted topic (selected by the system based on where new information will be most useful to it). Transcription → quality gate → dedup → analysis against curriculum and book sources → captured facts, missed facts, interesting connections, wonderings. Wonderings get routed to microlearning automatically.
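The stages after transcription can be sketched as plain functions. This is a toy version under loud assumptions: the real analysis presumably uses an LLM, whereas here keyword matching stands in for it, a minimum word count stands in for the quality gate, and a trailing question mark marks a wondering. All function names are hypothetical.

```python
import re

def quality_gate(transcript, min_words=5):
    """Reject transcripts too short to analyze (assumed threshold)."""
    return len(transcript.split()) >= min_words

def dedup(sentences):
    """Drop repeated sentences, ignoring case and punctuation."""
    seen, out = set(), []
    for s in sentences:
        key = re.sub(r"\W+", " ", s.lower()).strip()
        if key and key not in seen:
            seen.add(key)
            out.append(s)
    return out

def analyze(transcript, expected_facts):
    """expected_facts maps a fact id to a keyword that signals it was recalled."""
    if not quality_gate(transcript):
        return None
    sentences = dedup(re.split(r"(?<=[.?!])\s+", transcript.strip()))
    text = " ".join(sentences).lower()
    captured = [f for f, kw in expected_facts.items() if kw.lower() in text]
    missed = [f for f in expected_facts if f not in captured]
    wonderings = [s for s in sentences if s.endswith("?")]
    coverage = len(captured) / len(expected_facts) if expected_facts else 0.0
    return {"captured": captured, "missed": missed,
            "wonderings": wonderings, "coverage": coverage}

transcript = ("His court was in Palermo. He spoke six languages. "
              "He spoke six languages. Was he influenced by Islamic philosophy?")
expected = {"court": "Palermo", "languages": "six languages", "crusade": "Sixth Crusade"}
report = analyze(transcript, expected)
print(report)
```

In this toy run the missed fact and the wondering are exactly the two things that would be passed downstream: one to review with boosted priority, the other to microlearning.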

But the real value goes beyond grading. Those transcripts contain far richer data than a score — my actual words, the connections I made, the things I found interesting, my organizing framework for the topic. Recognizing this led to the knowledge profile system.

Every voice transcript is chunked into atomic pieces — individual captured facts, interesting connections, wonderings, the raw speech itself — each embedded as a vector and linked to curriculum nodes and entities. When I talk about the Fourth Crusade during a Constantinople elicitation, those chunks get linked not just to the Constantinople node but to every node and entity I mentioned: the Venetians, the Latin Empire, Enrico Dandolo.

This creates two retrieval strategies working in parallel. Relational: which chunks are directly linked to this node? Semantic: which chunks across all my transcripts are most similar to this topic, even if I never explicitly connected them? When the system generates a review card about Aristotle, it now finds my words about the Alexander-Aristotle relationship, my confusion about Nicomachus's role, my interest in French neoclassical drama's reception of the Poetics. The LLM can reference my own connections, address my specific misconceptions, and build on what I found interesting rather than generating generic questions.
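A toy sketch of the two strategies side by side. The three-dimensional vectors are made up for illustration (a real system would get them from an embedding model), and `relational`, `semantic`, and `retrieve` are hypothetical names.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

# Each chunk carries explicit node links and a (made-up) embedding vector.
chunks = [
    {"id": "c1", "text": "Alexander was tutored by Aristotle",
     "nodes": {"aristotle", "alexander"}, "vec": [0.9, 0.1, 0.0]},
    {"id": "c2", "text": "French neoclassical drama and the Poetics",
     "nodes": {"french_drama"}, "vec": [0.7, 0.6, 0.1]},
    {"id": "c3", "text": "The Venetians and the Fourth Crusade",
     "nodes": {"constantinople"}, "vec": [0.0, 0.1, 0.9]},
]

def relational(node_id):
    """Chunks explicitly linked to the node."""
    return [c for c in chunks if node_id in c["nodes"]]

def semantic(query_vec, k=2):
    """The k chunks most similar to the query, linked or not."""
    return sorted(chunks, key=lambda c: cosine(query_vec, c["vec"]), reverse=True)[:k]

def retrieve(node_id, query_vec, k=2):
    """Union of both strategies, relational hits first, without duplicates."""
    merged = {c["id"]: c for c in relational(node_id)}
    for c in semantic(query_vec, k):
        merged.setdefault(c["id"], c)
    return list(merged.values())

hits = retrieve("aristotle", query_vec=[1.0, 0.2, 0.0])
print([c["id"] for c in hits])
```

Here the chunk about the Poetics is never linked to the Aristotle node, so only the semantic pass finds it. That is the case the second strategy exists for.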

For domains with enough elicitation, the system synthesizes a domain knowledge portrait — a 300–500 word summary of my mental model, strong areas, gaps, interests, and organizing framework. This portrait is injected into every LLM prompt for that domain, so the system's understanding of me persists across sessions and deepens over time. It's not a score. It's a rich profile that gets more useful with every voice interaction.

Generated from books, voice, and microlearning. Enriched with temporal hooks, multi-source context, cross-curriculum connections. Factual questions generated deterministically from structured data; LLM only for contextual answers and analytical questions. After grading, "Also want to know" chips expand sideways.
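A minimal sketch of what "deterministic" can mean here: the question and answer are filled in from structured fields with a template, with no model call. The field names and the convention of negative years for BC are assumptions for illustration.

```python
def date_question(entity):
    """Build a question/answer pair from structured fields, no LLM involved."""
    year = entity["year"]
    era = "BC" if year < 0 else "AD"  # assumed convention: negative years are BC
    return {
        "question": f"In what year did {entity['event']} take place?",
        "answer": f"{abs(year)} {era}",
    }

card = date_question({"event": "the fall of Syracuse to Rome", "year": -212})
print(card["question"])
print(card["answer"])
```

Because the answer comes straight from the data, the same input always yields the same card, which makes factual questions cheap to regenerate and easy to check.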

When a wondering or follow-up triggers research: a structured card with primary sources, material evidence, places you can visit, and sideways follow-up questions. Each card generates quiz questions on expanding intervals. A wondering spoken during a drive on Tuesday becomes a researched card by Wednesday, quiz questions by Thursday, actively maintained by the following week.

I don't want an iPad app for reading PDFs with highlighting tools. During my PhD, I built elaborate pipelines — downloading papers, highlighting, extracting highlights, processing them. That worked as a full-time student. It doesn't work for an adult with a family.

I barely open my laptop outside work. I try not to look at my phone much when I'm home with my family. I treasure the hours of deep reading — by the fireplace early on a Saturday morning, or half an hour in bed. Those are sacred. The system should never make me feel like I should be annotating or feel guilty for not engaging with the app.

Stian Håklev@houshuang

In addition, I did a really fun book restoration workshop at Thaleia's Bookbindery (highly recommended! facebook.com/thaleiasbookbi…) where we reattached the bindings of the Plato book. Then I spent two long days in the library reading Euthyphor and Apologia with dictionaries etc...

3:00 PM · Jul 30, 2025

Stian Håklev@houshuang

Finally, I spent two days going around Athens and reading Plato dialogues in appropriate sites... I read all of Euthyphro right next to the King's Stoa where it "happened". I read Ion right next to the Dionysian theatre in Acropolis. I read the opening of Politeia in Pireaus...

3:00 PM · Jul 30, 2025

The same constraint shaped Alif. The way I learn Arabic might not be ideal for a full-time student, but the question isn't what's theoretically optimal — it's what will actually work in my life. 5% of my time with the app, 95% with books, podcasts, museum visits, historical movies. But that 5% should significantly improve retention, the ability to build on what I read, and the enjoyment of future reading.

The idea is simple: review information at expanding intervals — 1 day, 3 days, a week, a month. Each successful recall lengthens the interval. It started with physical flashcards and Leitner boxes in the 1970s, then went digital with SuperMemo and Anki. Medical students use it to memorize anatomy; language learners use it for vocabulary. It works spectacularly for discrete facts.
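The expanding-interval idea fits in a few lines. This sketch uses a fixed ladder of the intervals mentioned above and resets on a failed recall, which is closer to Leitner boxes than to the adaptive algorithms in SuperMemo or Anki. It is a simplification, not the scheduler Petrarca uses.

```python
from datetime import date, timedelta

INTERVALS = [1, 3, 7, 30]  # days: 1 day, 3 days, a week, a month

def next_review(step, recalled, today):
    """Return (new_step, due_date).

    A successful recall moves one rung up the ladder (capped at the top);
    a failed recall drops back to the first rung.
    """
    step = min(step + 1, len(INTERVALS) - 1) if recalled else 0
    return step, today + timedelta(days=INTERVALS[step])

today = date(2026, 1, 1)
print(next_review(0, True, today))   # moves from the 1-day rung to the 3-day rung
print(next_review(2, False, today))  # forgotten: back to the 1-day rung
```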

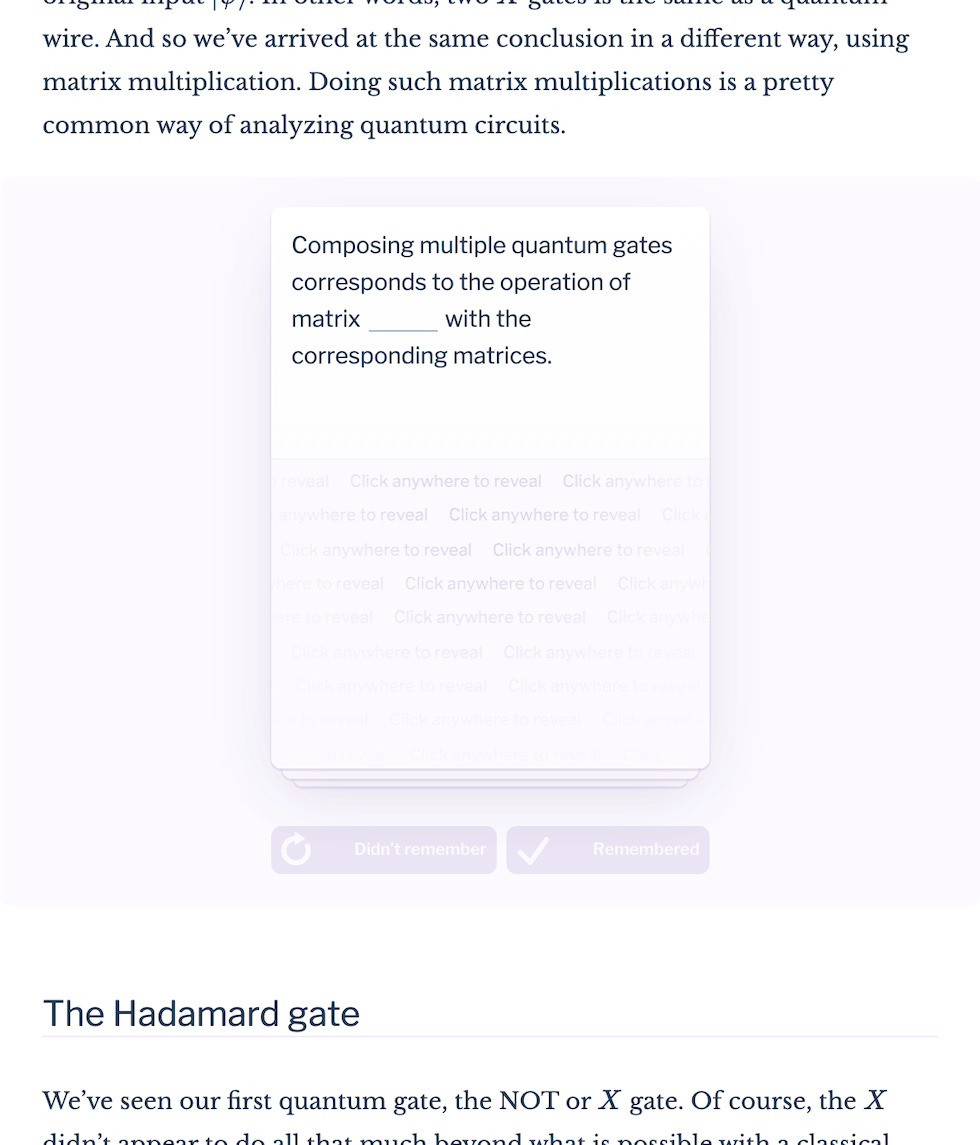

Michael Nielsen’s essay about how he used spaced repetition to quickly enter a new academic field, and about the importance of memory and factual recall for understanding complex texts, was an eye-opener for me, and extremely inspiring. With Andy Matuschak, he designed Quantum Country, and Andy continued with the Orbit project, embedding review prompts directly into prose so that initial learning and later review happen in one place.

If you accept the premise that spaced repetition can help you understand complex topics, there are two tensions. Writing good SRS cards is difficult and takes a lot of time. But using other people’s cards is not helpful, because of the gap between initial learning/encoding and repetition (which we also saw with Alif). Seeing an unknown fact as an SRS review card with no context is not a good way to learn, and quickly becomes frustrating.

The brilliant insight was to embed spaced repetition cards in a textbook. You learn a concept through carefully sequenced text, pedagogical examples, narrative and context. You immediately test your understanding, and the card used to test you then moves into a spaced repetition system, ensuring that you never forget what you learnt.

My son was participating in a quiz competition about local facts, and had to memorize a lot of names and dates, which is difficult for an 11-year-old. Inspired by Orbit, I created a curriculum of the 20 most important people and events, wrote micro-stories for each, and integrated quizzes that fed into a scheduling bucket: the PRIME → READ → TEST flow. Rich memory hooks tied facts together: Hanna Winsnes was born in 1789, the same year as the French Revolution. It worked: he learned a huge number of facts in a few days and found it fun. That pattern (author-crafted prompts in narrative context, temporal hooks, bounded curriculum) carried directly into Petrarca’s microlearning design.

In Petrarca, the idea is to capture the learning you do through authentic sources (podcasts, books, documentaries, historical fiction) and make sure you don’t forget it; there, the learning happens outside the app. There are also the micro-lessons triggered by “wonderings” in voice capture, or by clicking on little pre-generated prompts to “go further” when reading an answer. These function more like Orbit, where both the learning (rich hooks) and the recall/testing happen in the app.

In the broader personal knowledge management space, others have been experimenting too: SuperMemo's incremental reading (embedding cards within source context since 2000), Ryan Muller's fractal understanding, and various experiments with spaced attention — resurfacing ideas at intervals, not for drilling but for connection-making.

I've just started getting to where Petrarca feels genuinely useful day to day. It will need iteration and measurement over a much longer period: months, not weeks. Progress is harder to measure than with Alif, where I can see 1,300 lemmas growing at a clear rate. History knowledge doesn't have an equivalent metric. Some approaches I plan to test:

Voice knowledge sweeps. Monthly: "Tell me the history of Sicily, roughly chronological." Same prompt repeated = comparable data. I can measure coverage, connectivity, accuracy, organization. Goldsmith et al. found that similarity between a student's network and the expert's correlates with exam performance at r = .74. The curriculum graph is the expert network; voice transcripts reveal mine.
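One simple way to compare two concept networks is to average, over all concepts, the overlap between each concept's neighbors in the two graphs. The sketch below uses that neighborhood-overlap idea as an assumed stand-in; it is not necessarily the exact measure used in the study, and the edges are a toy example rather than real curriculum data.

```python
def neighbors(edges):
    """Build an undirected adjacency map from a list of (a, b) pairs."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj

def network_similarity(expert_edges, learner_edges):
    """Mean Jaccard overlap of each node's neighbor sets in the two graphs."""
    exp, lrn = neighbors(expert_edges), neighbors(learner_edges)
    nodes = set(exp) | set(lrn)
    if not nodes:
        return 0.0
    total = 0.0
    for n in nodes:
        a, b = exp.get(n, set()), lrn.get(n, set())
        total += len(a & b) / len(a | b)  # union is non-empty for any listed node
    return total / len(nodes)

expert = [("Frederick II", "Palermo"), ("Frederick II", "Sixth Crusade"),
          ("Palermo", "Arab Sicily")]
learner = [("Frederick II", "Palermo"), ("Frederick II", "Sixth Crusade")]

score = network_similarity(expert, learner)
print(round(score, 3))
```

Run monthly on the same prompt, a score like this would rise as the learner's transcript starts to mention connections that the curriculum graph already contains.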

Review card trends. Accuracy and interval growth by knowledge type. Are dates stabilizing? Are connections strengthening?

The knowledge portrait. The domain portrait is the most promising approach. Because it's generated from my actual voice transcripts, it captures not just what I know but how I organize it — whether I think chronologically or thematically, which connections I naturally make, what I find interesting versus what I struggle with. Regenerating it after new elicitations creates a longitudinal record: I can diff my April portrait against my July portrait and see what changed. The portrait also feeds back into the system — it guides which questions get generated, which topics get prioritized, and what the LLM assumes I already know.
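Diffing two portraits needs nothing more than the standard library. A minimal sketch, assuming portraits are stored as plain text with one statement per line; the two portraits below are invented placeholders, and `portrait_diff` is a hypothetical helper.

```python
import difflib

def portrait_diff(old, new, old_label="April", new_label="July"):
    """Return unified-diff lines between two portrait texts."""
    return list(difflib.unified_diff(
        old.splitlines(), new.splitlines(),
        fromfile=old_label, tofile=new_label, lineterm="",
    ))

april = "Strong: Norman Sicily\nGap: Arab period"
july = "Strong: Norman Sicily\nStrong: Arab period"

changes = portrait_diff(april, july)
print("\n".join(changes))
```

A line-based diff only works well if the portrait keeps a stable structure between regenerations. Free-form prose would likely need a semantic comparison instead.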

Whether any of this produces a trustworthy signal of deep learning is an open question. The early signs are encouraging — review cards generated with learner context feel noticeably more relevant than generic ones — but I need months of data before drawing conclusions.

In the meantime, I’m off to learn about the difference in military strategy between the battle of Himera and the battle of Salamis. And was there a secret conspiracy between the Carthaginians and the Persians to attack at exactly the same time?

The full code is here, but it requires quite a bit of setup, ideally a VM, several API keys etc. Very much a work in progress. Feel free to play with it, and I’d love to hear from you if you fork it and do interesting stuff. https://github.com/houshuang/petrarca