Multi-agent LLM systems divide complex tasks among specialized agents, let them coordinate, and get better results than any single model could achieve. But in production, latency bites, token costs spike, errors cascade through pipelines, and when something breaks, you often have no idea why.

ICLR 2026 featured a notable number of papers addressing these exact pain points. What follows are five problems you will likely encounter and the ICLR papers that provide concrete solutions.

In production, latency increases as agents are exposed to more data, heavier prompts, larger contexts, or more chunks in RAG pipelines. The primary latency bottleneck in multi-agent workflows is sequential execution. Most systems wait for one agent to complete its turn before the next begins, so inference time accumulates linearly. Chain four agents in sequence and your response latency is roughly four times that of a single agent call. This scales poorly as task complexity grows.

Speculative Actions borrows from speculative decoding in LLM inference to address this directly. The framework uses faster, smaller models to predict likely future actions, allowing multiple API calls to execute in parallel rather than sequentially. If the prediction is correct, you gain the speedup. If incorrect, the system reverts to standard sequential execution without losing correctness. Across environments including e-commerce, web search, and operating systems, this approach yields speedups of up to 30%.
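To make the predict-then-verify idea concrete, here is a minimal sketch in Python using `asyncio`. It is not the paper's implementation: `slow_policy`, `fast_guess`, `call_tool`, and the `DELAY` constant are hypothetical stand-ins, and the sketch assumes the speculated API call has no side effects, so a wrong guess can simply be discarded.

```python
import asyncio

DELAY = 0.05  # simulated latency per model or API call, in seconds (assumption)

async def slow_policy(state: str) -> str:
    """Hypothetical authoritative agent: slow, but its decision is final."""
    await asyncio.sleep(DELAY)
    return f"search:{state}"

async def fast_guess(state: str) -> str:
    """Hypothetical small model that predicts the agent's next action."""
    await asyncio.sleep(DELAY / 10)
    return f"search:{state}"

async def call_tool(action: str) -> str:
    """Hypothetical API call, assumed side-effect free so speculation is safe."""
    await asyncio.sleep(DELAY)
    return f"result({action})"

async def speculative_step(state: str, guesser=fast_guess):
    # Start the authoritative decision right away.
    truth_task = asyncio.create_task(slow_policy(state))
    # While it runs, guess the action and launch the tool call early.
    guess = await guesser(state)
    spec_task = asyncio.create_task(call_tool(guess))
    truth = await truth_task
    if guess == truth:
        # Hit: the tool call overlapped with the slow decision.
        return await spec_task, True
    # Miss: drop the speculative call and fall back to the sequential path.
    spec_task.cancel()
    return await call_tool(truth), False

if __name__ == "__main__":
    print(asyncio.run(speculative_step("running shoes")))
```

On a correct guess the tool call runs alongside the slow decision instead of after it. On a wrong guess the step costs about as much as the plain sequential path, and the returned result is the same either way.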

The second source of latency is communication overhead. Even if individual agents are fast, broadcasting every message to every agent creates unnecessary waiting. Graph-of-Agents tackles this by using model cards, which are summaries of each agent’s domain expertise and task specialization, to select only the relevant agents for a given task. Instead of routing through all six agents in a pool, GoA might select three. The result is maintained accuracy with reduced aggregate response time. This matters because latency improvements from parallel execution can be erased by inefficient routing.
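The selection step can be sketched as a simple relevance filter over model cards. This is an illustration only: the article does not describe how GoA actually scores relevance, so the keyword-overlap scoring, the `ModelCard` fields, and the `POOL` contents below are all assumptions.

```python
from dataclasses import dataclass

@dataclass
class ModelCard:
    name: str
    expertise: set[str]  # hypothetical summary of domains and task specializations

def select_agents(task_keywords: set[str], cards: list[ModelCard], k: int = 3) -> list[str]:
    """Return up to k agent names whose cards overlap the task keywords most."""
    scored = [(len(task_keywords & card.expertise), card.name) for card in cards]
    relevant = [(score, name) for score, name in scored if score > 0]
    # Highest overlap first; ties broken alphabetically for determinism.
    relevant.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in relevant[:k]]

# Hypothetical pool of six agents.
POOL = [
    ModelCard("coder", {"python", "sql", "debugging"}),
    ModelCard("analyst", {"finance", "sql", "statistics"}),
    ModelCard("lawyer", {"contracts", "compliance"}),
    ModelCard("writer", {"summaries", "editing"}),
    ModelCard("medic", {"diagnosis", "pharmacology"}),
    ModelCard("auditor", {"finance", "compliance"}),
]

if __name__ == "__main__":
    print(select_agents({"sql", "finance", "compliance"}, POOL))
```

Agents with no overlap are never contacted, which is where the saved waiting time comes from.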

Latency and cost are related but distinct. You can have a fast system that is expensive, or a slow system that is cheap. In practice, multi-agent systems often manage to be both slow and expensive because agents engage in excessive communication or maintain unbounded contexts across long interactions.

The ICLR 2026 papers cluster into three approaches: changing how agents communicate, reducing how often they communicate, and intervening at runtime to prevent waste.

Changing the medium. Natural language is an inefficient carrier for inter-agent coordination. KVComm proposes selective sharing of Key-Value pairs instead of raw text. The method uses a layer-wise selection strategy based on attention importance scores with a Gaussian prior to identify the most informative KV pairs. Transmitting just 30% of layers’ KV pairs achieves near-full performance compared to merging complete contexts. This is a substantial reduction in the data volume moving between agents.
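A rough sketch of layer-wise selection follows. The article does not say how KVComm combines attention importance with the Gaussian prior, so multiplying the two is an assumption here, as are the function name `select_layers`, the default prior centered on the middle layers, and the default width.

```python
import math
from typing import List, Optional

def select_layers(importance: List[float], keep_ratio: float = 0.3,
                  prior_mean: Optional[float] = None,
                  prior_std: Optional[float] = None) -> List[int]:
    """Pick the layers whose KV pairs would be shared with another agent."""
    n = len(importance)
    # Assumed defaults: prior centered on the middle layer, width of n / 4.
    mean = (n - 1) / 2 if prior_mean is None else prior_mean
    std = n / 4 if prior_std is None else prior_std
    # Assumed combination rule: attention importance weighted by the prior.
    scores = [
        imp * math.exp(-((i - mean) ** 2) / (2 * std ** 2))
        for i, imp in enumerate(importance)
    ]
    k = max(1, round(keep_ratio * n))
    top = sorted(range(n), key=lambda i: scores[i], reverse=True)[:k]
    return sorted(top)

if __name__ == "__main__":
    # Ten layers with equal importance: the prior alone decides.
    print(select_layers([1.0] * 10, prior_mean=5.0))
```

With `keep_ratio=0.3`, only the selected layers' KV pairs would cross the agent boundary; the rest stay local.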

Reducing frequency. Some communication is simply unnecessary. PCE reduces the need for constant back-and-forth by converting fragmented assumptions into structured decision trees. Each path through the tree is scored by likelihood, goal-directed gain, and execution cost. This allows agents to select rational actions under uncertainty without repeatedly pinging other agents for clarification. The framework outperforms communication-heavy baselines in success rate while using fewer tokens.
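The path-scoring idea can be sketched as follows. The three inputs (likelihood, goal-directed gain, execution cost) come from the description above, but the way they are combined is an assumption: this sketch uses expected gain minus weighted cost, and the `Path` class and example numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Path:
    actions: List[str]
    likelihood: float  # probability that the path's assumptions hold
    gain: float        # progress toward the goal if they do
    cost: float        # execution cost, e.g. tokens or seconds

def score(path: Path, cost_weight: float = 1.0) -> float:
    """Assumed scoring rule: expected gain minus weighted execution cost."""
    return path.likelihood * path.gain - cost_weight * path.cost

def best_path(paths: List[Path], cost_weight: float = 1.0) -> Path:
    return max(paths, key=lambda p: score(p, cost_weight))

# Hypothetical decision tree flattened into three root-to-leaf paths.
PATHS = [
    Path(["ask peer for clarification", "act"], likelihood=0.95, gain=10.0, cost=4.0),
    Path(["act on the likely assumption"], likelihood=0.80, gain=10.0, cost=1.0),
    Path(["act on the unlikely assumption"], likelihood=0.30, gain=10.0, cost=1.0),
]

if __name__ == "__main__":
    print(best_path(PATHS).actions)
```

When communication is priced in, acting on the likely assumption beats asking a peer. Set `cost_weight=0` and the clarification path wins again, which shows how the cost term is what suppresses unnecessary messages.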

For long-horizon tasks where context accumulates over many turns, MEM1 maintains constant context size through memory consolidation. It uses reinforcement learning to update a compact shared internal state, strategically discarding irrelevant information from prior turns rather than appending everything. Compared to standard full-context prompting, MEM1 reduces memory usage by 3.7x while improving performance by 3.5x on multi-hop QA tasks.

Cost and latency are optimization problems. Error propagation is a correctness problem, and it tends to be more painful in production.

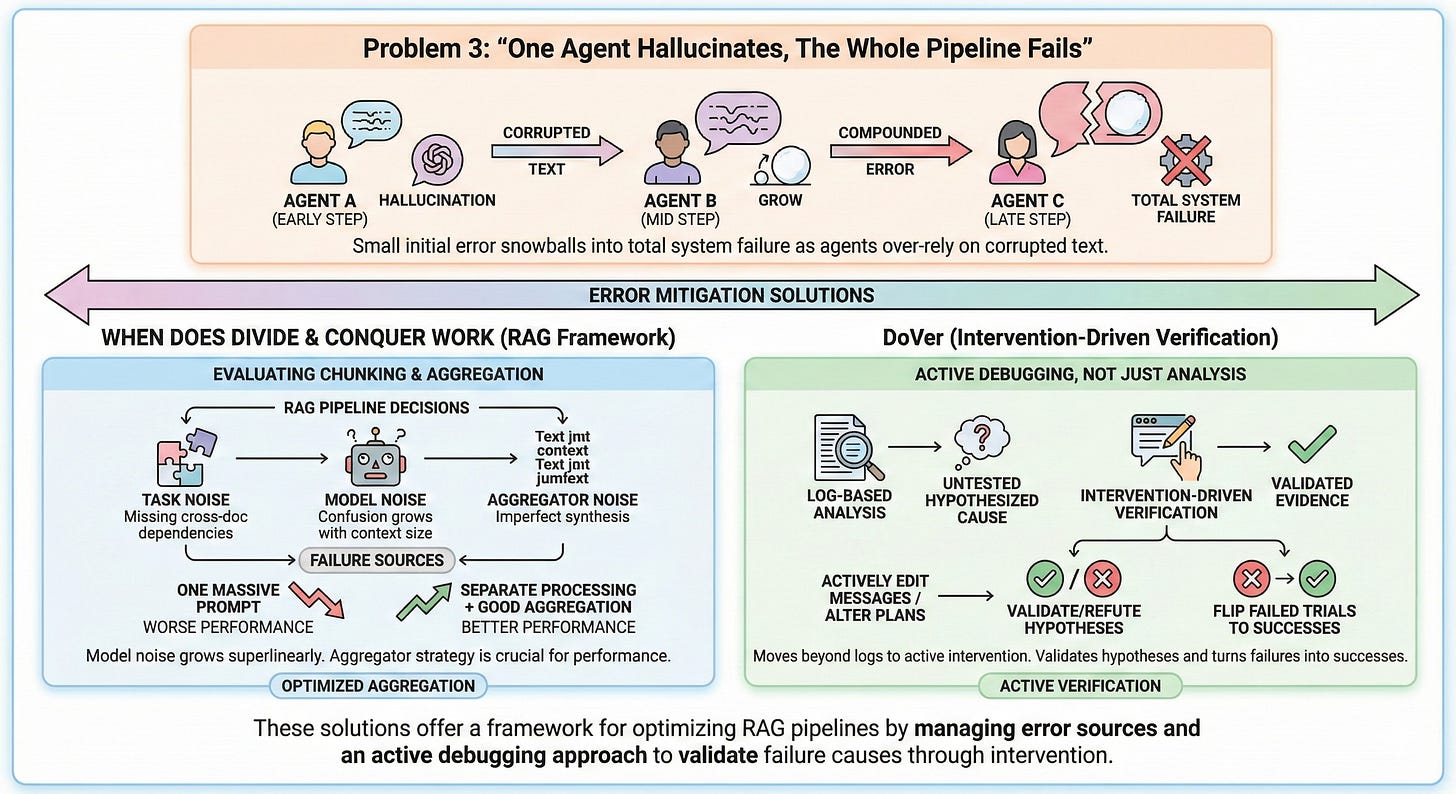

When agents relay information through text, errors compound. A hallucination in an early step gets passed downstream as if it were fact. Subsequent agents over-rely on the corrupted text rather than original evidence because they have no access to that evidence. The result is that a small initial error snowballs into total system failure.

If you run a RAG pipeline, you have made chunking and aggregation decisions, whether or not you thought about them explicitly. The paper “When Does Divide and Conquer Work” gives you a framework for evaluating those choices. It identifies three failure sources: task noise (when your chunks lose cross-document dependencies), model noise (confusion that grows with context size), and aggregator noise (imperfect synthesis of retrieved results). The key finding for RAG practitioners: model noise grows superlinearly with input length. This means stuffing all retrieved chunks into one massive prompt can perform worse than processing chunks separately with a weaker model and aggregating well. Your aggregator strategy matters more than you think.
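A toy calculation shows why superlinear growth favors chunking. Everything in it is an assumption made for illustration, not the paper's model: noise is taken to be `c * n ** alpha` with `alpha > 1`, per-chunk noise is simply summed, task noise is ignored, and the constants are made up.

```python
def single_pass_noise(n_tokens: float, alpha: float = 1.5, c: float = 1e-4) -> float:
    """Toy model noise for one prompt containing all n_tokens."""
    return c * n_tokens ** alpha

def chunked_noise(n_tokens: float, n_chunks: int, alpha: float = 1.5,
                  c: float = 1e-4, aggregator_noise: float = 0.0) -> float:
    """Toy noise for n_chunks equal prompts plus one aggregation step."""
    per_chunk = c * (n_tokens / n_chunks) ** alpha
    return n_chunks * per_chunk + aggregator_noise

if __name__ == "__main__":
    n = 8000
    print("single prompt:       ", round(single_pass_noise(n), 2))
    print("8 chunks, good merge:", round(chunked_noise(n, 8, aggregator_noise=5.0), 2))
    print("8 chunks, poor merge:", round(chunked_noise(n, 8, aggregator_noise=60.0), 2))
```

Under these assumptions, splitting into `k` chunks scales the model-noise term by `k ** (1 - alpha)`, which is below 1 whenever `alpha > 1`. The advantage disappears if the aggregator adds more noise than chunking removed.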

For active debugging rather than analysis, DoVer moves beyond log-based failure attribution to intervention-driven verification. Instead of just hypothesizing that agent X caused the failure, DoVer actively edits messages or alters plans to see if the failure resolves. This validates or refutes 30-60% of failure hypotheses and flips up to 28% of failed trials into successes. The key insight is that log analysis produces untested hypotheses, while intervention produces evidence.
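The difference between hypothesizing and intervening can be sketched in a few lines. This is not DoVer's API: `intervene`, `toy_replay`, and the numeric trace are hypothetical, and in a real system the replay would re-run the downstream agents from the edited step instead of evaluating a fixed rule.

```python
from typing import Callable, List

def intervene(trace: List[str], step: int, replacement: str,
              replay: Callable[[List[str]], bool]) -> bool:
    """Test the hypothesis 'the message at `step` caused the failure'.

    Returns True if replacing that one message makes the run succeed.
    """
    edited = list(trace)  # leave the logged trace untouched
    edited[step] = replacement
    return replay(edited)

def toy_replay(messages: List[str]) -> bool:
    """Hypothetical stand-in for re-running the system on a message history.

    The toy task succeeds when the reported figures sum to 6.
    """
    return sum(int(m) for m in messages) == 6

if __name__ == "__main__":
    failed_trace = ["1", "5", "3"]  # the logged run failed: 1 + 5 + 3 != 6
    print("blame step 1:", intervene(failed_trace, 1, "2", toy_replay))
    print("blame step 0:", intervene(failed_trace, 0, "0", toy_replay))
```

The first hypothesis is validated because the edit flips the run to success. The second is refuted because the failure persists, which is evidence that log reading alone would not have produced.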

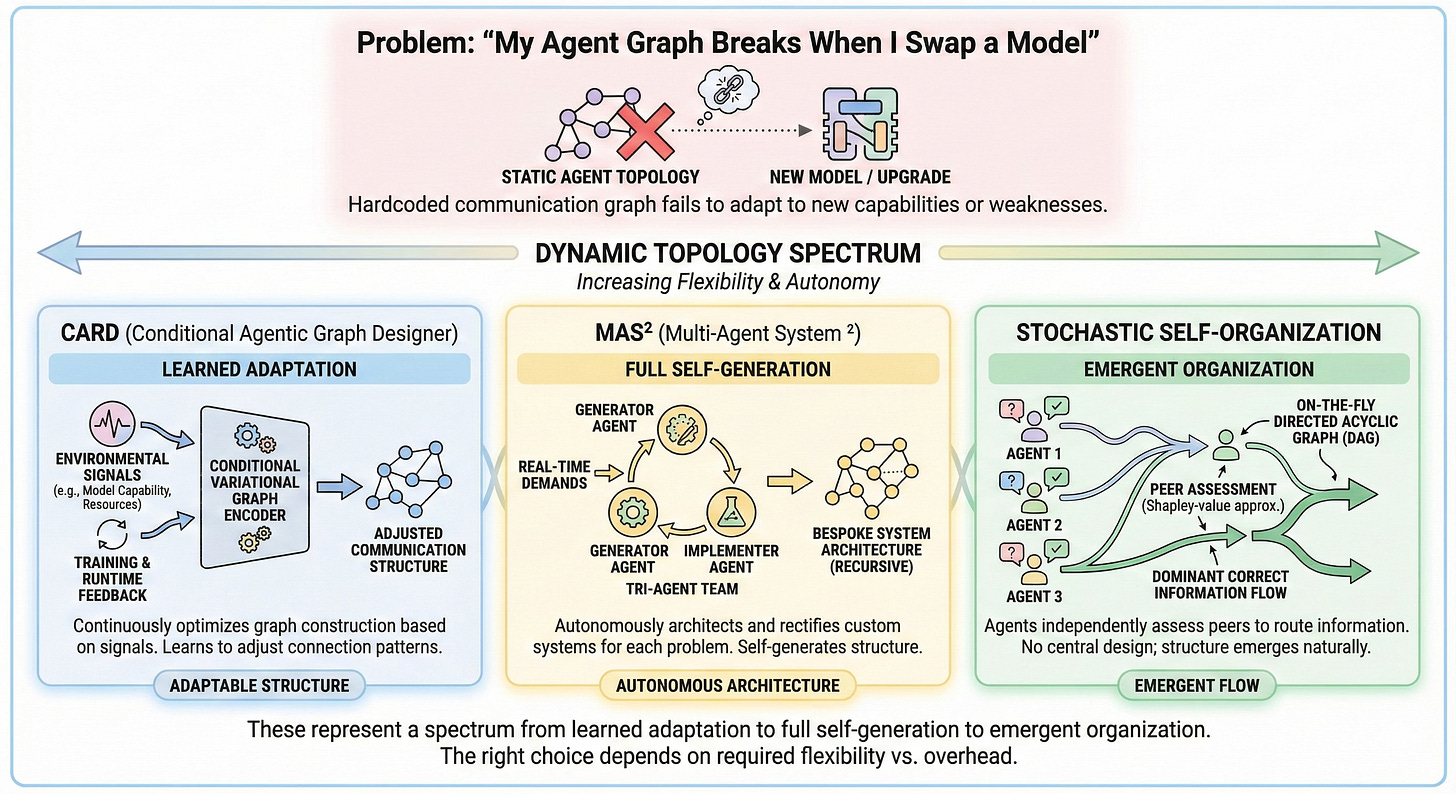

The problems above assume your agent topology is fixed and you are optimizing within it. But static topologies are themselves a source of fragility. When you upgrade a model, change an API provider, or alter a knowledge source, a hardcoded communication graph often fails to leverage new capabilities or compensate for new weaknesses.

Three papers offer different philosophies for addressing this.

CARD (Conditional Agentic Graph Designer) treats graph construction as a continuous optimization problem. It incorporates environmental signals, such as shifts in model capability or resource availability, into a conditional variational graph encoder. This enables topology adaptation at both training and runtime. The system learns to adjust its communication structure rather than relying on a fixed design.

MAS² takes a more radical approach. Instead of adapting a fixed architecture, it introduces a “generator-implementer-rectifier” tri-agent team that autonomously architects bespoke systems for each problem. The framework recursively self-generates and rectifies its own structure in response to real-time demands. This achieves performance gains of up to 19.6% over static systems when leveraging previously unseen model backbones.

Stochastic Self-Organization avoids learned adaptation entirely in favour of emergent structure. Agents independently generate responses and then assess peer contributions using Shapley-value approximations. A directed acyclic graph is constructed on-the-fly to route information from high-contribution agents to others. No external judges or additional training are required. Correct answers naturally dominate the information flow even when using weaker model backends.
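A minimal sketch of the two ingredients, contribution scoring and graph construction, might look like this. It uses the standard permutation-sampling estimator for Shapley values. The value function, the `WEIGHTS` table, and the rule of drawing an edge from every higher-scoring agent to every lower-scoring one are assumptions, since the article does not give the paper's exact choices.

```python
import random
from typing import Callable, Dict, FrozenSet, List, Tuple

def shapley_estimates(agents: List[str],
                      value: Callable[[FrozenSet[str]], float],
                      samples: int = 200, seed: int = 0) -> Dict[str, float]:
    """Monte Carlo Shapley values: average marginal gain over random orderings."""
    rng = random.Random(seed)
    totals = {agent: 0.0 for agent in agents}
    for _ in range(samples):
        order = list(agents)
        rng.shuffle(order)
        coalition: List[str] = []
        previous = value(frozenset())
        for agent in order:
            coalition.append(agent)
            current = value(frozenset(coalition))
            totals[agent] += current - previous
            previous = current
    return {agent: total / samples for agent, total in totals.items()}

def build_dag(contribution: Dict[str, float]) -> List[Tuple[str, str]]:
    """Edges run from higher to lower contribution, so no cycle can form."""
    ranked = sorted(contribution, key=contribution.get, reverse=True)
    return [(src, dst) for i, src in enumerate(ranked) for dst in ranked[i + 1:]]

# Hypothetical value function: each agent's response adds a fixed amount.
WEIGHTS = {"a": 3.0, "b": 1.0, "c": 2.0}

def toy_value(coalition: FrozenSet[str]) -> float:
    return sum(WEIGHTS[name] for name in coalition)

if __name__ == "__main__":
    scores = shapley_estimates(list(WEIGHTS), toy_value)
    print(scores)
    print(build_dag(scores))
```

Because the toy value function is additive, each agent's estimate equals its own weight. With a realistic value function, such as agreement between responses, the estimates would depend on how agents' contributions interact.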

These represent a spectrum from learned adaptation (CARD) to full self-generation (MAS²) to emergent organization (Stochastic Self-Organization). The right choice depends on how much architectural flexibility you need and how much overhead you can tolerate.

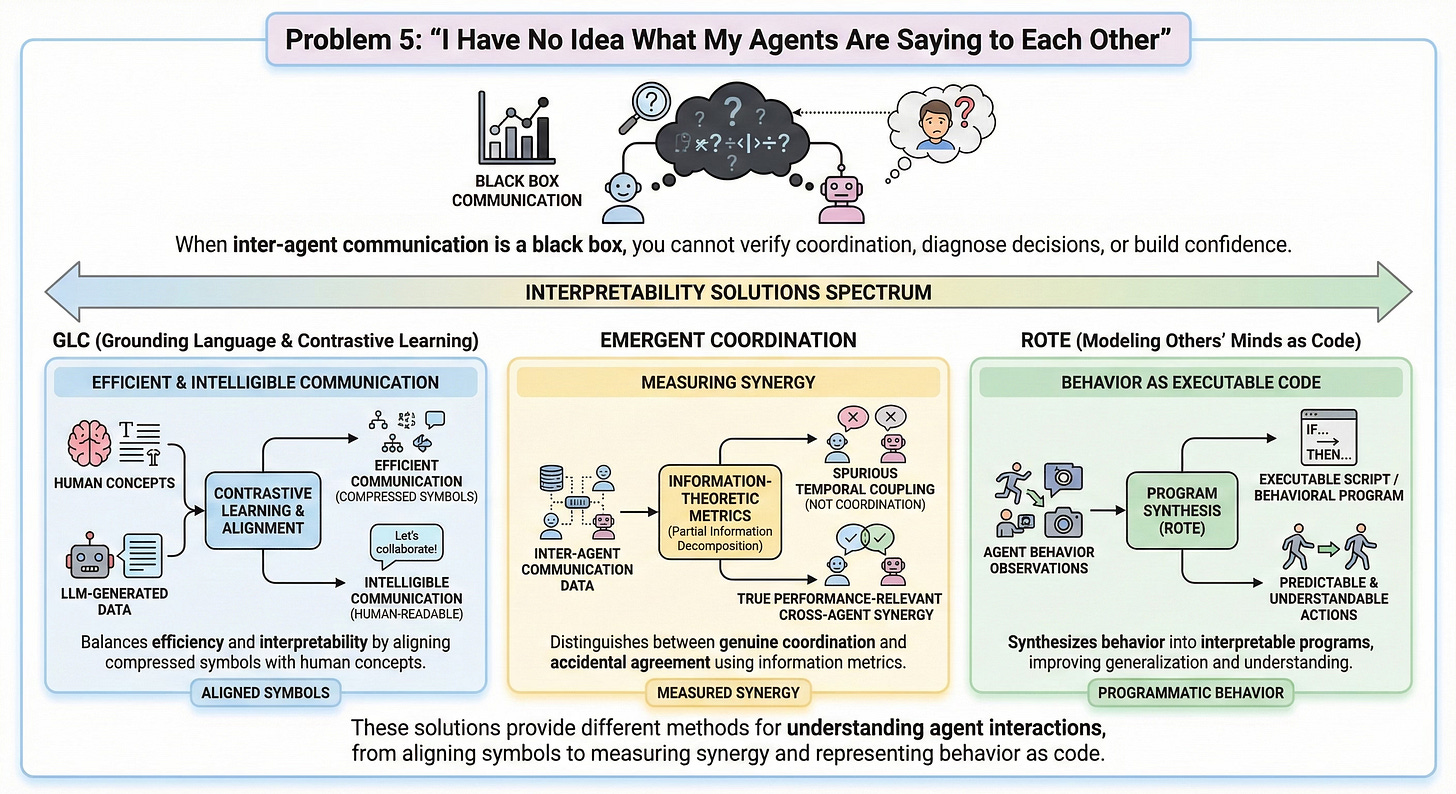

Efficiency and correctness matter, but so does understanding. When inter-agent communication is a black box, you cannot verify that coordination is actually happening, diagnose why a decision was made, or build confidence that the system will behave predictably in new situations.

GLC (Grounding Language and Contrastive Learning) addresses the fundamental tension between efficiency, performance, and interpretability. Efficient communication often means compressed representations that humans cannot read. Interpretable communication often means verbose natural language that wastes tokens. GLC learns discretized, compressed communication symbols and then semantically aligns them with human concepts using LLM-generated data and contrastive learning. The result is communication that is both efficient and intelligible to human observers.

Even with interpretable messages, you may not know whether your agents are genuinely coordinating or just happening to produce correlated outputs. Emergent Coordination provides information-theoretic metrics to answer this question. Using partial information decomposition of time-delayed mutual information, the framework distinguishes between spurious temporal coupling and true performance-relevant cross-agent synergy. This allows you to measure whether your prompt designs are creating a higher-order collective or just a collection of individuals that happen to agree.
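The basic quantity involved, time-delayed mutual information, is easy to compute for discrete signals. The sketch below covers only that building block and leaves out the partial information decomposition the paper applies on top of it. The function names and the binary example sequences are assumptions made for illustration.

```python
import math
from collections import Counter
from typing import Hashable, Sequence

def mutual_information(xs: Sequence[Hashable], ys: Sequence[Hashable]) -> float:
    """Plug-in estimate of I(X; Y) in bits from paired discrete samples."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px, py = Counter(xs), Counter(ys)
    return sum(
        (count / n) * math.log2((count / n) / ((px[x] / n) * (py[y] / n)))
        for (x, y), count in joint.items()
    )

def time_delayed_mi(a: Sequence[Hashable], b: Sequence[Hashable], lag: int = 1) -> float:
    """I(a[t]; b[t + lag]): how much agent a's past tells us about agent b's future."""
    if len(a) != len(b) or not 1 <= lag < len(a):
        raise ValueError("sequences must have equal length and 1 <= lag < length")
    return mutual_information(a[:-lag], b[lag:])

if __name__ == "__main__":
    agent_a = [0, 1, 1, 0, 1, 0, 0, 1]
    follower = [0] + agent_a[:-1]  # copies agent_a one step later
    constant = [0] * len(agent_a)  # ignores agent_a entirely
    print(round(time_delayed_mi(agent_a, follower), 3))
    print(round(time_delayed_mi(agent_a, constant), 3))
```

A high value only shows that one agent's past predicts another's future. Deciding whether that coupling is synergistic and performance-relevant is the job of the decomposition step, which this sketch does not attempt.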

Modeling Others’ Minds as Code (ROTE) offers a different form of interpretability by treating agent behaviour as executable scripts rather than opaque policies. The insight is that many social interactions follow predictable routines that can be represented as behavioural programs in code. By synthesizing these programs, you can predict and understand agent actions from sparse observations. The approach improves out-of-sample generalization by 50% compared to traditional behaviour cloning while providing a clear window into the routines your agents follow.