The cost of human sentience is effort.

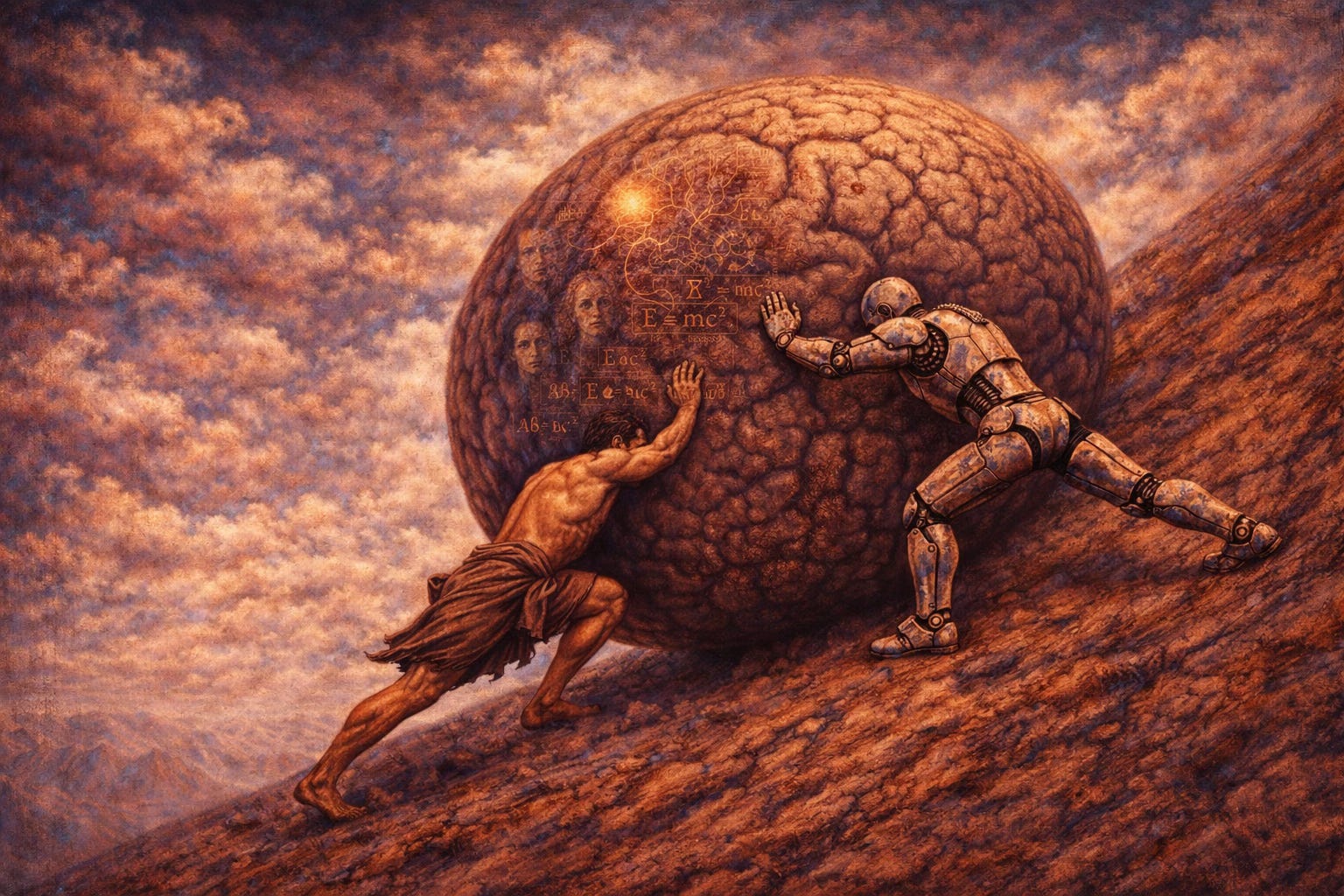

The farmer’s carry, the crash of USAir Flight 427, and artificial intelligence appear unrelated to one another: the first a crude weightlifting exercise, the second a technical aviation disaster, the third a de facto cognitive exoskeleton for humans. But taken together, they reveal the same principle:

Human sentient systems do not remain coherent by default.

They remain reliable only when they bear significant load, detect deviation early, and correct themselves. When that responsibility is silently outsourced, their internal calibration predictably decays.

When sentient effort is offloaded, whether by weightlifting straps in the farmer’s carry, autopilot in commercial flight, or artificial intelligence in human cognition, performance may improve, but calibration decays. The results can be catastrophic.

I have been lifting weights for decades, and as I have aged, I have found myself increasingly drawn to the farmer’s carry. A farmer’s carry is exactly what the name implies. You pick up something very heavy in each hand and walk with it. There are no machines, no leverage, no spectacle. The exercise ends when posture slips, when the weight pulls you offline, or when your grip fails. The rules are simple and uncompromising, and there is nowhere to hide.

What looks brutish on the surface turns out to be remarkably elegant. A farmer’s carry is not about grip strength in the narrow sense. Grip is simply where failure becomes manifest first. What is actually being trained is a network. Carrying heavy weight while walking requires continuous coordination between balance, posture, sensation, and motion. The hands are merely the most obvious point of failure, which makes them diagnostic rather than heroic.

In a true farmer’s carry, every sway is tallied. At the lowest level, force sensors embedded in muscles and tendons constantly measure tension and stretch, adjusting output to prevent injury. The spinal cord integrates this information reflexively, making rapid corrections long before conscious thought can intervene. Sensory information about joint position and movement flows upward while the inner ear recalibrates balance with every step. The motor cortex initiates the task but quickly relinquishes moment-to-moment control. The cerebellum then predicts, compares, and corrects balance, smoothing motion to minimize error.

Every step introduces a new perturbation. All systems are on, under maximal load. Nothing is buffered, nothing is abstracted, and nothing is silently corrected. The system remains coherent only if deviations are detected early and addressed immediately and proportionately. When the carry is done well, it feels quiet and contained. When it is done poorly, it becomes chaotic and unstable, with a real risk of injury. That difference is not aesthetic or motivational. It is structural.

This helps explain a well-known but often misunderstood finding: grip strength predicts all-cause mortality and dementia risk as we age. This is not because strong hands protect the heart or the brain. It is because grip reflects the integrity of the nervous system’s internal wiring. White matter tracts, the long fibers that allow distant brain regions to coordinate, degrade before raw strength disappears. When that wiring falters, integration fails first. Grip is simply where the failure becomes most measurable.

This is also why external grip supports are both seductive and dangerous. Weightlifting straps do not just reduce the felt weight; they remove information. They bypass the earliest warning signal (grip integrity) and allow the task to continue without full musculoskeletal engagement. A weightlifting belt does something similar for the spinal stabilizers of the back, offloading work that would otherwise maintain internal calibration. Used intentionally and sparingly, these tools can extend capacity. Used routinely, they mute feedback. Fragility accumulates quietly, and the first clear signal of failure often arrives catastrophically, as injury or abrupt task collapse.

The same failure mode appears far from the gym. In 1994, USAir Flight 427 crashed after a rare rudder malfunction forced the aircraft into an abrupt unrecoverable roll after autopilot disengagement. The pilots were not reckless, inattentive, or poorly trained. The problem was that automation had quietly compensated for instability up to the point where it could not. When control effectively reverted to the human, it did so without warning and without the gradual sensory cues that normally precede failure. The system appeared stable until it suddenly was not. By the time the deviation became visible, it was already too large to correct. Autopilot did not remove load; it removed continuous low-level pilot feedback that might have averted disaster.

The farmer’s carry teaches the opposite lesson. It refuses silent correction and demands continuous authorship. You must “fly the plane” the entire time. That is why it generalizes so cleanly beyond exercise. Sentience is often treated as a capacity, defined by awareness or experience, but biologically it is also a responsibility. The responsibility of human sentience is effort. A sentient system must integrate competing signals, suppress destabilizing impulses, detect error early, and correct it before collapse. All of this is metabolically expensive, but none of it is optional.

Artificial intelligence belongs in this lineage not because it removes effort, but because it offloads integration itself. Unlike previous tools, it does not merely assist human execution; it can substitute for composition, planning, and judgment if used uncritically. When responsibility for integration is transferred, calibration does not disappear immediately. It decays quietly, becoming visible only under load.

There is already evidence of this effect in medicine. In colonoscopy, AI-assisted polyp detection improves short-term detection rates but can also deskill the operator. When lesions are highlighted in real time, the endoscopist apparently practices less of the slow, continuous visual search that once trained perception and judgment. Detection feels easier and safer while the system is active. But when a lesion falls outside the model’s sensitivity, or when the aid is unavailable, performance degrades. What has atrophied is not anatomical knowledge but the preconscious calibration that allows a physician to notice subtle abnormalities without being prompted. When a clinically significant colon polyp is missed, the consequence can be a preventable cancer discovered years later.

Artificial intelligence undermines human sentient responsibility in a way no previous tool has. AI removes friction not just from execution but from information integration and judgment. Human systems that are no longer used or required do not remain robust by default. They function well in stable conditions but may fail under load, often catastrophically.

In the farmer’s carry, there is no choice once the weight is in your hands. The choice happens beforehand. Do you carry the load yourself, or do you offload it to weightlifting straps, a weightlifting belt, or both? AI presents the same fork. Used as a strap or an autopilot, it absorbs deviation silently and leaves the human under-calibrated. Used as a demand amplifier, it increases load while preserving human sentient responsibility. The difference is not technological. It is a matter of sentient architecture. Who remains at the controls? Who owns error correction?

The farmer’s carry endures because it cannot be abstracted. It insists on presence. It looks brutish, but it is elegant because nothing unnecessary survives. It reminds us that some forms of intelligence exist only when something heavy has to be carried, step by step, without assistance. A world optimized entirely for ease risks becoming a prison built from comfort, not because effort is virtuous, but because effort is how sentient systems stay awake. Remove it indiscriminately, and what remains may still be conscious, fluent, and productive, but no longer fully responsible.

Effort, with all its cost and responsibility, whether in a body, a cockpit, or a civilization, is the price of remaining sentient.