From our projections, we could have run our entire cloud workload on just two of these chips at a time. That alone would be a problem, because when one breaks, I would lose sleep. The other issue with buying these chips is that server equipment is remarkably difficult to purchase reliably. Typical consumer supply chains don’t exist, and our needs are minuscule compared to those of a hyperscaler. Thankfully, we’ve formed a great relationship with ASA Computers, where I can text my rep any SKU and he tells me the price, whether it’s in a US facility, and, if not, how long it’ll take to come over from Taiwan.

In keeping with the theme of the challenge, I can’t operate in full compliance with the Berry Amendment, nor would I want to as a globally operational company. Vendor diversity therefore extends to procurement: in addition to ASA, we’ve spent two tortuous weeks trying to secure delivery from NeweggBusiness, ordered second-hand from eBay, set up an account with Supermicro’s eStore, and even bought directly from liquidation sales… one of the benefits of living in Mountain View, CA.

Not using a tier-1 OEM server vendor means giving up the predictive failure monitoring that comes with a paid support agreement, but our thesis is simple: buy more warm and cold spares. As the price of equipment continues to plummet relative to hyperscaler pricing, that support simply doesn’t add enough value for our use case. To mitigate the risk, we run Netbox, track every single SKU in our infrastructure, and follow a simple rule: keep N × 2 resources available to us (so we never exceed 50% utilization), plus one additional cold spare of everything. A cold spare is either already racked and unplugged or, if it’s an internal component, binned in a Pelican case at the bottom of a cabinet. I don’t really know what I’m doing, but this seems like a reasonable safety net, and everyone can sleep peacefully at night.
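The spares rule is simple arithmetic, and writing it down makes the 50% ceiling explicit. This is a minimal sketch, assuming a single component type at a time; `plan_inventory`, `utilization`, and `ComponentPlan` are hypothetical names, not anything pulled from our Netbox setup.

```python
# Hypothetical sketch of the "N x 2 plus one cold spare" rule described above.
# Names and numbers are illustrative, not taken from our actual Netbox data.
from dataclasses import dataclass


@dataclass
class ComponentPlan:
    needed: int       # N: units required to carry the projected workload
    active: int       # units kept available (N x 2)
    cold_spares: int  # racked-but-unplugged or binned spares
    total: int        # everything we need to own


def plan_inventory(needed: int, cold_spares: int = 1) -> ComponentPlan:
    """Return how many units to own so utilization never exceeds 50%."""
    if needed < 1:
        raise ValueError("needed must be at least 1")
    active = needed * 2
    return ComponentPlan(needed, active, cold_spares, active + cold_spares)


def utilization(load_units: float, active: int) -> float:
    """Fraction of active capacity consumed by the current load."""
    return load_units / active


if __name__ == "__main__":
    plan = plan_inventory(needed=2)  # e.g. a workload that fits on two CPUs
    print(plan)
    print(f"worst-case utilization: {utilization(plan.needed, plan.active):.0%}")
```

For a workload that fits on two CPUs, this asks for four active units plus one cold spare, five in total, and the full projected load lands at exactly 50%.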

It’s also worth noting that every component has its own procurement quirks. For example, it’s much harder to buy Kioxia drives directly without feeling like you’re getting hosed, so we buy second-hand NVMe drives from eBay whose S.M.A.R.T. metrics show low power-on hours. At the same time, I can just go to the Solidigm store on Amazon and buy a D7-P5620 online 6. While the irony isn’t lost on me, I’ve made sure to spend our Amazon Business account’s remaining Amazon points on as much equipment as possible to help the migration off AWS.
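Vetting a second-hand drive comes down to reading a handful of NVMe health fields. Here is a minimal sketch, assuming smartmontools 7.x, whose `smartctl --json -a /dev/nvmeX` output includes an `nvme_smart_health_information_log` object. The thresholds and the `screen_drive` helper are hypothetical choices for illustration, not a standard.

```python
# Hypothetical screening helper for second-hand NVMe drives. It reads the JSON
# that `smartctl --json -a /dev/nvmeX` prints (smartmontools 7.x) and applies
# illustrative thresholds; the limits below are assumptions, not a standard.
import json
import subprocess

MAX_POWER_ON_HOURS = 2_000  # assumed cutoff for "low-hour"
MAX_PERCENTAGE_USED = 5     # NVMe wear estimate as a percent of rated endurance
MAX_MEDIA_ERRORS = 0


def read_smart(device: str) -> dict:
    """Run smartctl and return its parsed JSON (requires smartmontools)."""
    # smartctl's exit status is a bitmask, so a non-zero code is not fatal here.
    out = subprocess.run(["smartctl", "--json", "-a", device],
                         capture_output=True, text=True, check=False)
    return json.loads(out.stdout)


def screen_drive(smart: dict) -> list[str]:
    """Return a list of reasons to reject the drive (empty list means accept)."""
    log = smart.get("nvme_smart_health_information_log")
    if log is None:
        return ["no NVMe health log in smartctl output"]
    problems = []
    if log.get("critical_warning", 0) != 0:
        problems.append("critical warning flag set")
    if log.get("power_on_hours", 0) > MAX_POWER_ON_HOURS:
        problems.append(f"{log['power_on_hours']} power-on hours")
    if log.get("percentage_used", 0) > MAX_PERCENTAGE_USED:
        problems.append(f"{log['percentage_used']}% of rated endurance used")
    if log.get("media_errors", 0) > MAX_MEDIA_ERRORS:
        problems.append(f"{log['media_errors']} media errors")
    if log.get("available_spare", 100) < log.get("available_spare_threshold", 0):
        problems.append("available spare below threshold")
    return problems
```

Keeping `screen_drive` separate from `read_smart` means it can be checked against a seller’s posted `smartctl` output before buying, then re-run on the real device once it arrives.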

For other parts like network cards, fiber optic cables, and transceivers, we’ve used other suppliers such as fs.com (from Shenzhen, China). No matter the supplier, every purchasing decision is logged and tracked so that any part can be replaced. Fiber optics will get their own post, but our primary concern there is preventing vendor lock-in.

As an extra-curricular activity, I would like to expand our host diversity with Clearwater Forest chips from Intel Fab 52 in Chandler, Arizona when they become available 7. More immediately, nothing technical prevents us from incorporating the AmpereOne platform today. Reaching three different vendor platforms would be nice, but it comes with significant operational, research, implementation, and maintenance overhead.

Anitya & Governance

The world is unstable. I don’t trust any regime. I want full autonomy.

Lest you think I exaggerate, AWS ME-CENTRAL-1 and ME-SOUTH-1 got struck by fucking missiles last week (I believe more specifically it was a HESA Shahed 136 in Bahrain) 8, but whatever. These facilities are increasingly target-rich environments, and often sit vulnerable without anti-aircraft defense systems.

At around 4:30 AM PST, one of our Availability Zones (mec1-az2) was impacted by objects that struck the data center, creating sparks and fire. The fire department shut off power to the facility and generators as they worked to put out the fire. 9

Our answer is simple: it’s cheaper and easier to maintain two locations in different parts of the world that hopefully don’t get bombed at the same time, and then rely on the elegance and resilience of the Border Gateway Protocol (BGP) to route around whichever site goes dark. The catch is that securing (1) electricity, (2) IP transit, and (3) cabinets with that mindset is easier said than done. At a very high level, we want every cabinet, once it is online, to be fully capable of having operations moved onto and off of it remotely.
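One common way to get that behavior is for both sites to announce the same prefix, with the backup site making its route look longer through AS-path prepending. If the primary withdraws its announcement, the rest of the Internet falls back to the backup. This toy model only compares AS-path length; prepending is one widely used technique, not necessarily our exact configuration. The site name `us-west`, the transit ASNs (RFC 5398 documentation range), and the prefix (RFC 5737) are hypothetical.

```python
# Toy model (not a real BGP implementation) of how a remote network picks
# between two of our sites announcing the same prefix. Only AS-path length is
# compared; real BGP evaluates local preference and other attributes first.
from dataclasses import dataclass
from typing import Optional

OUR_ASN = 13362


@dataclass(frozen=True)
class Announcement:
    site: str
    prefix: str
    as_path: tuple[int, ...]  # leftmost ASN is the neighbor, rightmost is the origin


def announce(site: str, prefix: str, transit_asn: int, prepends: int = 0) -> Announcement:
    """Build the AS path a remote network would see via `transit_asn`."""
    return Announcement(site, prefix, (transit_asn,) + (OUR_ASN,) * (1 + prepends))


def best_path(routes: list[Announcement]) -> Optional[Announcement]:
    """Shortest AS path wins; None if every site has withdrawn the prefix."""
    return min(routes, key=lambda r: len(r.as_path), default=None)


if __name__ == "__main__":
    prefix = "192.0.2.0/24"
    primary = announce("us-west", prefix, transit_asn=64500)
    backup = announce("munich", prefix, transit_asn=64501, prepends=2)

    print(best_path([primary, backup]).site)  # us-west: path length 2 vs 4
    print(best_path([backup]).site)           # munich: primary withdrew its route
```

The appeal is that failover requires no DNS changes or client awareness: once the primary withdraws, routers elsewhere converge on the surviving announcement.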

Given that users hit our APIs from all around the world, it would be nice to operate in three distinct geographical regions. We started the transition with one US and one European location: the US site because it was easiest to live within driving distance of a fiber-dense facility, and Munich to simplify some GDPR processing requirements. We’ve also already vetted locations in Southeast Asia and Djibouti, both of which serve as critical submarine cable hubs.

IP addresses are not real and everything is a mirage.

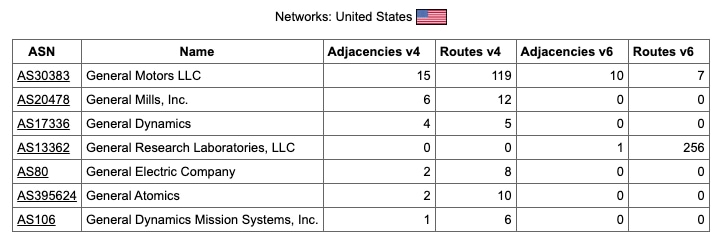

Future networking posts will cover this subject in more detail, but I would like to maintain relationships with a diverse set of Regional Internet Registries (RIRs). We’re trying to acquire block space ethically, with the goal of holding an IPv4 and an IPv6 block from at least three RIRs. The neo-colonialist Lu Heng 10 has set an extractivist policy that General Research does not want to fund, as he openly exploits African IP space. Purchasing clean IPv4 block space is acceptable to us, and we’re currently on the ARIN IPv4 waiting list.
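The size of a block decides whether it is useful as a standalone announcement. Here is a minimal sketch using Python’s standard `ipaddress` module. The /24 (IPv4) and /48 (IPv6) cutoffs are widely used operator filtering conventions, not RIR policy, and the example prefixes are reserved documentation ranges rather than anything we hold.

```python
# Sketch: how many addresses a block holds and whether it is large enough to
# be announced as its own route. The /24 and /48 cutoffs are common operator
# filtering conventions (assumed here), not RIR policy.
import ipaddress

LONGEST_ACCEPTED_PREFIX = {4: 24, 6: 48}


def block_summary(cidr: str) -> tuple[int, bool]:
    """Return (address count, announceable on its own?) for a CIDR block."""
    net = ipaddress.ip_network(cidr)  # raises ValueError if host bits are set
    return net.num_addresses, net.prefixlen <= LONGEST_ACCEPTED_PREFIX[net.version]


if __name__ == "__main__":
    for block in ("198.51.100.0/24", "192.0.2.0/25", "2001:db8::/48", "2001:db8::/56"):
        count, announceable = block_summary(block)
        print(f"{block:>16}: {count} addresses, announceable={announceable}")
```

The contrast explains the waiting list: the smallest broadly routable IPv4 block holds 256 addresses, while the equivalent IPv6 block holds 2^80.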

A humble abode

Selecting a datacenter is more complicated than finding an ideal geographical location, because you’re essentially selecting a landlord: a private company whose terms of service you must abide by. When evaluating options, I start with the Tier-1 ISPs and Internet Exchanges present in the facility. Beyond that, I weigh the financial stability 11 of the operator, floor weight loads, power grid sources, days of fuel autonomy, physical security, and more. Less obvious factors I’ve learned to account for are loading dock access, shipping and receiving procedures, and whether there is a parent lease on the building (common for subdivided buildings in urban centers). We’re a small team, so it’s critical to know that deliveries can arrive and be held until every part for an installation is there before I fly into a city to install. Likewise, being able to keep cold spare parts on standby at the facility, ready to be swapped or installed by smart hands in an emergency, provides a lot of reassurance.
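One way to keep these criteria honest is to separate hard requirements from preferences. This is a hypothetical scorecard: the field names, the 2-day fuel minimum, and the cabinet load figure are placeholder assumptions, not numbers from any facility we evaluated.

```python
# Hypothetical facility scorecard based on the criteria above. Field names,
# minimums, and sample values are illustrative assumptions only.
from dataclasses import dataclass


@dataclass
class Facility:
    name: str
    tier1_isps: int            # Tier-1 transit providers present in the building
    internet_exchanges: int    # IXes reachable in the building
    fuel_autonomy_days: float  # generator runtime without refueling
    floor_load_kg_m2: int      # rated floor load
    holds_deliveries: bool     # receiving holds shipments until install day
    stores_cold_spares: bool   # smart hands can swap parts from on-site spares
    parent_lease: bool         # building is subleased from another landlord


def disqualifiers(f: Facility, cabinet_load_kg_m2: int, min_fuel_days: float = 2.0) -> list[str]:
    """Hard requirements for a small remote team; returns reasons to reject."""
    reasons = []
    if not f.holds_deliveries:
        reasons.append("cannot hold deliveries until install day")
    if not f.stores_cold_spares:
        reasons.append("no on-site storage for cold spares")
    if f.floor_load_kg_m2 < cabinet_load_kg_m2:
        reasons.append("floor rated below our loaded cabinet")
    if f.fuel_autonomy_days < min_fuel_days:
        reasons.append("insufficient fuel autonomy")
    return reasons


def rank(facilities: list[Facility], cabinet_load_kg_m2: int) -> list[Facility]:
    """Drop disqualified sites, then prefer more on-net connectivity.

    A parent lease is not disqualifying here, but it sorts a site lower.
    """
    eligible = [f for f in facilities if not disqualifiers(f, cabinet_load_kg_m2)]
    return sorted(
        eligible,
        key=lambda f: (f.parent_lease, -f.tier1_isps, -f.internet_exchanges, -f.fuel_autonomy_days),
    )
```

Treating delivery holds and spare storage as pass/fail reflects the small-team constraint above. Connectivity only breaks ties among sites that already clear those bars.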

The two biggest players are Equinix and Digital Realty. To satisfy the challenge with my friend, the goal is to lease physical space from three distinct datacenter REITs. We’ve proudly based our main location with Hurricane Electric for all of the reasons above, but also for their IPv6 network and their controversial stance towards protecting minorities and disabled individuals from organized violence 12.

Plug in and breathe out

Networking was the most difficult part of migrating off the cloud and has remained the most important, full stop. I ran OpenWrt when it came out and would go wardriving with my friends as soon as I got a license… that doesn’t mean I knew how to start or run an ISP 20 years later. Software engineering calls for a remarkably different set of skills from network engineering. I want to personally thank Trimarchi Manuele for some extremely insightful conversations during this process; please hire him if you have any networking design and implementation challenges to work through.

“The progression of today’s college student is to jettison every interest except one. And within that one, to continually narrow the focus, learning more and more about less and less.” (Liz Coleman, A Call to Reinvent Liberal Arts Education)

A cabinet or room in a datacenter can have multiple “WAN connections” going into it, each of which is essentially a transit line to a different ISP. In our case, a connection arrives as a singlemode fiber cable terminated in a 1310nm SFP module with an LC connector. You then start an eBGP session to advertise your IP address space out into the world. This is a separate concept from paying for the transit itself, and an organization must register with an RIR before it can do so. For example, our Autonomous System Number (ASN) is AS13362, which lets us advertise IP blocks we hold ourselves rather than leasing addresses from a provider. Once peering is set up, the craft of the network engineer becomes more of an art than a science: inbound and outbound traffic levels can be monitored and routed to find the path of least resistance. If the transition off the cloud is partial or done in steps, you can even make direct connections to hyperscalers 13.
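On the outbound side, “finding the path of least resistance” is largely about how the router picks among routes learned from several transit providers. This simplified sketch models two of the best-known BGP decision steps: highest LOCAL_PREF wins, then the shortest AS path. Real implementations (RouterOS, Junos, Cisco IOS) apply further tie-breakers that are omitted here. The transit names, ASNs (RFC 5398 documentation range), and prefix (RFC 5737) are hypothetical.

```python
# Simplified sketch of two BGP best-path steps for outbound traffic:
# highest LOCAL_PREF first, then the shortest AS path. Later tie-breakers
# (origin, MED, eBGP vs iBGP, IGP cost, router ID) are replaced here by the
# transit name purely to keep the result deterministic.
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Route:
    prefix: str
    transit: str
    as_path: tuple[int, ...]
    local_pref: int = 100  # a common default LOCAL_PREF


def best_route(routes: list[Route]) -> Route:
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path), r.transit))


if __name__ == "__main__":
    via_a = Route("203.0.113.0/24", "transit-a", (64500, 64510, 64511))
    via_b = Route("203.0.113.0/24", "transit-b", (64501, 64511))
    print(best_route([via_a, via_b]).transit)  # transit-b: shorter AS path

    # Operator policy: steer traffic to transit-a by raising its LOCAL_PREF.
    print(best_route([replace(via_a, local_pref=200), via_b]).transit)  # transit-a
```

LOCAL_PREF is set by your own routers, which makes it the main lever for outbound traffic. Inbound traffic depends on other networks’ decisions, so you can only influence it indirectly, for example with the prepending shown earlier in this post.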

Likewise, you’ll need to familiarize yourself with Junos (Juniper / HPE), Cisco IOS, and RouterOS (MikroTik), then spend countless late nights figuring out why things don’t work as you test your network designs. The vendor lock-in and proprietary nature of networking equipment is sickening. Moving forward, we’ll discuss networking concepts largely in relation to the CCR2216-1G-12XS-2XQ, or whatever new flagship router MikroTik releases, as long as their routers remain free of vendor licensing requirements. These routers use a Marvell Prestera 98DX8525 Application-Specific Integrated Circuit (ASIC), which you can think of as a chip built to do one thing extremely well: forward network packets. Because the ASIC holds the forwarding rules in hardware, traffic offloaded to it can move at close to 100G line rate. Our conclusion was that it would be cheaper and better in nearly every way to simply buy multiple CCR2216-1G-12XS-2XQ units per location than to deal with HPE or Cisco sales representatives.
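A quick calculation shows why “line rate” calls for dedicated forwarding hardware. Every Ethernet frame carries 20 extra bytes on the wire: a 7-byte preamble, a 1-byte start-of-frame delimiter, and a 12-byte minimum inter-packet gap. This sketch computes the worst-case packet rate. It uses only standard Ethernet framing, not anything specific to the 98DX8525.

```python
# Back-of-the-envelope packet rates at a given link speed. Each Ethernet frame
# occupies 20 bytes on the wire beyond the frame itself (7-byte preamble,
# 1-byte start-of-frame delimiter, 12-byte minimum inter-packet gap).
PER_FRAME_OVERHEAD_BYTES = 20


def line_rate_pps(link_bps: float, frame_bytes: int) -> float:
    """Maximum frames per second a link can carry at the given frame size."""
    if frame_bytes < 64:
        raise ValueError("Ethernet frames are at least 64 bytes")
    return link_bps / ((frame_bytes + PER_FRAME_OVERHEAD_BYTES) * 8)


if __name__ == "__main__":
    for size in (64, 512, 1518):
        print(f"{size:>5} B frames: {line_rate_pps(100e9, size) / 1e6:7.2f} Mpps")
```

With minimum-size 64-byte frames, 100G works out to roughly 148.8 million packets per second, or about 6.7 ns per packet. That per-packet budget is why the forwarding decision is handed to an ASIC rather than a general-purpose CPU.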