In this post, we're going to try and discover how LLMs can emulate the first and second musketeers of communication. If you haven’t already, I’d recommend a read of Part 1 of this series. It’s a short article and shouldn’t take over 2-3 minutes to read through.

Before jumping into anything else, let’s start with three simple questions.

How are the words happy and joy related?

How are the words happy and sad related?

How are the words happy and cat related?

Here’s Tom with his interpretation of these words:

It doesn’t take a genius to understand that:

The words happy and joy have very similar meanings:

\(\text{Happy} \approx \text{Joy}\)

And that happy and sad are opposites:

\(\text{Happy} = - \text{Sad}\)

And that cat is completely different from either happy or sad:

\(\{\text{Happy, Sad}\} \neq \text{Cat}\)

As humans, this intuition is built over years of understanding and using language. But how do computers build an understanding of the semantics behind each word?

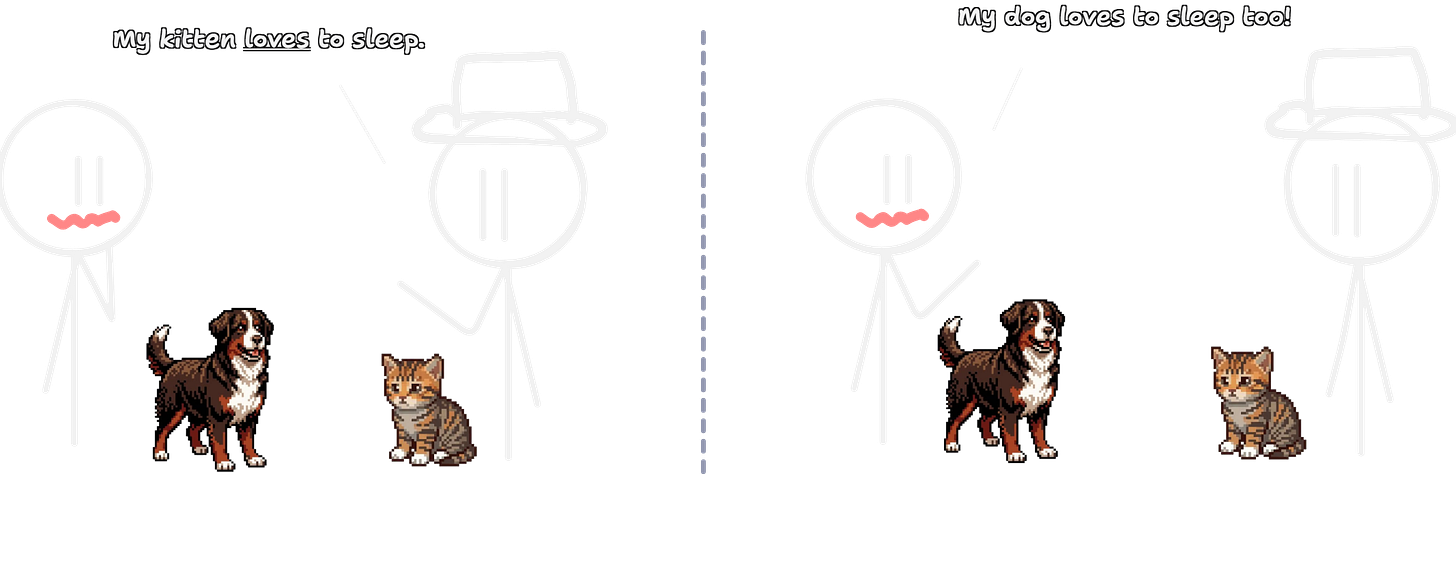

Tom and Hank were recently having a conversation about their pets.

Imagine you knew nothing about what the words kitten or dog meant. Even so, from context alone, you could infer that these two words are similar, or at least that they belong to the same category.

\(\underline{\text{kitten}} \text{ and } \underline{\text{dog}} \text{ are used interchangeably}\newline \implies \text{same category (pets)}\)

Now look at these two sentences:

Tom felt happy after getting a promotion.

Tom felt joy after getting a promotion.

Specifically, focus on the words happy and joy. In this context, the two are interchangeable. Though they might have different specific meanings, the fact that they are interchangeable somehow suggests that they must be similar!

Two words, despite being made up of completely different letters, tend to have similar meanings if they appear in similar contexts.

This very concept, that similar words are usually interchangeable, gives rise to a simple way to find the semantic relation between different words.

"You shall know a word by the company it keeps." (J. R. Firth, 1957)

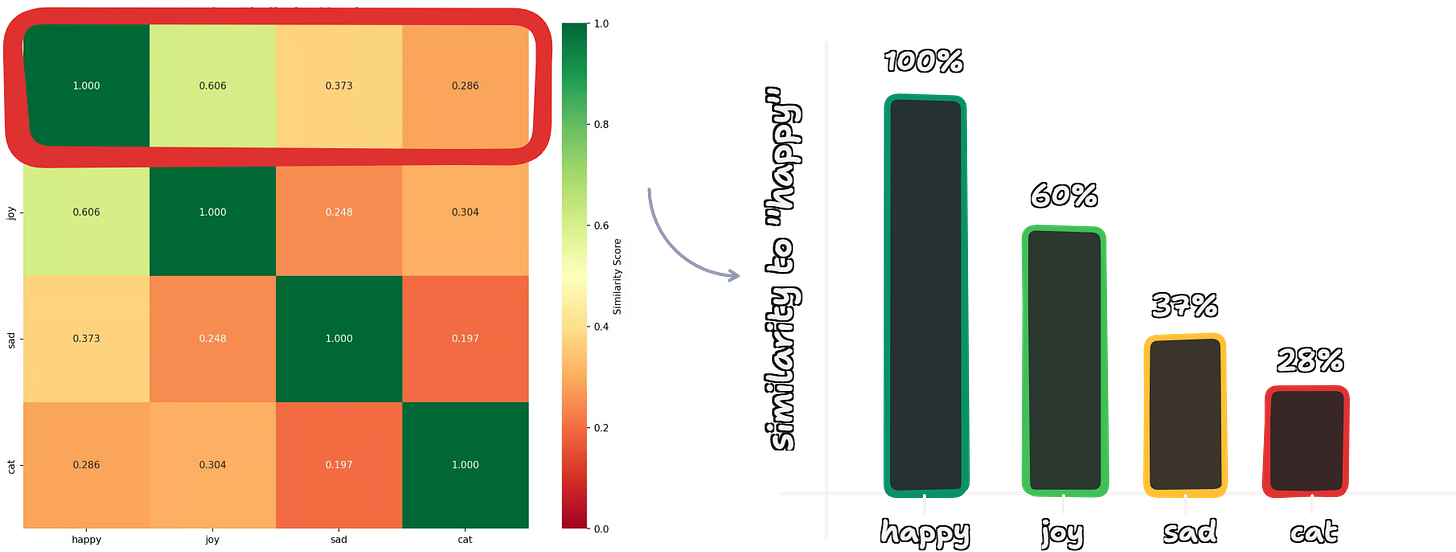

In fact, from a couple of thousand sentences, it becomes almost trivial to see how closely related happy and joy are, or how different happy and sad are.
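To make the "company it keeps" idea concrete, here is a minimal sketch that counts which words show up near a target word and then measures how much two words' neighbourhoods overlap. The tiny corpus, the window size, and the helper names `context_counts` and `overlap` are all assumptions made up for illustration; real embedding models learn from far more text and use far more sophisticated training than simple counting.

```python
from collections import Counter

# A tiny, made-up corpus (an assumption for illustration only).
sentences = [
    "tom felt happy after getting a promotion",
    "tom felt joy after getting a promotion",
    "hank felt happy after the game",
    "hank felt joy after the game",
    "the cat sat on the mat",
    "the cat chased the mouse",
]

def context_counts(target, sentences, window=2):
    """Count the words that appear within `window` positions of `target`."""
    counts = Counter()
    for sentence in sentences:
        words = sentence.split()
        for i, word in enumerate(words):
            if word != target:
                continue
            lo = max(0, i - window)
            hi = min(len(words), i + window + 1)
            # Add every neighbour in the window except the target itself.
            counts.update(words[j] for j in range(lo, hi) if j != i)
    return counts

def overlap(a, b):
    """Jaccard overlap between the sets of context words of `a` and `b`."""
    ctx_a = set(context_counts(a, sentences))
    ctx_b = set(context_counts(b, sentences))
    return len(ctx_a & ctx_b) / len(ctx_a | ctx_b)

print("happy vs joy:", overlap("happy", "joy"))
print("happy vs cat:", overlap("happy", "cat"))
```

In this toy corpus, happy and joy keep exactly the same company, so their overlap is much higher than that of happy and cat, which share only the word "the".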

Over hundreds of thousands of sentences from various sources, models build a solid understanding of how different words are related to each other. The models that specialize in learning only this semantic understanding are called embedding models.

Internally, these models represent each word as a long list of numbers called an embedding (hence the name). Each word gets its own unique list. The idea is that similar words will have lists of numbers with similar values.

You can play around with real-world embedding models in this online playground to visualize how they relate different words.
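One common way to compare two such lists is cosine similarity, which is close to 1 when the lists point in the same direction, close to -1 when they point in opposite directions, and close to 0 when they are unrelated. Here is a minimal sketch; the three-number vectors are hand-picked, hypothetical values chosen to mirror the happy, joy, sad, and cat intuition from earlier, not the output of any real model.

```python
import math

# Hand-picked 3-number "embeddings" (hypothetical values; real models learn
# hundreds or thousands of numbers per word).
embeddings = {
    "happy": [0.9, 0.8, 0.1],
    "joy":   [0.8, 0.9, 0.2],
    "sad":   [-0.9, -0.7, 0.1],
    "cat":   [0.0, 0.1, 0.9],
}

def cosine_similarity(a, b):
    """Dot product of a and b divided by the product of their lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

for other in ["joy", "sad", "cat"]:
    score = cosine_similarity(embeddings["happy"], embeddings[other])
    print(f"happy vs {other}: {score:.2f}")
```

With these made-up numbers, happy and joy score close to 1, happy and sad score close to -1, and happy and cat land near 0.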

Keep in mind that though embeddings capture similarity, they do not necessarily capture meaning. Considering the words happy and joy, the model knows that they are similar; however, it doesn’t understand the meaning behind either of them.

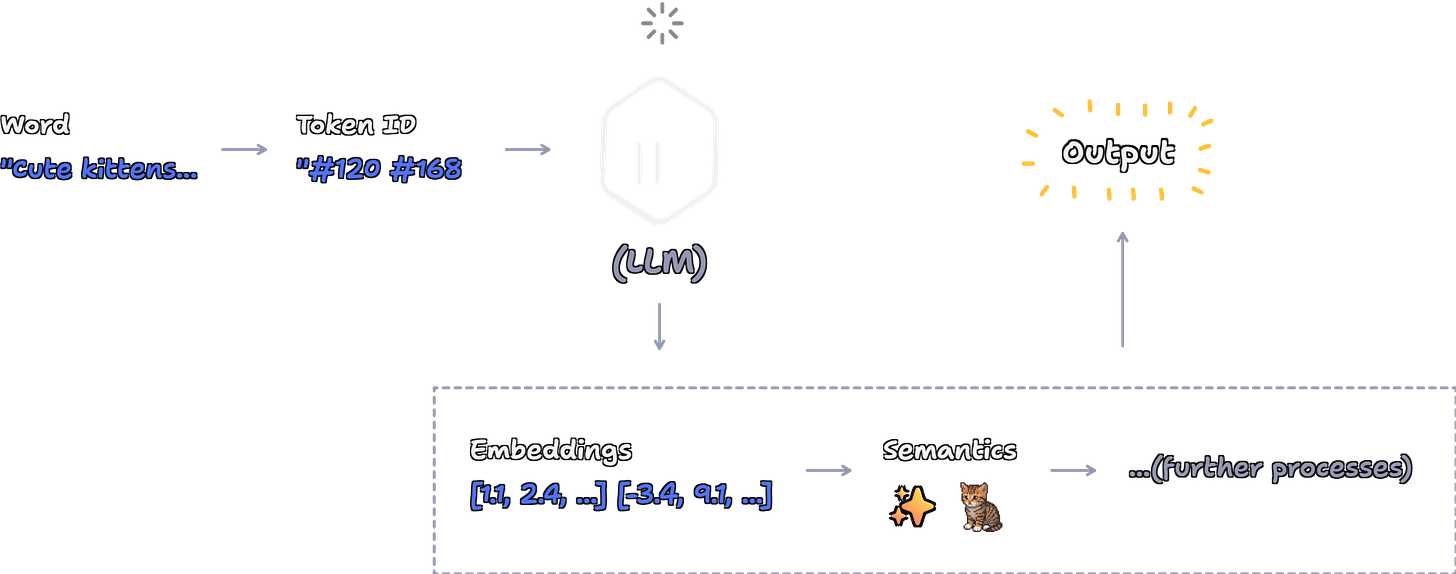

Embeddings are processed by LLMs in a different way to extract meaning from context. This is actually the third musketeer of communication and will be dealt with in a later post.

When you communicate with an LLM, though the model responds in English, that’s usually not what the model sees. Instead, the model sees something called tokens.

Tokens usually represent a word, part of a word, or a punctuation mark. Text is split up into tokens, and each token has an ID associated with it. Each LLM usually has its own embedding layer that converts token IDs to embeddings. This sequence of embeddings is what the LLM processes.

A tokenizer is a program that splits text into tokens and looks up the ID of each one.
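The text-to-tokens-to-embeddings pipeline can be sketched in a few lines. Everything here is an assumption for illustration: the six-entry vocabulary, the two-number embedding table, and the greedy longest-match splitting rule. Production tokenizers such as ChatGPT's use byte-pair encoding with vocabularies of many thousands of tokens, so treat this as a toy model of the idea rather than how any real tokenizer works.

```python
# Hypothetical vocabulary: token text -> token ID.
vocab = {"tom": 0, "felt": 1, "happy": 2, "un": 3, ".": 4, "<unk>": 5}

def tokenize(text):
    """Greedy longest-match split of lowercased text into known tokens."""
    tokens = []
    # Put a space before each full stop so it becomes its own piece.
    for word in text.lower().replace(".", " .").split():
        start = 0
        while start < len(word):
            # Try the longest remaining piece of the word that is in the vocabulary.
            for end in range(len(word), start, -1):
                if word[start:end] in vocab:
                    tokens.append(word[start:end])
                    start = end
                    break
            else:
                # Nothing matched: emit an "unknown" token and skip the rest.
                tokens.append("<unk>")
                start = len(word)
    return tokens

def encode(text):
    """Convert text into a list of token IDs."""
    return [vocab[token] for token in tokenize(text)]

# Hypothetical embedding table: row i is the embedding for token ID i.
embedding_table = [
    [0.1, 0.3], [0.2, -0.1], [0.9, 0.8], [-0.5, 0.4], [0.0, 0.0], [0.0, 0.0],
]

def embed(text):
    """Look up one embedding per token ID, as an embedding layer would."""
    return [embedding_table[token_id] for token_id in encode(text)]

print(tokenize("Tom felt unhappy."))
print(encode("Tom felt unhappy."))
print(embed("Tom felt unhappy."))
```

Notice that "unhappy" is not in the vocabulary, so it is split into the two known pieces "un" and "happy". This is why a token can be part of a word rather than a whole word.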

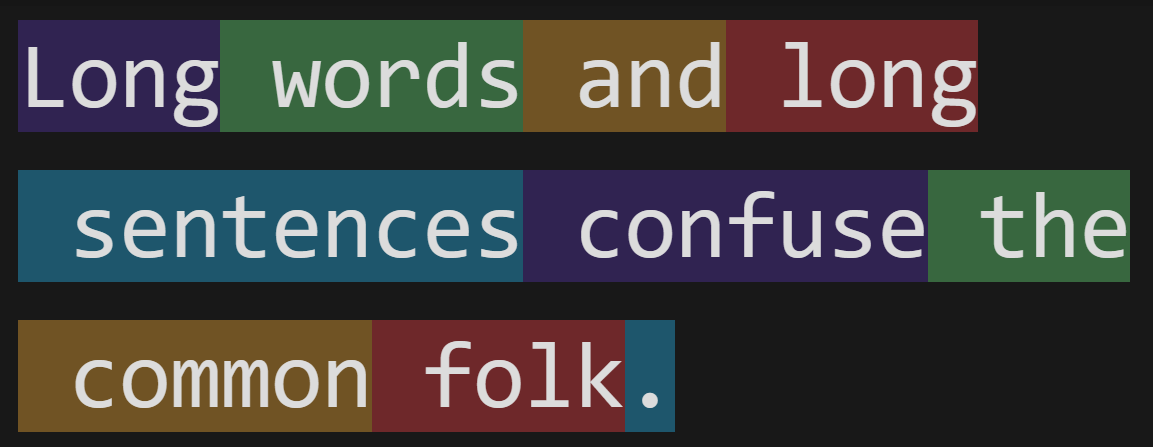

You can play around with the tokenizer used by ChatGPT here. Here’s an example, where each colour corresponds to a single unique token:

In this post, we looked at one of the most important principles of language: similar words occur in similar contexts. Models exploit this property to learn similarities between word semantics. This allows them to build an understanding of different words and how they relate to each other.

Next up: what exactly does the model do with these embeddings? If you have a guess, drop a comment below!

This post is a part of the “But How do LLMs Work?” series, designed to help you understand and discover LLMs through examples and intuition. If that’s something you’re interested in, I’d recommend subscribing!

If you don’t want to subscribe, that’s alright: share this post with a friend!

If you think kittens are cute, tell your friends about the (very) cute kitten in this post!