The COBOL apocalypse conversation this week has been useful, because it has forced the industry to confront something it has been avoiding for decades. But most of the coverage is stopping at the wrong point. Everyone is talking about COBOL. Nobody is talking about the architectural philosophy that COBOL gave birth to, the one that outlived the mainframe, survived the client server era, made it through the cloud revolution, and is still being sold to banks today with a straight face.

Core banking. The idea that you can package every conceivable banking function into a single platform, run it as a monolithic system, and call that an architecture. It was a reasonable compromise when banking was about cutting a cheque once a month and buying a house every twenty years. It is a completely useless approach to solving modern banking needs, and the fact that it has persisted this long is one of the most remarkable examples of institutional inertia in the history of enterprise technology.

This is a companion to my earlier article on the COBOL announcement that shook IBM’s stock price. That piece was about the death of COBOL as a moat. This one is about the death of the architectural philosophy that COBOL created, and why that second death is the one that actually matters.

1. Where Core Banking Came From

1.1 The Original Problem Was Real

To understand why core banking became so entrenched, you need to go back to where it started. The first computerised core banking systems emerged in the late 1960s and early 1970s, built in COBOL and running on IBM mainframes. The business problem they were solving was genuine and significant: banks had enormous volumes of transactions to process, they were doing it manually or with primitive automation, and they needed centralisation, speed, and reliability.

1.2 A Pragmatic Solution for a Narrow World

The solution was a single centralised computer that handled everything. Account management, transaction processing, interest calculation, fee charging, regulatory reporting, all of it in one place, in one codebase, with batch processing that ran overnight. Transactions were processed in groups at end of day because that was the technical reality of the hardware. Intraday balances required workarounds. The system was only accessible during banking hours. These were not design choices made out of laziness. They were pragmatic responses to the constraints of 1970s computing.

And it worked. For the banking reality of the 1970s, it worked extremely well. A customer visited one branch. They had a current account and perhaps a savings account. They wrote cheques. They took out a mortgage once in their adult life. The entire relationship was narrow, predictable, low volume, and slow moving. A batch processing system that updated balances overnight was entirely adequate for that world. The monolithic architecture made sense because the problem it was solving was genuinely monolithic.

1.3 When the Compromise Became the Convention

The architectural sin came later. It came when that original pragmatic compromise got packaged up, sold as a product, extended by vendors across decades, and eventually canonised as the correct way to build a bank’s technology. The compromise became the convention. The workaround became the standard. And by the time the banking world had changed beyond recognition, the core banking system had become too embedded, too expensive, and too complex to dislodge.

2. The Stuck Thought That Refuses to Die

2.1 How the Monolith Started to Fracture

By the 1980s and 1990s, banking had already changed enough that the monolithic core was showing its limitations. Banks were adding credit cards, mortgages, foreign exchange, investment products. Each of these added specialist systems, often with their own ledgers, their own data models, their own business logic. The monolith started to fracture, not by design but by accretion, as new modules were bolted onto an architecture that was never designed to accommodate them.

2.2 Vendors Responded by Building Bigger Monoliths

Vendors responded by building larger monoliths. Platforms such as Temenos, Oracle FLEXCUBE, Finacle, and SAP Banking attempted to consolidate the sprawl by packaging more and more functionality into a single system. The pitch was compelling: one vendor, one contract, one system of record, one throat to choke. For a generation of technology leaders who had lived through the nightmare of integrating dozens of incompatible specialist systems, the appeal was understandable.

2.3 The Cost of Change Became Prohibitive

But the packaging created a new problem. These systems were so comprehensive, so interconnected, and so deeply embedded in a bank’s operations that they became impossible to change without enormous risk and cost. Upgrading a core banking system became a multi year programme. Configuring a new product required navigating hundreds of interdependent parameters. Adding a feature that the vendor had not anticipated required either a costly customisation that would be deprecated in the next release, or a multi year wait for the vendor roadmap to catch up with the business need.

The result was that the tight coupling between product features and the core became a liability, while the complexity surrounding those systems kept growing. Banks found themselves in a situation where the cost of change was so high that they simply stopped changing. Instead they wrapped the core in middleware, built APIs around the edges, and told themselves that digital transformation was happening while the fundamental architecture underneath stayed frozen.

2.4 The Gap Between the Front Door and the Engine Room

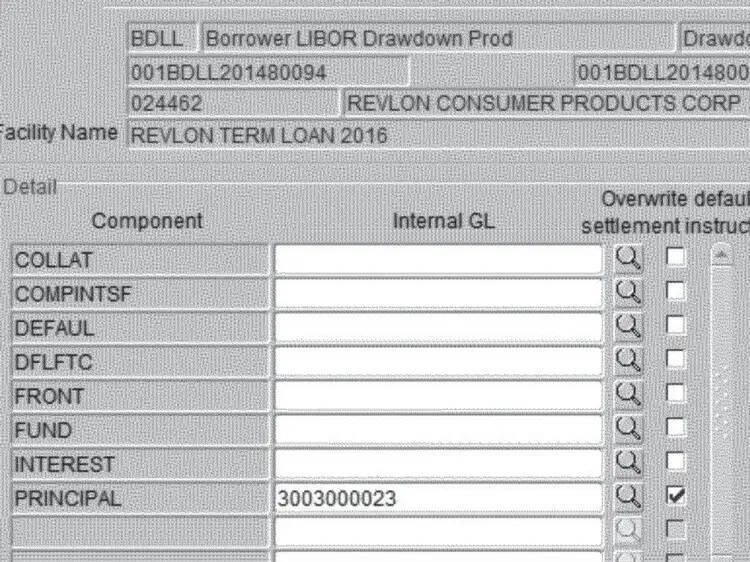

Look at the screenshot above. This is an Oracle FLEXCUBE drawdown screen, the kind of interface that bank operations staff use every day in institutions that run major corporate and syndicated lending books. It is not a screenshot from 1998. This is the actual class of interface that was active in Citibank’s operations in August 2020.

The screen is a form with unlabelled fields, cryptic component codes (COLLAT, COMPINTSF, DEFAUL, DFLFTC), checkbox columns with ambiguous headers, and no affordance whatsoever to indicate what selecting a given combination of options will actually do to real money. In the Citibank case, a contractor attempting to process a routine interest payment on the Revlon term loan instead initiated a full principal repayment of roughly $900 million, to the wrong counterparties, with no confirmation step capable of catching the error before it cleared. Citibank recovered most of it after a long legal battle. They did not recover all of it.

This is not a UI design failure. It is an architectural one. The reason the FLEXCUBE interface looks the way it does is that it is trying to expose the full configurability of a system designed to handle thousands of product permutations across every banking function imaginable, through a single generalised screen. The monolith underneath has no concept of what a specific transaction is supposed to do in plain language terms. It has parameters. The operator maps parameters to intent. When that mapping is wrong, the transaction executes exactly as configured, not as intended.

A domain driven architecture inverts this. A payments domain knows what a principal repayment is. It has a specific workflow for authorising it. It has explicit confirmation gates appropriate to the size and type of transaction. It cannot be accidentally triggered by checking the wrong box on a generalised parameter screen because the operation exists as a named, typed, validated function rather than as a configuration state. The modern app on the customer’s phone and the modern interface on the operator’s screen share the same design philosophy because they are both built on top of systems that understand what they are doing. The engine room matches the front door.
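To make "a named, typed, validated function" concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `LoanPayment` type, the `LARGE_AMOUNT` threshold, and the approval counts are invented for illustration and do not describe any vendor's or bank's actual controls. The point is only that the kind of payment is an explicit value the system can reason about, so the confirmation gate depends on what the operation is rather than on which boxes were ticked.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum


class PaymentKind(Enum):
    INTEREST = "interest"
    PRINCIPAL_REPAYMENT = "principal_repayment"


@dataclass(frozen=True)
class LoanPayment:
    loan_id: str
    kind: PaymentKind          # the operation is named, not inferred from flags
    amount: Decimal
    approvals: frozenset[str] = frozenset()  # distinct approver ids


class ApprovalRequired(Exception):
    """Raised when a payment lacks the sign-offs its kind and size demand."""


# Hypothetical policy threshold, chosen only for this example.
LARGE_AMOUNT = Decimal("1000000")


def required_approvals(payment: LoanPayment) -> int:
    # Principal repayments get a stricter gate than interest payments,
    # and large principal repayments a stricter gate still.
    if payment.kind is PaymentKind.PRINCIPAL_REPAYMENT:
        return 3 if payment.amount >= LARGE_AMOUNT else 2
    return 1


def authorise(payment: LoanPayment) -> str:
    if payment.amount <= 0:
        raise ValueError("amount must be positive")
    needed = required_approvals(payment)
    if len(payment.approvals) < needed:
        raise ApprovalRequired(
            f"{payment.kind.value} of {payment.amount} needs {needed} "
            f"approvals, has {len(payment.approvals)}"
        )
    return f"authorised {payment.kind.value} on {payment.loan_id}"
```

With this shape, an operator who intends an interest payment constructs a `LoanPayment` with `PaymentKind.INTEREST`; turning it into a principal repayment requires saying so explicitly, and that in turn triggers a different gate.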

The FLEXCUBE screenshot is not an embarrassing historical artefact. Banks running Oracle FLEXCUBE, Temenos T24, and Finastra Fusion are operating interfaces like this today, in production, across their most sensitive wholesale and retail operations. The Citibank incident was the moment the industry glimpsed what operational risk looks like when the complexity of a monolith is projected directly onto the people responsible for operating it.

3. Why the Monolith Cannot Serve Modern Banking

3.1 Modern Banking Has Different Problems

The problems modern banking needs to solve are completely different from the problems of 1970. A customer today might interact with their bank hundreds of times a month, not once. They expect real time balances, instant payments, instant lending decisions, personalised product recommendations, seamless integration with third party services, and the ability to open a new product in under two minutes. They expect the bank to know them across every product and every channel simultaneously. They expect changes to the product to happen in days, not years.

None of these requirements can be met by a batch processing system designed to update balances overnight. None of them are well served by a monolith where changing one component requires testing the entire system. None of them benefit from packaging every banking function into a single platform that can only scale vertically and can only be deployed as a whole.

3.2 The Monolith Cannot Serve Different Masters

The reason this distinction matters is not academic. When you force domains with fundamentally different characteristics into a single architectural model, you end up optimising for none of them and constraining all of them.

Payments needs to scale horizontally and instantly. On a major public holiday, payment volumes can spike to ten times normal load with almost no warning. In a monolithic core, scaling payments means scaling everything: the lending engine, the regulatory reporting module, the customer identity system, all of it, because they share infrastructure, share databases, and share deployment pipelines. You are paying to scale components that do not need to scale because the architecture cannot distinguish between them. And when the scaling event ends, you cannot scale down selectively either.

Lending has the opposite problem. A lending decision engine benefits from rich customer data, complex scoring models, and the ability to iterate rapidly on decision logic as credit conditions change. In a monolithic core, changing the lending decision model requires a change to the core system. That means a change freeze, a full regression test cycle across every function the monolith owns, a release management process designed for a system where everything is coupled to everything else. A lender who wants to tighten credit criteria in response to a deteriorating macroeconomic signal cannot do it in a day. They wait for the next release window.

Regulatory reporting needs something different again: a complete, immutable, auditable record of every state change in the system, queryable across arbitrary time ranges, accurate to the transaction. A monolith that was designed for operational speed is not designed for this. The data model optimised for processing transactions is rarely the data model optimised for reconstructing the history of those transactions for a regulator. Banks running monolithic cores typically solve this by building a separate reporting warehouse that ingests data from the core and tries to reconstruct an audit trail after the fact. That warehouse is always slightly wrong and everyone knows it.

Three domains, three completely different technical requirements, one architecture serving all of them badly. That is not a coincidence. It is what happens when the architecture is selected for comprehensiveness rather than fit.
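The regulatory reporting requirement described above (an immutable record of every state change, queryable across time) can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production ledger: the `Ledger` and `LedgerEntry` names are hypothetical, storage is an in-memory list, and amounts are integers in minor units.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class LedgerEntry:
    at: datetime
    account: str
    delta_minor: int   # signed amount in minor units (e.g. pence)
    reason: str


class Ledger:
    """Append-only: entries are added, never updated or deleted."""

    def __init__(self) -> None:
        self._entries: list[LedgerEntry] = []

    def append(self, entry: LedgerEntry) -> None:
        # Reject out-of-order writes so the log stays a faithful timeline.
        if self._entries and entry.at < self._entries[-1].at:
            raise ValueError("entries must be appended in time order")
        self._entries.append(entry)

    def balance_as_of(self, account: str, moment: datetime) -> int:
        # The balance at any past moment is derived by replaying the log.
        return sum(
            e.delta_minor
            for e in self._entries
            if e.account == account and e.at <= moment
        )

    def history(self, account: str, start: datetime, end: datetime) -> list[LedgerEntry]:
        return [
            e for e in self._entries
            if e.account == account and start <= e.at <= end
        ]
```

Because balances are derived from the log rather than stored and overwritten, the audit trail is the operational record itself instead of something reconstructed after the fact.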

3.3 What Domain Driven Architecture Actually Enables

What serves these requirements is a domain driven architecture where payments is a domain, lending is a domain, identity is a domain, notifications is a domain, and each of these domains owns its own data, exposes its own APIs, publishes its own events, and can be scaled, deployed, and changed independently of every other domain. When the payments domain needs to handle ten times the usual volume on a public holiday, it scales without touching the lending domain. When the product team wants to iterate on the lending decision engine, they do it without a change freeze on the rest of the bank. When regulatory requirements change the way identity must be handled, that change is contained to the identity domain rather than rippling through a monolith in unpredictable ways.

A domain driven architecture treats each of these as an independently deployable unit with clear ownership and explicit interfaces. Domains talk to each other by publishing events that other domains can consume, or by exposing APIs that other domains can call. They do not share databases. They do not share code. They own their own data and are responsible for keeping it consistent. When a domain changes, it publishes a new event schema or a new API version, and the downstream consumers can upgrade on their own schedule.
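As a rough illustration of domains communicating through versioned events, the sketch below uses an in-process `EventBus` as a stand-in for a real message broker. The event name `payment.settled`, the payload fields, and the two schema versions are all assumptions made up for this example. It shows the payments domain publishing an old and a new schema side by side, so that each consumer can upgrade on its own schedule.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Event:
    name: str       # e.g. "payment.settled"
    version: int    # schema version of the payload
    payload: dict


class EventBus:
    """In-process stand-in for a message broker; illustrative only."""

    def __init__(self) -> None:
        self._handlers: dict[tuple[str, int], list[Callable[[Event], None]]] = {}

    def subscribe(self, name: str, version: int,
                  handler: Callable[[Event], None]) -> None:
        self._handlers.setdefault((name, version), []).append(handler)

    def publish(self, event: Event) -> None:
        # Consumers only receive the schema version they subscribed to.
        for handler in self._handlers.get((event.name, event.version), []):
            handler(event)


def publish_payment_settled(bus: EventBus, payment_id: str,
                            amount_minor: int, currency: str) -> None:
    # v1 is the older shape; v2 adds an explicit currency.
    # Both are published during the migration window.
    bus.publish(Event("payment.settled", 1,
                      {"payment_id": payment_id, "amount": amount_minor}))
    bus.publish(Event("payment.settled", 2,
                      {"payment_id": payment_id,
                       "amount_minor": amount_minor,
                       "currency": currency}))


# Two downstream domains on different schema versions.
lending_seen: list[int] = []
notifications_seen: list[str] = []

bus = EventBus()
bus.subscribe("payment.settled", 1,
              lambda e: lending_seen.append(e.payload["amount"]))
bus.subscribe("payment.settled", 2,
              lambda e: notifications_seen.append(
                  f'{e.payload["amount_minor"]} {e.payload["currency"]}'))

publish_payment_settled(bus, "p-1", 12500, "GBP")
```

Neither consumer reads the payments domain's database; each sees only the event contract it signed up for.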

3.4 The Clean Sheet Answer

Here is the most telling evidence that core banking as an architectural philosophy is obsolete: virtually every new bank built in the last decade has explicitly rejected it.

Monzo and Starling built around domain driven design and event driven architecture from the ground up. They did not choose this approach because it was fashionable. They chose it because when you are starting from a clean sheet, building a monolithic core banking system is obviously the wrong answer to the problems you are actually trying to solve. The pattern works. It has been proven at scale. The only remaining argument for the monolithic core is switching cost and organisational inertia, and those are not architectural arguments.

4. The Vendor Trap

4.1 The Proposition and Its Hidden Costs

The core banking vendor market deserves its own examination, because it has been one of the primary mechanisms through which the stuck thought has perpetuated itself.

The major core banking vendors have built extraordinarily successful businesses on a straightforward proposition: banking is too complex for you to build yourself, so buy our platform and we will handle the complexity for you. For smaller and mid tier banks without large technology organisations, this proposition was often correct. The cost of building and maintaining a custom core was prohibitive, and the vendor platform, for all its limitations, was more reliable than what the bank could build in house.

But the proposition came with hidden costs that only became apparent over time. Implementation took years. Customisation was expensive and fragile. Upgrades required the kind of programme management that consumed entire technology departments. The vendor roadmap moved at the vendor’s pace, not the bank’s.

4.2 The Dependency Deepens Over Time

Most critically, the more deeply a bank embedded itself in a vendor’s platform, the more expensive it became to ever leave. This is the architectural equivalent of the MIPS pricing problem. Just as MIPS pricing gave IBM leverage over every new workload a bank wanted to run, core banking vendor contracts give those vendors leverage over every new product a bank wants to launch. The bank becomes dependent not just on the platform but on the vendor’s interpretation of what banking should look like, what products should be possible, what data models should exist. The vendor’s architecture becomes the bank’s architecture by default, and the bank’s ability to differentiate on technology becomes increasingly constrained.

4.3 The Complexity Moat Is by Design

The vendors know this. Their licensing models, their implementation dependencies, their proprietary data formats are all optimised to make the cost of leaving feel higher than the cost of staying. The limitations of the packaged approach have become undeniable but the switching costs have made change feel impossible. It is a very sophisticated form of the same complexity moat that COBOL built around the mainframe.

5. The Question Nobody in the Room Ever Asked

5.1 The Wrong Question, Asked Expensively

Here is what continues to baffle me about the generation of banking technology leaders who ran these programmes. Somewhere in every one of those core banking replacement journeys, there was a room full of smart people, expensive people, people with decades of enterprise software experience, and collectively they convinced themselves that the central question they needed to answer was: which vendor can replace my current mess with a different vendor’s mess?

5.2 The Requirements Document as a Form of Self Harm

Think about what that process actually looked like. A bank assembles a requirements document. That requirements document runs to hundreds of pages. It covers every feature, every workflow, every edge case, every regulatory obligation, every reporting requirement, every integration point the current system handles, and then, for good measure, it adds everything the business has ever wanted but never got. The team spends months on it. Consultants bill handsomely for it. It becomes a definitive statement of what the bank wants from its technology for the next twenty years.

And at no point in that process does the penny drop that writing a twenty year feature wishlist for a monolithic vendor platform is itself a form of institutional self harm. The very act of producing that document is an admission that you have outsourced your architectural thinking to a sales catalogue. You are not designing a technology strategy. You are shopping.

5.3 The Quote Arrives and Nobody Asks the Hard Question

Then the quotes come in. The implementation is going to take three years, minimum. The risk profile is enormous. The cost is tens of millions of dollars before a single client sees any benefit. The programme will consume your best people, freeze your change pipeline, and create the kind of organisational stress that makes good engineers leave. And somewhere in that moment, in every single one of those programmes, someone should have stood up and asked the question that apparently nobody ever did: what is in this for our clients?

Not for the vendor. Not for the compliance team. Not for the CIO who wants a modern system on their CV. For the clients.

5.4 Better MIS Is Not Client Value

The honest answer to what the programme delivers for clients is, in almost every case: nothing they will ever see.

Better MIS reports. Slicker ETL. A compliance model that does not require a spreadsheet army to maintain. Internal dashboards that no longer require a PhD to operate. These are real improvements. They are not nothing. But they are improvements the client will never encounter, never feel, and never benefit from in any direct way.

The standard defence is that operational resilience is client value. That a bank which cannot see its own positions clearly will eventually harm its clients through failure, mis-selling, or collapse. That argument is not wrong. But it proves too little. Operational resilience justifies modernising your reporting layer. It does not justify a three year programme, tens of millions of dollars, and a change freeze across your entire product organisation, only to deliver the same products, at the same price, through the same channels, to clients who were unaware anything had changed.

The clients were going to get the same products, at the same price, with the same service model, through the same channels. The account was still going to be the account. The loan was still going to be the loan. The three year programme, the tens of millions of dollars, the organisational disruption: all of it was being spent on internal plumbing dressed up as transformation.

5.5 The Uncomfortable Question That Was Never Asked

This is what makes the entire core banking replacement era so difficult to defend in retrospect. The industry hired armies of technologists, built enormous internal capability, and then concluded that the highest and best use of that capability was to manage the procurement of vendor platforms. The talent was real. The investment was real. The output was a new set of vendor dependencies that looked marginally more modern than the old ones and came with an implementation trauma that took years to recover from.

If that talent had been directed at building domain capability instead of managing vendor relationships, the outcome would have been categorically different. But that would have required someone in the room to ask the uncomfortable question: why are we paying all these people if the answer is always to pay a vendor to do the actual work?

6. The Exquisite Pain of the Core Banking Upgrade

There is a particular kind of suffering in enterprise technology that has no equivalent elsewhere in the industry. It is the core banking upgrade. And if you have never lived through one, you cannot fully appreciate the combination of expense, duration, risk, and ultimate anticlimax that defines the experience.

A typical core banking upgrade programme runs three to five years. It consumes hundreds of millions of dollars when you account for implementation partners, internal resource, parallel running, testing infrastructure, and the inevitable scope creep that accompanies any programme of this complexity. It occupies the attention of the most senior technology leadership in the organisation for its entire duration. It generates a programme governance structure so elaborate that the governance itself becomes a full time job. It dominates board reporting, risk committee agendas, and regulator conversations for years at a stretch.

And then it goes live. And the very best outcome, the outcome the programme director dreams about, the outcome that gets celebrated with a quiet internal announcement and a cautious all staff email, is that nobody noticed. Not the customers. Not the operations teams. Not the regulators. The system behaves exactly as it did before, processes the same transactions, produces the same outputs, and the only visible change is that the version number in the admin console has incremented.

That is the success case. Three to five years. Hundreds of millions of dollars. New leadership, because the old CTO either burned out or was quietly moved on sometime around year two. And the headline achievement is: we did not break anything.

The business case that justified the programme spoke of future capability. Once on the new platform, the bank would be able to launch products faster, integrate with partners more easily, respond to regulatory changes with less pain, and unlock features from the vendor roadmap that the old system could not support. Some of those things materialise. Many of them do not, or materialise so slowly that the business opportunity they were meant to serve has already been captured by someone else.

Because here is the uncomfortable truth about the features you were going to unlock after the upgrade: if a customer wanted them badly enough, they left before you finished the programme. The customers who stayed either do not care about those features, have adapted their behaviour around their absence, or are locked in by switching costs of their own. You spent three years and a hundred million dollars catching up to a market position you should have held five years ago, in a world that has moved on since then.

The cruelest part is the competitive dynamics. When a major bank announces a core banking replacement programme, the correct response from every competitor is quiet celebration. Not because you wish them ill, but because you know what is coming. That bank is about to disappear into an internal programme for three to five years. Their best technology people will be consumed by the upgrade. Their ability to ship new products will be constrained by change freezes. Their senior leadership will be distracted. Their risk appetite will contract because nobody wants a major incident during a core migration. They will emerge on the other side with a swollen balance sheet, exhausted teams, and technology leadership that has largely turned over, and they will need another year just to rediscover what they were doing before the programme started. During their upgrade cycle the bank will have essentially gifted all of their competitors an uncontested market space.

This is the final indictment of the monolithic core banking model. It does not just constrain your architecture. It periodically forces you to consume enormous organisational energy in programmes whose best case outcome is standing still, while your competitors who made different architectural choices are shipping features every sprint. The upgrade treadmill is not an accident. It is a structural consequence of the architecture, and it will not end until the architecture changes.

7. The Replacement Trap

The question, then, is what to do about it. And this is where the industry has consistently chosen the wrong answer.

When a bank decides to replace its core banking system, it produces a requirements document. That document captures everything the current system does, everything the business has wanted but never received, and everything compliance has been asking for since the last major programme. It is a comprehensive statement of what the bank needs from its technology for the next twenty years. The selection process that follows evaluates vendors against that document. The winning vendor is the one whose platform covers the most requirements at an acceptable cost with a credible implementation track record.

Notice what has just happened. The bank has selected a new system based on its ability to replicate the functional footprint of the old one. The selection criterion is comprehensiveness. The winner is the most capable monolith available. The bank has spent eighteen months and several million dollars in consulting fees to procure a more modern version of the architectural problem it was trying to escape.

Ripping out one monolithic core and replacing it with another, even a genuinely more capable one, does not change the fundamental constraint. The constraint is not the age of the technology. The constraint is the architectural model: a single system that owns all the data, embeds all the business logic, and must be deployed, upgraded, and changed as a whole. A newer monolith has a cleaner codebase and a more modern API layer. It has exactly the same properties that will make it unmovable in fifteen years. You will be having this conversation again. The vendors know this. It is not a flaw in their business model. It is the business model.

The problem is not the technology. The problem is the decision to package every banking function into a single platform and call that an architecture. No amount of re-platforming resolves that decision. It only defers the reckoning.

7.1 The Strangler Fig Is the Only Approach That Consistently Works

The right approach is to strangle the core incrementally, building new capabilities on modern domain architecture alongside the existing system, migrating workloads progressively, and shrinking the footprint of the legacy core until it either becomes so small that replacement is trivial or its remaining functions are so well isolated that they can be maintained indefinitely without constraining everything else. This is the strangler fig pattern applied to banking, and it is the only migration approach that consistently produces good outcomes at acceptable risk.
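The mechanics of the strangler fig can be sketched as a routing facade that sits in front of the legacy core. The following Python is a simplified illustration with hypothetical names (`StranglerFacade`, `legacy_core`, `payments_domain`); a real migration would also need data synchronisation, reconciliation, and parallel running, none of which is shown here.

```python
from typing import Callable

Handler = Callable[[dict], str]


def legacy_core(request: dict) -> str:
    # Stand-in for a call into the existing core banking system.
    return f"legacy:{request['capability']}"


def payments_domain(request: dict) -> str:
    # Stand-in for a new, independently deployed payments service.
    return f"payments-domain:{request['capability']}"


class StranglerFacade:
    """Routes each capability to a new domain once migrated, else to legacy."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: dict[str, Handler] = {}

    def migrate(self, capability: str, handler: Handler) -> None:
        self._migrated[capability] = handler

    def rollback(self, capability: str) -> None:
        # Migration is reversible per capability, which is what keeps
        # the risk of each step small.
        self._migrated.pop(capability, None)

    def handle(self, request: dict) -> str:
        handler = self._migrated.get(request["capability"], self._legacy)
        return handler(request)

    def legacy_share(self, capabilities: list[str]) -> float:
        # Fraction of the listed capabilities still served by the legacy core.
        remaining = [c for c in capabilities if c not in self._migrated]
        return len(remaining) / len(capabilities)
```

Callers talk only to the facade, so each capability can move to its new domain (or back) without any caller changing, and `legacy_share` gives a crude measure of how far the core's footprint has shrunk.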

7.2 Why the Argument Keeps Getting Ignored

The reason this argument has been ignored for so long is partly organisational, partly commercial, and partly something more primitive than either.

The organisational problem is that incremental transformation is harder to fund and harder to explain to a board than a single big programme. A “core banking replacement” is a project. An “incremental domain migration” is a journey with no obvious end date, and boards are more comfortable writing cheques for projects than journeys. But that framing only explains the governance mechanics. It does not explain the appetite for it, the genuine enthusiasm that intelligent people bring to these programmes. That comes from somewhere else.

Everyone wants to be at the big reveal. The go live. The moment the new system takes over and the old one is switched off forever. There is a ribbon cutting energy to a major core banking replacement that a decade of quiet domain migration can never replicate. Careers are built around it. It has a name and a programme board and a war room and a launch date. It is the kind of thing you put at the top of your CV and describe in keynotes. The people running these programmes are not stupid and they are not cynical. They genuinely believe in the transformational moment. They want to be the ones who finally fixed it.

The problem is that technology is not a rehabilitation programme. You do not get ill, go to rehab, come out cured, and return to normal life. Banks that treat core banking replacement as rehab are back in the same room five years after go live, wondering why the new system is already calcifying, already accumulating the technical debt that made the old one unbearable, already generating the requirements document for the next replacement. The cycle is not a coincidence. It is what happens when you try to solve a continuous problem with a discrete event.

Technology change is an infinite game. There is no big reveal. There is no moment at which the architecture is finished and the organisation can declare victory and go home. The neobanks that built domain driven architectures did not do it in one programme. They did it continuously, deploying changes daily, evolving domain boundaries as the business changed, treating the architecture as a living thing that requires constant attention rather than a project that can be completed and closed. That is not a less exciting way to run technology. It is the only way that actually works.

The commercial dimension reinforces this dynamic, and it does not require conspiracy to explain. The vendors who benefit most from large replacement programmes are the same vendors with the most presence at industry events, the most investment in thought leadership, and the most seats at the tables where technology strategy gets discussed. They do not need to coordinate. They just need to keep showing up, sponsoring the conferences, funding the research, and making the case for the kind of programmes that happen to be good for their revenue model. A vendor whose business depends on large implementation programmes has no commercial incentive to sell you a philosophy of incremental continuous change. The big reveal is very good for their business. The infinite game is not. The result is an industry conversation that is shaped, without anyone necessarily intending it, by the organisations with the most to gain from the status quo.

8. The Objections That Miss the Point

8.1 The Wheelchair Argument

There is a class of objection to the domain migration argument that surfaces reliably in every serious conversation about replacing COBOL and core banking systems. It goes something like this: AI will never replicate the performance of those undocumented hand optimised assembly routines that the COBOL engineers wrote to extract maximum throughput from the mainframe. Therefore AI cannot replace COBOL.

This objection is technically accurate and entirely irrelevant. It is the equivalent of arguing that electric vehicles cannot replace combustion engines because an electric motor cannot replicate the precise combustion dynamics of a finely tuned V8. You are not trying to replicate the V8. You are trying to move people from one place to another, and electric motors do that better by most measures that actually matter.

The assembly optimisation objection assumes that the goal is to take the existing system and make AI run it faster. It is not. The argument in this article, and the argument the neobanks proved in practice, is that you do not start with a wheelchair and strap a rocket to it. You build something that was designed from the beginning to go fast. A domain built specifically to process payments at scale, running on modern hardware, with a data model designed for throughput rather than retrofitted from 1972, does not have the same performance constraints as a COBOL batch processor. It does not need to replicate the assembly tricks because it does not have the architectural problems those tricks were invented to solve.

The question is not whether AI can match the performance of a hand optimised mainframe routine. The question is whether a modern domain architecture can meet the actual performance requirements of a modern bank. The answer to the second question has been demonstrated conclusively. The answer to the first question is irrelevant to anything anyone is actually trying to build.

8.2 The CAP Theorem Objection

The other objection that follows closely is the distributed systems consistency argument, usually raised under the banner of the CAP theorem. It runs as follows: ACID transactions are impossible on distributed systems. You cannot have the same transactional guarantees across domain boundaries that you have inside a monolith. Therefore domain driven architecture cannot replace a core banking system.

This one has the additional quality of being technically outdated.

ACID compliance within a single domain is not meaningfully different from ACID compliance within a monolith, because a well designed domain owns its own data store and processes its own transactions with full consistency guarantees. The complexity arises at domain boundaries, in operations that span multiple domains, and this is where the objection has historically had some validity.

What the objection misses is that this is a solved architectural problem, and has been for years. Saga patterns manage distributed transactions across domain boundaries by breaking them into a sequence of local transactions with compensating transactions for rollback. Event sourcing provides an immutable audit log of every state change, giving you the historical consistency that regulators require without forcing every domain to share a database. Eventual consistency, applied correctly to the parts of a banking system where it is appropriate, is not a concession. It is a design choice that matches the actual consistency requirements of the operation in question.
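The saga mechanism described above can be sketched in a few lines. This is a minimal in-memory illustration, not a production design: `Saga`, `AccountsDomain`, and `transfer` are hypothetical names invented for the example, amounts are integer cents, and each `AccountsDomain` instance stands in for a separate domain that owns its own data store.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A step pairs a local transaction with the compensation that undoes it.
Step = Tuple[Callable[[], None], Callable[[], None]]


@dataclass
class Saga:
    """Runs local transactions in order; on failure, compensates in reverse."""
    steps: List[Step] = field(default_factory=list)

    def add_step(self, action: Callable[[], None],
                 compensation: Callable[[], None]) -> None:
        self.steps.append((action, compensation))

    def run(self) -> bool:
        completed: List[Callable[[], None]] = []
        for action, compensation in self.steps:
            try:
                action()
            except Exception:
                # Undo every step that already committed, newest first.
                for undo in reversed(completed):
                    undo()
                return False
            completed.append(compensation)
        return True


class AccountsDomain:
    """Hypothetical domain that owns its own balances (amounts in cents)."""

    def __init__(self, balances: Dict[str, int]) -> None:
        self.balances = dict(balances)

    def debit(self, account: str, amount: int) -> None:
        if self.balances[account] < amount:
            raise ValueError(f"insufficient funds in {account}")
        self.balances[account] -= amount

    def credit(self, account: str, amount: int) -> None:
        if account not in self.balances:
            raise KeyError(f"unknown account {account}")
        self.balances[account] += amount


def transfer(src: AccountsDomain, src_acct: str,
             dst: AccountsDomain, dst_acct: str, amount: int) -> bool:
    """Move money across two domains as a saga of two local transactions."""
    saga = Saga()
    saga.add_step(lambda: src.debit(src_acct, amount),
                  lambda: src.credit(src_acct, amount))
    saga.add_step(lambda: dst.credit(dst_acct, amount),
                  lambda: dst.debit(dst_acct, amount))
    return saga.run()
```

If the credit in the second domain fails, the debit in the first is reversed by its compensating transaction, so neither domain needs to share a database or a lock with the other. A real implementation would also need to persist saga state and retry compensations that themselves fail, which this sketch leaves out.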

Not every banking operation requires synchronous ACID consistency across the entire system. A payment confirmation can be issued once the payments domain has committed its local transaction and published its event, even before the downstream ledger domain has processed that event. The money has moved. The question is only how quickly all systems agree that it has moved, and a well engineered event pipeline can typically close that window to milliseconds.
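A rough sketch of that flow, under stated assumptions: `EventBus` is a hypothetical in-process queue standing in for a durable message broker, and `PaymentsDomain`, `LedgerDomain`, and `PaymentCommitted` are illustrative names, not anyone's actual implementation. The ledger handler is idempotent, so a redelivered event does not create a duplicate entry.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Dict, Iterator, List, Set


@dataclass(frozen=True)
class PaymentCommitted:
    """Immutable event recording a payment the payments domain has committed."""
    payment_id: str
    account: str
    amount_cents: int


class EventBus:
    """Hypothetical in-process queue; a real system would use a durable broker."""

    def __init__(self) -> None:
        self._queue: Deque[PaymentCommitted] = deque()

    def publish(self, event: PaymentCommitted) -> None:
        self._queue.append(event)

    def drain(self) -> Iterator[PaymentCommitted]:
        while self._queue:
            yield self._queue.popleft()


class PaymentsDomain:
    def __init__(self, bus: EventBus) -> None:
        self.bus = bus
        self.committed: Dict[str, PaymentCommitted] = {}

    def pay(self, payment_id: str, account: str, amount_cents: int) -> str:
        event = PaymentCommitted(payment_id, account, amount_cents)
        self.committed[payment_id] = event  # local commit in this domain's store
        self.bus.publish(event)             # downstream domains catch up later
        return f"confirmed:{payment_id}"    # confirmation does not wait for them


class LedgerDomain:
    def __init__(self) -> None:
        self.entries: List[PaymentCommitted] = []  # append-only log
        self._seen: Set[str] = set()

    def handle(self, event: PaymentCommitted) -> None:
        if event.payment_id in self._seen:  # idempotent: ignore redeliveries
            return
        self._seen.add(event.payment_id)
        self.entries.append(event)

    def total_for(self, account: str) -> int:
        return sum(e.amount_cents for e in self.entries if e.account == account)
```

Between `pay` returning and the ledger handling the event, the two domains briefly disagree; that interval is the consistency window discussed above. In a real system the local commit and the publish would need to be made atomic (for example with a transactional outbox), which this sketch assumes away.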

The banks that raise the CAP theorem objection as a reason not to migrate are not grappling with a genuine technical constraint. They are using a theoretical framework to avoid a difficult organisational decision. The constraint is not the theorem. The constraint is the willingness to make the transition.

8.3 The Dual-Book Problem

The objection here is that migrating away from the core forces a bank to run two books of record in parallel, with all the regulatory complexity that implies. You do not need to run two books. The migration strategy is not a lift-and-shift of the book of record. You leave the existing ledger exactly where it is and use its own APIs to post debits and credits while you incrementally extract business logic and products into separate domains. The core banking system temporarily becomes a dumb ledger, not something you have to replicate in parallel. The regulatory complexity argument collapses because you are not running two books. You are re-routing product logic while the same ledger of record continues to serve as the source of truth throughout. The UBS/Credit Suisse situation is a perfect illustration of what not to do: that was a forced, wholesale client migration across incompatible platforms under acquisition pressure. Domain extraction from a living system is a fundamentally different problem.
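To make the shape of that extraction concrete, here is a small sketch. Everything in it is an assumption for illustration: `LegacyCoreLedger` stands in for an adapter over whatever posting API the existing core exposes, and `MonthlyFeeDomain` is a hypothetical extracted product domain with an invented fee rule. The point is only that the product logic lives outside the core while every debit and credit still lands in the one ledger of record.

```python
from typing import List, Protocol, Tuple


class LedgerOfRecord(Protocol):
    """The only surface an extracted domain needs from the existing core."""

    def post(self, account: str, amount_cents: int, narrative: str) -> None: ...
    def balance(self, account: str) -> int: ...


class LegacyCoreLedger:
    """Hypothetical adapter; a real one would call the core's own posting API."""

    def __init__(self) -> None:
        self.postings: List[Tuple[str, int, str]] = []

    def post(self, account: str, amount_cents: int, narrative: str) -> None:
        self.postings.append((account, amount_cents, narrative))

    def balance(self, account: str) -> int:
        return sum(amt for acct, amt, _ in self.postings if acct == account)


class MonthlyFeeDomain:
    """Extracted product logic: owns the fee rules, holds no ledger of its own."""

    def __init__(self, ledger: LedgerOfRecord, fee_cents: int,
                 waiver_threshold_cents: int) -> None:
        self.ledger = ledger
        self.fee_cents = fee_cents
        self.waiver_threshold_cents = waiver_threshold_cents

    def charge(self, account: str) -> int:
        """Apply the fee rule and post the result to the ledger of record."""
        if self.ledger.balance(account) >= self.waiver_threshold_cents:
            return 0  # fee waived; nothing is posted
        self.ledger.post(account, -self.fee_cents, "monthly fee")
        return self.fee_cents
```

Because the domain depends only on the `LedgerOfRecord` interface, the fee rules can change and deploy independently of the core, and there is never a second book to reconcile.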

8.4 Neobanks Are Only Doing the Easy Parts

The last objection is that neobanks have only built the simple, high-volume products. Scale before complexity is rational product sequencing, not a ceiling. And neobanks are already tackling things that universal banks genuinely struggle with: buy now, pay later (BNPL), crypto custody and rails, advanced payment infrastructure, real-time cross-border settlement. These are not simple. They are just differently complex. Low-frequency, high-complexity products like commercial lending, mortgages, and investment banking are not being ignored; they are next. When neobanks get there, they will apply the same decomposed, domain driven architectural philosophy they have already proven at scale, which gives them a structural advantage over incumbents who have been bolting those products onto monolithic cores for decades.

9. The Moment That Changes the Calculation

9.1 AI Dissolves the “Understanding Is Too Expensive” Argument

The AI announcement that rattled IBM’s stock this week is relevant to this argument in a specific and limited way. The claim that AI can compress the COBOL analysis phase from months to weeks is also a claim that it can compress the domain decomposition analysis of a core banking system. The same capability that traces data flows and business logic dependencies across hundreds of thousands of lines of COBOL can produce the domain boundary map that has historically cost millions in consulting fees before a single line of new architecture is written.

This matters because one of the most durable objections to domain driven migration has been that understanding what the current system actually does is itself prohibitively expensive. Nobody alive knows the full behaviour of a system built over forty years. The documentation is wrong or missing. The institutional knowledge has retired. The only way to know what the monolith does is to run it and watch it, which means the analysis phase alone is a multi year programme before migration even begins. If AI genuinely compresses that phase by an order of magnitude, it removes the single most credible technical objection to starting.

The hard problems remain hard. Data migration, regulatory validation, parallel running, cutover risk, the organisational change management required to shift a bank’s operating model from vendor dependency to domain ownership — none of that gets easier because a language model can read COBOL faster. But the argument that the problem is too complex to even understand clearly has just become significantly less convincing.

9.2 The Honest Conversation Cannot Be Deferred Indefinitely

For technology leaders at established banks, the variables are now shifting in a way that makes continued deferral harder to justify. The neobanks have moved from proof of concept to proven at scale — Monzo and Starling are no longer experiments, they are operational competitors with cost structures and change velocities that the monolithic architecture cannot match. AI is reducing the cost of understanding the problem. And the regulatory environment in most major markets is moving toward open banking requirements that a monolithic core serves badly and a domain architecture serves naturally.

The question is not whether the transition to domain driven banking architecture will happen. The neobank evidence has settled that. The question is whether established banks will lead that transition or react to it after competitors have used it to take market position they cannot recover.

Every year of continued dependency on a monolithic core is a year in which the cost of the eventual transition grows, the competitive gap widens, and the argument for delay gets slightly weaker. The switching cost is real. It has always been real. But it is not a permanent barrier. It is a cost that compounds the longer it is avoided.

9.3 The Architecture Reflects the Belief

The deepest reason core banking persisted is not technical and not commercial. It is a belief, held by successive generations of banking technology leaders, that banking is too complex and too regulated to be built any other way. That belief was never correct. It was a rationalisation that the vendors reinforced because it was commercially useful to them, and that technology organisations accepted because it released them from the responsibility of building genuine architectural capability.

The neobanks disproved it. They built domain driven banks, at scale, under the same regulatory frameworks, with teams a fraction of the size of the technology organisations at major incumbents. They did not discover a secret. They simply refused to accept the premise that the only architecture available to a bank was the one the vendors were selling.

The monolithic core had its era. That era was the 1970s. The question worth asking now is not how to replace it with a better monolith. It is how to build a bank whose architecture reflects what banking has actually become, rather than what it was when the architecture was first designed.

Andrew Baker is Chief Information Officer at Capitec Bank. He writes about enterprise architecture, banking technology, and the future of financial services technology at andrewbaker.ninja.