
Introduction

Treat "forests" well. Not for the sake of nature, but for solving problems too!

Random Forest is one of the most versatile machine learning algorithms available today. With its built-in ensembling, building a decent model that generalizes well becomes much easier on many datasets. However, I've seen people use random forest as a black box; i.e., they don't understand what's happening beneath the code. They just code.

In fact, the easiest part of machine learning is coding. If you are new to machine learning, the random forest algorithm should be at your fingertips. Its ability to solve both regression and classification problems, along with its robustness to correlated features and its variable importance plot, gives us a good head start on a wide range of problems.

Most often, I've seen people confuse bagging with random forest. Do you know the difference?

In this article, I'll explain the complete concept of random forest and bagging. For ease of understanding, I've kept the explanation simple yet enriching. I've used the mlr and data.table packages in R to implement bagging and random forest with parameter tuning. You'll also learn the techniques I've used to improve model accuracy from ~82% to ~86%.

Table of Contents

- What is the Random Forest algorithm?

- How does it work? (Decision Tree, Random Forest)

- What is the difference between Bagging and Random Forest?

- Advantages and Disadvantages of Random Forest

- Solving a Problem

- Parameter Tuning in Random Forest

What is the Random Forest algorithm?

Random forest is a tree-based algorithm which involves building several trees (decision trees), then combining their output to improve generalization ability of the model. The method of combining trees is known as an ensemble method. Ensembling is nothing but a combination of weak learners (individual trees) to produce a strong learner.

Say you want to watch a movie, but you are uncertain of its reviews. You ask 10 people who have watched the movie, and 8 of them say "the movie is fantastic." Since the majority is in favor, you decide to watch the movie. This is how we use ensemble techniques in our daily life too.

Random Forest can be used to solve regression and classification problems. In regression problems, the dependent variable is continuous. In classification problems, the dependent variable is categorical.

Trivia: The random forest algorithm was created by Leo Breiman and Adele Cutler in 2001.

How does it work? (Decision Tree, Random Forest)

To understand the working of a random forest, it's crucial that you understand a tree. A tree works in the following way:

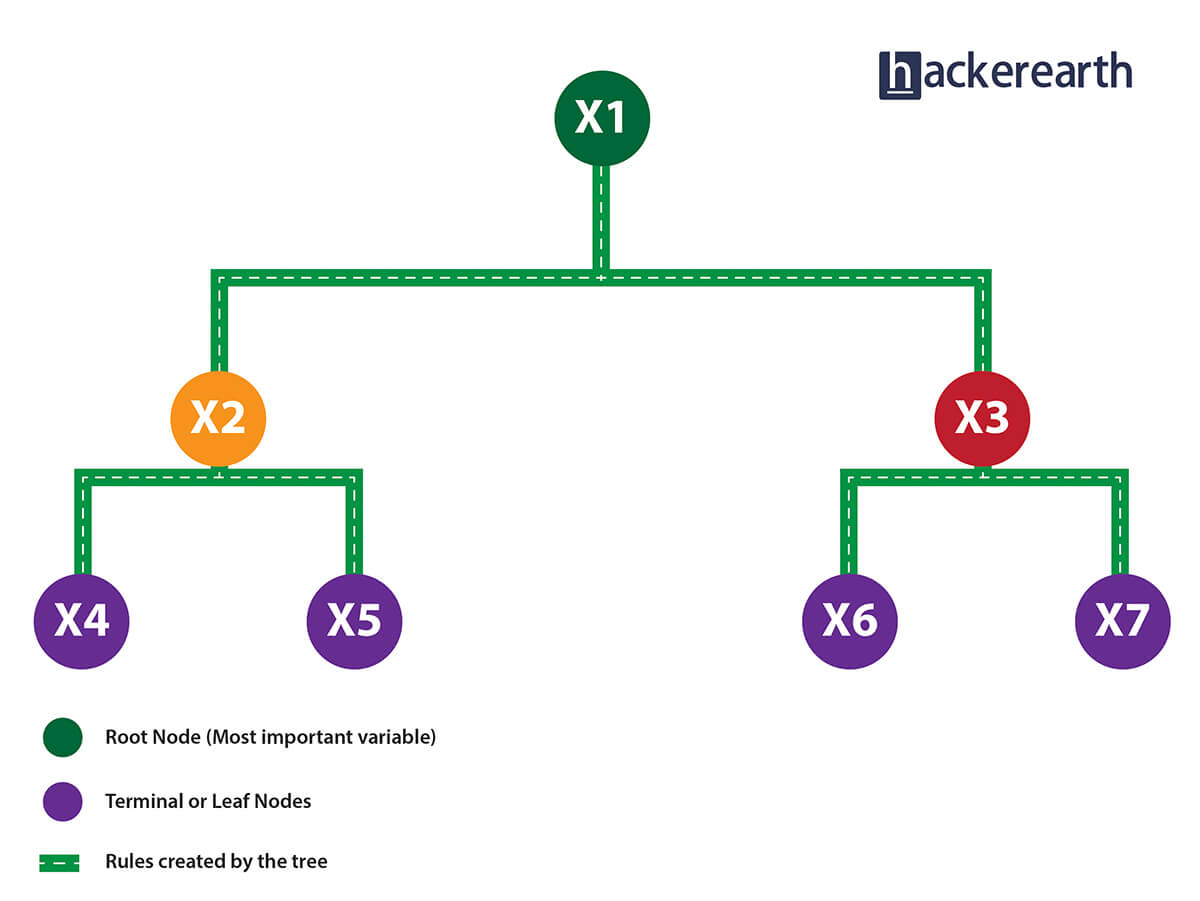

1. Given a data frame (n x p), a tree stratifies or partitions the data based on if-else rules. Yes, a tree creates rules. These rules divide the data set into distinct, non-overlapping regions, and they are chosen based on how much a variable's split increases the homogeneity (purity) of the resulting child nodes (X2, X3).

2. In the image above, the variable X1 produced the highest homogeneity in the child nodes, so it became the root node. The variable at the root node is often seen as the most important variable in the data set.

3. But how is this homogeneity, or purity, determined? In other words, how does the tree decide which variable to split on?

- In regression trees (where the prediction is the mean of the observations in the terminal node), the splitting decision is based on minimizing the residual sum of squares (RSS). At each node, starting with the root, the variable and split point that give the largest reduction in RSS are chosen. The tree grows top-down and greedily, an approach known as recursive binary splitting. We call it "greedy" because the algorithm makes the best split at the current step rather than looking ahead for a split that would lead to better results further down the tree.

- In classification trees (where the prediction is the mode, i.e., the most frequent class, of the observations in the terminal node), the splitting decision is based on one of the following measures:

- Gini Index - A measure of node impurity, computed as Gini = sum over classes k of p(k)*(1 - p(k)). A smaller Gini index means a purer node. For a split to take place, the weighted Gini index of the child nodes should be lower than that of the parent node.

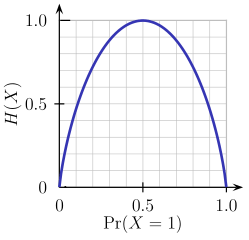

- Entropy - Entropy is also a measure of node impurity. For a binary class (a, b), it is calculated with the formula below (using log base 2). Entropy is maximal at p = 0.5: p(X=a) = p(X=b) = 0.5 means a new observation has a 50-50 chance of being classified in either class. Entropy is minimal (zero) when the probability is 0 or 1.

Entropy = - p(a)*log2(p(a)) - p(b)*log2(p(b))
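To make the two impurity measures concrete, here's a minimal base-R sketch (gini, entropy and split_impurity are hypothetical helpers, not functions from a package). Entropy uses log base 2, so a 50-50 binary node has entropy 1; split_impurity weights each child's impurity by its share of the rows, which is the quantity compared against the parent node.

```r
# Gini index of a node: sum over classes of p_k * (1 - p_k)
gini <- function(labels) {
  p <- table(labels) / length(labels)
  sum(p * (1 - p))
}

# Entropy (in bits) of a node: -sum p_k * log2(p_k); empty classes contribute 0
entropy <- function(labels) {
  p <- table(labels) / length(labels)
  p <- p[p > 0]
  -sum(p * log2(p))
}

# Impurity of a split: each child's impurity weighted by its share of the rows
split_impurity <- function(labels, goes_left, impurity = gini) {
  n <- length(labels)
  left <- labels[goes_left]
  right <- labels[!goes_left]
  (length(left) / n) * impurity(left) + (length(right) / n) * impurity(right)
}

parent <- c("a", "a", "a", "b", "b", "b")
gini(parent)                 # 0.5: maximally impure binary node
entropy(parent)              # 1 bit at p = 0.5
entropy(c("a", "a", "a"))    # 0: pure node
split_impurity(parent, c(TRUE, TRUE, TRUE, FALSE, FALSE, FALSE))  # 0: perfect split
```

A split is worth making when split_impurity for the children comes out lower than the impurity of the parent node.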

In a nutshell, every tree attempts to create rules in such a way that the resulting terminal nodes are as pure as possible. The higher the purity, the lower the uncertainty in the decision.
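The greedy RSS search from the regression-tree bullet can be sketched for a single numeric predictor. best_split_rss is a hypothetical helper: it tries the midpoint between each pair of consecutive distinct x values and keeps the one with the lowest RSS. A real tree repeats this for every predictor at every node and then recurses into the children.

```r
# For one numeric predictor x and a numeric response y, try every midpoint
# between consecutive distinct x values and return the threshold that
# minimizes RSS = sum over both children of (y - child mean)^2.
best_split_rss <- function(x, y) {
  xs <- sort(unique(x))
  if (length(xs) < 2) return(NULL)  # nothing to split on
  thresholds <- (head(xs, -1) + tail(xs, -1)) / 2
  rss <- vapply(thresholds, function(t) {
    left <- y[x <= t]
    right <- y[x > t]
    sum((left - mean(left))^2) + sum((right - mean(right))^2)
  }, numeric(1))
  best <- which.min(rss)
  list(threshold = thresholds[best], rss = rss[best])
}

x <- c(1, 2, 3, 10, 11, 12)
y <- c(5, 6, 5, 20, 21, 20)
best_split_rss(x, y)  # threshold 6.5 separates the low and high groups
```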

But a decision tree suffers from high variance. "High variance" means the tree changes a lot when the training data changes slightly, which leads to high prediction error on unseen data. We could reduce the variance by training on more data, but since the available data set is limited, we can use resampling-based techniques like bagging and random forest, which train many trees on bootstrap samples of the same data and combine them.

Building many decision trees results in a forest. A random forest works the following way:

- First, it uses the Bagging (Bootstrap Aggregating) algorithm to create random samples. Given a data set D1 (n rows and p columns), it creates a new data set (D2) by sampling n rows at random with replacement from the original data. On average, about 1/3 of the rows in D1 (roughly 36.8%) are left out of D2; these are known as Out of Bag (OOB) samples.

- Then, a tree is trained on D2. The OOB samples are used to get an unbiased estimate of the error.

- At each node split, only m of the p columns (m < p) are considered as candidates, and these m columns are selected at random. Usually, the default choice of m is p/3 for regression trees and √p for classification trees.

- Unlike a single decision tree, no pruning takes place in random forest; i.e., each tree is grown fully. In decision trees, pruning is a method to avoid overfitting: it means selecting the subtree that leads to the lowest test error rate, which we can estimate with cross-validation.

- Several trees are grown and the final prediction is obtained by averaging (for regression) or majority voting (for classification).
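The sampling and aggregation steps above can be sketched in base R. This is an illustration, not the randomForest package's internals: default_mtry mirrors the package's documented defaults (floor(sqrt(p)) for classification, max(floor(p/3), 1) for regression), and majority_vote is a hypothetical helper. The OOB fraction is close to (1 - 1/n)^n, which approaches e^-1 (about 0.368) for large n; that is where "about 1/3" comes from.

```r
set.seed(42)
n <- 10000

# Bootstrap sample: n row indices drawn with replacement
boot_idx <- sample.int(n, size = n, replace = TRUE)

# Rows never drawn are out-of-bag (OOB) for this tree
oob <- setdiff(seq_len(n), boot_idx)
length(oob) / n  # close to exp(-1), about 0.368

# Number of candidate predictors per split (randomForest's documented defaults)
default_mtry <- function(p, classification = TRUE) {
  if (classification) floor(sqrt(p)) else max(floor(p / 3), 1)
}
default_mtry(14)         # 3 for classification
default_mtry(14, FALSE)  # 4 for regression

# Candidate predictors drawn (without replacement) for one split
candidates <- sample.int(14, default_mtry(14))

# Aggregation: majority vote for classification, mean for regression
majority_vote <- function(votes) names(which.max(table(votes)))
majority_vote(c("yes", "no", "yes", "yes"))  # "yes"
mean(c(2.0, 3.0, 4.0))                       # 3
```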

Each tree is grown on a different bootstrap sample of the original data. Since random forest computes the OOB error internally, a separate cross-validation is often unnecessary for estimating its error.

What is the difference between Bagging and Random Forest?

Many a time, we fail to recognize that bagging is not the same as random forest. To understand the difference, let's see how bagging works:

- It creates randomized bootstrap samples of the dataset (just like random forest) and grows a tree on each sample. The roughly 1/3 of rows left out of each sample are used to estimate an unbiased OOB error.

- It considers all the features at a node (for splitting).

- Once the trees are fully grown, it uses averaging or voting to combine the resultant predictions.

Aren't you thinking, "If both the algorithms do the same thing, what is the need for random forest? Couldn't we have accomplished our task with bagging?" NO!

The need for random forest surfaced after discovering that the bagging algorithm results in correlated trees when faced with a dataset having strong predictors. Unfortunately, averaging several highly correlated trees doesn't lead to a large reduction in variance.
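A standard variance identity makes this concrete. Assume, as a simplification, that each of the B trees' predictions T_1, ..., T_B has variance σ² and every pair has correlation ρ. Then the variance of their average is:

$$
\operatorname{Var}\left(\frac{1}{B}\sum_{b=1}^{B} T_b\right) = \rho\,\sigma^2 + \frac{1-\rho}{B}\,\sigma^2
$$

Growing more trees shrinks only the second term; the first term, ρσ², stays no matter how large B gets. Lowering the correlation ρ between trees is what random forest's per-split feature sampling aims to do.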

But how do correlated trees emerge? Good question! Let's say a dataset has a very strong predictor, along with other moderately strong predictors. In bagging, nearly every tree would use the very strong predictor at its root node, resulting in trees that are similar to each other.

The main difference between random forest and bagging is that random forest considers only a subset of predictors at a split. This results in trees with different predictors at the top split, thereby resulting in decorrelated trees and more reliable average output. That's why we say random forest is robust to correlated predictors.
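One way to see how feature sampling breaks this pattern: at a given split, a particular predictor is missing from the mtry randomly sampled candidates with probability (p - mtry)/p. The base-R sketch below (p_excluded is a hypothetical helper) compares bagging (mtry = p) with the classification default mtry = floor(sqrt(p)) for the 14 predictors of the Adult data used later, and checks the formula by simulation.

```r
# Probability that one particular predictor is NOT among the mtry candidates
# sampled (without replacement) from p predictors at a split
p_excluded <- function(p, mtry) (p - mtry) / p

p <- 14  # Adult data: 15 columns minus the target
p_excluded(p, mtry = p)              # 0: in bagging the strong predictor is always available
p_excluded(p, mtry = floor(sqrt(p))) # 11/14, about 0.786 with the random forest default

# Simulation check: how often is predictor 1 absent from a random candidate set?
set.seed(1)
sims <- replicate(20000, !(1 %in% sample.int(p, floor(sqrt(p)))))
mean(sims)  # close to 0.786
```

So with the default mtry, the dominant predictor cannot even be considered at roughly four out of five root splits, which forces other predictors to the top and decorrelates the trees.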

Advantages and Disadvantages of Random Forest

Advantages are as follows:

- It is robust to correlated predictors.

- It is used to solve both regression and classification problems.

- It can also be used to solve unsupervised ML problems.

- It can handle thousands of input variables without variable selection.

- It can be used as a feature selection tool using its variable importance plot.

- It offers built-in ways to handle missing data (for example, proximity-based imputation with rfImpute in R).

Disadvantages are as follows:

- The Random Forest model is difficult to interpret.

- It tends to return erratic predictions for observations out of the range of training data. For example, if the training data contains a variable x ranging from 30 to 70, and the test data has x = 200, random forest would give an unreliable prediction.

- It can take longer than expected to compute a large number of trees.
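To see why out-of-range predictions are unreliable, here's a minimal base-R sketch using a single depth-1 regression tree (a stump) that, for simplicity, splits at the median rather than searching for the best RSS split. The training data (y = 2x on x = 30..70) is made up for illustration. Every leaf predicts a mean of training responses, so a forest, which averages such leaves, can never predict outside the range of the training y values.

```r
# Training data: x only covers 30..70
x_train <- 30:70
y_train <- 2 * x_train

# A depth-1 regression tree: split at the median, predict each side's mean
threshold <- median(x_train)
left_mean <- mean(y_train[x_train <= threshold])
right_mean <- mean(y_train[x_train > threshold])
predict_stump <- function(x) ifelse(x <= threshold, left_mean, right_mean)

predict_stump(200)  # 121: stuck at the right leaf's mean, far from 2 * 200 = 400
predict_stump(10)   # 80: stuck at the left leaf's mean, far from 2 * 10 = 20
```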

Solving a Problem (Parameter Tuning)

Let's take a dataset to compare the performance of bagging and random forest algorithms. Along the way, I'll also explain important parameters used for parameter tuning. In R, we'll use the mlr and data.table packages to do this analysis.

I've taken the Adult dataset from the UCI machine learning repository. You can download the data from here.

This dataset presents a binary classification problem to solve. Given a set of features, we need to predict whether a person's salary is <=50K or >50K. Since the given data isn't well structured, we'll need to make some modifications while reading the dataset.


```r
# Set working directory
path <- "~/December 2016/RF_Tutorial"
setwd(path)

# Load libraries
library(data.table)
library(mlr)

# Set variable names (read.table turns hyphens into dots, e.g. education.num)
setcol <- c("age", "workclass", "fnlwgt", "education", "education-num",
            "marital-status", "occupation", "relationship", "race", "sex",
            "capital-gain", "capital-loss", "hours-per-week", "native-country",
            "target")

# Load data; missing values are coded as " ?" (with a leading space)
train <- read.table("adultdata.txt", header = FALSE, sep = ",",
                    col.names = setcol, na.strings = c(" ?"), stringsAsFactors = FALSE)

# skip = 1 skips the first (non-data) line of the test file
test <- read.table("adulttest.txt", header = FALSE, sep = ",",
                   col.names = setcol, skip = 1, na.strings = c(" ?"), stringsAsFactors = FALSE)
```

After we've loaded the dataset, we'll first convert the data to the data.table class. data.table is an R package designed for fast data manipulation.

```r
setDT(train)
setDT(test)
```

Now, we'll quickly look at given variables, data dimensions, etc.

```r
dim(train)
dim(test)
str(train)
str(test)
```

From the output of these commands, we can derive the following insights:

- The train dataset has 32,561 rows and 15 columns.

- The test dataset has 16,281 rows and 15 columns.

- The variable target is the dependent variable.

- The values of the target variable differ between the train and test data. We'll need to match them.

- All character variables have a leading whitespace which can be removed.

We can check missing values using:

```r
# Check missing values in train and test datasets
table(is.na(train))
# Output:
#  FALSE   TRUE
# 484153   4262

# Percentage of missing values per column
sapply(train, function(x) sum(is.na(x)) / length(x)) * 100

table(is.na(test))
# Output:
#  FALSE   TRUE
# 242012   2203

sapply(test, function(x) sum(is.na(x)) / length(x)) * 100
```

As seen above, both train and test datasets have missing values. The sapply function is quite handy when it comes to performing column computations. Above, it returns the percentage of missing values per column.

Now, we'll preprocess the data to prepare it for training. In R, the randomForest package offers built-in options for missing values (for example, na.roughfix for median/mode imputation and rfImpute for proximity-based imputation), but in practice the proximity-based approach in particular can take longer than expected to run.

Therefore, in order to avoid waiting time, let's impute the missing values using median/mode imputation method; i.e., missing values in the integer variables will be imputed with median and in the factor variables with mode (most frequent value).

We'll use the impute function from the mlr package, which supports several methods for missing value imputation:

```r
# Impute missing values: median for integer columns, mode for factor columns
imp1 <- impute(data = train, target = "target",
               classes = list(integer = imputeMedian(), factor = imputeMode()))
imp2 <- impute(data = test, target = "target",
               classes = list(integer = imputeMedian(), factor = imputeMode()))

# Assign the imputed data back to train and test
train <- imp1$data
test <- imp2$data
```

Since this is a binary classification problem, it's always worth checking whether the data is imbalanced. We can do it in the following way:

```r
# Check class distribution in train and test datasets
setDT(train)[, .N / nrow(train), target]
# Output:
#    target        V1
# 1:  <=50K 0.7591904
# 2:   >50K 0.2408096

setDT(test)[, .N / nrow(test), target]
# Output:
#    target        V1
# 1: <=50K. 0.7637737
# 2:  >50K. 0.2362263
```

If you observe carefully, the values of the target variable differ between test and train: the test labels end with a period. For now, we'll treat this as a formatting inconsistency and correct all the test values. Also, about 76% of people in the train data have an income <=50K. Severely imbalanced classification problems typically look more like a 90:10 split, so this data is only moderately imbalanced. Now, let's proceed and clean the target column in the test data.

```r
# Drop the trailing period from the test target values (e.g. "<=50K." -> "<=50K")
test[, target := substr(target, start = 1, stop = nchar(target) - 1)]
```

We've used the substr function to return the substring from a specified start and end position. Next, we'll remove the leading whitespaces from all character variables. We'll use the str_trim function from the stringr package.

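```r
library(stringr)

# Names of the columns stored as character
char_col <- colnames(train)[sapply(train, is.character)]

# Remove leading whitespace in both train and test so their values match
for (i in char_col) {
  set(train, j = i, value = str_trim(train[[i]], side = "left"))
  set(test, j = i, value = str_trim(test[[i]], side = "left"))
}
```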

Using the sapply function, we've extracted the names of the columns with character class. Then, using a simple for loop with set(), we traversed all those columns and applied the str_trim function.

Before we start model training, we should convert all character variables to factors, since mlr does not support character features.

```r
# Convert character columns to factors in both datasets
fact_col <- colnames(train)[sapply(train, is.character)]
for (i in fact_col) set(train, j = i, value = factor(train[[i]]))
for (i in fact_col) set(test, j = i, value = factor(test[[i]]))
```

Let's start with modeling now. The mlr package has its own functions to convert data into a task, build learners, and optimize learning algorithms. I suggest you stick to the modeling structure described below for using mlr on any data set.

```r
# Create tasks
traintask <- makeClassifTask(data = train, target = "target")
testtask <- makeClassifTask(data = test, target = "target")

# Create a bagged rpart learner: 100 trees on samples drawn with replacement
bag <- makeLearner("classif.rpart", predict.type = "response")
bag.lrn <- makeBaggingWrapper(learner = bag, bw.iters = 100, bw.replace = TRUE)
```

I've set up the bagging algorithm which will grow 100 trees on randomized samples of data with replacement. To check the performance, let's set up a validation strategy too:

```r
# Set 5-fold cross-validation
rdesc <- makeResampleDesc("CV", iters = 5L)
```
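For intuition about what 5-fold cross-validation does, here's a conceptual base-R sketch of fold assignment (make_folds is a hypothetical helper, not mlr's implementation): every row lands in exactly one validation fold, and each of the 5 iterations trains on the other 4 folds. 32561 is the number of rows in the train data.

```r
# Assign each of n rows to one of k folds at random, with near-equal fold sizes
make_folds <- function(n, k = 5) {
  sample(rep(seq_len(k), length.out = n))
}

set.seed(123)
folds <- make_folds(32561, k = 5)
table(folds)  # each fold holds about 1/5 of the rows

# In iteration i, fold i is the validation set and the other folds train the model
for (i in 1:5) {
  test_rows <- which(folds == i)
  train_rows <- which(folds != i)
  # fit on train_rows, evaluate on test_rows, then average the 5 scores
}
```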

For faster computation, we'll use parallel computation backend. Make sure your machine / laptop doesn't have many programs running in the background.

```r
# Set parallel backend (Windows)
library(parallelMap)
library(parallel)
parallelStartSocket(cpus = detectCores())
```

On Linux, the function parallelStartMulticore(cpus = detectCores()) activates the parallel backend instead. I've used all the cores here.

```r
r <- resample(learner = bag.lrn,
              task = traintask,
              resampling = rdesc,
              measures = list(tpr, fpr, fnr, acc),
              show.info = TRUE)
# [Resample] Result:
# tpr.test.mean = 0.95,
# fnr.test.mean = 0.0505,
# fpr.test.mean = 0.487,
# acc.test.mean = 0.845
```

Since this is a binary classification problem, I've used the components of the confusion matrix to evaluate the model. With 100 trees, bagging returned an accuracy of 84.5%, well above the baseline accuracy of about 76% (always predicting the majority class, <=50K). Let's now check the performance of random forest.
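As a reminder of how these rates are defined, here's a small base-R sketch (confusion_rates is a hypothetical helper, and the counts are made up for illustration). It assumes the positive class is the first factor level of the target, which is mlr's default for binary tasks, so here "positive" means <=50K. Note that fnr is always 1 - tpr.

```r
# tpr, fnr, fpr and acc computed from confusion matrix counts
confusion_rates <- function(tp, fn, fp, tn) {
  c(tpr = tp / (tp + fn),                  # actual positives predicted positive
    fnr = fn / (tp + fn),                  # actual positives missed (1 - tpr)
    fpr = fp / (fp + tn),                  # actual negatives predicted positive
    acc = (tp + tn) / (tp + fn + fp + tn)) # all correct predictions
}

# Hypothetical counts, for illustration only
confusion_rates(tp = 90, fn = 10, fp = 20, tn = 30)
# tpr 0.9, fnr 0.1, fpr 0.4, acc 0.8
```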

```r
# Make a randomForest learner
rf.lrn <- makeLearner("classif.randomForest")
rf.lrn$par.vals <- list(ntree = 100L, importance = TRUE)

r <- resample(learner = rf.lrn,
              task = traintask,
              resampling = rdesc,
              measures = list(tpr, fpr, fnr, acc),
              show.info = TRUE)
# Result:
# tpr.test.mean = 0.996,
# fpr.test.mean = 0.72,
# fnr.test.mean = 0.0034,
# acc.test.mean = 0.825
```

On this data set, random forest performs worse than bagging. Both used 100 trees, and random forest returned an overall accuracy of 82.5%. The apparent reason is that it struggles with the negative class (>50K). As you can see, it classified 99.6% of the positive class (<=50K) correctly, which is better than bagging, but it misclassified 72% of the negative class.

Internally, random forest uses a cutoff of 0.5 for each class in this two-class problem; i.e., if more than half of the trees vote <=50K for an unseen observation, it is classified as <=50K. In random forest, we have the option to customize this cutoff. Since the false positive rate is very high, we'll increase the cutoff for the positive class (<=50K) and reduce it accordingly for the negative class (>50K). Then, we'll train the model again.
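The randomForest documentation describes the cutoff rule as picking the class with the largest ratio of vote proportion to cutoff. Here's a base-R sketch of that rule (predict_with_cutoff is a hypothetical helper, and the vote proportions are made up). With two classes and cutoff = c(0.75, 0.25), <=50K is predicted only when more than 75% of the trees vote for it.

```r
# Winning class = largest ratio of vote proportion to that class's cutoff
predict_with_cutoff <- function(vote_props, cutoff) {
  names(vote_props)[which.max(vote_props / cutoff)]
}

votes <- c("<=50K" = 0.70, ">50K" = 0.30)
predict_with_cutoff(votes, cutoff = c(0.5, 0.5))    # "<=50K": 1.4 vs 0.6
predict_with_cutoff(votes, cutoff = c(0.75, 0.25))  # ">50K": 0.933 vs 1.2
```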

```r
# Set class cutoffs, in factor-level order (<=50K, >50K)
rf.lrn$par.vals <- list(ntree = 100L,
                        importance = TRUE,
                        cutoff = c(0.75, 0.25))

r <- resample(learner = rf.lrn,
              task = traintask,
              resampling = rdesc,
              measures = list(tpr, fpr, fnr, acc),
              show.info = TRUE)
# Result:
# tpr.test.mean = 0.934,
# fpr.test.mean = 0.43,
# fnr.test.mean = 0.0662,
# acc.test.mean = 0.846
```

As you can see, we've improved the accuracy of the random forest model by about 2 percentage points (from 82.5% to 84.6%), which is slightly higher than that of the bagging model. Now, let's try to make this model better.

Parameter Tuning: Mainly, there are three parameters in the random forest algorithm which you should look at (for tuning):

- ntree - As the name suggests, the number of trees to grow. The more trees, the more computationally expensive the model is to build.

- mtry - The number of variables randomly sampled as candidates at each node split. As mentioned above, the default value is p/3 for regression and sqrt(p) for classification. Smaller values decorrelate the trees more but make each tree weaker, while mtry = p turns random forest into bagging, so it's worth tuning.

- nodesize - The minimum number of observations in the terminal nodes. This parameter is directly related to tree depth: the higher the number, the shallower the trees. Trees that are too shallow may fail to capture useful signals in the data.

Let's get to the playground and try to improve our model's accuracy further. In the mlr package, you can list all the tuning parameters a learner supports using getParamSet:

```r
# List the tunable parameters of the learner
getParamSet(rf.lrn)

# Set parameter space
params <- makeParamSet(
  makeIntegerParam("mtry", lower = 2, upper = 10),
  makeIntegerParam("nodesize", lower = 10, upper = 50)
)

# Set validation strategy
rdesc <- makeResampleDesc("CV", iters = 5L)

# Set optimization technique: random search over 5 configurations
ctrl <- makeTuneControlRandom(maxit = 5L)

# Start tuning
tune <- tuneParams(learner = rf.lrn,
                   task = traintask,
                   resampling = rdesc,
                   measures = list(acc),
                   par.set = params,
                   control = ctrl,
                   show.info = TRUE)
# [Tune] Result: mtry=2; nodesize=23 : acc.test.mean=0.858
```

After tuning, we have achieved an overall accuracy of 85.8%, which is better than our previous random forest model. This way you can tweak your model and improve its accuracy.
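makeTuneControlRandom(maxit = 5L) evaluates a handful of randomly sampled configurations. The sketch below mimics that idea in base R; random_search and toy_score are hypothetical, and toy_score is a made-up stand-in for cross-validated accuracy, not real results. With mtry in 2..10 and nodesize in 10..50 there are 9 * 41 = 369 possible pairs, and maxit = 5 evaluates only five of them, so a larger maxit may find better settings.

```r
# Random search: sample maxit configurations uniformly, score each, keep the best
random_search <- function(score_fn, maxit = 5,
                          mtry_range = c(2, 10), nodesize_range = c(10, 50)) {
  configs <- data.frame(
    mtry = sample(mtry_range[1]:mtry_range[2], maxit, replace = TRUE),
    nodesize = sample(nodesize_range[1]:nodesize_range[2], maxit, replace = TRUE)
  )
  configs$score <- mapply(score_fn, configs$mtry, configs$nodesize)
  configs[which.max(configs$score), ]
}

# Hypothetical scoring function standing in for 5-fold CV accuracy
toy_score <- function(mtry, nodesize) -abs(mtry - 3) - abs(nodesize - 25) / 10

n_possible <- length(2:10) * length(10:50)  # 369 candidate pairs

set.seed(7)
random_search(toy_score, maxit = 5)
```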

I'll leave you here. The complete code for this analysis can be downloaded from GitHub.

Summary

Don't stop here! There is still a huge scope for improvement in this model. Keep in mind that cross-validation accuracy, especially after tuning on the same folds, can be more optimistic than true test accuracy. To make predictions on the test set, some minimal preprocessing of the categorical variables is required. Do it and share your results in the comments below.

My motive for creating this tutorial is to get you started with the random forest model and some techniques to improve model accuracy. For better understanding, I suggest you read more about confusion matrices. In this article, I've explained the working of decision trees, random forest, and bagging.

Did I miss anything? Do share your knowledge and let me know about your experience solving classification problems in the comments below.


December 14, 2016


Related reads


10 Best AI Recruiting Software for Technical Roles in 2026


Ninety-nine percent of hiring managers now use AI in some capacity (Novoresume, 2026), yet 65% of technology hiring managers still say finding skilled professionals is harder than it was a year ago (Robert Half, 2026). The problem is not access to AI recruiting software; it is that most teams are using tools built for generalist hiring to solve a specialist problem. This guide covers the best AI recruiting tools available in 2026 for AI for technical hiring specifically, and tells you which ones actually work for developer evaluation rather than just general-purpose screening.

How We Evaluated These AI Recruiting Tools

The right AI talent acquisition software for a developer hiring team looks very different from the right one for a retail team, and most evaluation frameworks fail to capture the difference. Each tool was scored on criteria that reflect technical hiring realities specifically.

AI-Powered Skill Assessment Accuracy

Does the tool evaluate actual coding ability, or does it infer skills from resume text? Those are not the same thing, and for engineering roles the difference determines whether your shortlist is credible.

Technical Role Coverage

Coverage across software engineering, data science, DevOps, ML, and other specialized disciplines. A single format for all engineering roles produces noisy signals.

Bias Mitigation and Compliance

NYC Local Law 144 requires annual independent bias audits for any automated employment decision tool used for NYC positions (effective July 2023). The EU AI Act classifies AI hiring tools as high-risk under Annex III. These are procurement requirements now, not optional considerations.

ATS and HRIS Integration

Native connectivity to Greenhouse, Lever, Workday, and SAP SuccessFactors. A platform that cannot route results back to your ATS creates manual reconciliation work that compounds at scale.

Candidate Experience

Thirty-one percent of candidates abandoned a job application because AI screening felt impersonal or confusing (Enhancv, 2026). Candidate experience is a direct signal about employer brand. For guidance on remote proctoring for online assessments that does not damage candidate trust, see HackerEarth's proctoring resources.

Pricing and Scalability

Can the platform handle enterprise volume and flex down for growing teams? Custom pricing is common in this category; where public pricing exists, it is noted.

Quick Comparison Table

| Tool | Best For | AI Assessment Depth | Live Coding | Proctoring | ATS Integration | Free Trial |
|---|---|---|---|---|---|---|
| HackerEarth | AI-driven technical hiring (all-in-one) | High (code + AI Interview) | Yes (FaceCode) | Multi-signal | 15+ native | Yes |
| HireVue | AI video interviewing at scale | Medium (coding limited) | No | Basic | Yes | Demo only |
| Eightfold AI | Talent intelligence and internal mobility | Low (sourcing/matching only) | No | No | Yes | Demo only |
| Codility | Code-testing focused screening | High (coding only) | Limited | Yes | Yes | Yes |
| iMocha | Skills-based hiring across tech and non-tech | Medium | No | Yes | Yes | Yes |
| Paradox (Olivia) | Conversational AI recruiting automation | None (scheduling only) | No | No | Yes | Demo only |
| TestGorilla | Budget-friendly pre-employment testing | Medium | No | AI-assisted | Limited | Yes |
| Fetcher | AI-powered talent sourcing | None (sourcing only) | No | No | Yes | Demo only |
| CoderPad | Live pair programming coding interviews | High (live coding only) | Yes | Limited | Yes | Yes |
| Pymetrics (Harver) | Neuroscience-based cognitive assessment | None (behavioral only) | No | No | Yes | Demo only |

Ready to transform your technical hiring? Book a HackerEarth demo.

1. HackerEarth: Best Overall for AI-Driven Technical Hiring

Most AI hiring software handles one stage of the funnel and hands off. HackerEarth is the only platform on this list that covers sourcing-to-shortlist in a single workflow purpose-built for technical roles, and it is trusted by 4,000+ companies including Google, Amazon, and Walmart.

The product that sets it apart is the AI Interview Agent. Where most platforms auto-grade submitted code, HackerEarth's agent conducts the actual first-round technical interview: it adapts follow-up questions based on what the candidate just said, catches surface-level answers that look confident but lack depth, and delivers a structured scorecard to the hiring manager without any recruiter involvement. For teams running high-volume technical pipelines, that is not an incremental efficiency gain. It eliminates the most expensive manual bottleneck in the process.

Key AI Features

The AI Interview Agent handles autonomous first-round interviews with adaptive questioning so your engineers are not burning two hours a day on screening calls. AI coding assessment tools vary widely, but HackerEarth evaluates code across 40+ programming languages with automated ranking against role-specific benchmarks, which means a hiring manager sees a ranked shortlist rather than 80 raw submissions. Multi-signal proctoring generates a per-candidate Assessment Integrity Score rather than leaving you to interpret session logs. Role-specific templates cover frontend, backend, data science, DevOps, and ML, so teams hiring across multiple disciplines simultaneously are not generalizing assessments to fit the tool.

Best For

Enterprise and mid-market companies hiring at scale across technical disciplines, and engineering teams that want to replace resume-based filtering with evidence of actual coding ability.

Integrations

Greenhouse, Lever, Workday, SAP SuccessFactors, iCIMS, and custom API access on enterprise plans.

Limitation

HackerEarth is built exclusively for technical hiring. Teams that also run high-volume non-technical programs (sales, support, operations) will need a separate platform for those pipelines.

Pricing

Custom pricing based on hiring volume. Free trial available with no credit card required. See HackerEarth's technical assessment platform for a full capabilities overview.

Start your free trial with HackerEarth and see AI-powered technical assessments in action. Try free at hackerearth.com/recruit

2. HireVue: Best for AI Video Interviewing at Scale

HireVue is the most widely deployed AI interview software for structured behavioral evaluation, running more than 20 million one-way video interviews in Q1 2024 alone (Enhancv, 2026). For teams comparing AI interview tools across categories, see this resource on best AI interview assistants for a breakdown of autonomous interview capabilities.

Key AI Features

AI-scored video interviews using structured behavioral frameworks; game-based cognitive assessments; conversational AI scheduling; basic coding assessments.

Best For

High-volume enterprise hiring programs spanning both technical and non-technical roles, particularly where structured behavioral evaluation at scale is the primary requirement.

Limitation

Coding assessment depth does not match platforms built exclusively for developer hiring. Thirty-one percent of candidates abandon applications specifically because AI video screening felt impersonal (Enhancv, 2026), and HireVue's one-way format is frequently cited. HackerEarth's AI Interview Agent takes a conversational, adaptive approach that developers generally find more relevant to the actual role.

3. Eightfold AI: Best for AI Talent Intelligence and Internal Mobility

Eightfold AI is an intelligent recruiting platform that operates at the sourcing and matching layer, not the assessment layer. Its deep-learning models infer skills and career trajectories from unstructured resume data and match candidates based on potential rather than keyword alignment, which makes it genuinely useful for enterprises sitting on large, underutilized talent databases.

Key AI Features

AI talent matching based on inferred skills and career trajectory; internal talent marketplace for redeployment; diversity analytics; resume-to-role scoring without structured input.

Best For

Large enterprises managing both external recruiting and internal mobility for technical talent across multiple business units.

Limitation

Eightfold does not offer live coding interviews or AI-graded code evaluation. Sourcing matches still need technical validation before an interview. Pairing Eightfold with HackerEarth covers both stages without adding a third tool.

4. Codility: Best for Code-Testing Focused Technical Screening

Codility has been a reliable choice for technical screening longer than most tools in this category have existed, and its coding challenge library is genuinely well-regarded among developers. It is a solid first-pass screening tool for backend and algorithmic roles.

Key AI Features

AI-assisted code evaluation with automated test-case scoring; plagiarism detection across the candidate cohort; automated scoring and basic candidate ranking.

Best For

Companies that want a dedicated coding test platform for initial screening, particularly for backend and infrastructure roles.

Limitation

Codility does not offer autonomous AI interview capability, system design evaluation, or adaptive questioning. For teams that need AI to do more than grade submitted code, it is a starting point rather than a complete solution.

5. iMocha: Best for Skills-Based Assessment Across Tech and Non-Tech Roles

iMocha is the right choice when the need is one assessment platform across both technical and non-technical functions, rather than depth in either. Its library spans coding, cloud, DevOps, communication, cognitive ability, and finance.

Key AI Features

AI-LogicBox for live coding assessment; skills benchmarking against industry norms; AI-driven talent analytics and skills gap identification; automated candidate ranking.

Best For

Organizations hiring across technical and non-technical disciplines who want a single assessment platform and unified reporting layer.

Limitation

Breadth trades against depth. Coding assessment rigor for senior engineering roles does not match platforms built exclusively for developer hiring, which matters for mid-to-senior technical pipelines.

6. Paradox (Olivia): Best for Conversational AI Recruiting Automation

Paradox solves a specific, unglamorous problem: the scheduling coordination and top-of-funnel communication work that consumes recruiter hours without requiring recruiter judgment. Olivia handles it around the clock so your team does not have to.

Key AI Features

AI chatbot for candidate communication and FAQ resolution; automated scheduling with calendar integration; initial screening questionnaires and knockout questions; multilingual support.

Best For

High-volume technical recruiting teams that need to automate top-of-funnel engagement and scheduling without adding headcount.

Limitation

Paradox does not evaluate technical skills in any form. For engineering roles, pair it with a coding assessment platform like HackerEarth to handle the evaluation that Olivia cannot perform.

7. TestGorilla: Best Budget-Friendly AI Assessment Platform

TestGorilla is the practical choice for startups and SMBs that need structured pre-employment testing without enterprise pricing. Its 400+ test library spans coding, cognitive ability, language, and personality, and setup is fast enough that no implementation support is needed.

Key AI Features

AI-generated custom test creation from job descriptions; anti-cheating AI with screen monitoring and shuffle logic; automated candidate ranking.

Best For

Startups and SMBs that need affordable technical screening across multiple role types without dedicated IT support for implementation.

Limitation

Coding tests do not match dedicated developer assessment platforms in depth or rigor. No live coding interview capability or autonomous AI interviewer. Best suited to early-stage filtering rather than final-round technical evaluation.

8. Fetcher: Best for AI-Powered Technical Talent Sourcing

Fetcher addresses a specific upstream problem: finding qualified technical candidates who are not actively applying. Its AI models search across professional databases and automate personalized outreach without requiring recruiter time per contact.

Key AI Features

AI candidate sourcing from multiple professional databases including LinkedIn and GitHub signals; automated multi-touch outreach sequences; diversity pipeline filters; recruiter productivity analytics.

Best For

Technical recruiting teams that need passive candidate pipelines for hard-to-fill engineering roles where inbound volume is insufficient.

Limitation

Fetcher is sourcing only. It does not assess, interview, or evaluate candidates. Every person it surfaces still needs technical screening. Pairing with HackerEarth covers the pipeline from sourced to assessed without adding a third platform.

9. CoderPad: Best for Live Collaborative Coding Interviews

CoderPad is the interviewing room, not the screening tool. Think of it as a shared whiteboard where the candidate and interviewer both have keyboards: useful for final-round evaluation, not a replacement for early-stage filtering. It supports 30+ languages and frameworks.

For teams evaluating live interview environments more broadly, see this guide to top online coding interview platforms for a detailed comparison.

Key AI Features

Optional AI-assisted hints during live sessions; session playback for post-interview review; language-aware syntax support; interview notes integrated into the session record.

Best For

Engineering teams that prioritize live collaborative coding interviews for final-round evaluation where observing real-time problem-solving matters.

Limitation

CoderPad covers the live interview stage only. No AI-powered screening, no autonomous interview capability, no proctored take-home assessment. HackerEarth's FaceCode is a comparable live coding environment that integrates directly with the broader assessment workflow.

10. Pymetrics (Harver): Best for Neuroscience-Based AI Assessments

Pymetrics measures what code tests cannot: working memory, risk tolerance, attention, and learning speed, using gamified assessments grounded in neuroscience research. Acquired by Harver in 2022, it includes bias auditing to check for demographic disparities in outcomes.

Key AI Features

Gamified cognitive and behavioral assessments from neuroscience research; AI trait-to-role matching; bias auditing across demographic groups; integration with Harver talent workflows.

Best For

Companies that want cognitive and behavioral fit data alongside technical evaluation, particularly for roles where adaptability and learning speed matter as much as raw coding ability.

Limitation

Pymetrics does not assess coding skills or technical knowledge. It must be paired with a dedicated developer assessment tool. Cognitive fit without technical validation is an incomplete picture for any engineering hire.

How AI Recruiting Software Transforms Technical Hiring

The ROI case is well-documented. These are the specific outcomes you should hold vendors accountable to.

Faster Screening Without Sacrificing Quality

AI resume screening software reduces time-to-shortlist by up to 75% compared to manual resume review (Impress.ai, 2025). For technical roles where average time-to-hire is 62 days globally (Workable, 2024), cutting two to three weeks from the upstream screening stage is the single highest-leverage intervention most teams can make.

Reduced Bias in Candidate Evaluation

Properly audited AI tools reduce unconscious bias by up to 60% (Fueler, 2026), because skills-based evaluation removes the demographic proxies that creep into unstructured resume review. Machine learning recruiting tools that are continuously monitored against demographic outcome data are more defensible than those that are audited once at launch and never again. NYC Local Law 144 and the EU AI Act now require vendors to demonstrate this: before purchasing any AI-based hiring platform, ask for bias audit documentation. This is a procurement filter at most large enterprises in 2026, not a nice-to-have.

Better Candidate Experience

AI done well shortens and clarifies the process. AI done badly drives candidates away: 31.4% have abandoned an application because of an impersonal AI video or chatbot screen (Enhancv, 2026), and 68.5% say AI was never disclosed to them. Transparency and relevance are the variables that separate AI that improves completion rates from AI that tanks them.

Lower Cost-Per-Hire

Teams report 20 to 40% lower cost-per-hire when AI automates screening and scheduling (Greenhouse and GoodTime, 2025). For technical hiring specifically, the compounding gain comes from consolidating AI candidate screening software, AI interview software, and proctoring into one platform rather than paying for and integrating three.

How to Choose the Right AI Recruiting Software for Your Team

The wrong way to evaluate automated recruiting software is to start with the feature list. The right way is to start with the specific stage in your funnel where qualified candidates are falling through or where recruiter time is being spent on work that should not require a human.

- Define your technical hiring volume and role types before evaluating anything.

- Decide which funnel stages need AI: sourcing, screening, interviewing, and proctoring each have different tool requirements.

- Verify ATS and HRIS integration compatibility before shortlisting. A platform that cannot connect to your system of record creates the same manual work you are trying to eliminate.

- Evaluate assessment depth for your specific tech stack, not a generic "coding" capability.

- Complete the candidate experience firsthand before committing. Request a demo environment and take the assessment as a candidate.

- Request bias audit and compliance documentation. For NYC and EU hiring this is mandatory; for everyone else it signals platform maturity.

Book a demo to see how HackerEarth consolidates assessments, AI interviews, and proctoring into one platform. Schedule at hackerearth.com/recruit.

Frequently Asked Questions About AI Recruiting Software

What is AI recruiting software?

AI recruiting software uses machine learning, NLP, and computer vision to automate sourcing, screening, assessment, interviewing, and candidate ranking. For technical hiring specifically, the distinction that matters is whether the tool evaluates actual code output or only infers skills from resume text; the two approaches produce very different shortlists.

How does AI recruiting software compare to traditional hiring methods?

AI screens in minutes, applies consistent criteria across every candidate, and scales to any volume without additional headcount. The important qualifier is that AI works best as a filter and ranker, not as the final decision-maker: the judgment calls at the offer stage still require human context that no model fully captures.

How does AI recruiting software improve hiring speed?

AI reduces time-to-hire by up to 50% by automating resume parsing, scoring assessments instantly, and conducting autonomous first-round interviews without scheduling coordination (SHRM, 2025). The gains compound when a single platform handles multiple stages instead of three separate tools that require manual handoffs between them.

Can AI recruiting software reduce hiring bias?

AI evaluates candidates on skill signals rather than name, school, or inferred demographics, and properly audited tools reduce unconscious bias by up to 60% (Fueler, 2026). The catch is "properly audited": models trained on historical hiring data can replicate historical bias, which is exactly why NYC Local Law 144 mandates annual independent bias audits rather than vendor self-reporting.

How do you integrate AI recruiting software with your existing HRIS or ATS?

Most platforms offer native integrations with Greenhouse, Lever, Workday, and SAP SuccessFactors, plus open API access. The integration question that matters is not just whether results flow through, but whether they trigger automatic stage changes and pass/fail routing. If a recruiter still has to move candidates manually after each assessment, you have not actually automated the bottleneck.

What should you look for in AI recruiting software for developer hiring?

Must-haves: 30+ language support, AI-graded code evaluation, live coding interview capability, plagiarism detection, remote proctoring, ATS integration, and role-specific templates for frontend, backend, data science, and DevOps. The feature that separates tier-one platforms from the rest is an autonomous AI interviewer that adapts in real time rather than presenting a fixed question set to every candidate.

Final Verdict: Which AI Recruiting Software Is Best for Technical Roles?

Purpose-built AI developer hiring tools outperform generalist platforms at every stage of the funnel for engineering roles. A platform designed to evaluate all roles is structurally less equipped to evaluate code than one built specifically for engineering.

Best overall for technical hiring: HackerEarth. The only platform combining AI coding assessment, an autonomous AI Interview Agent, live coding via FaceCode, and multi-signal proctoring in a single workflow. Trusted by 4,000+ companies.

Best for AI video interviewing: HireVue. Proven enterprise-scale behavioral evaluation. Coding depth is limited for dedicated technical pipelines.

Best for talent intelligence and sourcing: Eightfold AI. Strong skills inference and internal mobility. Requires a separate assessment tool for technical validation.

Best for budget-conscious teams: TestGorilla. Accessible pricing, broad test coverage, fast setup. Suits early-stage filtering rather than final-round evaluation.

Best for technical talent sourcing: Fetcher. Strong passive candidate discovery for hard-to-fill roles. Needs pairing with an assessment platform for any evaluation.

Explore HackerEarth's technical assessment platform and hire developers with confidence. Start at hackerearth.com/recruit.

HackerEarth vs HackerRank for Technical Hiring [2026]

HackerEarth is a technical hiring platform that combines role-specific coding assessments, AI-assisted candidate evaluation via its AI Interview Agent, and Smart Browser proctoring, positioned as a HackerRank alternative for teams hiring across multiple technical roles. If you're a recruiter or talent acquisition lead facing 200 applicants for a senior backend engineering role, with 40 credible resumes and engineering bandwidth for only eight interviews, the platform you choose determines whether you spend the next two weeks calibrating screens or making offers. HackerEarth is used by 500+ global enterprises, with customers including Google, Microsoft, Elastic, Flipkart, and Brillio, across use cases such as high-volume campus recruiting, multi-role technical screening, and remote assessment delivery.

HackerRank is a technical screening and developer community platform that, by its own count, is used by roughly 3,000 companies (self-reported; pending Brand Guardian review) to run coding tests, certifications, and live interviews. HackerEarth, by contrast, pairs skill-based assessments with live coding interviews via FaceCode and an AI Interview Agent designed to support, not replace, human interviewers.

This guide compares both platforms across seven criteria: assessment library, AI-assisted evaluation, live coding interviews, remote proctoring, candidate experience, ATS integrations, and pricing.

Why technical hiring teams look for a HackerRank alternative

Most teams searching for a HackerRank alternative have already run into the same small set of problems. Whether the search is framed as finding a HackerRank competitor, a HackerRank replacement, or a more capable technical screening tool for hiring at scale, the same friction points recur in reviews and discussions on G2, Capterra, Reddit's r/cscareerquestions, and Blind.

Assessment customization is gated behind enterprise pricing. On standard plans, creating tests for specialized roles (embedded systems, DevOps, niche backend frameworks) is either restricted or impractical, so many teams end up sending the same generic test to every candidate regardless of role. Pricing is opaque and scales poorly: some G2 reviewers note that costs increase substantially as hiring volume grows, often before the features that justify the cost become available.

On the candidate side, HackerRank holds a 2.0 out of 5 rating on Trustpilot from test-takers (retrieved 2025; competitor claim pending Brand Guardian review), with consistent complaints about outdated, algorithm-heavy challenges that feel disconnected from actual job requirements. If you are filtering for LeetCode performance rather than job readiness, you may not be reducing hiring risk in a meaningful way.

Teams also report needing proctoring that goes beyond basic webcam monitoring to catch specific cheating patterns: candidates switching to ChatGPT in another browser tab, sharing screens with a remote assistant on a second device, or pasting from generative AI tools mid-assessment.

These are the practical reasons teams look at alternatives. The sections below show how HackerEarth compares as a HackerRank alternative in each category, and where it falls short.

How we evaluated these coding assessment platforms

This developer assessment tool comparison covers seven dimensions, each assessed against publicly available feature data and verified user reviews from G2 and Capterra (2023 to 2025). The goal is to give buyers a clear side-by-side signal rather than a feature checklist.

- Assessment library and question quality — Breadth, depth, and real-world relevance of coding challenges across roles and difficulty levels.

- AI-assisted features — Automated scoring, AI interview tools, candidate ranking, and adaptive questioning.

- Live coding interviews — Collaborative IDE quality, interviewer tools, language support, and post-interview documentation.

- Remote proctoring and anti-cheating — Webcam monitoring, plagiarism detection, tab-switch alerts, and detection of specific cheating patterns.

- Candidate experience — Interface design, onboarding friction, mobile readiness, and completion rates.

- Integrations and ATS compatibility — Native connectors, API flexibility, and ease of setup with existing recruiting stacks.

- Pricing and value — Transparency, scalability, and cost relative to feature access.

HackerRank: platform overview

What HackerRank offers

HackerRank is the familiar name in technical hiring, which is both its clearest strength and its biggest limitation. The platform offers CodeScreen for take-home assessments, CodePair for live coding interviews, and a developer certification ecosystem. HackerRank publicly reports a large registered developer community on its site (competitor claim pending Brand Guardian review), integrations with Greenhouse, Lever, Workday, and SAP, and broad brand recognition that means many candidates have encountered it before. For entry-level hiring using standard algorithms and data structures, it does the job.

HackerRank strengths

Brand recognition carries real value in recruiting: candidates who already know the platform are less likely to abandon the assessment before finishing. HackerRank's certification ecosystem also gives teams a pre-validated signal they can reference in job descriptions. Pre-built role templates reduce setup time for standard engineering roles, and its ATS integrations are well-documented and reliable. For high-volume entry-level hiring built around standard algorithmic screens, HackerRank remains a defensible choice.

HackerRank limitations

The platform's gaps are well-documented in user reviews. Customization of assessments often requires enterprise access, which means teams hiring for anything outside standard software engineering roles are either stuck with generic tests or stuck paying more. Pricing is not publicly listed, and some reviewers note steep renewal increases. Trustpilot reviews from test-takers reflect feedback about outdated challenges and hidden test cases that leave candidates without clarity on where they went wrong. HackerRank's anti-cheating suite does not appear to generate per-candidate integrity scoring or detect specific AI-assistant usage patterns in the way some platforms now offer (competitor capability claims pending Brand Guardian review).

HackerEarth: platform overview

What HackerEarth offers

HackerEarth is built for the technical hiring context most recruiters are operating in now. The platform covers three core hiring products: HackerEarth Assessments (covering 1,000+ skills across 40+ programming languages), FaceCode (live coding interviews with multi-interviewer panel support), and the AI Interview Agent (an AI-assisted screening tool that uses video avatars to conduct screening-stage interviews — designed so human interviewers can focus on later-stage judgment, not to replace them entirely). The AI Interview Agent combines in-depth interviewing, integrated proctoring, and KYC-grade identity verification, with a deterministic evaluation framework intended to keep scoring consistent across candidates. The broader HackerEarth platform also includes additional products for developer sourcing (Hiring Challenges) and workforce skills analytics (SkillsGraph); this article focuses on the three products most directly compared with HackerRank.

HackerEarth strengths

Library breadth gives multi-role hiring teams more options on a single platform. If you are hiring a Python backend engineer, a React developer, and a DevOps architect simultaneously, you can build three role-specific assessments inside one platform. The AI Interview Agent handles screening-stage interviews so human interviewers can concentrate on later-stage judgment; HackerEarth's public position is that AI supports interviewers rather than replacing them outright. The agent is scoped to conduct structured technical screening interviews, evaluate candidate responses against role-specific criteria, and surface a scorecard for recruiter review, and its outputs are screening signals for human review, not autonomous decisions. Smart Browser proctoring extends beyond tab-switching detection to flag patterns associated with unauthorized assistant use during assessments (specific capability scope pending product team confirmation), giving hiring managers a more interpretable signal than raw session logs.

Where HackerEarth has trade-offs

HackerEarth has limitations worth weighing honestly. It has less developer community recognition than HackerRank, which can mean candidates are less familiar with it during outreach. Procurement teams in regions where HackerRank has longer enterprise tenure may also face a steeper internal approval path. And the platform's depth (multiple products, AI features, and configuration options) can mean a steeper onboarding curve for smaller teams than a pure algorithmic screening tool.

Where HackerRank may fit better than HackerEarth

There are scenarios where HackerRank is the more natural fit. Teams whose hiring is centered on entry-level software engineering with standard algorithmic screens, whose candidate funnel relies on HackerRank certifications as a pre-qualification signal, or whose recruiting workflow is already deeply built around HackerRank's certification ecosystem may find the switching cost outweighs the gains. Developer community engagement at HackerRank's reported scale is also difficult to replicate elsewhere.

HackerEarth vs HackerRank: feature-by-feature comparison

Assessment library and customization

HackerEarth, as a HackerRank alternative, takes a different approach to library depth. HackerRank's library covers algorithms, data structures, and SQL well — fitting for standard engineering roles, and sometimes insufficient for anything else. When a team needs to hire for embedded systems or QA automation, the standard question bank often requires enterprise-tier access to work around.

HackerEarth's library covers 1,000+ skills across 40+ programming languages. Custom questions, difficulty weighting, and role-specific templates are part of the platform's feature set (tier-level availability pending RevOps confirmation). Its assessment engine benchmarks candidates against role-specific thresholds on submission. HackerRank is adequate for standard screening; HackerEarth gives recruiters managing multi-role hiring more configuration room.

AI-assisted evaluation

HackerRank auto-scores submissions and monitors sessions — a passive system that grades after submission.

HackerEarth's AI Interview Agent handles screening-stage technical interviews using video avatars, asks calibrated follow-up questions based on candidate responses, and delivers structured scorecards intended to inform — not replace — human interviewers later in the pipeline. The AI is scoped to interview, evaluate, and score against role-specific criteria, with KYC-grade identity verification and a deterministic evaluation framework intended to keep results consistent across candidates; the underlying model architecture and training data are not publicly disclosed, and outputs should be treated as screening signals for human review rather than autonomous decisions. Some research on AI in HR points in a supportive direction: a BCG 2024 CHRO survey reportedly found measurable benefits among organizations using AI in HR, with talent acquisition cited as a leading use case (primary-source citation pending; treat as directional).

Live coding interviews

HackerRank's CodePair is functional: collaborative editor, video, multi-language support. It covers the basics for teams running a moderate volume of live technical interviews.

FaceCode supports a collaborative IDE across the same broad language coverage as the wider HackerEarth platform (40+ languages), includes a drawing and flowchart canvas for system design discussions, and supports a multi-interviewer panel format. It connects directly to HackerEarth's assessment workflow, so candidate data does not need to be moved between systems from one stage to the next. HackerRank's CodePair covers core needs; FaceCode adds depth for teams running live technical interviews regularly.

Remote proctoring and anti-cheating

This is the area where the difference between the platforms shows up most in day-to-day recruiting. For many remote hiring scenarios, basic webcam monitoring misses specific cheating patterns — candidates opening a ChatGPT tab during the assessment, screen-sharing the question to a remote assistant on a second device, or copy-pasting AI-generated responses into the IDE.

HackerEarth's Smart Browser remote proctoring capabilities detect tab switching, copy-paste behavior, screen sharing, extension usage, and patterns consistent with unauthorized assistant use during the assessment (specific capability scope pending product team confirmation). Outputs are summarized into per-candidate integrity signals (term pending product team confirmation) that hiring managers can review faster than raw session logs. For high-volume remote hiring, a summarized signal is more usable in practice than a log file. For recruiters working through technical assessment design alongside proctoring choices, HackerEarth's guide to remote proctoring for online assessments walks through the trade-offs in more detail.

Candidate experience

Candidate experience matters for offer acceptance. Some research suggests candidates who have a negative interview experience are more likely to decline the offer (directional claim; primary-source citation pending), which means your assessment platform can directly affect downstream conversion.

HackerRank scores well on G2 among recruiters but holds a 2.0 out of 5 on Trustpilot from test-takers (retrieved 2025; competitor claim pending Brand Guardian review), with feedback citing hidden test cases, outdated challenges, and unresponsive support. HackerEarth receives more positive candidate-facing feedback, particularly around interface clarity and responsive support. Some G2 reviewers on the recruiter side report lower candidate drop-off as a reason they switched (no specific count or date range available).

Integrations and ATS compatibility

Both platforms connect to major ATS systems. HackerRank integrates with Greenhouse, Lever, Workday, SAP, and Freshteam, with the Freshteam integration triggering assessments automatically at specific pipeline stages. HackerEarth supports native integrations with major ATS systems including Greenhouse, Lever, Workday, and SAP, with additional ATS connectors and API access on enterprise plans (specific connector list pending product catalog confirmation). Both are adequate for teams using mainstream ATS platforms. HackerEarth's API flexibility gives it an edge for teams with non-standard stacks.

Pricing and value

Neither platform publishes complete pricing publicly, which is worth knowing before you invest time in an evaluation. HackerRank's pricing is custom-quoted and not publicly listed; specific dollar figures are not included here pending verified third-party citation. HackerEarth's Skill Assessments tier pricing and free trial terms are subject to RevOps confirmation before publication. The more useful pricing comparison for recruiters is feature-per-tier: user reviews suggest HackerEarth's lower tiers tend to include customization depth that on HackerRank often requires a higher contract level.

HackerEarth vs HackerRank: summary comparison table

| Criterion | HackerRank | HackerEarth |
|---|---|---|
| Assessment library | Large algorithmic question bank; strong on standard CS topics | 1,000+ skills covered across 40+ programming languages |
| Language support | Broad language coverage (specific count not publicly disclosed) | 40+ programming languages |
| Custom assessments | Often gated to higher tiers | Customization available (tier-level availability pending RevOps confirmation) |
| AI-assisted evaluation | Auto-grading and session monitoring | AI Interview Agent (screening stage) with KYC-grade identity verification and a deterministic evaluation framework |
| Live coding interviews | CodePair (collaborative IDE, video) | FaceCode (collaborative IDE, drawing and flowchart canvas, multi-interviewer panels) |
| Remote proctoring | Session monitoring | Smart Browser, multi-signal monitoring, integrity signals (term pending product confirmation) |
| Candidate experience | Strong brand recognition; lower test-taker ratings reported | Higher candidate-facing satisfaction reported |
| Developer community | Large public developer community and certifications (competitor claim pending Brand Guardian review) | Smaller community footprint; enterprise-hiring focus |
| ATS integrations | Greenhouse, Lever, Workday, SAP + others | Greenhouse, Lever, Workday, SAP + API access on enterprise plans |
| Pricing transparency | Custom; specific figures not publicly listed | Tiered pricing, specific figures pending RevOps confirmation |
| Free trial | Not prominently advertised | Trial terms pending confirmation |
| Customers cited | Self-reported customer count (pending Brand Guardian review) | 500+ global enterprises |
| Best for | Standard algorithm screening; developer community engagement; certification-driven funnels | AI-assisted screening at scale; multi-role technical hiring; remote proctoring depth |

Who should choose HackerRank?

HackerRank is still a reasonable choice in several situations. If your team has spent years building HackerRank workflows, including integrated ATS configurations and custom question banks, the switching cost is real and worth factoring honestly. The platform also has genuine value for developer community engagement and certification — if your recruiting strategy uses HackerRank certifications as a pre-qualification signal, the developer ecosystem supports that directly at scale.

For low-volume hiring of entry-level engineers where standard algorithmic tests are appropriate and brand familiarity reduces candidate drop-off, HackerRank's Starter plan covers the use case. HackerRank also retains an advantage where procurement teams are already familiar with the vendor and security review has been completed previously — that operational lift is non-trivial for a switch.

If you are not hiring at scale, not hiring across multiple specialized roles, and not dealing with the proctoring demands of remote-first hiring, HackerRank may be adequate for your current situation.

Who should choose HackerEarth?

HackerEarth is worth considering as a HackerRank alternative for recruiters and talent acquisition teams where the cost of a wrong hire is high and the margin for slow screening is low.

If your recruiters are spending hours on manual technical screening calls, the AI Interview Agent can handle the screening stage with structured, scored reports — initial setup and calibration still require recruiter configuration to align with your hiring criteria. If you are hiring across multiple technical disciplines simultaneously, the platform's skill coverage and customization options reduce the need to compromise assessment quality to fit a narrow question bank. If you are hiring remotely and need assessment results that will hold up to scrutiny, Smart Browser's integrity signals give you something defensible. And if your candidates are comparing their experience with your company against your competitors, candidate-facing satisfaction is a factor worth weighing.

The verdict: HackerEarth as a HackerRank alternative for technical hiring

HackerRank is not a bad platform. Its core product model, large algorithmic question banks paired with session-level proctoring, was set before candidates had widespread access to generative AI assistants during assessments. When most hiring happened in offices, algorithmic tests were an acceptable proxy for technical skill. Now that candidates can reach generative AI tools mid-assessment, and engineering teams cannot spend a day screening 200 applicants, the evaluation criteria for an alternative have shifted for many teams.

HackerEarth's value as a HackerRank alternative comes down to three points. Broad skill coverage means recruiters do not have to bend assessments to fit the tool. The AI Interview Agent means engineers spend time reviewing scored screening reports rather than running every first call themselves. And Smart Browser's integrity signals give your results a clearer line of defense.

See how HackerEarth compares in practice. Start a free trial.

Frequently asked questions

What is the best alternative to HackerRank for technical hiring?

HackerEarth is a strong HackerRank alternative for recruiting teams hiring across multiple technical roles, especially when AI-assisted screening and detailed remote proctoring matter. The counterintuitive point most evaluators miss is this: the strongest alternative is rarely the one with the longest feature list — it is the one whose default tier matches your most common hiring scenario without forcing a multi-month migration. A practical free-trial tactic is to migrate one active role end-to-end rather than running a sample test, so the real switching cost surfaces before contract signature.

Is HackerEarth better than HackerRank?

HackerEarth is generally the stronger choice for recruiting teams hiring across multiple technical roles, needing AI-assisted screening, and running remote assessments with proctoring requirements; HackerRank holds an advantage for teams whose funnel depends on its developer community and certification ecosystem. The trade-off is between an established developer community (HackerRank) and configurable, AI-assisted screening (HackerEarth) — and in our experience, many teams underweight how much switching cost matters until they are inside it.

How much does HackerEarth cost compared to HackerRank?

Both platforms quote custom pricing at scale, so confirm current entry-tier pricing and free-trial terms directly with each vendor. The more useful comparison for buyers is feature-per-tier rather than headline price, particularly whether assessment customization and proctoring are available on the tier that matches your hiring volume.

Can HackerEarth handle enterprise hiring?

Yes. HackerEarth is used by 500+ global enterprises, and its enterprise plans support the major ATS integrations and API access that enterprise procurement teams expect. The more useful question for most teams is whether HackerEarth's workflow matches your existing hiring stages, which a free trial is designed to answer.

Does HackerEarth offer AI-assisted interviews?

Yes. HackerEarth's AI Interview Agent uses video avatars to conduct screening-stage technical interviews and produce structured scorecards, with KYC-grade identity verification and a deterministic evaluation framework. The platform's public position is that AI handles screening so human interviewers can focus on later-stage judgment — the AI Interview Agent is designed to inform human decision-making, not replace interviewers entirely.

What coding languages does HackerEarth support?

HackerEarth supports 40+ programming languages covering frontend, backend, data science, DevOps, and mobile roles.


AI Recruitment Vendor Evaluation: Buyer's Checklist 2026

How to evaluate AI recruitment vendors: the buyer's checklist for 2026


To evaluate AI recruitment vendors in 2026, treat procurement as a compliance, integration, and candidate-experience exercise — not a software demo. The single biggest mistake teams make is scoring vendors on feature lists before defining their own hiring bottleneck, and the second is signing without a structured pilot. This guide walks through a ten-step framework you can run with TA, engineering, IT, legal, and finance in the room.

AI systems carry regulatory, ethical, and candidate-experience implications that standard SaaS procurement was never designed to evaluate. Learning how to evaluate AI recruitment vendors with that lens is now table stakes, because the regulatory clock is running. Under the EU AI Act, full enforcement for high-risk AI systems — which explicitly includes employment AI — takes effect August 2, 2026. NYC Local Law 144 has been in force since July 5, 2023; per the NYC DCWP, civil penalties begin at $500 for a first violation and can reach $1,500 for subsequent violations, with each day of non-compliance treated as a separate violation — buyers should confirm current penalty figures with counsel before relying on them in procurement. If your evaluation process does not include compliance gatekeeping, you are collecting demos, not evaluating vendors.

This buyer's guide gives procurement teams, TA leaders, and engineering managers a shared AI recruitment vendor checklist they can work through together.

Step 1 — Define your hiring pain points before you shop

Defining your own bottleneck before vendor conversations is the single most important step in any AI recruitment vendor evaluation. Skipping it is how teams buy tools that solve the vendor's problem, not theirs. A sound recruitment technology evaluation starts with your own hiring data, not a vendor's feature list.

Map your current workflow gaps

Fill in this table before your first vendor call. The gaps you identify should drive every scoring decision that follows:

| Funnel Stage | Current Tool or Process | Observed Gap or Delay | Impact |
|---|---|---|---|
| Sourcing | LinkedIn Recruiter, job boards | 7+ days to build shortlists for technical roles | Slow top-of-funnel; passive candidates missed |
| Candidate screening | Manual resume review | 3–5 days; inconsistent criteria across recruiters | Quality varies; bias risk unquantified |
| Technical assessment | Ad hoc whiteboard or take-home | No standardized scoring; senior engineer time consumed | Inconsistent data; interviewer time wasted |
| Interview scheduling | Email coordination | 4–6 days of back-and-forth per candidate | Time lost; candidates drop off during wait |
| Offer | Manual tracking | Slow turnaround; no pipeline visibility | Competitive candidates accept elsewhere |

Set measurable goals for AI recruitment

Goals set before vendor conversations make vendor selection defensible to finance and give you a real basis for pilot evaluation. Agree on these across HR, engineering, and finance before any demo is scheduled:

- Reduce time-to-hire for software engineering roles from 45 days to 30 days within two quarters

- Increase technical assessment completion rate from 62% to 85% within 90 days

- Cut cost-per-qualified-candidate by 40% for roles requiring coding evaluation

- Verify, within 60 days of contract signing, that all candidate data processed by the new vendor falls within the scope of a current SOC 2 Type II report
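To turn the goals above into a pass/fail check at the end of a pilot, a minimal sketch like the one below records each goal's baseline, target, and direction, then compares observed pilot values against them. The goal names, the choice to express cost-per-qualified-candidate as an index (baseline 100, so a 40% cut is a target of 60), and the pilot numbers are all hypothetical placeholders. The SOC 2 goal is a yes/no compliance check, so it is left out.

```python
from dataclasses import dataclass


@dataclass
class Goal:
    """One measurable hiring goal with a baseline, a target, and a direction."""
    name: str
    baseline: float
    target: float
    lower_is_better: bool

    def met(self, observed: float) -> bool:
        # The goal is met when the observed value reaches the target in the right direction.
        if self.lower_is_better:
            return observed <= self.target
        return observed >= self.target

    def percent_change(self, observed: float) -> float:
        # Relative change from baseline, in percent (negative means a decrease).
        return (observed - self.baseline) / self.baseline * 100


# Hypothetical encoding of the numeric goals listed above.
GOALS = [
    Goal("time_to_hire_days", baseline=45, target=30, lower_is_better=True),
    Goal("assessment_completion_pct", baseline=62, target=85, lower_is_better=False),
    Goal("cost_per_qualified_candidate_index", baseline=100, target=60, lower_is_better=True),
]


def evaluate(observed: dict[str, float]) -> dict[str, bool]:
    """Return, for each goal that has an observed value, whether its target was met."""
    return {g.name: g.met(observed[g.name]) for g in GOALS if g.name in observed}


if __name__ == "__main__":
    # Made-up pilot results, for illustration only.
    pilot = {
        "time_to_hire_days": 33,
        "assessment_completion_pct": 86,
        "cost_per_qualified_candidate_index": 58,
    }
    for g in GOALS:
        value = pilot[g.name]
        change = g.percent_change(value)
        print(f"{g.name}: {value} ({change:+.1f}% vs baseline), met={g.met(value)}")
```

With these placeholder numbers, time-to-hire improves by roughly 27% but still misses the 30-day target, while the other two goals are met. Keeping direction explicit on each goal avoids the common mistake of treating every metric as "higher is better."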

Step 2 — Understand the AI recruitment vendor landscape

The AI recruitment vendor landscape splits into five distinct categories, and scoring across categories without acknowledging that is how procurement teams end up comparing tools that don't do the same job. Running an effective AI recruitment software comparison requires knowing which category each vendor belongs to before you score them — comparing a sourcing tool against an assessment platform is like scoring a plumber and an electrician on the same rubric.

Categories of AI recruitment tools

The vendor landscape breaks into five segments. Most AI recruiting tools occupy one or two of these; very few cover all of them at depth:

- AI sourcing tools: Find and surface passive candidates from databases and code repositories.

- AI screening and assessment platforms: Evaluate candidate qualifications through resume scoring, skills tests, or cognitive assessments.

- AI interview platforms: Conduct, record, transcribe, or score interviews.

- AI scheduling and workflow automation (also called recruitment automation platforms): Handle calendar coordination and candidate communications.

- Full-stack AI recruitment suites: Attempt to cover multiple stages.

When you evaluate recruitment technology, your pain points from Step 1 should map to one or two of these segments, not all five.

Full-stack platforms vs. point solutions

The full-stack vs. point-solution decision is the one most procurement teams get wrong — usually by defaulting to a suite when a focused tool would outperform it at the specific stage that actually needs fixing:

| Factor | Full-Stack Platform | Point Solution |
|---|---|---|
| AI depth per function | Often broad but shallow | Deep in one area |
| Integration overhead | Lower (single vendor) | Higher (multiple vendors to connect) |
| Data continuity | Unified pipeline data | Fragmented across tools |
| Vendor dependency risk | High (single point of failure) | Distributed |
| Time to value | Longer (more to configure) | Faster for targeted problem |
| Cost at scale | Higher license cost | Can be modular and lower entry |

Step 3 — Evaluate core AI capabilities

The technical interrogation of an AI recruitment vendor (training data, update cadence, documented error rates) is what separates a real evaluation from a demo review. Skip it, and teams discover after signing that features that looked impressive in a demo do not hold up under real conditions. Knowing how to evaluate AI recruitment vendors at this layer means pressing on each of those dimensions explicitly.

Assessment and screening accuracy

"AI-powered" on a vendor's website means nothing without validation data behind it. Ask directly: what is the model trained on, when was it last updated, and what is the documented false-positive rate? Request specific benchmark data from each vendor in writing. Strong AI recruitment platforms can produce these benchmarks on request; vendors that cannot should not advance past the RFP stage. HackerEarth's Skill Assessments use rubric-based scoring with role-based assessment design, which is the difference between an assessment that predicts job performance and one that measures interview prep.

AI interview and coding evaluation

When evaluating AI interview platforms, require vendors to demo the actual coding environment on real data, not a recorded walkthrough. Questions that separate real capability from a polished demo:

- Does the platform execute code in a real runtime environment, or does it only analyze syntax?

- How many programming languages does it support natively versus through workarounds?

- Does AI scoring operate autonomously, or does it assist a human reviewer?

- Are transcripts and scoring rationale exportable for compliance audit?

- Can the interview AI adapt to candidate responses, or does it follow a fixed script?

Fixed-sequence interview AI can function like a test with a publicly available answer key: once candidates share the questions and their order, later candidates can rehearse answers in advance. For a broader comparison of interviewing tools and approaches, see HackerEarth's overview of FaceCode, the interviewer-led technical interview platform.

Candidate matching and ranking algorithms

Black-box ranking is a compliance liability, not just a technical shortcoming. Any AI talent acquisition vendor that cannot explain why their algorithm ranked one candidate above another — in terms a hiring manager can read and defend — is handing you a legal risk alongside their platform license. Require end-to-end documentation of matching logic before any contract advances.

Step 4 — Audit for bias, fairness, and compliance

Any AI hiring platform that cannot produce independent bias audit documentation in 2026 should be eliminated before the scorecard is built. This step is the regulatory gate that everything else depends on.

Bias testing and audit documentation

Require vendors to produce their bias audit methodology, not just a claim that testing was done. The documentation must include adverse impact ratios for Title VII-protected groups, the auditor's name and independence from the vendor, and the dataset used. NYC Local Law 144 sets the operational benchmark: annual independent bias audits, public results, and 10-business-day advance notice to candidates. Enterprise buyers increasingly expect bias audit documentation as part of procurement diligence.
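To sanity-check the impact ratios in a vendor's audit, it helps to know how they are computed. A minimal sketch, assuming a selection-type tool: each category's selection rate (selected divided by applicants) is divided by the selection rate of the most-selected category. Flagging ratios below 0.8 follows the EEOC's four-fifths rule of thumb; LL144 itself requires the ratios to be reported but does not set a pass/fail threshold. For tools that output scores rather than selections, LL144 uses a scoring rate instead, which this sketch does not cover. The category labels and counts are hypothetical.

```python
def selection_rates(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Selection rate per category: number selected divided by number of applicants."""
    return {cat: selected.get(cat, 0) / n for cat, n in applicants.items() if n > 0}


def impact_ratios(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Each category's selection rate divided by the highest category's selection rate."""
    rates = selection_rates(applicants, selected)
    top = max(rates.values(), default=0.0)
    if top == 0:
        # No one was selected, so the ratios are undefined.
        raise ValueError("cannot compute impact ratios when no candidates were selected")
    return {cat: rate / top for cat, rate in rates.items()}


def flag_adverse_impact(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Categories whose impact ratio falls below the (four-fifths) threshold."""
    return sorted(cat for cat, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Made-up counts for three anonymized categories.
    applicants = {"group_a": 200, "group_b": 150, "group_c": 50}
    selected = {"group_a": 60, "group_b": 30, "group_c": 14}
    ratios = impact_ratios(applicants, selected)
    for cat, ratio in ratios.items():
        print(f"{cat}: impact ratio {ratio:.2f}")
    print("flagged:", flag_adverse_impact(ratios))
```

With these made-up counts, group_b's selection rate (0.20) is about two-thirds of group_a's (0.30), so it is the only category flagged. If a vendor's published ratios cannot be reproduced from its own reported counts this way, that gap is worth raising before the scorecard stage.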

AI Act compliance for recruitment